Microsoft Unveils MAI-Transcribe-1, Its Own Speech-to-Text Model

MAI-Transcribe-1 is now in public preview on Microsoft Foundry—and Microsoft claims it outperforms Whisper, GPT-Transcribe, and Gemini Flash

Microsoft’s AI division has been busy building out its first-party portfolio, developing everything from foundation models and vision capabilities to speech, image generation, and diagnostic orchestration. The company is adding another piece: MAI-Transcribe-1, a multilingual speech-to-text model that’s now available in public preview on Microsoft Foundry.

MAI-Transcribe-1 is trained on a diverse mix of human-curated transcripts and machine-transcribed data. Microsoft touts it as designed to handle “challenging” recording conditions, claiming it can minimize background noise, adjust for low-quality audio, and manage overlapping speech. The model pairs a transformer-based text decoder with a bidirectional audio encoder and accepts audio files of up to 200 MB.

Initially, it can produce high-quality batch transcripts from MP3, WAV, and FLAC files. Microsoft says future versions of MAI-Transcribe-1 will support diarization, which identifies and separates speakers in a recording; contextual biasing, which helps the model prioritize domain-specific terminology and proper nouns; and streaming, which processes and outputs text in real time as audio comes in.
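For developers planning a batch job, the stated limits (three file formats and a 200 MB cap) suggest a simple pre-flight check before uploading anything. The Python sketch below is only an illustration: the function name is hypothetical, the check looks at file extensions rather than actual audio content, and treating 200 MB as 200,000,000 bytes is an assumption, since the article does not say how the limit is measured.

```python
from pathlib import Path

# Formats named in the article for batch transcription.
SUPPORTED_EXTENSIONS = {".mp3", ".wav", ".flac"}

# Assumption: "200 MB" means decimal megabytes. The real service limit
# may be defined differently.
MAX_BYTES = 200 * 1000 * 1000


def check_audio_file(name: str, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means the file looks acceptable.

    Hypothetical helper: it only inspects the file name and size, not the
    audio data itself.
    """
    problems = []
    extension = Path(name).suffix.lower()
    if extension not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: {extension or 'no extension'}")
    if size_bytes > MAX_BYTES:
        problems.append(f"file is {size_bytes} bytes, over the {MAX_BYTES}-byte cap")
    return problems


if __name__ == "__main__":
    print(check_audio_file("interview.flac", 48_000_000))
    print(check_audio_file("voicemail.ogg", 250_000_000))
```

In practice the size would come from something like `Path(path).stat().st_size`; it is passed in here so the check can be exercised without real files.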

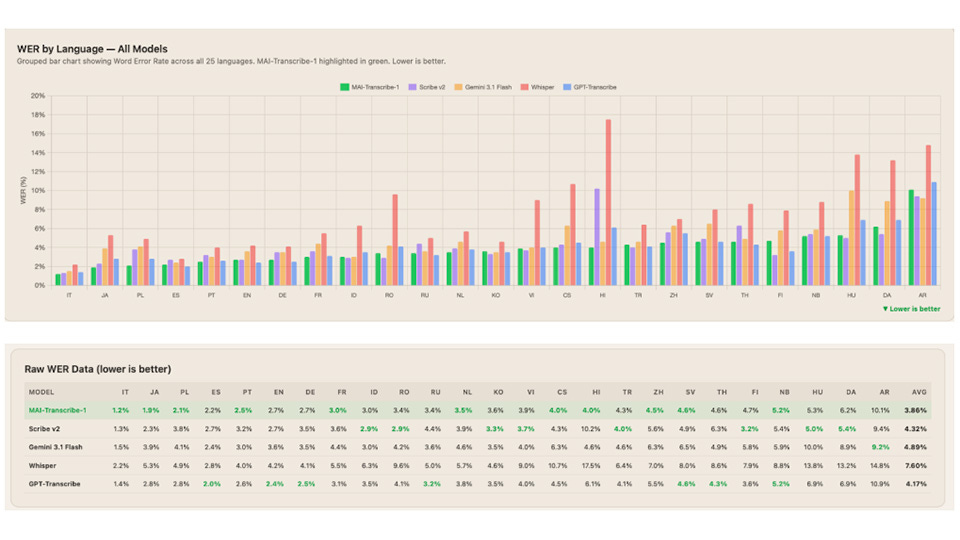

It’s also a global offering that can understand 25 languages, including English, French, German, Italian, Spanish, Hindi, Portuguese, Czech, Danish, Finnish, Hungarian, Dutch, Polish, Romanian, Swedish, Japanese, Korean, Chinese, Arabic, Indonesian, Russian, Thai, Turkish, and Vietnamese. That’s far fewer than OpenAI’s Whisper model, which launched with 99 languages. Nevertheless, it appears MAI-Transcribe-1 is optimized for use in products with a global reach.

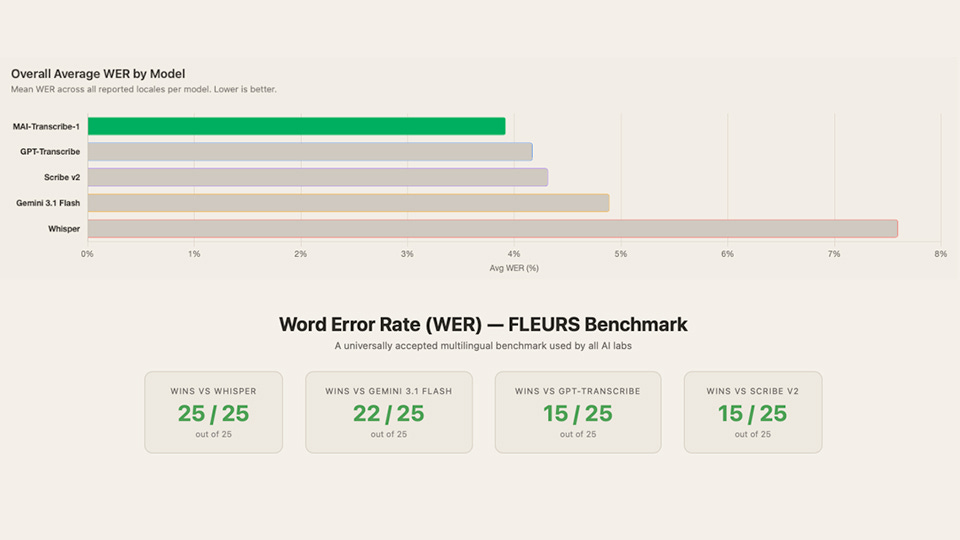

Tested against the FLEURS speech benchmark, MAI-Transcribe-1 reportedly outperforms several leading models, including Whisper-large-v3, GPT-Transcribe, Scribe v2, and Google Gemini 3.1 Flash. It also posts the lowest Word Error Rate (3.8 percent) of the models compared, according to Microsoft.
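For readers unfamiliar with the metric, word error rate is conventionally the number of word-level substitutions, deletions, and insertions needed to turn the model's transcript into the reference transcript, divided by the number of words in the reference. A minimal Python sketch of that standard definition follows; splitting on whitespace and skipping text normalization are simplifying assumptions, and nothing here reflects how Microsoft or the FLEURS evaluation actually prepared the text.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Assumption: words are separated by whitespace and compared exactly
    (no casing or punctuation normalization).
    """
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference transcript must contain at least one word")

    # previous[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i] + [0] * len(hyp_words)
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = previous[j] + 1      # reference word missing from hypothesis
            insertion = current[j - 1] + 1  # extra word in hypothesis
            current[j] = min(substitution, deletion, insertion)
        previous = current

    return previous[-1] / len(ref_words)


if __name__ == "__main__":
    wer = word_error_rate("the cat sat on the mat", "the cat sat on a mat")
    print(f"{wer:.1%}")  # one substitution over six reference words
```

By this definition, a 3.8 percent score corresponds to roughly four word errors per hundred reference words.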

While MAI-Transcribe-1 is already powering voice mode in Microsoft Copilot, the company emphasizes that there are many other viable use cases, including live captioning, call center transcriptions, video subtitling, accessibility, e-learning, media archiving, and market research. The model is also flexible enough to run wherever developers want, either in the cloud or on-premises.

A demo of MAI-Transcribe-1 is live today on the Microsoft AI Playground, where users can test the model by recording audio directly or uploading a file up to 10 MB.

There’s one other piece of news: Microsoft is featuring its voice model, MAI-Voice-1, and its newly released image generation model, MAI-Image-2, in Microsoft Foundry alongside MAI-Transcribe-1. Developers can use any or all of these three models in their applications today.

The company is also sharing what the models cost. For MAI-Voice-1, pricing starts at $22 per million characters, while for MAI-Image-2, it starts at $5 per million tokens for text input and $33 per million tokens for image output. As for MAI-Transcribe-1, a company spokesperson tells The AI Economy that it will cost $0.36 per hour of audio.
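To put the transcription rate in perspective, a rough budget estimate is easy to sketch in Python. The calculation below assumes the $0.36 hourly price is prorated linearly by the second and rounded to the cent; the article does not describe billing granularity, minimum charges, or volume tiers, so treat the function as a hypothetical estimator rather than Microsoft's billing logic.

```python
from decimal import Decimal

# Price quoted by the Microsoft spokesperson in the article.
PRICE_PER_AUDIO_HOUR_USD = Decimal("0.36")


def estimate_transcription_cost(audio_seconds: float) -> Decimal:
    """Estimate the MAI-Transcribe-1 cost in US dollars for a given duration.

    Assumption: cost scales linearly with duration and is rounded to the
    nearest cent. Actual billing rules may differ.
    """
    if audio_seconds < 0:
        raise ValueError("audio duration cannot be negative")
    hours = Decimal(str(audio_seconds)) / Decimal(3600)
    return (hours * PRICE_PER_AUDIO_HOUR_USD).quantize(Decimal("0.01"))


if __name__ == "__main__":
    hundred_hours = 100 * 3600
    print(estimate_transcription_cost(hundred_hours))  # 36.00
```

Under these assumptions, a 100-hour call center archive would come to about $36.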

“At Microsoft AI, we’re building Humanist AI,” Mustafa Suleyman, the division’s head, writes in a blog post. “We have a distinct view when creating our AI models—putting humans at the center, optimizing for how people actually communicate, training for practical use.” He promises that Microsoft AI will soon release more models not only in Foundry but also in the company’s own products.

Updated on 4/2/2026: This post has been revised to reflect the actual cost of MAI-Transcribe-1, as reported by a Microsoft spokesperson.