AI Chips and NPUs — Latest News and Developments

Artificial intelligence (AI) is transforming industries across the globe, from healthcare and finance to autonomous vehicles and smart cities. Central to this transformation are AI chips, specialized hardware designed to accelerate machine learning computations. These chips are the backbone of modern AI, enabling faster, more efficient processing at scale.

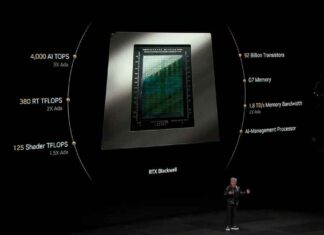

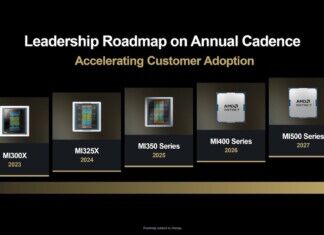

In 2025, the AI chip ecosystem has become more diverse and competitive than ever. Established giants like NVIDIA and AMD continue to innovate, while newer players like Hailo and BrainChip push boundaries in edge computing.

At the same time, technologies like Neural Processing Units (NPUs) and custom-designed AI accelerators are revolutionizing how data centers and devices handle AI workloads.

Key Features and Benefits of AI Chips

Unmatched Performance and Scalability

AI chips are engineered to handle the demanding computations required for training and inference in neural networks. Unlike general-purpose CPUs, these specialized chips deliver unparalleled speed and efficiency through advanced architectures optimized for matrix multiplications, tensor operations, and parallel processing.

For instance, NVIDIA’s H100 GPU, built on the Hopper architecture, features Tensor Cores that support reduced and mixed precision formats such as FP8 and FP16, significantly accelerating the training of large-scale models in the class of GPT-4o and DALL-E 3. Similarly, AWS’s Trainium2 is built for scalable cloud-based AI training; AWS claims 30-40% better price-performance than comparable GPU-based instances.
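To see why mixed precision keeps a wider accumulator, the following minimal sketch emulates FP16 rounding with Python's standard `struct` module. It is an illustration only, not how Tensor Cores are implemented: the helper names (`to_fp16`, `dot_fp16_accumulate`, `dot_mixed`) are made up for this example, and Python's double-precision float stands in for a hardware FP32 accumulator.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def dot_fp16_accumulate(a, b):
    """Dot product where inputs, products, and the running sum are all FP16."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_fp16(to_fp16(x) * to_fp16(y))
        total = to_fp16(total + product)  # the sum is rounded after every add
    return total


def dot_mixed(a, b):
    """Mixed precision: FP16 inputs, but a wider accumulator for the sum.

    Assumption: a Python float (double) stands in for an FP32 accumulator.
    """
    total = 0.0
    for x, y in zip(a, b):
        total += to_fp16(x) * to_fp16(y)
    return total


# Hypothetical workload: 4096 small products of 0.01 * 1.0.
n = 4096
a = [0.01] * n
b = [1.0] * n

pure = dot_fp16_accumulate(a, b)
mixed = dot_mixed(a, b)
print(f"FP16 accumulator:  {pure}")
print(f"wide accumulator:  {mixed}")
```

With these inputs the pure FP16 sum stalls at 32.0, because the gap between adjacent FP16 values near 32 is larger than twice the value being added, while the wide accumulator reaches roughly 40.97. This is the kind of error that mixed-precision training avoids by keeping only the inputs in low precision.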

Energy Efficiency and Sustainability

Energy efficiency is increasingly critical in AI hardware as model complexity and environmental concerns grow. AI chips like Hailo-8 excel in edge computing, delivering up to 26 tera operations per second (TOPS) at a power draw of only a few watts, making them ideal for IoT devices and smart cameras.
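Throughput figures like the 26 TOPS quoted above are usually compared as TOPS per watt. The short sketch below shows the arithmetic. The 26 TOPS figure comes from the text; the 2.5 W power draw, the 8 billion operations per inference, and the function names are assumptions chosen for illustration, not measured values.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency metric: tera operations per second delivered per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


def energy_per_inference_mj(ops_per_inference: float, tops: float,
                            watts: float, utilization: float = 1.0) -> float:
    """Energy in millijoules for one inference at a given utilization (0-1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    seconds = ops_per_inference / (tops * 1e12 * utilization)
    return watts * seconds * 1e3


PEAK_TOPS = 26.0        # from the text
ASSUMED_WATTS = 2.5     # assumption for illustration only
ASSUMED_OPS = 8e9       # hypothetical model: 8 billion ops per inference

efficiency = tops_per_watt(PEAK_TOPS, ASSUMED_WATTS)
energy = energy_per_inference_mj(ASSUMED_OPS, PEAK_TOPS, ASSUMED_WATTS)
print(f"{efficiency:.1f} TOPS/W, {energy:.3f} mJ per inference at peak")
```

Peak TOPS assumes every compute unit is busy. Real workloads run below peak, so passing a `utilization` below 1.0 gives a more conservative energy estimate.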

Neuromorphic chips, such as BrainChip’s Akida, further advance energy efficiency by mimicking the neural architecture of the human brain.

At the data center level, AMD’s MI300A integrates CPU and GPU cores with shared memory in a single package, reducing data transfer bottlenecks and optimizing power usage for high-performance workloads.

Versatile Applications Across Industries

AI chips power a wide range of applications, including:

- Healthcare: Analyzing medical images in real time to enhance diagnostic accuracy.

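- Autonomous Vehicles: Processing sensor data for navigation and safety in real time, as seen with Tesla’s in-vehicle FSD computer. Tesla’s Dojo processors, by contrast, target training of the driving models in the data center rather than in-vehicle processing.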

- Generative AI: Enabling breakthroughs in text, image, and video synthesis through models like Stable Diffusion and Midjourney, powered by chips like NVIDIA’s H100 and Google TPUs.

Types of AI Chips

GPUs vs. NPUs vs. ASICs

The AI hardware landscape is dominated by three primary chip types: GPUs, NPUs, and ASICs. Each has distinct strengths and limitations, making them suitable for specific use cases. The categories overlap in practice, since many NPUs are themselves implemented as ASICs.

Graphics Processing Units (GPUs):

- GPUs, like NVIDIA’s H100 and AMD’s MI300 series, are highly versatile, excelling in both AI training and inference tasks. Their programmability and extensive ecosystem support, such as CUDA for NVIDIA GPUs, make them a go-to choice for developers and enterprises.

- Advantages: Scalability, flexibility, and robust software libraries.

- Drawbacks: High power consumption and cost, making them less ideal for edge devices.

Neural Processing Units (NPUs):

- NPUs, including Apple’s Neural Engine and Google’s Edge TPU, are optimized for AI inference tasks. They deliver exceptional efficiency by focusing on neural network operations like matrix multiplications.

- Advantages: Low power consumption and high performance for edge AI applications.

- Drawbacks: Limited flexibility compared to GPUs and ecosystem lock-in for certain platforms.

Application-Specific Integrated Circuits (ASICs):

- ASICs, such as Google’s TPU and Amazon’s Inferentia, are designed for specific AI workloads. These chips deliver unmatched performance and efficiency for predefined tasks but lack the adaptability of GPUs or NPUs.

- Advantages: Superior energy efficiency and cost-effectiveness for large-scale, repetitive tasks.

- Drawbacks: Limited programmability and higher development costs for custom designs.

| Chip Type | Primary Use | Strengths | Limitations |
|---|---|---|---|
| GPUs | Training and inference | Versatility, scalability, ecosystem | High cost and power consumption |
| NPUs | Inference, edge AI | Energy efficiency, edge optimization | Limited flexibility, ecosystem lock-in |
| ASICs | Specialized tasks (training/inference) | Unmatched efficiency for specific tasks | High development costs, limited adaptability |

Comparing Data Center and Edge AI Chips

AI chips are often tailored for two distinct environments: data centers and edge devices. Each category comes with unique requirements and trade-offs.

Data Center AI Chips:

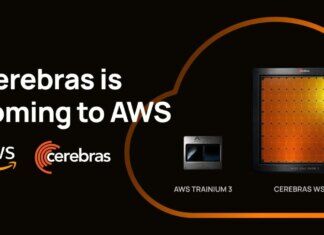

- Chips like NVIDIA’s H100, AWS Trainium2, and Cerebras’ Wafer-Scale Engine dominate data center workloads. These chips are designed for high scalability and performance, making them ideal for training large-scale AI models.

- Key Metrics: FLOPS (Floating Point Operations per Second), memory bandwidth, and interconnect speed.

- Use Cases: Generative AI, large language models, and high-performance computing (HPC).

Edge AI Chips:

- Edge chips, such as Hailo-8, BrainChip’s Akida, and Google’s Edge TPU, prioritize low power consumption and compact design. They enable real-time AI processing in resource-constrained environments, such as IoT devices and autonomous vehicles.

- Key Metrics: TOPS (Tera Operations per Second), energy efficiency (TOPS/W), and latency.

- Use Cases: Smart cameras, robotics, and real-time analytics.
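Two of the data center metrics listed above, FLOPS and memory bandwidth, interact: a chip only reaches its peak FLOPS when a workload performs enough arithmetic per byte it moves. A common way to reason about this is the roofline model, sketched below. The accelerator figures (1000 TFLOPS peak, 3 TB/s bandwidth) are hypothetical round numbers, not the specifications of any chip named here, and the function names are invented for the example.

```python
def matmul_intensity(m: int, n: int, k: int, bytes_per_element: int = 2) -> float:
    """Arithmetic intensity (FLOPs per byte) of an (m x k) @ (k x n) matmul.

    Assumes each input and output element moves to or from memory exactly once.
    """
    flops = 2 * m * n * k                       # one multiply + one add per term
    bytes_moved = bytes_per_element * (m * k + k * n + m * n)
    return flops / bytes_moved


def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline model: performance is capped by compute or by memory traffic."""
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)


PEAK_TFLOPS = 1000.0     # hypothetical accelerator
BANDWIDTH_TB_S = 3.0     # hypothetical accelerator

# Large square matmul (typical of training): high intensity.
training_like = attainable_tflops(
    PEAK_TFLOPS, BANDWIDTH_TB_S, matmul_intensity(4096, 4096, 4096))

# Matrix-vector product (typical of single-request inference): low intensity.
inference_like = attainable_tflops(
    PEAK_TFLOPS, BANDWIDTH_TB_S, matmul_intensity(4096, 1, 4096))

print(f"large matmul:  {training_like:.1f} TFLOPS attainable")
print(f"matrix-vector: {inference_like:.2f} TFLOPS attainable")
```

Under these assumptions the large matmul is compute-bound and hits the 1000 TFLOPS ceiling, while the matrix-vector product is memory-bound at just under 3 TFLOPS. This is why memory bandwidth and interconnect speed appear alongside FLOPS as key metrics.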

Emerging AI Chip Technologies

Neuromorphic Computing

Neuromorphic computing is an experimental approach that mimics the structure and function of the human brain, aiming to process information more efficiently and naturally. BrainChip’s Akida processor exemplifies this trend, using spiking neural networks to achieve ultra-low power consumption and real-time learning capabilities.

These chips are particularly promising for edge applications like robotics and industrial IoT, where energy efficiency and adaptability are critical.

Intel’s Loihi chip is another leader in the neuromorphic space, designed to handle asynchronous spiking neural networks. This technology enables more efficient processing of sensory data, opening new possibilities for AI-driven robotics, prosthetics, and other real-time systems.
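To make the idea of a spiking neural network concrete, here is a minimal leaky integrate-and-fire neuron, a textbook spiking model. It is a sketch for intuition only and does not represent the neuron models actually used in Akida or Loihi; the function name and parameter values are assumptions.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    Each step the membrane potential decays by `leak`, then adds the input.
    When it reaches `threshold`, the neuron emits a spike (1) and resets.
    """
    potential = reset
    spikes = []
    for current in input_current:
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = reset
        else:
            spikes.append(0)
    return spikes


# A steady weak input makes the neuron fire periodically.
steady = simulate_lif([0.3] * 10)
print(steady)  # [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]

# No input means no spikes, and therefore no downstream work.
silent = simulate_lif([0.0] * 5)
print(silent)  # [0, 0, 0, 0, 0]
```

The output is sparse: most time steps produce no spike. Event-driven hardware can exploit that sparsity by doing work only when a spike arrives, which is the main source of the power savings described above.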

In-Memory Computing

In-memory computing eliminates the traditional bottleneck between memory and processing units, enabling data to be processed directly where it is stored. Mythic, a key player in this space, has developed analog AI processors that combine computation and storage to deliver exceptional energy efficiency.

This technology is particularly well-suited for edge AI, where devices must process large volumes of data in real time without relying on cloud resources. By reducing latency and power consumption, in-memory chips could revolutionize industries ranging from healthcare diagnostics to autonomous drones.
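One common way analog in-memory designs compute is with a resistive crossbar: weights are stored as conductances, inputs are applied as voltages, and the currents that sum on each output line form a matrix-vector product. The sketch below models that idea numerically. It is a conceptual illustration under idealized assumptions (no noise, no device variation), not a description of Mythic's actual architecture, and the function name is invented for the example.

```python
def crossbar_matvec(conductances, voltages):
    """Idealized analog crossbar: output currents = G^T applied to V.

    conductances[i][j] is the stored weight connecting input row i to
    output column j. By Ohm's law each cell passes G * V of current, and
    by Kirchhoff's current law the currents on a column add up.
    """
    rows = len(conductances)
    cols = len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("one input voltage is required per row")
    currents = [0.0] * cols
    for i in range(rows):
        for j in range(cols):
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Hypothetical 2x2 weight array and input vector (arbitrary units).
weights = [[1.0, 0.5],
           [0.0, 2.0]]
inputs = [2.0, 1.0]

outputs = crossbar_matvec(weights, inputs)
print(outputs)  # [2.0, 3.0]
```

In software the nested loop reads every weight from memory. In the physical crossbar the weights never move: the multiply and the sum happen in place, which is where the latency and energy savings come from.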

Custom AI Chips by Tech Giants

Leading AI developers are increasingly designing their own hardware to optimize performance for their specific workloads:

- OpenAI: Reportedly developing its first in-house AI training chip, expected to launch in 2026. The chip is said to use a 3nm process and high-bandwidth memory, tailored for large-scale language models like GPT-5.

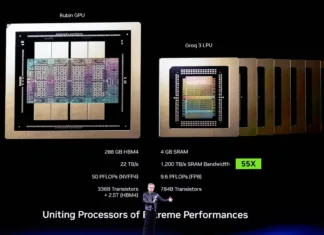

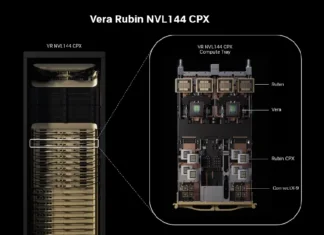

- NVIDIA: Preparing its Rubin architecture as the successor to Blackwell GPUs, pairing the GPU with NVIDIA’s Vera CPU and HBM4 memory. Rubin is expected to raise the performance bar for data center AI workloads.

These custom chips reflect a broader trend toward vertical integration, where companies optimize both hardware and software for their unique requirements. While this approach boosts performance, it also risks fragmenting the AI hardware market by creating proprietary ecosystems.

AI Chip Innovations for Sustainability

As AI workloads grow, so do concerns about their environmental impact. Liquid cooling systems, chiplet-based designs, and dynamic power management are emerging as solutions to reduce energy consumption in data centers.

Companies like AMD and AWS are incorporating these innovations into their next-generation chips, emphasizing sustainability as a core priority.

Research into alternative materials and energy-efficient architectures is also gaining traction. For example, neuromorphic and in-memory chips are designed to consume far less power on suitable workloads, making them promising candidates for sustainable AI processing.

Manufacturing Complexities and Supply Chain Challenges

Producing AI chips is a highly intricate process, requiring advanced fabrication techniques and cutting-edge materials. Companies like NVIDIA and Cerebras Systems push the boundaries of semiconductor technology, but this comes with challenges.

NVIDIA’s reliance on TSMC for its 4nm and upcoming 3nm nodes exemplifies the industry’s dependence on a limited number of foundries. This reliance creates vulnerabilities in the supply chain, as seen during recent global semiconductor shortages.

Cerebras Systems, with its Wafer-Scale Engine (WSE), faces unique manufacturing challenges due to the chip’s unprecedented size. Its enormous surface area requires specialized cooling solutions and extreme precision during fabrication.

Additionally, the environmental impact of producing these chips, particularly the energy and water consumption in fabs, raises sustainability concerns.

Market Barriers and Accessibility

While AI chips revolutionize industries, high costs often keep them out of reach for smaller businesses and independent developers. For instance, NVIDIA’s flagship H100 GPU has been reported to cost upwards of $30,000 per unit, limiting adoption largely to well-funded enterprises and research institutions.

Proprietary ecosystems present another barrier. Chips like Google’s TPU are programmed through the XLA compiler and are best supported in TensorFlow and JAX; PyTorch runs on them through the separate PyTorch/XLA package, which adds friction for developers who work mainly in PyTorch. This uneven cross-platform support hinders innovation and locks users into specific ecosystems, reducing flexibility.

Ethical and Social Implications

AI chips enable powerful technologies, but they also raise ethical concerns. For example, the deployment of NPUs and other AI accelerators in facial recognition systems has drawn criticism for its potential misuse in mass surveillance. In countries with weak privacy regulations, these capabilities are at greater risk of abuse, leading to societal pushback.

Similarly, the use of AI chips in military applications, such as autonomous drones and weapons systems, introduces moral dilemmas. Critics question the accountability and oversight of decisions made by AI-driven technologies in high-stakes scenarios. These concerns highlight the urgent need for regulatory frameworks to address the ethical dimensions of AI hardware deployment.