[Video] Office Hours in the Vegas Home Office

When you’ve got questions about architecture, development, or best practices, post ’em at https://pollgab.com/room/brento, and upvote the ones you’d like to see me cover. It’s like free bite-sized consulting.

Here’s what we covered this week:

- 00:00 Start

- 01:37 DBAInAction: Hi Brent, could you please suggest an archival/purging strategy for multi-TB tables to free up disk space? Also, is there a recommended method for designing an archiving/purging process for new databases before they become too large? Thank you!

- 03:47 MyTeaGotCold: I was surprised that Mastering Server Tuning never mentioned what you can do with files and filegroups in user databases. Do you find that they solve problems for you?

- 06:52 Forgetful: Do you ever recommend putting the tempdb log file on a different drive to the tempdb data files?

- 07:42 Adrian: Hi, would you enable tempdb ADR by default for new SQL 2025 servers? Any risks or negatives to consider, especially in combination with Availability Groups?

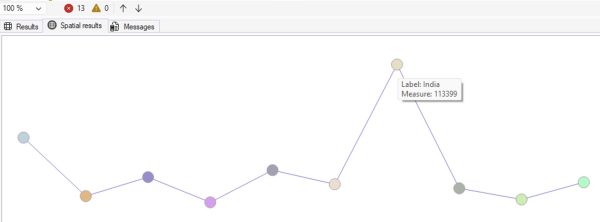

- 11:12 Dopinder: For SQL Server multi tenancy, one database per account seems optimal in limiting potential future corruption. However, doesn’t SQL AG tip over in the one database per account model due to AG database count limits?

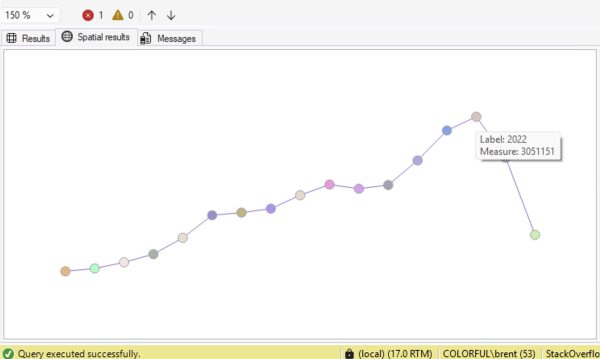

- 13:40 FrugalShaun: What’s your go-to stat for measuring SQL Server throughput? When I want a quick comparison with past performance I check Batch Requests/sec, but that shows requests received, not necessarily work actually completed. What do you rely on instead?

- 15:02 Micen1: I have a customer running MDS on SQL 2019. They are looking to run it on Azure SQL DB, but it seems that the MDS app still needs IIS on a VM? Do you know the future of MDS, or could you give your thoughts about its future and what to expect?

If your company is hiring, leave a comment. The rules:
