Hugging Face is an open-source platform that helps in building, training and deploying AI models for tasks like natural language processing, computer vision and audio. It offers libraries, ready-to-use models and tools that make it easier for developers, students and researchers to create and work with AI systems efficiently.

Core Components

Hugging Face Transformers provides core components that simplify the machine learning workflow, from data processing to model deployment, making development faster and more efficient.

1. Tokenizers

Tokenizers convert raw input (text, images or audio) into a format that models can process.

- Break input into tokens (subwords, words or IDs)

- Handle padding, truncation and attention masks

- Optimized for speed using Rust based implementations

- Ensure compatibility with specific model architectures

2. Pipeline

Pipeline provides a high level interface to run models quickly without managing internal complexities.

- Combines preprocessing, model inference and postprocessing

- Supports tasks like text classification, translation and image recognition

- Ideal for rapid prototyping and testing

- Reduces boilerplate code significantly

3. Datasets

Datasets library manages large scale data efficiently for training and evaluation.

- Access datasets across NLP, computer vision and audio domains

- Supports fast loading, filtering and transformation

- Enables streaming and memory efficient processing

- Integrates seamlessly with training pipelines

4. Transformers Library

Transformers is the core library that provides model architectures and training capabilities.

- Supports PyTorch, TensorFlow and JAX

- Includes state of the art architectures like BERT, GPT, ViT and more

- Enables fine tuning, training and inference

- Provides modular and reusable APIs for flexibility

5. Model Hub

Model Hub is a centralized repository for sharing and discovering models.

- Hosts thousands of pre trained and fine tuned models

- Allows versioning, collaboration and deployment

- Supports multiple modalities (text, vision, audio, multimodal)

- Encourages open source contributions and experimentation

6. Spaces

Spaces is a user-friendly platform that allows anyone to showcase machine learning models through interactive demos.

- Packages models in a simple interface, making them easy to use and share.

- Provides the computing resources needed to host demos directly on the platform.

- Makes AI models accessible to all users, regardless of technical background.

7. Pre-trained Models

Pre-trained models are ready to use models trained on large datasets to accelerate development.

- Cover NLP, computer vision, audio and multimodal tasks

- Reduce training time and computational cost

- Can be fine tuned for specific use cases

- Examples include BERT, GPT, T5 and Vision Transformers (ViT)

How to Use Hugging Face

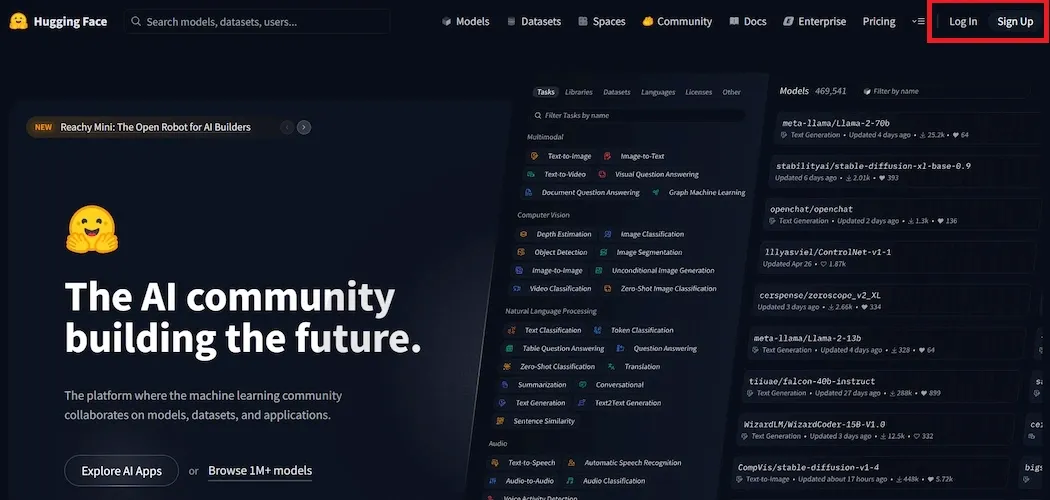

Step 1: Visit the Hugging Face Website

Enter the official Hugging Face website URL in your browser’s address bar. Once loaded, you will land on the homepage where various tools and features are displayed.

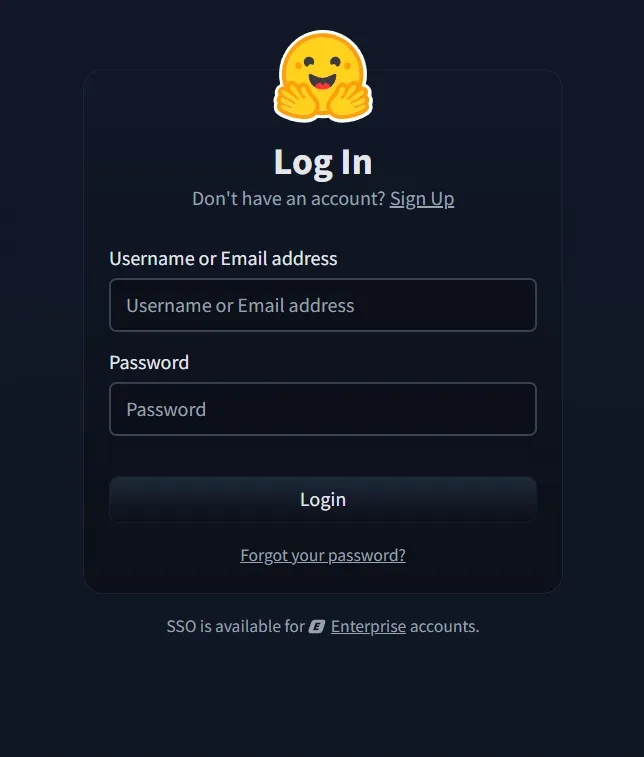

Step 2: Locate the Sign Up Button

Look for a "Sign Up" or "Log in" button displayed on the page. This button is typically found at the top of the website. Click on it and start the registration process.

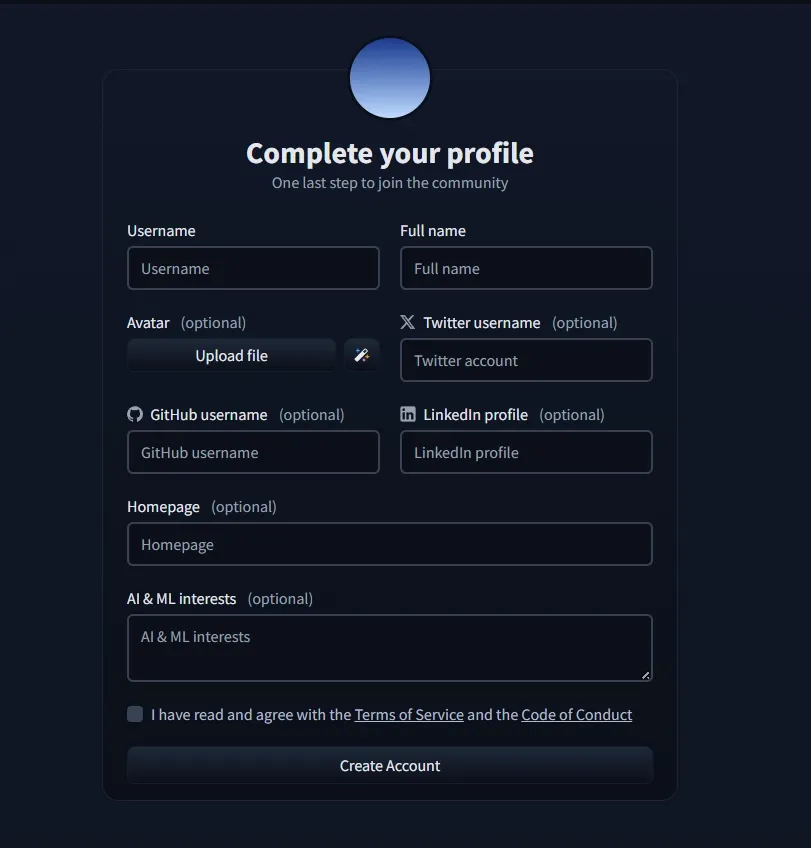

Step 3: Complete the Registration Form

Upon clicking the sign up button we will be directed to a registration page. Here we will need to provide some basic information including our email address, a preferred username and a secure password. Take a moment to carefully fill out the form.

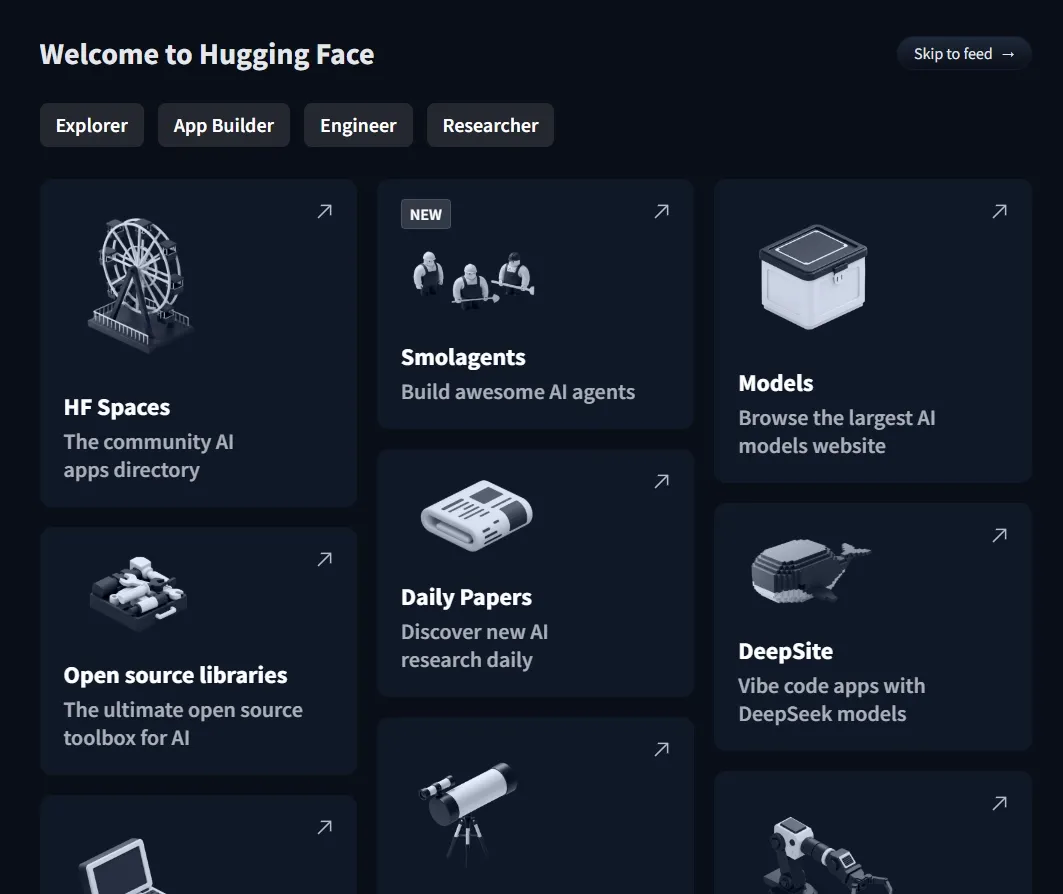

Step 4: Explore and Check for the various features of Hugging Faces

With our account, we can explore collaborative spaces, access pre trained models and engage with like minded individuals passionate about machine learning.

Applications

- Enable automated text classification for tasks such as sentiment analysis and spam detection.

- NER models extract important entities such as names, dates and locations from text.

- Transformers like T5 helps in question answering systems that extract precise answers from text or documents.

- Models like GPT3 and T5 can generate creative text and summarize long documents.

Challenges

- Large models like GPT and BERT require significant computational power which may be hard for users with limited resources.

- Scaling models for enterprise level use can be challenging and may require additional resources.

- Models shared by the community can vary in quality requiring users to assess them carefully.