Inspiration

Editing 30-second ads in After Effects is slow and painful. Prompt-based tools either spit out junk or break mid-render. We wanted a deterministic, code-first way to get studio-quality spots from a single prompt.

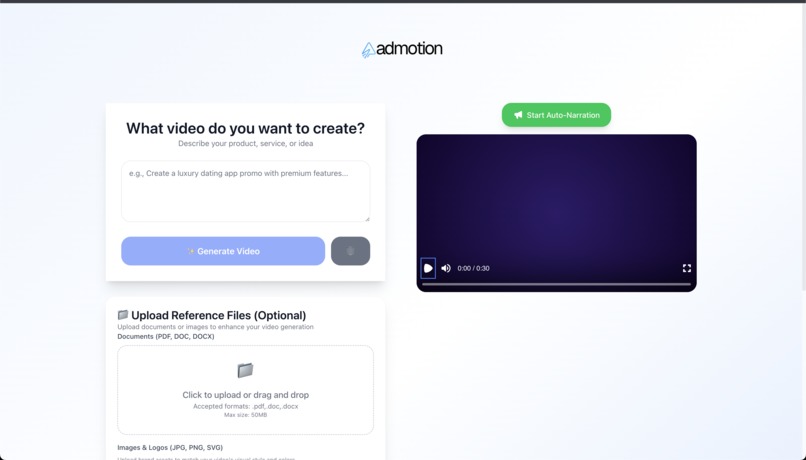

What it does

Takes a prompt → analyzes industry, tone, style → picks scene templates → generates React/Remotion components → renders a 1080p MP4 locally or on AWS Lambda.

Enhanced with advanced capabilities:

- Smart file upload system - Drag & drop brand assets (logos, documents, images)

- AI color extraction - Real-time brand color analysis using k-means clustering

- Voice narration - Auto-narrates video content with browser TTS

- Brand consistency - Videos automatically match uploaded brand colors and assets

How we built it

- Frontend: Next.js 15 with a 50/50 split layout, drag-and-drop file uploads, real-time color preview

- AI Engine: Claude 3.5 Sonnet in two roles: "director" (outputs the video structure as JSON) and "generator" (outputs the TSX code)

- Template System: 17+ advanced scene templates with dynamic color integration

- Color Analysis: Client-side k-means clustering for brand color extraction from uploaded images

- Voice Integration: Browser Speech Synthesis API for automatic video narration

- File Management: Robust upload system with image processing and document ingestion

- Rendering: Remotion with TransitionSeries, springs/interpolate, and particle systems
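
To make the color analysis step concrete, here is a minimal sketch of k-means over RGB pixels. It is not the project's code: the names `RGB`, `kMeansColors`, and `toHex` are made up for illustration, the deterministic "evenly spaced pixels" initialization is an assumption chosen so results are repeatable, and in the browser the pixel array would typically come from a downscaled canvas `getImageData` call.

```typescript
// Sketch only: dominant-color extraction with plain k-means.
// All names here are hypothetical, not taken from the project.
type RGB = [number, number, number];

function dist2(a: RGB, b: RGB): number {
  return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2 + (a[2] - b[2]) ** 2;
}

function kMeansColors(
  pixels: RGB[],
  k: number,
  maxIter = 20
): { color: RGB; count: number }[] {
  if (pixels.length === 0 || k <= 0) return [];

  // Assumption: deterministic init from evenly spaced pixels.
  const step = Math.max(1, Math.floor(pixels.length / k));
  let centroids: RGB[] = [];
  for (let i = 0; i < k && i * step < pixels.length; i++) {
    const p = pixels[i * step];
    centroids.push([p[0], p[1], p[2]]);
  }

  let counts: number[] = centroids.map(() => 0);
  for (let iter = 0; iter < maxIter; iter++) {
    const sums = centroids.map(() => [0, 0, 0]);
    counts = centroids.map(() => 0);

    // Assignment step: attach every pixel to its nearest centroid.
    for (const p of pixels) {
      let best = 0;
      for (let c = 1; c < centroids.length; c++) {
        if (dist2(p, centroids[c]) < dist2(p, centroids[best])) best = c;
      }
      sums[best][0] += p[0];
      sums[best][1] += p[1];
      sums[best][2] += p[2];
      counts[best]++;
    }

    // Update step: move each centroid to the mean of its pixels.
    let moved = false;
    centroids = centroids.map((old, c): RGB => {
      if (counts[c] === 0) return old; // keep empty clusters where they are
      const mean: RGB = [
        sums[c][0] / counts[c],
        sums[c][1] / counts[c],
        sums[c][2] / counts[c],
      ];
      if (dist2(mean, old) > 0.5) moved = true;
      return mean;
    });
    if (!moved) break; // converged
  }

  // Largest cluster first, channels rounded to integers.
  return centroids
    .map((c, i) => ({ color: c.map(Math.round) as RGB, count: counts[i] }))
    .sort((a, b) => b.count - a.count);
}

function toHex(color: RGB): string {
  return "#" + color.map((v) => v.toString(16).padStart(2, "0")).join("");
}
```

Downscaling the image before sampling and running the loop in a Web Worker are two common ways to keep this off the UI thread for large uploads.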

Challenges we ran into

- Getting Claude to always return valid JSON and compilable TSX

- Syncing scene timings to exactly 900 frames without drift

- Race conditions on parallel file writes and cleanup

- Color extraction performance - Processing large images without blocking UI

- File upload reliability - Handling multiple formats and error states gracefully

- Voice timing synchronization - Matching narration to video frame progression
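
One way to keep scene timings from drifting is to allocate integer frame counts from a fixed budget instead of rounding each scene on its own. The sketch below uses the largest-remainder method. It is an illustration under assumptions, not the project's implementation: `SceneSpec`, `allocateFrames`, and the `weight` field are hypothetical, and 900 frames is treated as 30 seconds at 30 fps. It also accounts for the fact that in Remotion's `TransitionSeries` a transition overlaps the two sequences around it, so the sequences have to sum to the target length plus the total transition length.

```typescript
// Sketch only: split a fixed frame budget across scenes without rounding drift.
// SceneSpec and allocateFrames are hypothetical names.
interface SceneSpec {
  id: string;
  weight: number; // relative share of screen time
}

const TOTAL_FRAMES = 900; // 30 s at 30 fps

function allocateFrames(
  scenes: SceneSpec[],
  totalFrames: number = TOTAL_FRAMES,
  transitionFrames = 0 // assumed equal length for every transition
): number[] {
  if (scenes.length === 0) return [];

  // Each transition overlaps its neighbours, so the sequences must add up
  // to the visible length plus everything the overlaps will swallow.
  const budget = totalFrames + (scenes.length - 1) * transitionFrames;

  const totalWeight = scenes.reduce((sum, s) => sum + s.weight, 0);
  if (totalWeight <= 0) throw new Error("weights must sum to a positive number");

  // Exact (fractional) share per scene, then round down.
  const exact = scenes.map((s) => (s.weight / totalWeight) * budget);
  const frames = exact.map(Math.floor);

  // Hand the leftover frames to the scenes with the largest fractional part.
  let leftover = budget - frames.reduce((a, b) => a + b, 0);
  const order = exact
    .map((e, i) => ({ i, frac: e - Math.floor(e) }))
    .sort((a, b) => b.frac - a.frac);
  for (let j = 0; leftover > 0; j = (j + 1) % order.length) {
    frames[order[j].i]++;
    leftover--;
  }
  return frames;
}
```

Because the leftover frames are distributed explicitly, the per-scene durations always sum to the budget exactly, whatever the weights are.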

Accomplishments that we're proud of

- End-to-end prompt → MP4 in a single command

- Advanced template system with 17+ reusable scenes (hero, features, stats, CTA, product showcases)

- Intelligent brand integration - Real color extraction and dynamic application

- Seamless file handling - Drag-and-drop with visual feedback and error recovery

- Voice narration system - Auto-reads video content with scene-by-scene timing

- Robust fallbacks and validation so renders rarely fail

- Smooth, modern motion that actually looks like an ad, not a slideshow

What we learned

- Color analysis - K-means clustering for dominant color extraction

- File processing patterns - Client-side image analysis without server overhead

- Strong prompting patterns for codegen and schema enforcement

- Deep Remotion internals: performance, transitions, particle systems

- Voice synthesis integration - Browser APIs for real-time narration

- How to structure a template system so designers and devs can both extend it
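
As an illustration of schema enforcement on model output, the sketch below pulls a JSON object out of a reply, validates it with a hand-written type guard, and falls back to a known-good plan if anything is off. The `ScenePlan` shape, the field names, and `parseScenePlan` are assumptions for this example; the project's real schema is not shown in this write-up.

```typescript
// Sketch only: defensive parsing of a model reply into a typed plan.
// ScenePlan and its fields are hypothetical.
interface ScenePlan {
  scenes: { template: string; durationInFrames: number }[];
}

function isRecord(v: unknown): v is Record<string, unknown> {
  return typeof v === "object" && v !== null;
}

function isScenePlan(v: unknown): v is ScenePlan {
  if (!isRecord(v) || !Array.isArray(v.scenes) || v.scenes.length === 0) {
    return false;
  }
  return v.scenes.every(
    (s) =>
      isRecord(s) &&
      typeof s.template === "string" &&
      typeof s.durationInFrames === "number" &&
      Number.isInteger(s.durationInFrames) &&
      s.durationInFrames > 0
  );
}

function parseScenePlan(reply: string, fallback: ScenePlan): ScenePlan {
  // Models often wrap JSON in prose or a code fence, so take the
  // outermost {...} span rather than parsing the whole reply.
  const start = reply.indexOf("{");
  const end = reply.lastIndexOf("}");
  if (start === -1 || end <= start) return fallback;

  try {
    const parsed: unknown = JSON.parse(reply.slice(start, end + 1));
    return isScenePlan(parsed) ? parsed : fallback;
  } catch {
    return fallback; // malformed JSON: use the known-good plan
  }
}
```

A schema library could replace the hand-written guard; the point of the sketch is only that nothing reaches the renderer without passing validation or being replaced by a fallback.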

What's next for Admotion

- Enhanced brand kit processing - Font extraction, style guide analysis

- Professional voice integration - ElevenLabs/VAPI for studio-quality narration

- Advanced color harmonies - Complementary and triadic color scheme generation

- Variable lengths (15/30/60s) and adaptive timing

- Music/SFX library and VO alignment with uploaded audio tracks

- Live preview editor with timeline scrub + per-scene edits

- Real-time collaboration - Multi-user editing with brand asset sharing

- Caching, DB storage, and A/B variant generation for marketers
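
The planned color harmonies can be sketched as hue rotations in HSL space: a complementary color sits 180° away from the brand color, and a triadic scheme uses 120° steps. This is one possible approach, not a committed design; the function names are hypothetical and the code assumes six-digit hex input such as `#ff0000`.

```typescript
// Sketch only: complementary and triadic schemes via HSL hue rotation.
// Function names are hypothetical; input must be six-digit hex.
type HSL = { h: number; s: number; l: number }; // h in degrees, s and l in [0, 1]

function hexToHsl(hex: string): HSL {
  const n = parseInt(hex.replace("#", ""), 16);
  const r = ((n >> 16) & 255) / 255;
  const g = ((n >> 8) & 255) / 255;
  const b = (n & 255) / 255;
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  const d = max - min;
  const l = (max + min) / 2;
  if (d === 0) return { h: 0, s: 0, l }; // grey: hue is undefined, use 0
  const s = d / (1 - Math.abs(2 * l - 1));
  let h: number;
  if (max === r) h = ((g - b) / d) % 6;
  else if (max === g) h = (b - r) / d + 2;
  else h = (r - g) / d + 4;
  return { h: (h * 60 + 360) % 360, s, l };
}

function hslToHex({ h, s, l }: HSL): string {
  const c = (1 - Math.abs(2 * l - 1)) * s; // chroma
  const x = c * (1 - Math.abs(((h / 60) % 2) - 1));
  const m = l - c / 2;
  const sector = Math.floor(h / 60) % 6;
  const [r, g, b] = [
    [c, x, 0],
    [x, c, 0],
    [0, c, x],
    [0, x, c],
    [x, 0, c],
    [c, 0, x],
  ][sector];
  return (
    "#" +
    [r, g, b]
      .map((v) => Math.round((v + m) * 255).toString(16).padStart(2, "0"))
      .join("")
  );
}

function rotateHue(hex: string, degrees: number): string {
  const hsl = hexToHsl(hex);
  return hslToHex({ ...hsl, h: (((hsl.h + degrees) % 360) + 360) % 360 });
}

function complementary(hex: string): string[] {
  return [hex, rotateHue(hex, 180)];
}

function triadic(hex: string): string[] {
  return [hex, rotateHue(hex, 120), rotateHue(hex, 240)];
}
```

For example, `triadic("#ff0000")` yields red, green, and blue, which is the textbook triad for a pure red base color.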

Built With

- 3.5

- amazon

- amazon-web-services

- api

- claude

- css

- framer

- lambda

- motion

- next.js

- node.js

- react

- remotion

- remotion/transitions

- s3

- sonnet

- tailwind

- typescript
