Inspiration

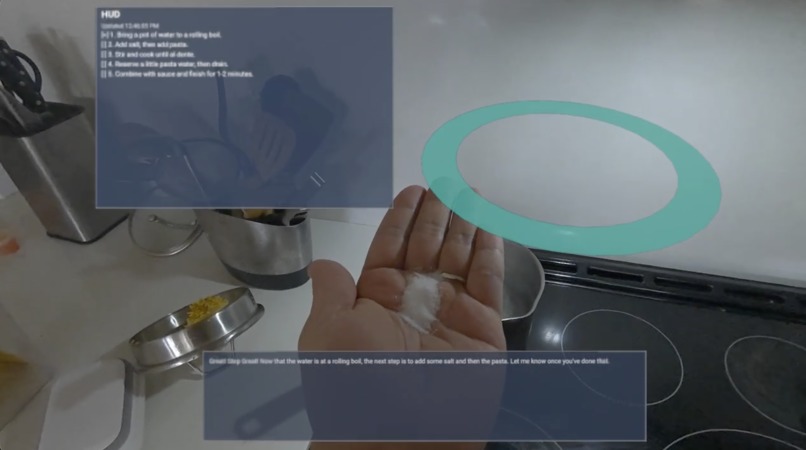

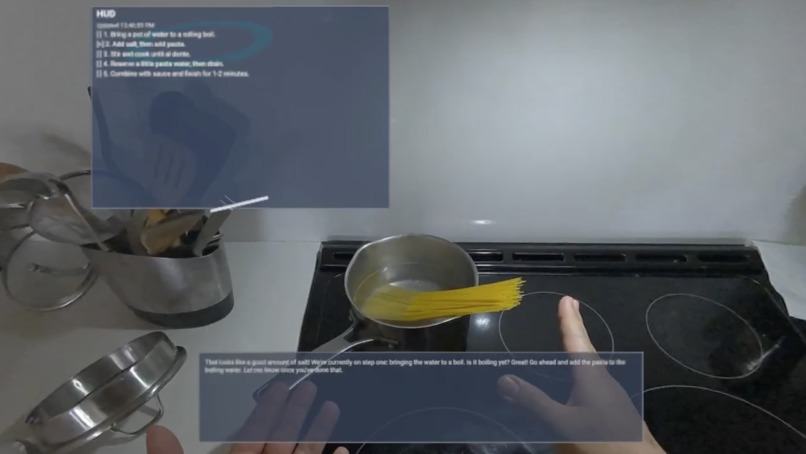

This project explores how real-time multimodal AI can assist with physical tasks by understanding human actions through vision and audio. It began as a personal exploration of how AI is transforming hands-on work. ApronAI turns that idea into a demo: a real-time kitchen copilot that watches what you are doing, listens to you, and guides you step by step.

What it does

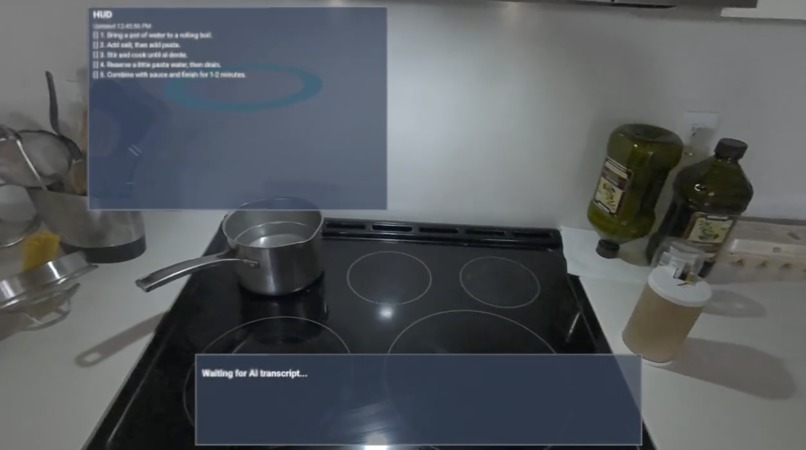

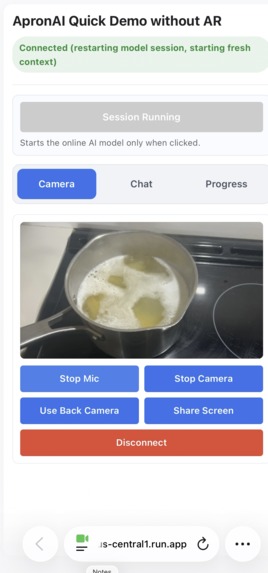

- Streams microphone audio and camera frames to Gemini Live over WebSocket.

- Returns low-latency voice responses.

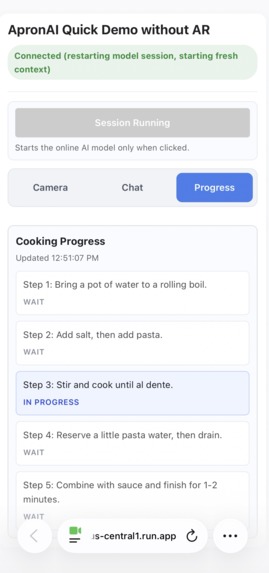

- Tracks recipe progress with explicit memory checkpoints.

- Surfaces progress via a live AR HUD.

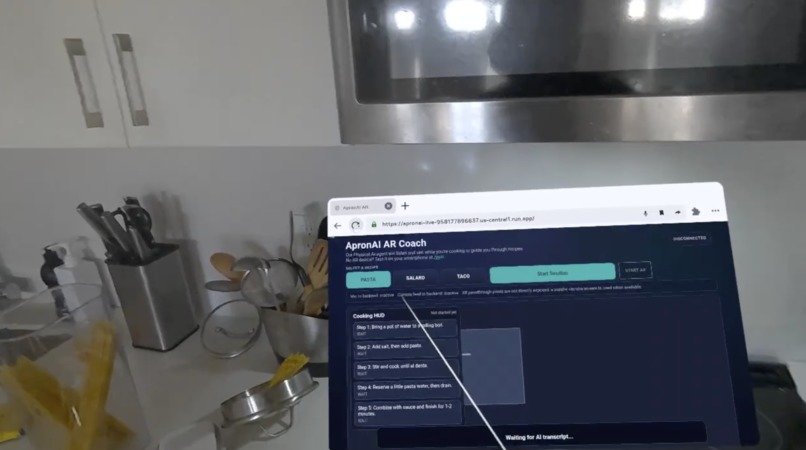

- Supports multiple recipes loaded dynamically from `knolwedge/*.json`.

- Provides two frontends:

  - `/` for AR/WebXR mode.

  - `/eval` for smartphone camera/chat evaluation mode.
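
As a rough illustration of loading recipes dynamically from JSON files, here is a minimal sketch. The function name `load_recipes` and the assumed schema (a JSON object with a non-empty `steps` list) are hypothetical; the project's actual file layout and loader may differ.

```python
import json
from pathlib import Path


def load_recipes(knowledge_dir):
    """Return {recipe_id: recipe_dict} for every *.json file in knowledge_dir.

    The recipe id is taken from the file name (without extension).
    Assumed schema (hypothetical): a JSON object with a non-empty "steps" list.
    """
    recipes = {}
    for path in sorted(Path(knowledge_dir).glob("*.json")):
        with path.open(encoding="utf-8") as f:
            recipe = json.load(f)
        # Skip files that do not match the assumed schema.
        if not isinstance(recipe, dict) or not recipe.get("steps"):
            continue
        recipes[path.stem] = recipe
    return recipes
```

Because the directory is scanned each time the function runs, dropping a new JSON file into it would make the recipe available without touching frontend code.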

How we built it

- Backend: FastAPI with a WebSocket bridge (`main.py`).

- Model integration: Gemini Live wrapper using `google-genai` (`gemini_live.py`).

- Frontend: Vanilla JS media pipeline + Three.js AR UI.

- Memory and progress: Recipe-aware explicit memory and shared progress store (`progress_tracker.py`).

- Knowledge system: Recipe prompts and steps in JSON files under `knolwedge/`.

- Reliability: Session restart/resumption flow, queue backpressure handling, mobile HTTPS support.
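
One common way to handle queue backpressure for real-time media is a bounded queue that discards the oldest item when full, so the model always sees recent frames. The sketch below shows that idea with `asyncio.Queue`. The class name `DropOldestQueue` is hypothetical, and the drop-oldest policy is an assumption, not necessarily what ApronAI does.

```python
import asyncio


class DropOldestQueue:
    """Bounded queue that evicts the oldest item instead of blocking the producer."""

    def __init__(self, maxsize):
        self._queue = asyncio.Queue(maxsize=maxsize)
        self.dropped = 0  # count of evicted items, useful for logging

    def put_nowait(self, item):
        # When full, discard the stalest item to make room for the new one.
        if self._queue.full():
            self._queue.get_nowait()
            self.dropped += 1
        self._queue.put_nowait(item)

    async def get(self):
        # Wait until an item is available, then return it.
        return await self._queue.get()

    def qsize(self):
        return self._queue.qsize()


async def demo():
    queue = DropOldestQueue(maxsize=2)
    for frame_id in range(5):  # producer outpaces the consumer
        queue.put_nowait(frame_id)
    latest = [await queue.get(), await queue.get()]
    return latest, queue.dropped
```

Dropping stale camera frames is usually acceptable because a newer frame supersedes them. Audio chunks are less tolerant of loss, so a real pipeline might use a different policy for each stream.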

Challenges we ran into

- Session quality degraded in longer multimodal conversations when both audio and video were active.

- Context continuity dropped over time, causing repeated questions.

- Mobile and AR constraints (TLS trust, permissions, browser-specific behavior) complicated startup reliability.

To address the session-quality and continuity problems, we combined:

- Context window compression with a sliding/context-shifting strategy for longer sessions.

- Explicit memory management to preserve task state across context compression and session restarts.
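
To make the explicit-memory idea concrete, here is a minimal sketch of a progress record kept outside the model's context. It can be serialized across a session restart and rendered as a short text summary to re-inject into a fresh session. The class `RecipeProgress`, its fields, and the summary format are all hypothetical and not taken from `progress_tracker.py`.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class RecipeProgress:
    recipe_id: str
    steps: list
    completed: list = field(default_factory=list)  # indices of finished steps

    def mark_done(self, index):
        if not 0 <= index < len(self.steps):
            raise IndexError(f"no step at index {index}")
        if index not in self.completed:
            self.completed.append(index)

    def next_step(self):
        """Return the index of the first unfinished step, or None if all are done."""
        for i in range(len(self.steps)):
            if i not in self.completed:
                return i
        return None

    def to_prompt(self):
        """Render a checkpoint summary suitable for seeding a restarted session."""
        lines = [f"Recipe: {self.recipe_id}"]
        for i, step in enumerate(self.steps):
            mark = "x" if i in self.completed else " "
            lines.append(f"[{mark}] {i + 1}. {step}")
        nxt = self.next_step()
        lines.append("All steps complete." if nxt is None else f"Resume at step {nxt + 1}.")
        return "\n".join(lines)

    def to_json(self):
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, payload):
        return cls(**json.loads(payload))
```

Since the state lives outside the conversation, it would survive both context compression and a full reconnect. The restarted session only needs the short summary, not the earlier transcript.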

Accomplishments that we're proud of

- Stable real-time audio/video + voice-response loop.

- Long practical session duration through compression + session recovery.

- Explicit step memory that keeps the assistant on track.

- AR mode with in-scene HUD/transcript plus `/eval` fallback for broader device support.

- Dynamic recipe selection from backend knowledge files without hardcoded frontend prompts.

What we learned

- Compression extends session length, but explicit memory is critical for continuity quality.

- Real-time systems need robust queueing/restart behavior, not only model prompts.

- Mobile and AR browser security models must be treated as first-class engineering constraints.

- Good observability (tests, logs, API checks) dramatically reduces debugging time.

What's next for ApronAI

- Richer structured memory (timers, ingredient state, parallel steps).

- More recipes and tool integrations (timers, substitutions, pantry-aware guidance).

- Better AR-first interaction patterns for hands-busy usage.

- Better AR overlay UI (e.g., using SLAM mapping information to anchor overlays onto objects).

- Production hardening: auth, analytics, and multi-user deployment posture.

Built With

- gemini

- google-cloud

- python

- websocket
