Inspiration

People have immense potential to achieve great things, but one of the biggest obstacles they face is communicating their ideas effectively. Miscommunication can lead to misunderstandings, missed opportunities, and even conflict. I believe that if everyone had the tools to understand each other better, we could foster deeper connections and build more meaningful relationships. Effective communication is not just about conveying information; it’s the foundation of teamwork, collaboration, and problem-solving. When individuals can articulate their thoughts clearly and understand others, teams and groups work more efficiently, especially under pressure or in high-stakes situations. I envision a world where better communication leads to stronger, more cohesive communities and teams that can tackle challenges together.

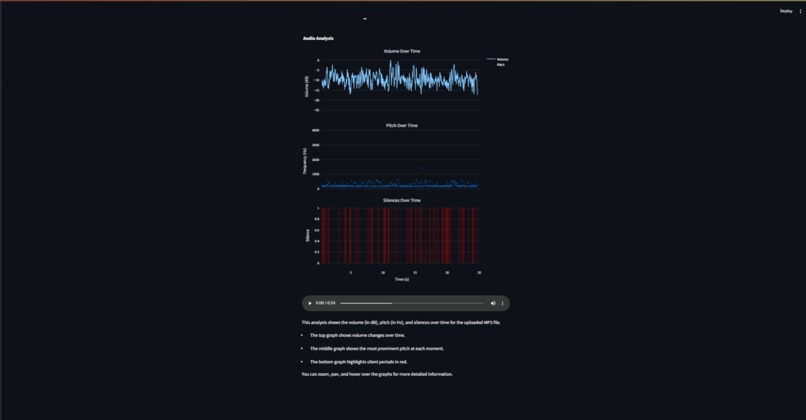

What it does

BetterSpeak analyzes how individuals communicate using computer vision and audio processing. The application evaluates facial expressions, tone, speaking pace, and overall conversational style in real time. From these data points, it gives users insight into their communication habits, identifying strengths and areas for improvement. Whether in a professional setting, where clarity and confidence are paramount, or in casual social interactions, where rapport and engagement matter, BetterSpeak provides tailored feedback designed to make the user's conversations more effective. Users receive detailed, personalized tips and recommendations for improving conversation flow, clarity, and engagement.
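Speaking pace is one of the simpler signals to quantify. The sketch below shows one way it could be computed, assuming a speech-to-text step already returns each recognized word with start and end timestamps in seconds. The `Word` record, the `speaking_pace_wpm` and `long_pauses` helpers, and the 1-second pause threshold are all hypothetical illustrations, not BetterSpeak's actual code.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Word:
    """One recognized word with start/end times in seconds (hypothetical transcript format)."""
    text: str
    start: float
    end: float


def speaking_pace_wpm(words: list[Word]) -> float:
    """Words per minute, measured from the first word's start to the last word's end."""
    if not words:
        return 0.0
    duration = words[-1].end - words[0].start
    if duration <= 0:
        return 0.0
    return len(words) / duration * 60.0


def long_pauses(words: list[Word], min_gap: float = 1.0) -> list[tuple[float, float]]:
    """Return (start, end) of silent gaps between consecutive words lasting at least min_gap seconds.

    The 1-second default is an assumed threshold, not a tuned value.
    """
    gaps = []
    for prev, cur in zip(words, words[1:]):
        if cur.start - prev.end >= min_gap:
            gaps.append((prev.end, cur.start))
    return gaps
```

For example, four words spoken over three seconds give 80 words per minute, and a 1.3-second silence between "today" and "we" is reported as a long pause.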

How we built it

We developed BetterSpeak in Python, chosen for its robust ecosystem and flexibility, and used Streamlit as the front-end framework to create an intuitive, user-friendly interface. For real-time visual analysis, we integrated MediaPipe, Google's machine learning framework for face and body tracking, which lets us capture facial expressions and body language. On the audio side, we used PyAudio to capture speech from the microphone so we could analyze tone, pace, and modulation. Together, these components assess both the visual and auditory aspects of communication, giving a holistic view of how users express themselves. We also applied signal processing and natural language processing techniques to generate meaningful, context-aware feedback that adapts to different conversational styles and settings.
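PyAudio hands back raw byte buffers, so tone analysis starts with decoding those bytes and extracting features. The following is a minimal, standard-library-only sketch, assuming a mono stream opened with `format=pyaudio.paInt16` (16-bit samples in native byte order). It computes RMS loudness and estimates pitch with plain autocorrelation, a textbook technique used here as a stand-in; it is not necessarily what BetterSpeak does. The helper names, the 75–400 Hz search range, and the silence threshold are assumptions.

```python
from __future__ import annotations

import array
import math


def decode_int16(frame: bytes) -> list[float]:
    """Convert a 16-bit PCM buffer (assumed mono, native byte order) to floats in [-1, 1]."""
    samples = array.array("h")
    samples.frombytes(frame)
    return [s / 32768.0 for s in samples]


def rms(samples: list[float]) -> float:
    """Root-mean-square amplitude, used here as a simple loudness proxy."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def estimate_pitch(samples: list[float], rate: int,
                   fmin: float = 75.0, fmax: float = 400.0) -> float:
    """Estimate fundamental frequency (Hz) from the strongest autocorrelation peak.

    Only lags corresponding to fmin..fmax (an assumed speaking range) are searched.
    Returns 0.0 for near-silent frames or when no positive correlation is found.
    """
    if rms(samples) < 1e-3:  # assumed silence threshold
        return 0.0
    min_lag = int(rate / fmax)
    max_lag = min(int(rate / fmin), len(samples) - 1)
    best_lag, best_corr = 0, 0.0
    for lag in range(min_lag, max_lag + 1):
        corr = sum(samples[i] * samples[i + lag] for i in range(len(samples) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return rate / best_lag if best_lag else 0.0
```

In a live loop, each buffer from `stream.read(...)` would be passed through `decode_int16`, and the per-frame pitch and loudness could then be tracked over time to describe tone and modulation. Plain autocorrelation is easy to follow but prone to octave errors on real voices, so a production system would likely use a more robust pitch tracker.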

Challenges we ran into

Achieving real-time, synchronized analysis of both visual and audio data was a significant challenge. We had to balance accurate, in-depth analysis against the need for smooth, real-time feedback: keeping latency low while processing both facial expressions and speech, without sacrificing precision, took multiple rounds of optimization. Ensuring that the feedback was not only accurate but also appropriate across a wide range of conversational styles was also complex, and fine-tuning our algorithms for users from different backgrounds and environments took considerable effort. Another challenge was making the interface intuitive and accessible to users, regardless of their technical background.
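One common way to keep video and audio analysis in step is to timestamp every sample at capture time and pair each video frame with the nearest audio feature. The sketch below illustrates that idea; the `align_streams` name, the `(timestamp, value)` tuple format, and the 50 ms tolerance are hypothetical choices, not a description of how BetterSpeak actually synchronizes its streams.

```python
from __future__ import annotations

import bisect


def align_streams(video, audio, tolerance=0.05):
    """Pair each (timestamp, value) video sample with the nearest-in-time audio sample.

    Both inputs must be sorted by timestamp (seconds). A video sample with no audio
    sample within `tolerance` seconds is paired with None rather than a stale value.
    """
    audio_times = [t for t, _ in audio]
    pairs = []
    for t, v in video:
        i = bisect.bisect_left(audio_times, t)
        # The nearest audio sample is either at index i or immediately before it.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(audio)]
        best = min(candidates, key=lambda j: abs(audio_times[j] - t), default=None)
        if best is not None and abs(audio_times[best] - t) <= tolerance:
            pairs.append((t, v, audio[best][1]))
        else:
            pairs.append((t, v, None))
    return pairs
```

Using binary search keeps each lookup at O(log n), which matters when the match has to run for every video frame without adding noticeable latency.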

Accomplishments that we're proud of

One of our major accomplishments was integrating real-time audio and visual analysis into a cohesive user experience. We’re particularly proud of creating a tool that’s both sophisticated and easy to use, offering meaningful insights without overwhelming the user. Another achievement is the depth of analysis the application provides. Despite the challenges, we developed an approach that accounts for the nuances of communication, from non-verbal cues like facial expressions to the subtle shifts in tone and pacing that can make or break a conversation. Personally, I am proud of this application and genuinely enjoyed building it. It was a meaningful project that allowed me to apply and expand my skills as a software engineer, and it has fueled my passion for creating technology that can have a real, positive impact on people’s lives.

What we learned

This project taught us the importance of balancing technical precision with user experience. Communication is multifaceted, and analyzing it requires a combination of visual and audio data. We learned that while accuracy is critical, the insights must also be presented in a way that users can easily understand and apply. We discovered how complex and diverse human communication can be, with varying speaking styles, expressions, and tones that require adaptable algorithms. Through trial and error, we gained valuable insights into the importance of context when analyzing communication patterns, as different settings demand different approaches to feedback.

What's next for BetterSpeak

Looking ahead, we plan to enhance BetterSpeak by integrating machine learning models that can generate even more personalized feedback for users, continually improving based on their communication history. This will allow the system to offer dynamic feedback tailored to the specific challenges each user faces. Additionally, we aim to expand the platform’s capabilities to analyze group dynamics, helping users not just in one-on-one conversations but also in team settings or public speaking environments. We’re also exploring the development of a mobile version, enabling users to receive real-time communication analysis on the go. To make BetterSpeak more accessible globally, we plan to include multilingual support, allowing users to benefit from the tool in their native language.
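Feedback that improves with a user's communication history does not have to start with a trained model. As a simpler stand-in for the machine learning approach described above, one could keep a running mean and standard deviation of each metric per user and flag sessions that deviate from that user's own norm. The sketch below does this with Welford's online algorithm; the `RunningBaseline` class and its methods are hypothetical names for illustration only.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class RunningBaseline:
    """Running mean and variance of one metric across a user's sessions (Welford's algorithm)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        """Fold one new session measurement into the baseline."""
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    @property
    def std(self) -> float:
        """Sample standard deviation; 0.0 until at least two sessions are recorded."""
        return math.sqrt(self.m2 / (self.count - 1)) if self.count > 1 else 0.0

    def z_score(self, value: float) -> float | None:
        """How unusual `value` is for this user; None when there is not enough history."""
        if self.count < 2 or self.std == 0.0:
            return None
        return (value - self.mean) / self.std
```

For instance, after sessions at 140, 150, and 160 words per minute, a 180 wpm session scores 3.0, which could trigger a "you're speaking faster than usual" tip. Because the baseline updates in constant memory, it can keep adapting as each new session arrives.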

Built With

- openai

- python

- streamlit
