Inspiration

The day before the hackathon, our team went out to grab dinner on Clifton. We noticed just how much litter covered the streets of the Queen City, so when we saw that Social Responsibility was a track for this year's UC hackathon, we knew what we had to do.

What it does

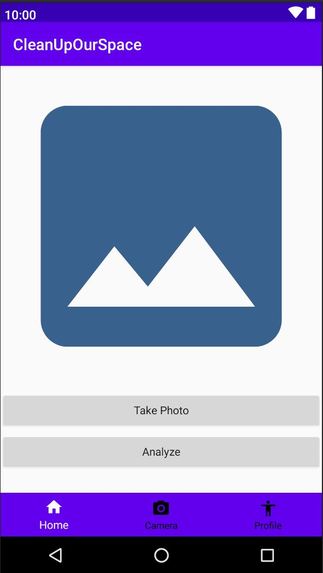

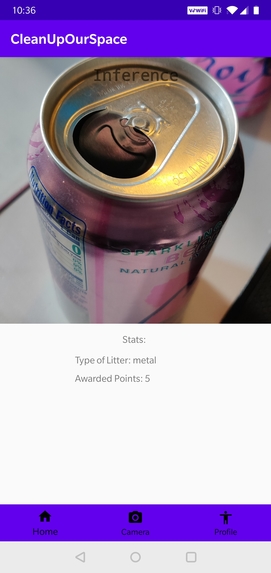

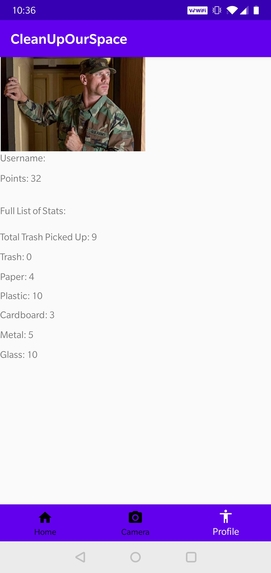

We designed an alpha version of an Android app that uses a neural network we trained with the Google Cloud services provided by our sponsors. The user snaps a photo of any piece of trash they pick up, and the app classifies it into one of the six categories the network was trained on. Based on the category, the user is awarded a point value. In future versions, we hope to create a rewards system where points can be redeemed, to further encourage users to keep picking up trash.
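As a rough illustration of the classify-then-award step described above, here is a minimal Java sketch. It assumes the classifier returns one score per category; the category names, the point values, and the `LitterPoints` class itself are hypothetical placeholders, not the ones used in our app.

```java
import java.util.EnumMap;
import java.util.Map;

// Hypothetical sketch: category names and point values are placeholders,
// not the ones used by the actual app.
final class LitterPoints {

    // Six illustrative categories (assumed names; the app only fixes the count).
    enum Category { CARDBOARD, GLASS, METAL, PAPER, PLASTIC, OTHER }

    // Assumed point value per category.
    private static final Map<Category, Integer> POINTS = new EnumMap<>(Category.class);
    static {
        POINTS.put(Category.CARDBOARD, 5);
        POINTS.put(Category.GLASS, 20);
        POINTS.put(Category.METAL, 15);
        POINTS.put(Category.PAPER, 5);
        POINTS.put(Category.PLASTIC, 10);
        POINTS.put(Category.OTHER, 1);
    }

    // Returns the category with the highest score in the classifier output.
    static Category topCategory(float[] scores) {
        Category[] all = Category.values();
        if (scores == null || scores.length != all.length) {
            throw new IllegalArgumentException("expected " + all.length + " scores");
        }
        int best = 0;
        for (int i = 1; i < scores.length; i++) {
            if (scores[i] > scores[best]) {
                best = i;
            }
        }
        return all[best];
    }

    // Looks up the points awarded for a classified piece of litter.
    static int pointsFor(Category category) {
        return POINTS.get(category);
    }

    public static void main(String[] args) {
        // Made-up scores standing in for one model prediction.
        float[] scores = {0.05f, 0.10f, 0.70f, 0.05f, 0.05f, 0.05f};
        Category category = topCategory(scores);
        System.out.println(category + " earns " + pointsFor(category) + " points");
    }
}
```

Keeping the point table in one `EnumMap` would make it easy to rebalance values later, for example once a rewards system exists.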

How we built it

The app was developed in Android Studio using Java. Python was used to prepare the data sets and to convert between model architectures for the neural network. The neural network itself was built and trained using Google Cloud services. Finally, we used a Git repository to share files and keep all of our data organized.

Challenges we ran into

We initially had some trouble linking pieces of the UI to the underlying code, as nobody in our group had used Android Studio before. For the neural network, the challenge was finding enough good data while still leaving time to tune the parameters and train the network. We also had some difficulty importing our trained model into the app development suite.

Accomplishments that we're proud of

We trained our neural network to reach 94.9% precision and 88.5% recall. It can classify six different types of litter commonly found around us.
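For readers unfamiliar with the two metrics, precision is the share of predictions for a category that were correct, and recall is the share of actual items in that category that the model found. The sketch below computes both from raw counts. The counts in `main` are made up for illustration and are not our evaluation data, and the `PrecisionRecall` class is a hypothetical helper, not part of the app.

```java
// Hypothetical helper: shows the standard definitions of precision and
// recall. The counts used in main are invented, not the project's results.
final class PrecisionRecall {

    // precision = TP / (TP + FP); returns 0 when nothing was predicted.
    static double precision(int truePositives, int falsePositives) {
        int predicted = truePositives + falsePositives;
        return predicted == 0 ? 0.0 : (double) truePositives / predicted;
    }

    // recall = TP / (TP + FN); returns 0 when there were no actual positives.
    static double recall(int truePositives, int falseNegatives) {
        int actual = truePositives + falseNegatives;
        return actual == 0 ? 0.0 : (double) truePositives / actual;
    }

    public static void main(String[] args) {
        // Illustrative counts for a single category.
        int tp = 90, fp = 10, fn = 30;
        System.out.printf("precision=%.3f recall=%.3f%n",
                precision(tp, fp), recall(tp, fn));
    }
}
```

A precision higher than recall, as in our results, suggests the model is rarely wrong when it names a category but misses some items that belong to it.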

What we learned

We learned a great deal about app development, about training and using neural networks, and about combining multiple pieces of functionality in a single application.

What's next for CleanUpOurSpace

A potential beta version of the app would incorporate an in-app camera rebuilt from the ground up, along with quality-of-life improvements to the activities and the use of fragments to make the app run more smoothly. The UI would get an update to be less bland, with more color and softer shapes. The neural network could be trained on more data and learn to distinguish more categories that require more complex judgment. Eventually, the dev team could work with the school and local businesses to create a proper reward system where awarded points could be spent on things such as coupons, tickets, and prizes. After that, optimizing the app for the Google Play Store and the Apple App Store would be the next major milestone.

Built With

- a.i.

- android-studio

- google-cloud

- java

- machine-learning

- python

- social-responsibility

- tensorflow
