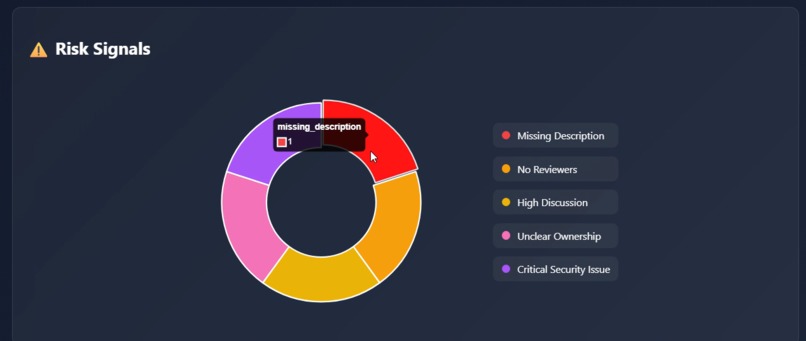

Risk Signals Visualization: Complex MR risk factors transformed into clear, interactive visuals for quick understanding and decision-making.

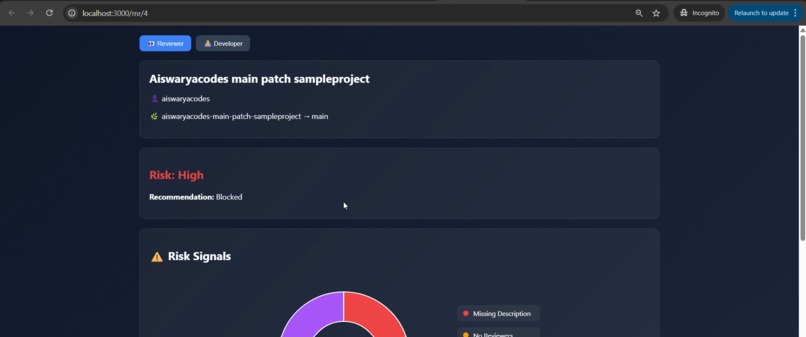

Reviewer Mode: Visual risk analysis with intuitive charts, enabling reviewers to instantly understand PR risk and make confident decisions.

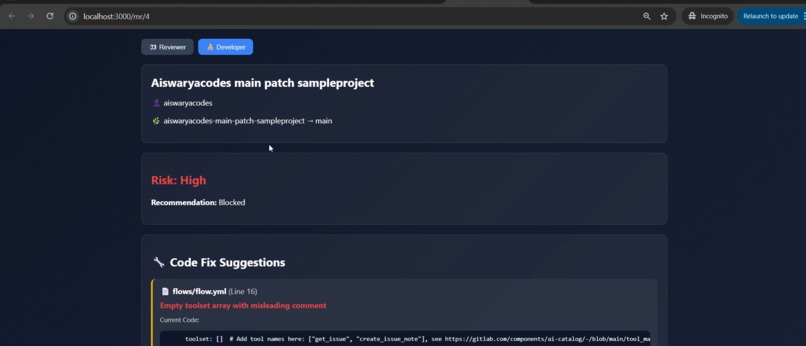

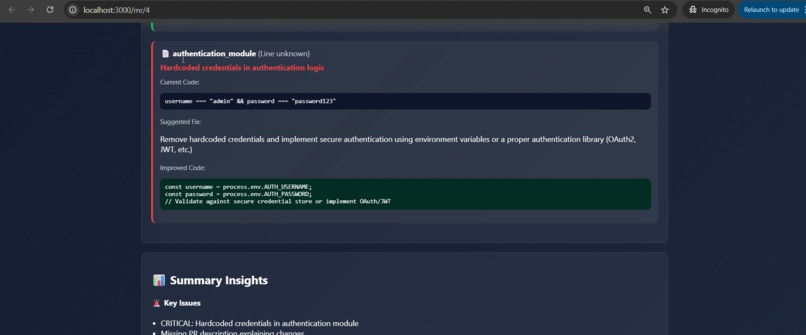

From code to action: Developers get precise, line-level fixes instead of scanning entire PRs.

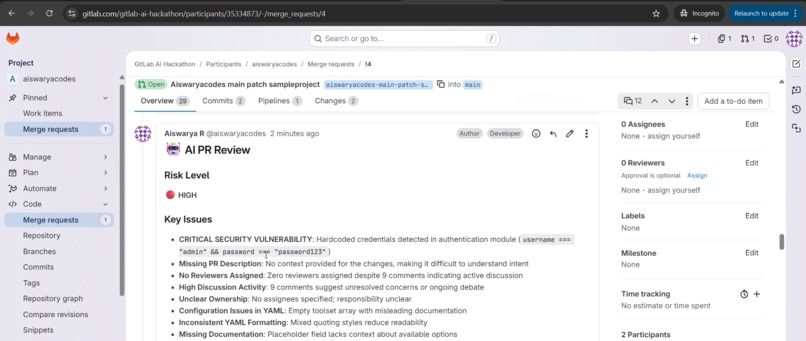

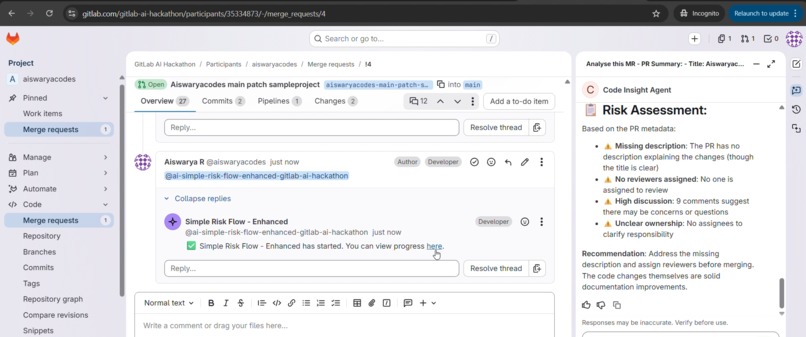

AI PR Review Comment: Automated MR analysis posted directly in GitLab, including risk level, key issues, and actionable recommendations.

Agent Workflow Trigger: Multi-agent pipeline initiated, orchestrating context extraction, risk analysis, and code insights generation.

Dev Mode: AI-generated code insights with line-level issues and actionable fixes, helping developers quickly identify and resolve problems.

Inspiration

- Code reviews today are supported by many AI tools, from PR summarizers to security scanners.

- But even with AI, developers and reviewers still struggle with clarity.

- AI generates a lot of information, but that data is often complex, scattered, and hard to interpret.

- Reviewers still need to read through large changes, and developers often don’t know what to prioritize or fix first.

- We set out to close this gap: "Not what changed, but what matters."

What it does

- Code2Confidence is an AI-powered PR intelligence pipeline that transforms code reviews into a visual, decision-ready experience.

- When a Merge Request is opened or edited, the flow is triggered

- Analyzes merge requests using multiple AI agents

- Evaluates risk level and engineering impact

- Detects code-level issues with line numbers and fixes

- Converts complex insights into intuitive visual dashboards

- Provides two focused modes:
  - Reviewer Mode: risk, signals, and merge-decision visualization
  - Developer Mode: exact issues and actionable fixes

- Instead of reading everything manually:
  - Reviewers see risk instantly
  - Developers know exactly what to fix
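The Risk Analysis Agent is an AI agent, so its judgment is not a fixed formula. Still, the idea of collapsing several engineering signals into one reviewer-facing risk level can be sketched in a few lines. In this sketch the MR summary shape (`changedFiles`, `linesChanged`), the signal names, the weights, and the thresholds are all hypothetical.

```javascript
// Hypothetical weights per signal (illustrative only; the real Risk Analysis Agent is an LLM agent).
const SIGNAL_WEIGHTS = {
  touchesAuth: 3,
  touchesConfig: 2,
  missingTests: 2,
  largeDiff: 1,
};

// Derive boolean signals from a simplified, hypothetical MR summary object using crude path heuristics.
function deriveSignals(mr) {
  const paths = mr.changedFiles;
  return {
    touchesAuth: paths.some((p) => /auth|login|session/i.test(p)),
    touchesConfig: paths.some((p) => /\.(ya?ml|env|json)$/i.test(p)),
    missingTests: !paths.some((p) => /test|spec/i.test(p)),
    largeDiff: mr.linesChanged > 400,
  };
}

// Sum the weights of the active signals and map the score to a label (thresholds are assumptions).
function riskLevel(signals) {
  let score = 0;
  for (const [name, weight] of Object.entries(SIGNAL_WEIGHTS)) {
    if (signals[name]) score += weight;
  }
  if (score >= 5) return { score, level: "high" };
  if (score >= 2) return { score, level: "medium" };
  return { score, level: "low" };
}

const mr = { changedFiles: ["src/auth/login.js"], linesChanged: 50 };
console.log(riskLevel(deriveSignals(mr))); // { score: 5, level: 'high' }
```

Keeping the score next to the label lets a dashboard show both the headline risk and how close it sits to a threshold.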

How we built it

We designed an end-to-end multi-agent pipeline integrated into the GitLab workflow:

- A custom flow triggered on Merge Request open and edit events

- Context Agent - Extracts full merge request details and diffs

- Parser Agent - Converts raw data into structured signals

- Risk Analysis Agent - Evaluates PR risk using engineering and contextual signals

- Code Insight Agent - Detects line-level issues and suggests fixes

- Final Review Agent - Combines all insights into:
  - A human-readable review
  - Structured JSON output
  - An MR comment with a dashboard link

- Visualization Layer - A frontend dashboard that transforms the structured data into:
  - Risk charts
  - Security insights
  - Developer-focused code issues and fix suggestions
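The orchestration described above, where each agent consumes the earlier agents' structured output, can be sketched as a sequential chain over a shared state object. This is a hypothetical stand-in, not the GitLab Duo flow definition: the stage functions are stubs, and `runPipeline` and the state field names are illustrative assumptions.

```javascript
// Each stage receives the accumulated state and returns a partial object that is merged in.
// These stages are hypothetical stubs standing in for the GitLab Duo agents.
const stages = [
  ["context", async (s) => ({ context: { iid: s.iid, files: ["src/auth/login.js"] } })],
  ["parser", async (s) => ({ signals: { fileCount: s.context.files.length } })],
  ["risk", async (s) => ({ risk: { level: s.signals.fileCount > 10 ? "high" : "low" } })],
  [
    "codeInsight",
    async (s) => ({
      issues: s.context.files.map((file) => ({ file, line: 1, message: "example issue" })),
    }),
  ],
  ["finalReview", async (s) => ({ review: { risk: s.risk.level, issueCount: s.issues.length } })],
];

// Run the stages in order, failing fast if any stage breaks the structured-output contract.
async function runPipeline(pipelineStages, initial) {
  let state = { ...initial };
  for (const [name, run] of pipelineStages) {
    const output = await run(state);
    if (output === null || typeof output !== "object" || Array.isArray(output)) {
      throw new Error(`Stage "${name}" must return an object`);
    }
    state = { ...state, ...output };
  }
  return state;
}

runPipeline(stages, { iid: 17 }).then((state) => console.log(state.review));
// { risk: 'low', issueCount: 1 }
```

Failing fast on a malformed stage output keeps a bad agent response from silently reaching the dashboard.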

Challenges we ran into

Converting AI insights into meaningful visuals: Raw AI outputs were complex, and designing a UI that clearly communicates risk and actions took multiple iterations.

Maintaining structured data across agents: Ensuring consistent JSON output across multiple agents was tricky but critical for visualization.
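One way to keep agent output consistent is to validate each agent's raw text against a small contract before handing it to the next stage. The minimal sketch below assumes a hypothetical contract with a `risk_level` field and an `issues` array of `{ file, line, message }` objects; it is a hand-rolled check rather than a full JSON Schema validator.

```javascript
// Hypothetical output contract for an agent that reports risk and line-level issues.
const RISK_LEVELS = new Set(["low", "medium", "high"]);

function isPlainObject(value) {
  return value !== null && typeof value === "object" && !Array.isArray(value);
}

// Parse an agent's raw text output and check it against the contract before passing it on.
function parseAgentOutput(text) {
  let data;
  try {
    data = JSON.parse(text);
  } catch (err) {
    return { ok: false, errors: [`invalid JSON: ${err.message}`] };
  }
  if (!isPlainObject(data)) {
    return { ok: false, errors: ["top-level value must be an object"] };
  }
  const errors = [];
  if (!RISK_LEVELS.has(data.risk_level)) {
    errors.push("risk_level must be one of low, medium, high");
  }
  if (!Array.isArray(data.issues)) {
    errors.push("issues must be an array");
  } else {
    data.issues.forEach((issue, i) => {
      if (!isPlainObject(issue)) {
        errors.push(`issues[${i}] must be an object`);
        return;
      }
      if (typeof issue.file !== "string") errors.push(`issues[${i}].file must be a string`);
      if (!Number.isInteger(issue.line) || issue.line < 1) {
        errors.push(`issues[${i}].line must be a positive integer`);
      }
      if (typeof issue.message !== "string") errors.push(`issues[${i}].message must be a string`);
    });
  }
  return errors.length > 0 ? { ok: false, errors } : { ok: true, data };
}

// A string line number is rejected, so the dashboard never receives an ambiguous location.
console.log(parseAgentOutput('{"risk_level":"high","issues":[{"file":"app.js","line":"12","message":"x"}]}'));
```

Returning every error at once, instead of stopping at the first, makes it easier to tune an agent's prompt when its output drifts from the expected shape.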

Lack of webhook privileges: We couldn’t automate full CI/CD integration, so we adapted by:

- Posting structured output in MR comments

- Manually feeding dashboard data (for now)
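Because the structured output travels through MR comments and is copied into the dashboard by hand, it helps to embed the JSON in a way that can be recovered mechanically. This minimal sketch hides the payload in an HTML comment under a hypothetical `code2confidence:data` marker; it assumes the Markdown renderer hides HTML comments, which is worth confirming for your GitLab instance.

```javascript
// Hypothetical marker; assumes the MR comment renderer hides HTML comments.
const START = "<!-- code2confidence:data";
const END = "-->";

// Build an MR comment: human-readable summary plus a machine-readable JSON payload.
function buildComment(summary, data) {
  // ">" can only occur inside JSON strings, so escaping it as \u003e keeps the payload
  // from closing the HTML comment early; JSON.parse turns it back into ">".
  const payload = JSON.stringify(data).replace(/>/g, "\\u003e");
  return `${summary}\n\n${START}\n${payload}\n${END}\n`;
}

// Recover the payload from a comment body (e.g. text copied from the MR into the dashboard).
function extractData(commentBody) {
  const start = commentBody.indexOf(START);
  if (start === -1) return null;
  const end = commentBody.indexOf(END, start + START.length);
  if (end === -1) return null;
  return JSON.parse(commentBody.slice(start + START.length, end));
}

const body = buildComment("Risk level: high", { risk_level: "high", issues: [] });
console.log(extractData(body)); // { risk_level: 'high', issues: [] }
```

The same `extractData` step could later run inside a CI job instead of by hand, once pipeline access is available.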

Balancing automation vs. control: We intentionally avoided auto-fixing code to maintain developer ownership and trust.

Accomplishments that we're proud of

- Built a complete multi-agent pipeline, not just a single AI feature

- Delivered line-level issue detection with suggested fixes

- Created a dual-mode UI (Reviewer + Developer) for different roles

- Successfully transformed complex Merge Request data into clear visual insights

- Integrated the system directly into real Merge Request workflows

What we learned

GitLab Duo AI enables rapid prototyping of intelligent workflows: Using GitLab’s agent ecosystem, we were able to quickly build a multi-agent pipeline without worrying about the underlying model management.

Agents are powerful, but orchestration is the real challenge: Our biggest lesson came less from building the agents than from designing how they pass structured data reliably across the pipeline.

Structured outputs are critical for multi-agent systems: We learned that consistent JSON schemas are essential when chaining agents, especially for powering downstream visualizations.

GitLab MR context tools provide rich but complex data: Tools like build_review_merge_request_context give deep insights but require careful parsing and filtering to extract meaningful signals.
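Line-level findings depend on knowing which new-file line each changed line lands on. As a minimal sketch, assuming a single file's diff arrives in standard unified diff format (hunk headers like `@@ -12,7 +12,9 @@`), the added lines and their new-file line numbers can be recovered as follows. `addedLines` is a hypothetical helper, not part of the build_review_merge_request_context tool.

```javascript
// Matches hunk headers like "@@ -12,7 +12,9 @@ optional section"; captures the new-file start line.
const HUNK_HEADER = /^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@/;

// Return [{ line, text }] for every added line in a single file's unified diff.
function addedLines(diffText) {
  const result = [];
  let newLine = null; // new-file line number of the next context or added line
  for (const raw of diffText.split("\n")) {
    const header = HUNK_HEADER.exec(raw);
    if (header) {
      newLine = Number(header[1]);
      continue;
    }
    if (newLine === null) continue; // skip "---"/"+++" file headers before the first hunk
    if (raw.startsWith("+")) {
      result.push({ line: newLine, text: raw.slice(1) });
      newLine += 1;
    } else if (!raw.startsWith("-") && !raw.startsWith("\\")) {
      // Context line: present in both files, so it advances the new-file counter.
      // Removed lines ("-") and "\ No newline at end of file" markers do not.
      newLine += 1;
    }
  }
  return result;
}

const diff = "@@ -1,3 +1,4 @@\n const a = 1;\n-const b = 2;\n+const b = 3;\n+const c = 4;\n console.log(a);\n";
console.log(addedLines(diff)); // logs new-file lines 2 and 3 with their text
```

Filtering an agent's reported issues to these line numbers is one way to keep comments focused on what the MR actually changed.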

Visualization bridges the gap between AI output and human understanding: Raw AI insights are often too dense; transforming them into visual signals made the system significantly more usable.
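As an illustration of turning one structured review into the two modes, the sketch below derives a reviewer view (risk level plus issue counts per severity, suitable for a chart) and a developer view (issues grouped by file and sorted by line). The review shape and the severity names are assumptions, not the project's actual schema.

```javascript
// Hypothetical review shape: { risk_level, issues: [{ file, line, severity, message, fix }] }.
const SEVERITIES = ["critical", "major", "minor"];

// Reviewer view: overall risk plus issue counts per severity, ready for a bar or donut chart.
function reviewerView(review) {
  const bySeverity = Object.fromEntries(SEVERITIES.map((s) => [s, 0]));
  for (const issue of review.issues) {
    if (SEVERITIES.includes(issue.severity)) bySeverity[issue.severity] += 1;
  }
  return { riskLevel: review.risk_level, bySeverity };
}

// Developer view: issues grouped by file and sorted by line, so each fix can be located directly.
function developerView(review) {
  const byFile = new Map();
  for (const { file, line, message, fix } of review.issues) {
    if (!byFile.has(file)) byFile.set(file, []);
    byFile.get(file).push({ line, message, fix });
  }
  for (const list of byFile.values()) list.sort((a, b) => a.line - b.line);
  return Object.fromEntries(byFile);
}

const review = {
  risk_level: "medium",
  issues: [
    { file: "src/api.js", line: 40, severity: "major", message: "Missing error handling", fix: "Wrap the call in try/catch" },
    { file: "src/api.js", line: 12, severity: "minor", message: "Unused variable", fix: "Remove it" },
  ],
};
console.log(reviewerView(review).bySeverity); // { critical: 0, major: 1, minor: 1 }
```

Deriving both views from the same structured review keeps the two modes consistent with each other.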

Duo AI abstracts model complexity, letting us focus on product thinking: Instead of deciding which model to use, we focused on the real problem of improving clarity in code reviews.

Constraints drive creativity: Not having webhook or full automation access pushed us to design alternative flows using MR comments and manual data transfer.

Teamwork: Dividing the work by agent helped us parallelize effectively.

What's next for Code2Confidence

- Automate JSON persistence via CI/CD pipelines

- Enable real-time dashboard updates

- Add historical tracking of PR risk and trends

- Improve code insight accuracy and coverage

- Integrate with GitHub and other platforms

- Introduce team-level analytics and insights

Built With

- agent

- css

- express.js

- flow

- gitlab

- gitlabduoapi

- html5

- javascript

- json

- node.js