Inspiration

Meetings are multimodal, but most tools only capture text. We wanted to build a copilot that can watch the screen, listen to the conversation, verify claims, and produce a trustworthy recap.

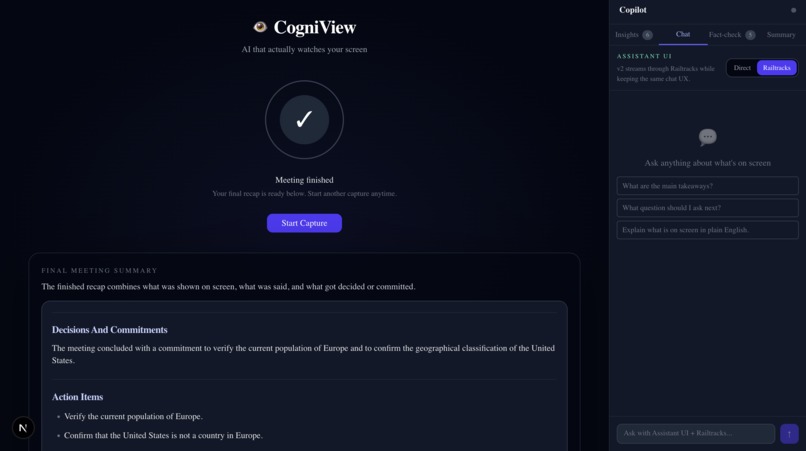

What it does

CogniView is an autonomous meeting copilot for presentations and screen shares. It understands on-screen content, transcribes audio, supports live copilot chat, fact-checks claims in context, generates to-dos, and produces a final summary after the meeting.

How we built it

We used Lovable to build CogniView, with Next.js and TypeScript for the app and Python with Railtracks for the agent workflows. Railtracks orchestrates the fact-checking and final-summary flows, Assistant UI powers the live chat, and Gemini and OpenAI models handle transcription and reasoning.

Challenges we ran into

The hardest parts were real-time audio capture, keeping fact-checking fast enough for live use, reducing repeated insights, and making the final summary include what was shown, said, and verified.
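To illustrate the repeated-insights problem, here is a minimal sketch of one way to filter near-duplicate insights before they reach the chat. It is not our production code: the `InsightDeduper` class, the token-overlap (Jaccard) measure, and the `threshold` and `window` values are all assumptions chosen for illustration.

```python
from __future__ import annotations

import re
from collections import deque


def _tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Token-set overlap: |a & b| / |a | b| (1.0 when both sets are empty)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


class InsightDeduper:
    """Hypothetical helper that rejects insights too similar to recent ones."""

    def __init__(self, threshold: float = 0.6, window: int = 50) -> None:
        # threshold and window are illustrative defaults, not tuned values.
        self.threshold = threshold
        self._recent: deque[set[str]] = deque(maxlen=window)

    def accept(self, insight: str) -> bool:
        """Return True and remember the insight if it is sufficiently new."""
        tokens = _tokens(insight)
        if any(jaccard(tokens, seen) >= self.threshold for seen in self._recent):
            return False
        self._recent.append(tokens)
        return True


if __name__ == "__main__":
    deduper = InsightDeduper()
    for text in [
        "Revenue grew 20% in Q3",
        "Q3 revenue grew 20%",
        "The launch date moved to March",
    ]:
        print(deduper.accept(text), text)
```

Token overlap is cheap enough to run on every candidate insight during a live meeting, but it misses paraphrases that share few words. An embedding-based similarity check would catch more of those, at a higher latency cost.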

Accomplishments that we're proud of

We’re proud that CogniView goes beyond transcription. It combines live screen understanding, speech understanding, fact-checking, and summary generation into one workflow, with Railtracks powering the most agentic parts of the system.

What we learned

We learned that strong AI products depend on orchestration, validation, and UX just as much as model quality. Real-time agents become much more useful when they are grounded in live context.

What's next for CogniView

Next, we want to improve automatic fact-check triggering, make background verification even faster, and strengthen the final summary with clearer follow-ups, commitments, and cross-meeting learning.
