Inspiration

We wanted to build a system that could give visually impaired individuals or anyone with face blindness the ability to identify people around them in real time, knowing not just who someone is, but when and where they were last seen.

What it does

FaceScan is a real-time face-recognition and audio-memory system. It detects faces through a camera, identifies known individuals, and speaks their name along with the date and location they were last seen. For new faces, it records their name through a microphone and stores it permanently. Every person is remembered across sessions.

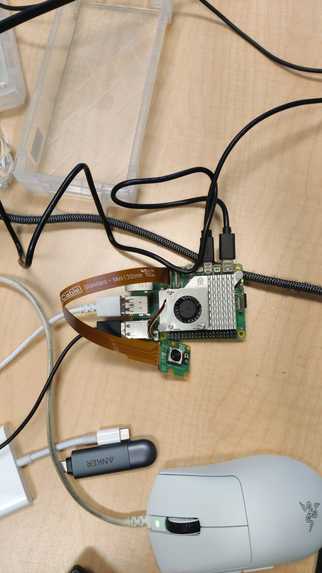

How we built it

- OpenCV LBPH for fast local face recognition
- AWS Rekognition as a high-accuracy backup for uncertain matches, running all comparisons in parallel for speed
- AWS Comprehend to extract just the name from natural speech (strips filler words like "hey" or "my name is")
- Google STT for speech-to-text transcription
- ElevenLabs TTS for natural, human-sounding voice output
- SQLite for persistent memory of all persons, locations, and timestamps
- pygame for cross-platform audio playback and beep tones
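As a rough illustration of the SQLite memory layer, here is a minimal sketch of how a "last seen" record could be stored and read back. The table name `persons`, its columns, and the helper names `open_memory`, `record_sighting`, and `last_seen` are assumptions made for this example, not the project's actual schema.

```python
import sqlite3
from datetime import datetime, timezone


def open_memory(path=":memory:"):
    """Open (or create) the database. The schema here is hypothetical."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS persons (
               name      TEXT PRIMARY KEY,
               last_seen TEXT NOT NULL,
               location  TEXT NOT NULL
           )"""
    )
    return conn


def record_sighting(conn, name, location, when=None):
    """Insert a new person or overwrite their last-seen time and place."""
    when = when or datetime.now(timezone.utc)
    conn.execute(
        "INSERT OR REPLACE INTO persons (name, last_seen, location) "
        "VALUES (?, ?, ?)",
        (name, when.isoformat(), location),
    )
    conn.commit()


def last_seen(conn, name):
    """Return (timestamp, location) for a known person, or None if unknown."""
    return conn.execute(
        "SELECT last_seen, location FROM persons WHERE name = ?", (name,)
    ).fetchone()
```

Passing a file path instead of `":memory:"` is what would let the records survive a restart; the in-memory default only keeps the sketch easy to try out.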

Challenges we ran into

- LBPH confidence thresholds needed careful tuning to avoid misidentifying returning faces as new people
- Sequential AWS Rekognition comparisons caused 10–15 second delays; we solved this by running all comparisons in parallel with ThreadPoolExecutor
- macOS quarantine flags on downloaded files made the SQLite database read-only
- ElevenLabs TTS playback originally relied on afplay (Mac-only), which had to be replaced with pygame.mixer for cross-platform support
- Cooldown logic needed to persist across restarts so the same person isn't announced repeatedly
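The move from sequential to parallel comparisons can be sketched roughly as follows. This is not the project's code: `best_match` is a hypothetical helper, and `compare` stands in for whatever function wraps the cloud comparison call and returns a similarity score.

```python
from concurrent.futures import ThreadPoolExecutor


def best_match(probe, known_faces, compare, max_workers=8):
    """Compare `probe` against every known face concurrently.

    `known_faces` maps a name to a reference image; `compare(probe, ref)`
    is an assumed callable returning a similarity score (higher is better).
    Returns (name, score) for the best match, or (None, 0.0) if empty.
    """
    if not known_faces:
        return None, 0.0
    names = list(known_faces)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so scores line up with names.
        scores = list(pool.map(lambda n: compare(probe, known_faces[n]), names))
    best = max(range(len(names)), key=scores.__getitem__)
    return names[best], scores[best]
```

Because each comparison is a network-bound call, threads spend most of their time waiting, so total latency tends toward that of the slowest single comparison rather than the sum of all of them.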

Accomplishments that we're proud of

- Sub-2-second face identification after initial training
- Fully cross-platform (Mac and Windows) with the same codebase
- Natural, human-sounding voice responses via ElevenLabs
- Persistent memory that survives restarts, so the system truly "remembers" people

What we learned

- Combining a fast local model (LBPH) with a cloud fallback (AWS) gives the best balance of speed and accuracy
- Parallel API calls dramatically reduce latency in multi-person scenarios
- An audio pipeline on different OSes requires abstraction to work reliably
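The local-first, cloud-fallback idea could look something like the sketch below. The function `identify`, the callables `local_predict` and `cloud_identify`, and the threshold value of 60.0 are all assumptions for illustration. The one library fact relied on is that OpenCV's LBPH recognizer reports confidence as a distance, so lower values mean a closer match.

```python
def identify(face, local_predict, cloud_identify, max_distance=60.0):
    """Try the fast local model first; fall back to the cloud if unsure.

    `local_predict(face)` is assumed to return (name_or_None, distance),
    where a lower distance means a closer match.
    `cloud_identify(face)` is assumed to return a name or None.
    `max_distance` is a placeholder threshold, not a tuned value.
    """
    name, distance = local_predict(face)
    if name is not None and distance <= max_distance:
        return name, "local"
    # Local match was missing or too uncertain: ask the slower cloud path.
    name = cloud_identify(face)
    if name is not None:
        return name, "cloud"
    return None, "unknown"
```

Keeping both recognizers behind plain callables means the threshold can be tuned, or either backend swapped, without touching the decision logic.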

What's next for FaceScan

- Mobile app version using the phone camera
- Emotion detection to add context ("Jasnoor looks happy today")
- Multi-camera support for wider environments
- A web dashboard to manage known persons and view history

Built With

- amazon-web-services

- elevenlabs

- geocoder

- opencv

- pygame

- python

- raspberrypi
