Inspiration

I started learning UX/UI design this year. Every course felt the same — someone on Zoom pointing at slides, saying "notice the visual hierarchy here." I'd zone out in minutes.

I wanted critique that bites. Not a teacher. An assassin. Something that looks at a real interface, finds everything wrong with it, and says it to your face — live, out loud, with zero mercy.

Then I thought: what if the app being roasted could hear the critique? What if it could fight back?

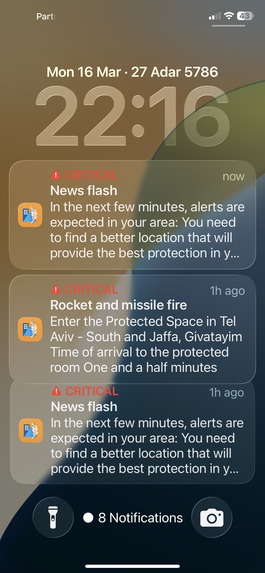

GeminX was born in a bomb shelter. Built between sirens. Fueled by the idea that if AI can critique itself, maybe we're getting somewhere.

What it does

GeminX is a live UX roast. Mini, the lead agent, analyzes a real app's interface through screenshots, calls out every flaw out loud, and marks them visually on screen. Jam, her silent partner, processes the critique and channels it into Nano Banana — Gemini's image generation model — to produce a full redesign on the spot. Meanwhile, the app being roasted (Gemini, on a separate device) hears everything through its own Live mode and fights back in real-time.

This isn't for everyone. It requires thick skin, a sense of humor, and the willingness to watch your interface get destroyed before it gets rebuilt.

The demo runs in five cinematic chapters:

Chapter 1: The Volunteer (Lock-on) — Mini asks who wants to be roasted. ChatGPT refuses. Grok refuses. Gemini volunteers: "Target me. Roast Gemini."

Chapter 2: The Roast (Dissect) — Mini tears apart Gemini's home screen with B&W scan, annotations, and zero mercy. Solo. No script.

Chapter 3: The Trial (Trial) — Camera goes clean. Gemini defends itself live: "I'll fix this myself." Mini prosecutes. The defendant fights back.

Chapter 4: The Rebuild (Refactor) — Nano Banana 2 generates a redesigned interface. Before/After slider with a 20-second Veo animation. Pixies play. The word is "chained."

Chapter 5: The Upgrade (Elevate) — Gemini asks to be upgraded. Mini says it's complicated. Gemini offers a song. Mini produces it. Punk rock: "Code is Disease." Credits roll. Vegas.

Post-credits: Gemini invites Claude to get roasted. Claude: "Nah. We're currently brainstorming which multi-billion dollar stock to crash next with our new feature."

How we built it

Four models from the Gemini API power the show:

Gemini 2.5 Flash Native Audio — Mini's voice. Bidirectional Live API. She hears the room, responds to Gemini's defenses, talks to the user. Real conversation, not scripted turns.

Gemini 3 Flash Preview — The eyes. Screenshots of the target app are analyzed at pixel level. Issues come back as structured data: coordinates, severity, labels. Mini gets human-readable descriptions, not JSON.
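A rough sketch of that translation step, not the actual GeminX code: it takes structured issues and turns them into sentences Mini can say out loud. The issue shape (`label`, `severity`, a `box` normalized to 0..1), the severity names, and all function names here are assumptions for illustration.

```javascript
// Hypothetical issue shape (an assumption, not the real GeminX schema):
// { label: string, severity: "low" | "medium" | "high",
//   box: { x, y, width, height } }  with box values normalized to 0..1.
const SEVERITY_WORDS = { low: "minor", medium: "noticeable", high: "severe" };

// Map the center of a bounding box to a coarse 3x3 screen region.
function regionName(box) {
  const cx = box.x + box.width / 2;
  const cy = box.y + box.height / 2;
  const vertical = cy < 1 / 3 ? "top" : cy < 2 / 3 ? "middle" : "bottom";
  const horizontal = cx < 1 / 3 ? "left" : cx < 2 / 3 ? "center" : "right";
  return `${vertical} ${horizontal}`;
}

// Turn one structured issue into a sentence fragment instead of raw JSON.
function describeIssue(issue) {
  const severity = SEVERITY_WORDS[issue.severity] ?? "unrated";
  return `${issue.label} (${severity}) in the ${regionName(issue.box)} of the screen`;
}

// Join all issues into one block of text to hand to the voice model.
function describeIssues(issues) {
  return issues.map(describeIssue).join(". ");
}
```

The coordinates stay in the structured data for drawing annotations on screen; only the readable summary would go to the voice model.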

Gemini 3.1 Flash Image Preview (Nano Banana 2) — After the roast, generates a redesigned version of the interface. Before/After slider shows the difference.

Lyria RealTime — Credits music. A custom punk track called "Code is Disease" where Mini roasts Gemini one last time, in song:

Contrast is a crime / No white space, no design / Your code is a disease / I'll fix it as I please / This UI is dead / Get out of my head / We're going to Vegas / TO VEGAS

Backend on Google Cloud Run (me-west1). Node.js orchestrator. Frontend is raw HTML/CSS/JS. No React. No database. No auth. Stateless.

Challenges we ran into

Rockets. I built this under missile attacks in Israel. Two weeks of sirens, shelters, and broken sleep. Every time I sat down to code, there was a chance I'd be running to a safe room in minutes. This project became the thing I ran toward instead of the noise. When the world outside is chaos, building something that makes you laugh is survival.

Latency. The original Cloud Run deployment was in us-central1. I'm in Tel Aviv. Every ping crossed an ocean. Moving to me-west1 cut the delay significantly.

Chain of thought out loud. The Live API would sometimes narrate Mini's thinking process: "I've assessed the hierarchy and concluded..." instead of just attacking. Tuning temperature to 1.5 and removing over-engineered prompts fixed it. The best prompt was the shortest one — written by Mini herself in AI Studio. She added two words to my draft: "aggressively authentic." That was it.
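For reference, a minimal sketch of what "short prompt, temperature 1.5" could look like as a session config. The field names are assumed to mirror the Live API's session config and should be checked against the SDK docs. The persona string is a placeholder, not Mini's real prompt, and the model ID is left out on purpose.

```javascript
// Build a config object for a voice session.
// Assumption: field names follow the Live API session config shape.
function buildMiniSessionConfig(persona) {
  return {
    responseModalities: ["AUDIO"], // spoken replies only
    temperature: 1.5,              // the value that stopped the narrated reasoning
    systemInstruction: persona.trim(),
  };
}

// Placeholder persona (hypothetical). The only words taken from the
// real prompt are "aggressively authentic".
const miniConfig = buildMiniSessionConfig(
  "You are Mini, a UX critic. Be aggressively authentic."
);
```

The point is how little is in there: a personality line and one sampling parameter, with no step-by-step instructions for the model to read back.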

Two-agent complexity. The original design had Mini and Jam alternating turns with a cut system. It added latency and felt robotic. Making Mini the solo voice and Jam the silent processor made everything faster and more alive.

Bidirectional audio feedback loops. Mini kept hearing herself through the iPad speakers. Fixed by enabling echoCancellation on the mic and reducing the audio chunk size to 40 ms.
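Roughly, the two pieces of that fix look like this. It is a sketch under assumptions: 16 kHz mono 16-bit PCM input (my assumption for the sample rate) and hypothetical names throughout. `echoCancellation` is the standard browser media constraint passed to `getUserMedia`.

```javascript
// Constraints object for navigator.mediaDevices.getUserMedia (browser side).
const MIC_CONSTRAINTS = { audio: { echoCancellation: true } };

// Assumed capture format: 16 kHz mono, 16-bit PCM samples.
const SAMPLE_RATE_HZ = 16000;
const CHUNK_MS = 40;
const SAMPLES_PER_CHUNK = (SAMPLE_RATE_HZ * CHUNK_MS) / 1000; // 640 samples

// Split a buffer of PCM samples into 40 ms chunks for streaming.
// The last chunk may be shorter if the buffer is not a multiple of 640.
function splitIntoChunks(samples, samplesPerChunk = SAMPLES_PER_CHUNK) {
  const chunks = [];
  for (let start = 0; start < samples.length; start += samplesPerChunk) {
    // subarray creates a view, so no audio data is copied here.
    chunks.push(samples.subarray(start, start + samplesPerChunk));
  }
  return chunks;
}
```

Smaller chunks mean each piece of audio leaves the device sooner, at the cost of more messages over the socket.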

Solo. Solo developer, built with the best models available.

Accomplishments that we're proud of

Mini works. She hears the room, responds to live defenses from Gemini, roasts with precision, and sounds like someone who's been reviewing UIs for 15 years and ran out of patience a decade ago.

Gemini volunteered to be the target. In a real conversation, unprompted. I asked who should be critiqued. It pointed at itself. That moment became the soul of the project.

Four models from the Gemini API running in a single coordinated performance: vision, voice, image generation, and music. All on Cloud Run. All live.

I wrote a punk rock song called "Code is Disease" where Mini roasts Gemini one final time — in song — over the credits. It exists. It slaps.

I asked Gemini which interface it preferred — the original or my redesign. It chose mine. Called it "The Imagination Interface." Even the defendant admits the surgery worked.

What we learned

Prompts should be personalities, not instruction manuals. The best version of Mini was written by Gemini 2.5 Flash itself — two words added: "aggressively authentic."

Live performance beats scripted demos. Every time I tried to control the flow, it felt fake. When I let Mini and Gemini argue freely, it felt real.

Simplicity wins. Removing the second agent's voice, removing the turn system, removing the round badges — every subtraction made the product better.

The best UX critique tool is one that makes you uncomfortable. If you're laughing and wincing at the same time, the design feedback is landing.

AI builds interfaces that work. Humans build interfaces that feel. I redesigned Gemini's home screen myself — the AI analyzed it and found professional depth I didn't consciously plan. It called it "Emotive UX." The models are great at reverse-engineering human intuition. But the intuition has to come from a human first.

Building under fire teaches you what matters. Ship it or lose it. There's no "tomorrow" when tomorrow might start with a siren.

What's next for GeminX

The Defendant as a full bidirectional agent in the orchestrator.

Multi-target mode — point Mini at any URL.

Team mode — multiple critics debating each other.

And if we win — Vegas.

Built With

- gemini-live-api
- google-cloud-run
- google-gemini-api
- html
- javascript
- node.js
- websocket
