Inspiration

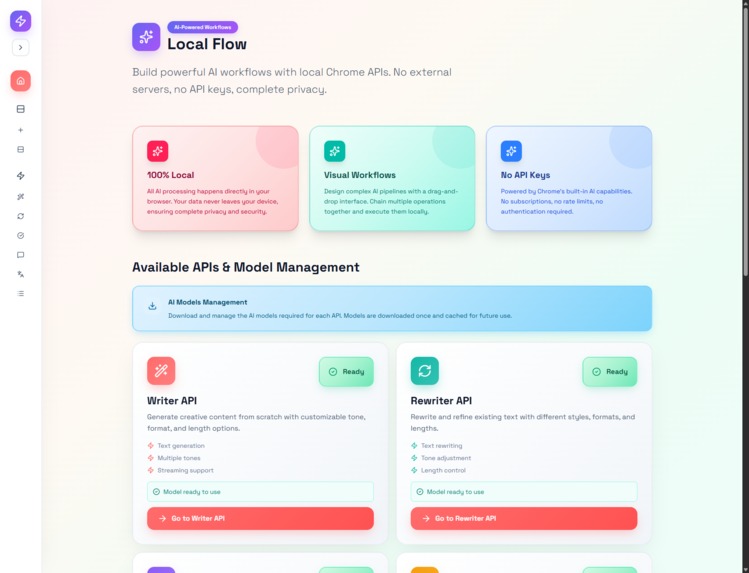

LocalFlow was born from the idea of building smart AI workflows that run entirely inside Google Chrome. Most AI tools depend on servers and API keys, so we wanted to prove that automation could be private, fast, and local using Chrome’s built-in Gemini APIs.

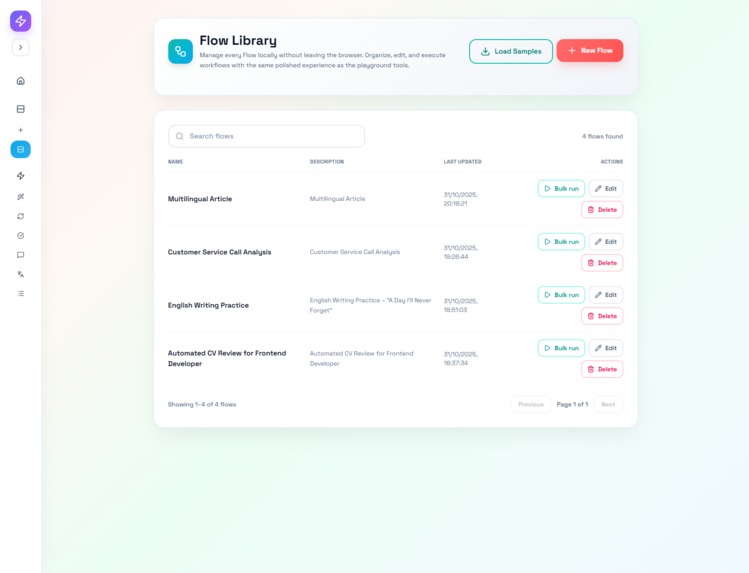

What it does

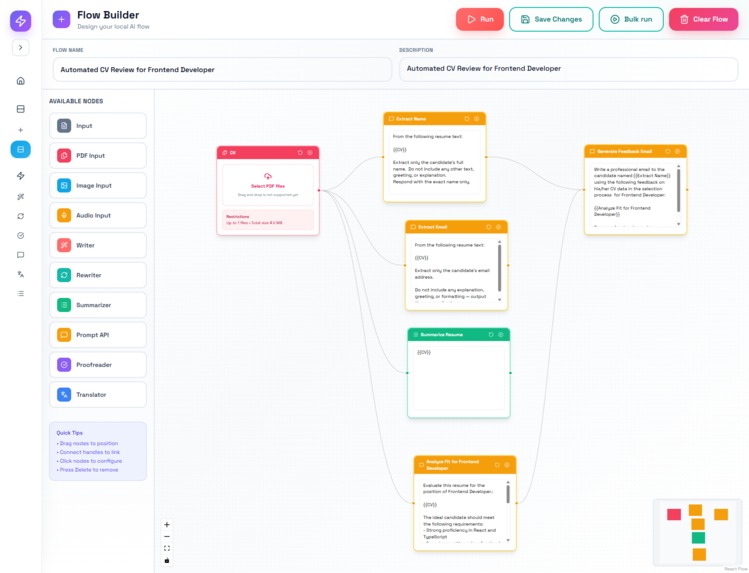

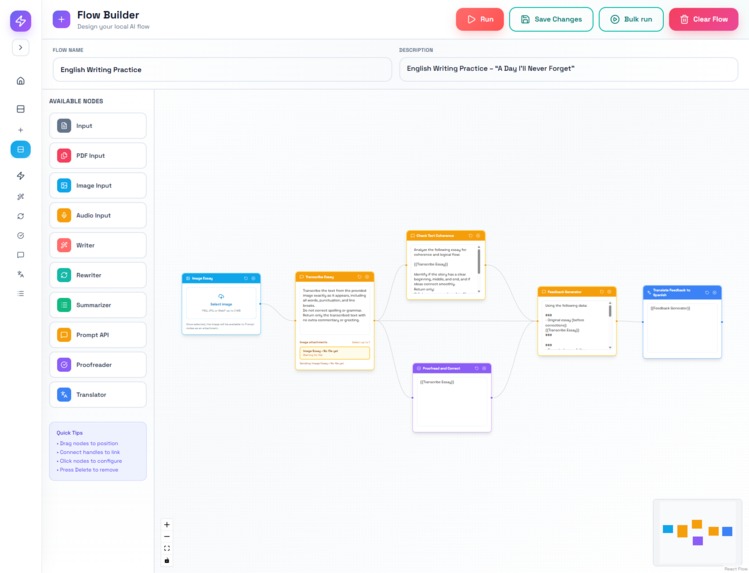

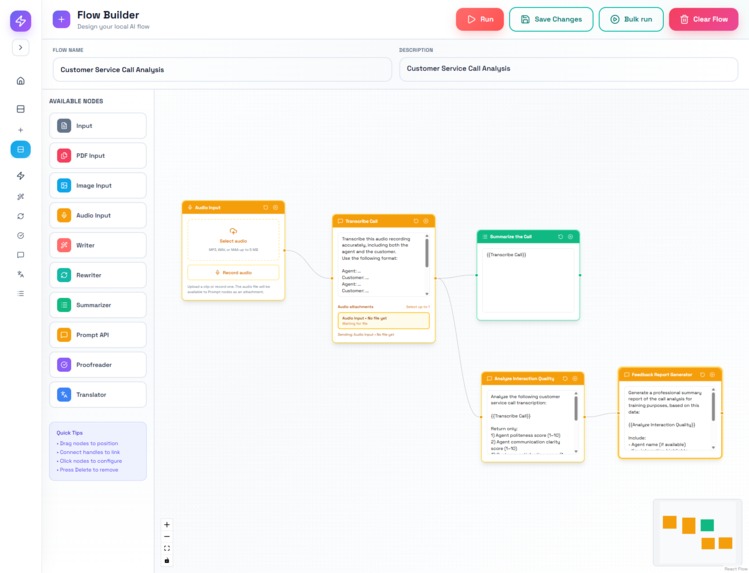

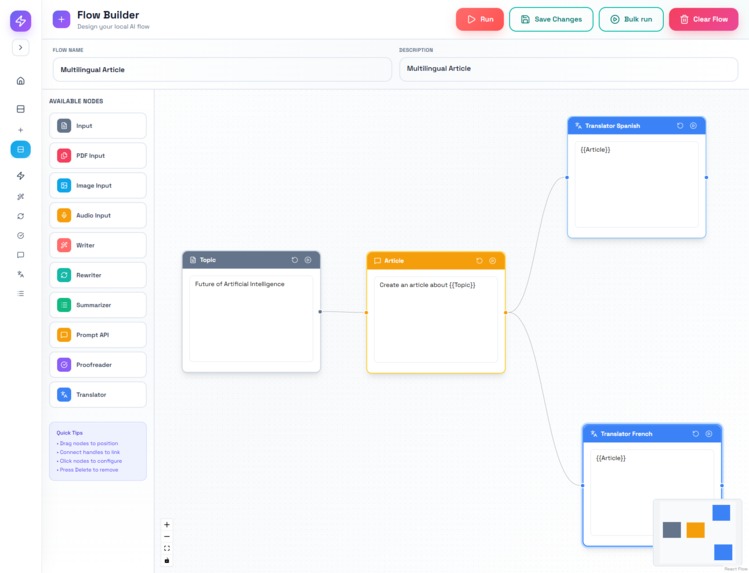

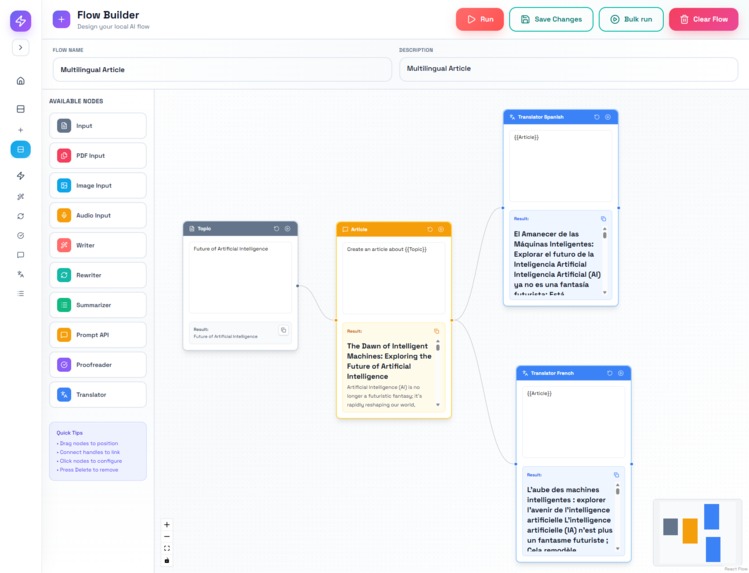

LocalFlow is a visual builder for AI workflows inside Google Chrome. Each node represents an action powered by Chrome’s built-in Gemini APIs — for example, analyzing text, summarizing content, proofreading documents, translating results, or generating feedback.

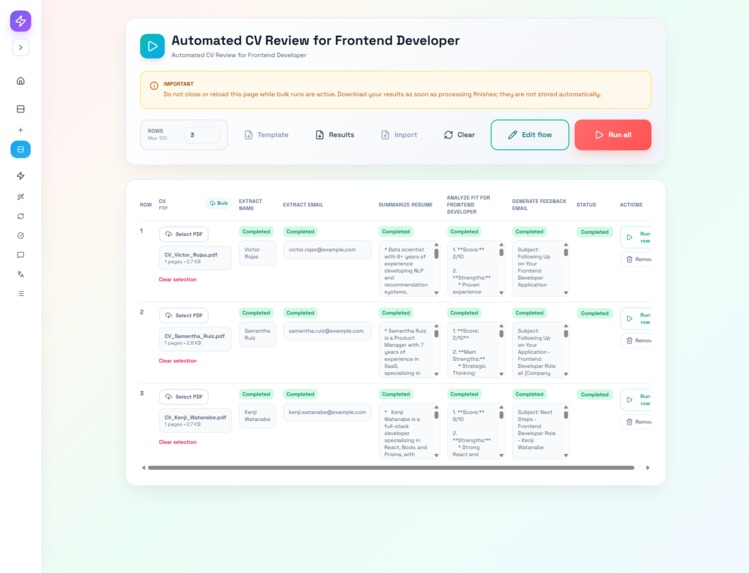

Users can connect multiple nodes to create complex flows that mix different input types (text, PDF, image, audio) and chain multiple AI actions in sequence. It also supports bulk execution, allowing users to process multiple files or inputs simultaneously — for instance, reviewing several CVs, translating multiple articles, or analyzing batches of audio calls at once.

LocalFlow also supports multimodal processing: text, documents, and audio recordings can all be handled in the same environment using Chrome’s native AI models. Everything runs locally, so there are no network round-trips to external APIs, data never leaves the device, and results come back without waiting on a server.

The result is a tool that lets anyone build personalized AI automations visually — without writing a single line of code.

How we built it

We built LocalFlow entirely on top of the Google Chrome Built-in AI APIs, taking advantage of the Gemini Nano model that runs locally in the browser.

For text-based operations, we used the Prompt API for generation and analysis, the Summarizer API for concise output, the Translator API for multilingual tasks, and the Proofreader API for grammar and clarity checks.

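Each node in the system calls one of these APIs directly in the browser, through the JavaScript interfaces Chrome exposes for its built-in AI (for example `LanguageModel`, `Summarizer`, and `Translator`), so no external backend or third-party model is required.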
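To make the node-to-API mapping concrete, here is a minimal sketch of how a node type could dispatch to a built-in API. It is not LocalFlow's actual code: `NodeKind`, `NodeHandler`, `builtIn`, `handlers`, and `runNode` are hypothetical names, the English-to-Spanish language pair is a placeholder, and the API shapes (`LanguageModel.create()`, `Summarizer.create()`, `Translator.create()`) are assumed from Chrome's public built-in AI documentation and may differ between Chrome versions. The Proofreader node is left out for brevity.

```typescript
// Hypothetical node kinds; the names are illustrative, not LocalFlow's own.
type NodeKind = "prompt" | "summarize" | "translate";

// A handler turns input text into output text.
type NodeHandler = (input: string) => Promise<string>;

// Look up a built-in AI global by name. Returns undefined outside Chrome
// or when the API is not enabled, so handlers can fail with a clear message.
function builtIn(name: string): any {
  return (globalThis as any)[name];
}

// Assumed API shapes (see the lead-in); each handler creates a fresh
// session per call, which is simple but not the most efficient option.
const handlers: Record<NodeKind, NodeHandler> = {
  prompt: async (input) => {
    const api = builtIn("LanguageModel");
    if (!api) throw new Error("Prompt API is not available in this browser");
    const session = await api.create();
    return session.prompt(input);
  },
  summarize: async (input) => {
    const api = builtIn("Summarizer");
    if (!api) throw new Error("Summarizer API is not available in this browser");
    const summarizer = await api.create();
    return summarizer.summarize(input);
  },
  translate: async (input) => {
    const api = builtIn("Translator");
    if (!api) throw new Error("Translator API is not available in this browser");
    // Placeholder language pair; a real node would take these from its settings.
    const translator = await api.create({ sourceLanguage: "en", targetLanguage: "es" });
    return translator.translate(input);
  },
};

// Run a single node. The registry is a parameter so that handlers can be
// swapped for stubs when testing outside Chrome.
async function runNode(
  kind: NodeKind,
  input: string,
  registry: Record<NodeKind, NodeHandler> = handlers
): Promise<string> {
  return registry[kind](input);
}
```

Keeping the registry injectable means the flow logic can be exercised without the on-device model, and a missing API surfaces as an explicit error instead of an undefined call.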

All data and results are stored locally using Chrome’s storage system, which ensures privacy and immediate access. We also implemented flow chaining so that multiple AI nodes can exchange outputs and run in sequence or in bulk mode. The interface was built with JavaScript (Next.js) and Web Components to keep it light, fast, and consistent across devices.
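The chaining and bulk behavior described above can be sketched as follows. This is an illustration under assumptions, not the project's implementation: `Step`, `BulkResult`, `runChain`, and `runBulk` are hypothetical names, and each step stands in for one AI node.

```typescript
// A step is any async text-to-text operation, such as one AI node.
type Step = (input: string) => Promise<string>;

// Outcome of running the chain on one input in bulk mode.
type BulkResult =
  | { ok: true; output: string }
  | { ok: false; error: string };

// Run steps in order, feeding each step's output into the next one.
async function runChain(steps: Step[], input: string): Promise<string> {
  let current = input;
  for (const step of steps) {
    current = await step(current);
  }
  return current;
}

// Bulk mode: start the same chain for every input and collect one result
// per input, in input order. A failure is recorded for that input only,
// so one bad file does not discard the rest of the batch.
async function runBulk(steps: Step[], inputs: string[]): Promise<BulkResult[]> {
  return Promise.all(
    inputs.map(async (input): Promise<BulkResult> => {
      try {
        return { ok: true, output: await runChain(steps, input) };
      } catch (err) {
        return { ok: false, error: err instanceof Error ? err.message : String(err) };
      }
    })
  );
}
```

For example, `runBulk([summarizeStep, translateStep], cvTexts)` would summarize and then translate each CV, where `summarizeStep`, `translateStep`, and `cvTexts` are placeholders. Whether the on-device model actually processes the inputs in parallel is up to the browser, so a large batch may still complete one item at a time.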

Challenges we ran into

The biggest challenge was syncing multiple connected nodes in real time while keeping the interface smooth and responsive. We also had to design local data handling that works even when users process large batches of files.

Accomplishments that we're proud of

We created a complete, fully local AI workflow builder that runs entirely inside Chrome. It can process different file types, chain multiple AI APIs, and handle bulk tasks with one click.

What we learned

We learned how far browser-native AI can go and how important it is to design for both privacy and speed. Optimizing performance inside the browser was a key learning experience.

What's next for LocalFlow

Next, we’ll add cloud sync and sharing, so users can keep their flows available anywhere while execution remains local. We also plan to expand bulk tools and add more templates for different real-world use cases.

Built With

- next

- react

- react-flow

- tailwind
