Introduction

We attempted to solve the problem of (piano) music transcription. Our model takes in a recording of piano music, and outputs what’s effectively a sheet music representation of the music. Like previous literature in the field, this relationship was modelled as between recordings in a .wav format, and a sheet representation in MIDI. While MIDI is a control protocol for digital instruments, it can easily be transformed into standard sheet music by programmes such as Sibelius.

In essence, this problem is a form of seq-to-seq modelling. We are translating between the wav and MIDI representations of the same music. For model choice, previous implementations seem to have focused primarily on RNNs and VAEs. Transformers, however, have received little attention (ha!). Applying this architecture in a novel context, as well as the high performance of off-the-shelf transformers to seq-to-seq tasks make them very suitable for this problem, and hence, that’s the architecture we implemented. Thus, our problem statement is formulated as follows: can we train a transformer to convert .wav music files to an equivalent MIDI file?

Related Work

Music transcription isn’t a radically novel idea, so we had an established corpus of prior work to reference and build upon. One such model that we found particularly impressive was MahlerNet: http://urn.kb.se/resolve?urn=urn:nbn:se:kth:diva-267213. This is a seq-to-seq deep recurrent neural network capable of modeling music. What makes it particularly notable is its vast capacity – claiming to model polyphonic harmonies (distinguishing between multiple ‘voices’ in a piece), as well sequences of ‘arbitrary length’ with an ‘arbitrary number of instruments’. The model is created with Tensorflow, and the music is encoded in the MIDI (Music Instrumental Digital Interface) format – which, as the name suggests, is a universal communication standard for digital music instruments. This data was then preprocessed and passed into the main model, a Conditional Variational Autoencoder. Results indicated partial success. When the model was run with ‘teacher forcing’ (ie. the target sequence was fed as input to update the RNN, instead of the model’s own output), it had extremely high accuracy (over 90%). However, without teacher forcing, it often strayed away from the ground truth and had much lower accuracies (ie. analogous to ‘hallucinating incorrectly’). A public implementation of MahlerNet is present on GitHub: https://github.com/fast-reflexes/MahlerNet.

Data

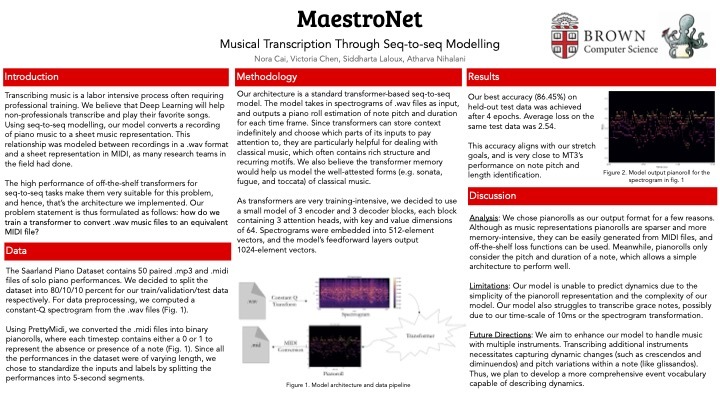

The Saarland Piano Dataset contains paired .mp3 and .midi files of 50 performances, containing 0.15 million notes. We decided to split the dataset into 80/10/10 percent for our train/validation/test data respectively. For preprocessing, we decoded the .mp3 into .wav using LAME and computed a constant-Q spectrogram using the .wav files. We chose to use constant-Q spectrograms over mel spectrograms, which were also commonly used in other automatic music transcription projects, since they provide a constant frequency resolution over high and low frequencies and a more accurate representation of musical pitches. We converted the .midi files into binary pianorolls using PrettyMidi.

Since all the performances in the dataset were of varying length, we chose to standardize the inputs and labels by splitting the performances into 5-second segments. To do this, we determined the number of spectrogram frames and pianoroll frames that corresponded to a 5-second interval of time and padded each spectrogram and pianoroll.

Methodology

Our architecture is a standard transformer-based seq-to-seq model. The model takes in spectrograms of .wav files as input, and outputs a piano roll estimation of note pitch and duration for each time frame. Since transformers can store context indefinitely and choose which parts of its inputs to pay attention to, they are particularly helpful for dealing with classical music, which often contains rich structure and recurring motifs. We also believe the transformer memory would help us model the well-attested forms (e.g. sonata, fugue, and toccata) of classical music.

As transformers are very training-intensive, we decided to use a small model of 3 encoder and 3 decoder blocks, each block containing 3 attention heads, with key and value dimensions of 64. Spectrograms were embedded into 512-element vectors, and the model’s feedforward layers output 1024-element vectors.

Ethics

Most datasets available for automatic music transportant contain only recordings of Western classical music, the limited scope of which fails to represent all bodies of music. Such data representation, on the one hand, excludes popular genres like hip-hop, jazz, and folk music, and on the other hand, the more marginalized, non-Western, non-English language music. As such, our model will be less reliable when transcribing these underrepresented genres. This narrow view regarding music transcription reflects and perpetuates societal biases that prioritize classical music as more sophisticated than other genres, and is thus by itself highly exclusive. This reinforces a hierarchy of cultural value that may alienate marginalized communities and hinder their participation in music education and creation.

While automatic music transcription has the potential to democratize music education, it must address these biases to be truly inclusive. This requires efforts to diversify datasets, conduct research on underrepresented genres, thereby reinvigorating the value of all musical traditions. Only then can automatic transcription technologies contribute to fostering a more equitable and accessible musical landscape.

Our poster can be found in the Additional Info section.

Log in or sign up for Devpost to join the conversation.