Introduction

MAXX is an automated web scraper that analyzes digital expression (e.g. Twitter posts) for linguistic patterns related to extreme states of mind that may present a risk to self (mental health) or others (violence, terrorism).

Inspiration

With 126 million daily users, Twitter has become not only one of the world's largest social media platforms, but also a breeding ground for extremism and violence.

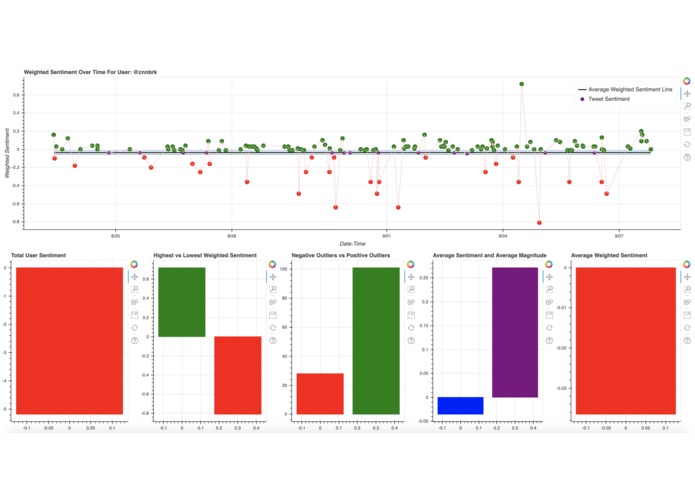

Last month, we were horrified to learn that Connor Betts, the gunman responsible for the Dayton shooting, "liked" several tweets about the antifa movement and the El Paso shooting only hours before. His previous tweets also advocated for the beheading of oil oligarchs to counter climate change and encouraged people to "buy a gun and learn to use it responsibly" (Washington Post).

By using natural language processing to analyze Twitter data, we seek to respond preemptively to emerging crises, provide user insight, and, ultimately, save lives.

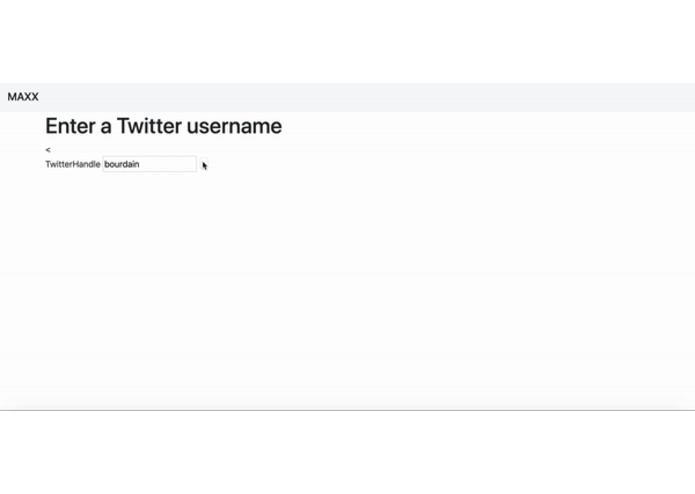

What MAXX does

- compiles tweets from a user's account

- provides a risk assessment of the user (weighted sentiment) using Google Cloud’s Natural Language API

- presents data analytics with Bokeh on a user dashboard

How we built it

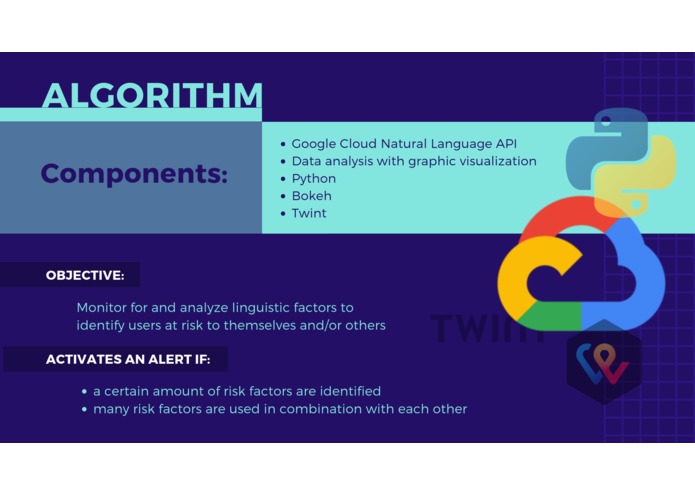

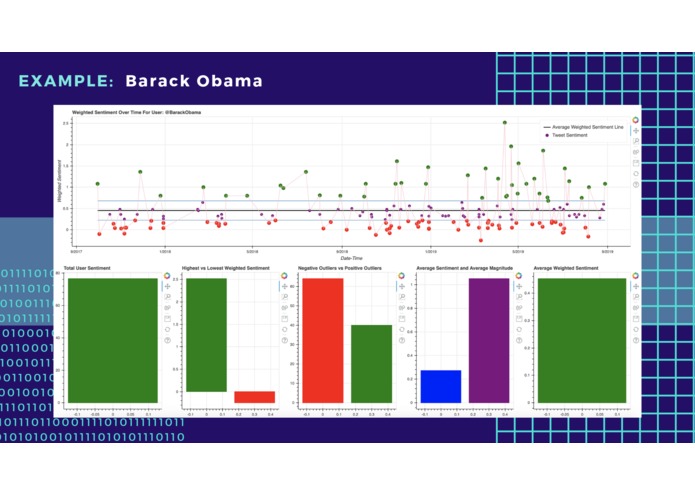

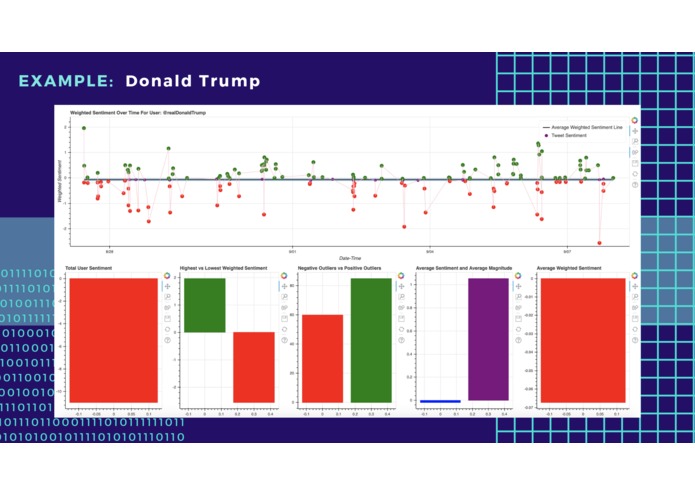

We built MAXX primarily with Python. We used Twint to scrape tweets from each account and analyzed the tweets with Google Cloud’s Natural Language API. Google’s Sentiment Analysis feature identifies the prevailing emotion in a text and returns a 'score' on a -1.0 to 1.0 scale, where negative values indicate negative sentiment, positive values indicate positive sentiment, and values near 0 indicate neutral text. The API also returns a 'magnitude', a non-negative number that measures the overall strength of the emotional content present. We multiplied the score by the magnitude to assign each tweet a weighted positivity/negativity value.
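The weighting step can be sketched in a few lines of Python. This is a minimal illustration, not our actual project code: the `TweetSentiment` class, the `weighted_sentiment` helper, and the sample values are hypothetical, and the score and magnitude are assumed to have already been fetched from the sentiment API.

```python
from dataclasses import dataclass


@dataclass
class TweetSentiment:
    """Hypothetical container for one tweet's sentiment result."""
    text: str
    score: float      # -1.0 (negative) to 1.0 (positive)
    magnitude: float  # non-negative strength of emotional content


def weighted_sentiment(score: float, magnitude: float) -> float:
    """Multiply score by magnitude to get a weighted sentiment value."""
    if not -1.0 <= score <= 1.0:
        raise ValueError("score must be between -1.0 and 1.0")
    if magnitude < 0:
        raise ValueError("magnitude must be non-negative")
    return score * magnitude


# Made-up sample values, for illustration only.
samples = [
    TweetSentiment("strongly negative tweet", score=-0.8, magnitude=2.0),
    TweetSentiment("mildly positive tweet", score=0.3, magnitude=0.5),
    TweetSentiment("neutral tweet", score=0.0, magnitude=0.1),
]

weighted = [weighted_sentiment(s.score, s.magnitude) for s in samples]
```

A strongly worded negative tweet ends up further from zero than a mildly worded one, while a tweet with a score of 0 always gets a weighted value of 0 regardless of its magnitude.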

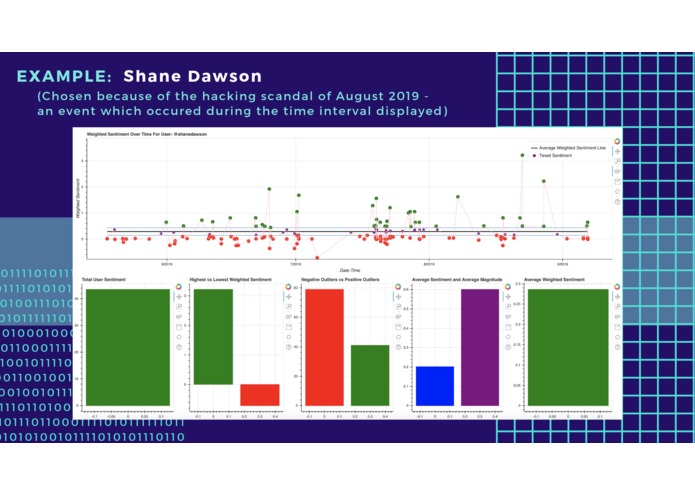

To showcase our data, we created charts and graphs with Bokeh, a Python data visualization library. For each Twitter profile, we created graphs for: weighted sentiment over time; total sentiment (sum of all sentiment scores); negative vs. positive outliers; average sentiment and average magnitude.
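The per-profile numbers behind those graphs could be computed roughly as follows. This is a sketch under assumptions: the function names are hypothetical, and the outlier rule used here (values more than 1.5 interquartile ranges outside the quartiles) is an illustrative choice, not necessarily the rule we used.

```python
from statistics import mean, quantiles


def summarize(scores, magnitudes):
    """Return total sentiment, average sentiment, and average magnitude."""
    if not scores or len(scores) != len(magnitudes):
        raise ValueError("scores and magnitudes must be non-empty and equal length")
    return {
        "total_sentiment": sum(scores),
        "average_sentiment": mean(scores),
        "average_magnitude": mean(magnitudes),
    }


def split_outliers(values, k=1.5):
    """Split outliers into (negative, positive) lists using an IQR rule.

    Assumption: a value is an outlier if it lies more than k * IQR
    below the first quartile or above the third quartile.
    """
    if len(values) < 4:
        return [], []  # too few points to estimate quartiles meaningfully
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    negative = [v for v in values if v < low]
    positive = [v for v in values if v > high]
    return negative, positive


# Made-up weighted sentiment values, for illustration only.
weighted_values = [0.1, 0.2, -0.1, 0.0, 0.15, -0.05, 0.05, -3.0, 2.5]
negative_outliers, positive_outliers = split_outliers(weighted_values)
```

The resulting lists and summary values can then be passed to whichever plotting library draws the charts.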

Challenges we ran into

- interpreting sentiment analysis scores: identifying outliers in our data, 0s in sentiment scores, variants in text length, etc.

- UX/UI: we initially tried Flask, but we had trouble integrating our functions into a web form

Accomplishments that we're proud of

As a team, we are most proud of experimenting with new tools and applying data science and machine learning to tangible, real-world issues. We are all relative beginners to hackathons: Zain had never used Bokeh before, but he learned Bokeh in the course of the hackathon and ultimately created all of our graphs.

What we learned

- integrating various open-source solutions: Twint, Bokeh, Google Cloud API, and BeautifulSoup (though we didn't end up using this in our final version)

- using code repositories: GitHub, Fork

- collaborative brainstorming - combining different ideas to reach consensus

What's next for MAXX

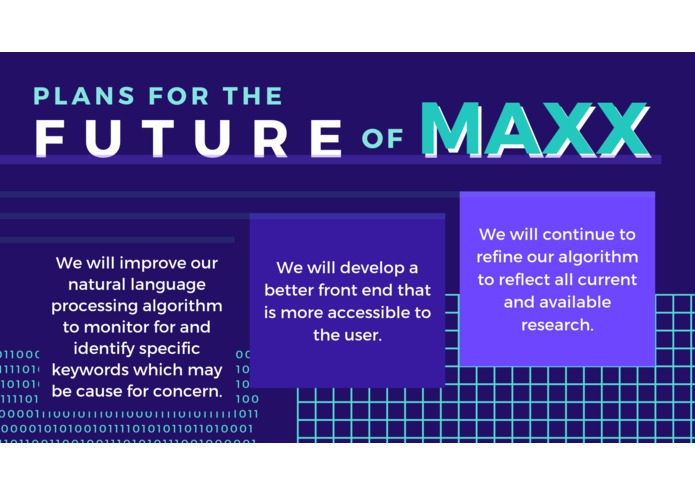

In the future, we would love to improve usability (perhaps develop this idea into app form), improve our natural language processing algorithm to monitor and flag specific keywords, analyze followers and user interests, and create a sentiment web to show how ideas spread. Moreover, we would like to experiment with different language processing APIs, including Microsoft Azure and Amazon Comprehend.

Authors

- Michaela Lozada [mlozada626@gmail.com]

- Zain Ali [zain08816@gmail.com]

- Kirtan Patel [kirt9911@gmail.com]

- Linda Tong [kaihua.linda.tong@gmail.com]

Acknowledgements

We would like to thank the organizers of PennApps for an incredible learning experience and the mentors (especially Paul, Saniyah, and Ryan of Google Cloud Platform) for their guidance - we could not have done it without you!

Built With

- bokeh

- google-cloud

- google-cloud-language

- natural-language-processing

- numpy

- pandas

- python

- sentiment-analysis-online

- twint
