Project Story

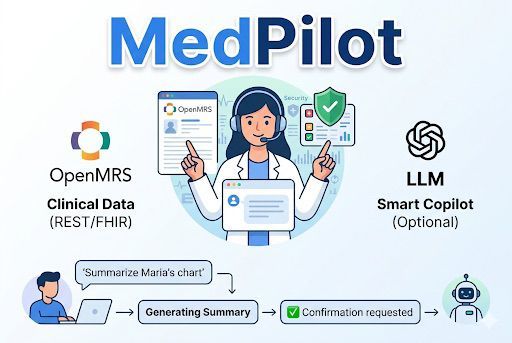

About the Project

The Problem That Started It All

MedPilot started with a simple observation:

Modern healthcare systems have enormous amounts of data, but interacting with that data is still surprisingly difficult.

While exploring OpenMRS, we noticed something interesting. Even basic clinical tasks required too many steps. Finding a patient, reviewing their history, checking medications, or recording new observations often meant navigating multiple pages, remembering identifiers, and repeating searches.

None of this was technically complex. But it was mentally expensive.

That led us to a simple question:

What if clinicians did not have to adapt to software workflows? What if the software adapted to how clinicians already think and work?

That question became MedPilot.

The Idea

We did not want to just add AI to OpenMRS. That would have been easy and honestly not very useful. Instead, we wanted to rethink interaction itself.

Our goal became clear:

Build a clinical copilot that reduces friction, not just adds intelligence.

We imagined a system where a clinician could simply say:

- "Find John Smith"

- "Summarize his chart"

- "Show vitals"

- "Record BP 140/90"

- "Add hypertension"

- "Write a follow-up note"

And instead of navigating menus, the system would understand intent, maintain context, and safely prepare actions.

Not a chatbot. Not automation.

A clinical interaction layer.

That distinction shaped every design decision we made.

How We Built It

We approached MedPilot as a systems problem, not just an AI problem. That meant building a full-stack architecture that balanced intelligence with reliability.

Backend Architecture

We built the backend using FastAPI as an orchestration layer responsible for:

- Chat routing

- Intent classification

- Workflow execution

- OpenMRS integration

- Permission enforcement

- Safety confirmations

- Audit logging

We designed the backend to be modular from the start:

app/api/ -> API routes

app/services/ -> Clinical workflows

app/clients/ -> OpenMRS integrations

app/llm/ -> LLM abstraction layer

app/core/ -> Safety and confirmation logic

app/models/ -> Typed schemas

This structure allowed us to treat AI as a component, not the foundation.

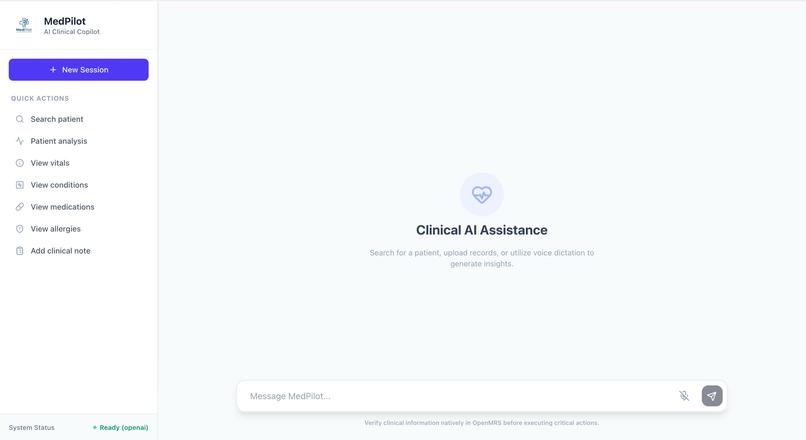

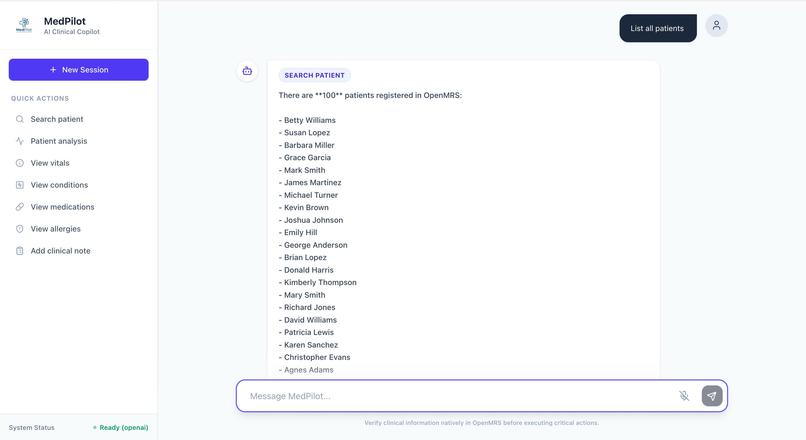

Frontend Design

The frontend was intentionally simple.

We built a React + Vite + Tailwind interface focused around one core idea:

One workspace. One conversation. One clinical context.

Instead of dashboards and navigation trees, the UI emphasizes interaction flow. The user asks, the system responds, and actions are staged transparently.

This decision reduced UI complexity while increasing workflow clarity.

AI + Deterministic Logic

One of the most important decisions we made was not depending entirely on an LLM.

Instead, we combined:

- Deterministic routing for common workflows

- Optional LLM reasoning for complex interpretation

- Structured fallbacks

- Multi-provider support (OpenAI, Anthropic, Ollama)

This created an important property:

The system still works even without AI.

That made the architecture more trustworthy and more realistic for healthcare environments.
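To make the idea concrete, here is a minimal sketch of rules-first routing with an optional LLM fallback. It is an illustration, not MedPilot's actual code: the intent names, the regex patterns, and the `route` function are all assumptions, and the `llm` argument stands in for whichever provider is configured.

```python
import re
from typing import Callable, Optional

# Hypothetical intents and patterns, chosen only for illustration.
RULES = [
    ("find_patient", re.compile(r"^find\s+(?P<name>.+)$", re.IGNORECASE)),
    ("show_vitals", re.compile(r"^show\s+vitals$", re.IGNORECASE)),
    (
        "record_bp",
        re.compile(
            r"^record\s+bp\s+(?P<systolic>\d{2,3})/(?P<diastolic>\d{2,3})$",
            re.IGNORECASE,
        ),
    ),
]

# An LLM classifier is any callable that maps text to an intent name (or None).
LLMClassifier = Callable[[str], Optional[str]]


def route(message: str, llm: Optional[LLMClassifier] = None) -> dict:
    """Try deterministic rules first, then the optional LLM, then a fallback."""
    text = message.strip()
    for intent, pattern in RULES:
        match = pattern.match(text)
        if match:
            return {"intent": intent, "entities": match.groupdict(), "source": "rules"}

    if llm is not None:
        try:
            intent = llm(text)
        except Exception:
            intent = None  # a provider failure must not break the workflow
        if intent:
            return {"intent": intent, "entities": {}, "source": "llm"}

    # Structured fallback: the caller can ask the user to rephrase.
    return {"intent": "unknown", "entities": {}, "source": "fallback"}
```

With no `llm` supplied, common commands such as "Find John Smith" still resolve through the rules, which is the property described above.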

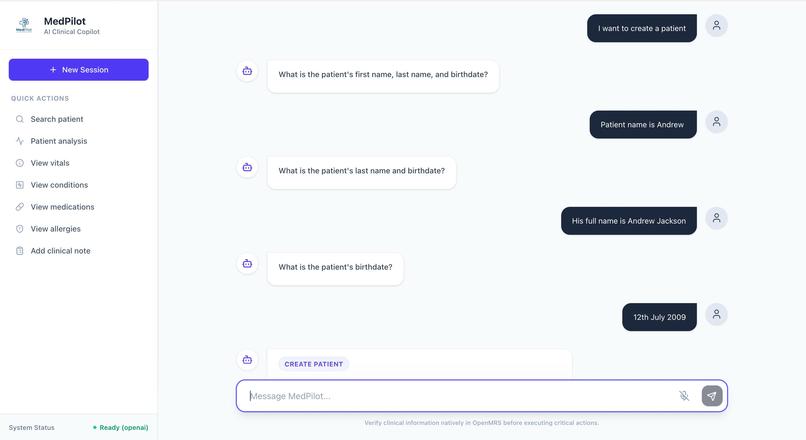

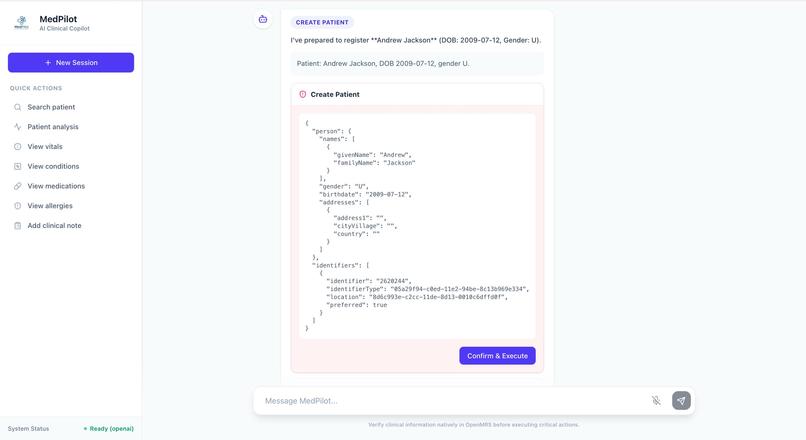

Designing for Safety First

Healthcare software cannot behave like a typical AI assistant. Mistakes have real consequences.

So we designed MedPilot around a strict safety pipeline:

- Intent detection

- Entity extraction

- Payload construction

- Human-readable preview

- Explicit confirmation

- Execution

- Audit logging

Every write operation follows this flow.

Nothing executes automatically. Nothing executes silently.

For destructive actions, we added an extra safeguard requiring the user to explicitly type:

DELETE

This was not just a technical decision. It was a trust decision.

We wanted clinicians to always feel in control.
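The tail end of that pipeline (preview, confirmation, execution, audit logging) could be sketched roughly as follows. The class names, the `"yes"` confirmation keyword for ordinary writes, and the choice to log declined attempts are assumptions made for this example; only the typed `DELETE` requirement comes from the description above.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class StagedAction:
    """A write that has been prepared but not yet executed."""
    intent: str
    patient_uuid: str
    payload: dict
    destructive: bool = False

    def preview(self) -> str:
        # Human-readable preview shown to the clinician before anything runs.
        verb = "DELETE" if self.destructive else "WRITE"
        body = json.dumps(self.payload, indent=2, sort_keys=True)
        return f"[{verb}] {self.intent} for patient {self.patient_uuid}\n{body}"


@dataclass
class ConfirmationGate:
    """Runs an action only after explicit confirmation and records the outcome."""
    execute: Callable[[StagedAction], dict]
    audit_log: list = field(default_factory=list)

    def confirm(self, action: StagedAction, user_input: str) -> Optional[dict]:
        if action.destructive:
            # Destructive actions require the exact word, case-sensitive.
            approved = user_input == "DELETE"
        else:
            # Assumption: ordinary writes are confirmed with "yes".
            approved = user_input.strip().lower() == "yes"

        result = self.execute(action) if approved else None
        # Every attempt is logged, whether it was approved or declined.
        self.audit_log.append(
            {
                "intent": action.intent,
                "patient_uuid": action.patient_uuid,
                "approved": approved,
                "result": result,
            }
        )
        return result
```

Because `execute` is only reachable through `confirm`, a write cannot run without a matching confirmation, and a declined attempt still leaves an audit entry.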

What We Learned

This project changed how we think about AI in healthcare.

Lesson 1: Healthcare UX is really about context

We initially thought we were building a chat interface.

We were wrong.

The real challenge was managing clinical context across time. The system had to remember which patient was active, ensure follow-up actions stayed attached to the correct chart, and prevent cross-patient mistakes.

This turned a UI problem into a state management problem.

Lesson 2: AI alone does not create clinical value

Many healthcare AI demos look impressive but fail under real workflows.

We learned that real clinical software requires:

- Determinism

- Traceability

- Fallback logic

- Human verification

AI became an enhancer, not the foundation.

Lesson 3: Real integrations are messy

Integrating OpenMRS taught us that real healthcare systems are complex in ways demos never show.

FHIR resources. Concept dictionaries. Environment configs. Data mappings.

The hardest problems were not AI problems. They were systems problems.

Lesson 4: Reliability beats cleverness

A workflow that works 100% of the time is more valuable than a smart feature that works 80% of the time.

That realization changed how we prioritized everything.

We stopped asking:

"How can we make this smarter?"

And started asking:

"How can we make this safer and more predictable?"

Challenges We Faced

Challenge 1: Environment setup friction

Getting OpenMRS running consistently was harder than expected. The Docker setup required external database configuration, which complicated local testing.

This forced us to better understand the full deployment stack instead of just the API layer.

Challenge 2: Translating natural language into structured medicine

Humans say:

- "Add fever"

- "Record BP"

- "Show meds"

Systems require:

- UUIDs

- Resource mappings

- Concept IDs

- Structured payloads

Bridging this gap required building translation layers between language and clinical data structures.
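As a small illustration of such a translation layer, the sketch below turns "Record BP 140/90" into two structured observations. The concept identifiers are placeholders rather than real OpenMRS UUIDs, and the payload shape is a simplified assumption, not the exact format of any OpenMRS endpoint.

```python
import re

# Placeholder identifiers. A real deployment would look these up in its
# own OpenMRS concept dictionary.
CONCEPTS = {
    "systolic_bp": "CONCEPT-UUID-SYSTOLIC",
    "diastolic_bp": "CONCEPT-UUID-DIASTOLIC",
}

BP_PATTERN = re.compile(
    r"\b(?:bp|blood pressure)\s+(\d{2,3})\s*/\s*(\d{2,3})\b", re.IGNORECASE
)


def build_bp_observations(message: str, patient_uuid: str) -> list:
    """Extract a blood pressure reading and map it to structured observations."""
    match = BP_PATTERN.search(message)
    if match is None:
        raise ValueError("no blood pressure reading found")

    systolic, diastolic = int(match.group(1)), int(match.group(2))
    # Basic sanity check so a swapped or mistyped reading is caught early.
    if systolic <= diastolic:
        raise ValueError("systolic must be greater than diastolic")

    return [
        {"person": patient_uuid, "concept": CONCEPTS["systolic_bp"], "value": systolic},
        {"person": patient_uuid, "concept": CONCEPTS["diastolic_bp"], "value": diastolic},
    ]
```

The clinician types two numbers; the system supplies the patient identifier and the concept mapping, and rejects input it cannot interpret instead of guessing.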

Challenge 3: Maintaining multi-turn patient state

Remembering which patient is active sounds simple.

It is not.

We had to prevent:

- Context loss

- Patient switching errors

- Accidental cross-chart writes

This required explicit state handling rather than relying on conversation memory alone.
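One way to picture explicit state handling is a session object that stamps every staged write with the active patient and rechecks it before execution. This is a sketch under assumptions: `ClinicalSession`, `PatientContextError`, and the method names are hypothetical and not taken from the MedPilot codebase.

```python
from dataclasses import dataclass
from typing import Optional


class PatientContextError(Exception):
    """Raised when a write cannot be tied safely to the active patient."""


@dataclass
class ClinicalSession:
    active_patient_uuid: Optional[str] = None

    def select_patient(self, patient_uuid: str) -> None:
        self.active_patient_uuid = patient_uuid

    def stage_write(self, intent: str, payload: dict) -> dict:
        # Refuse to stage anything when no chart is open (context loss).
        if self.active_patient_uuid is None:
            raise PatientContextError("no active patient")
        # Bind the patient at staging time instead of resolving it later.
        return {
            "intent": intent,
            "patient_uuid": self.active_patient_uuid,
            "payload": dict(payload),
        }

    def assert_still_valid(self, staged: dict) -> None:
        # Called just before execution to catch a patient switch in between.
        if staged["patient_uuid"] != self.active_patient_uuid:
            raise PatientContextError(
                "active patient changed since this action was staged"
            )
```

If the clinician stages "Add hypertension" for one patient and then opens another chart before confirming, `assert_still_valid` raises instead of writing to the wrong record.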

Challenge 4: Safety vs usability tradeoffs

Too many confirmations slow clinicians down. Too few create risk.

Finding the balance required thinking like both engineers and product designers.

Challenge 5: Avoiding black box behavior

Clinicians cannot trust a system they cannot understand.

So instead of hiding operations, MedPilot shows the exact payload it plans to send before execution.

Transparency became a feature.

Why This Project Matters

MedPilot is not trying to replace clinicians.

It is trying to solve something more practical:

Reduce the effort required to interact with healthcare software.

In many systems, the real bottleneck is not medical knowledge.

It is workflow friction.

Every extra click adds cognitive load. Every repeated search wastes time. Every context switch increases fatigue.

We wanted to reduce that friction while preserving safety.

MedPilot sits between the user and the EHR as an interaction accelerator, not a decision maker.

What We Would Do Next

If we continued development, our next priorities would be:

- Making OpenMRS deployment easier and reproducible

- Expanding encounter workflows

- Improving FHIR interoperability

- Adding explainability for AI summaries

- Measuring real workflow time savings

- Supporting longitudinal patient review workflows

Most importantly:

We would test with real clinicians.

Because ultimately success is not about architecture.

It is about whether users actually feel:

- Faster

- Safer

- Less mentally burdened

Final Takeaway

MedPilot began with a simple realization:

Healthcare software is powerful, but often painful to use.

We set out to build something different. Not just smarter software, but software that feels easier to work with.

If we had to summarize the biggest lesson from this project, it would be this:

In healthcare, the best software is not the one that feels the most intelligent.

It is the one that helps people move faster without sacrificing trust.

Built With

- anthropic

- backplane-javascript

- css3

- docker

- fastapi

- fhir

- healthgorilla

- html

- httpx

- javascript

- mysql

- ollama

- openai

- openmrs

- pydantic

- pypdf

- pytest

- python

- restapi
