Inspiration

We are bridging the gap between online and in-person attendees.

It is increasingly common to have hybrid events like this year’s RealityHack, where some people are present in person and others attend remotely. Attending remotely is a vastly different experience, though: remote attendees are largely disconnected from what is happening in person.

During the pandemic, MagFest implemented a VRChat space that linked the real-world venue with a chat space through a “Magic Mirror”. It allowed people to talk in real time across the disconnect without necessarily needing to sit in front of a computer or put on a VR headset.

What it does

By creating a mirror portal between the physical and virtual worlds, we are restoring the connection between local and worldwide remote participants.

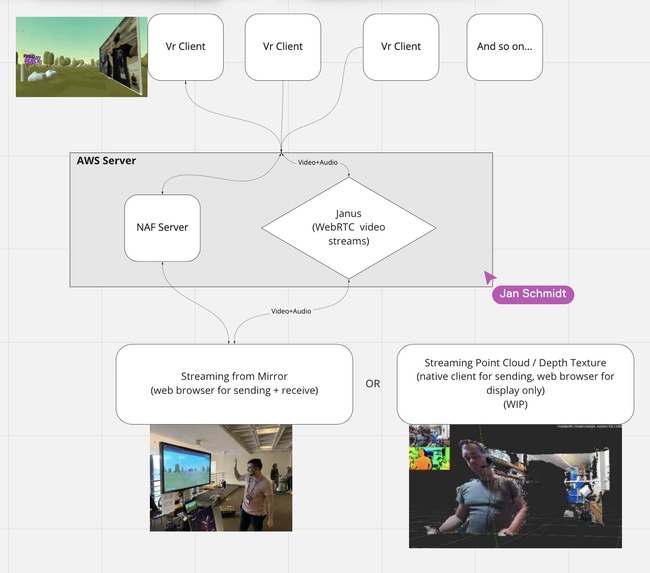

This portal is made of a virtual chat room built with A-Frame and WebXR, and a web client that streams between the chat room and the physical hackerspace. We have built a window that lets virtual and in-person participants communicate and interact with each other in the same space.
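As a rough illustration of how such a room could be laid out, the sketch below generates A-Frame markup for a networked scene containing one video plane that acts as the mirror. It is not the project's actual scene: the `MirrorConfig` type, the `mirrorSceneMarkup` helper, the element id, and the plane position are all hypothetical, and the adapter and server settings that `networked-scene` would need in practice are left out.

```typescript
// Hypothetical configuration; none of these values come from the project.
interface MirrorConfig {
  room: string;           // Networked-Aframe room name
  videoElementId: string; // id of the <video> element carrying the venue feed
  widthMeters: number;    // width of the mirror plane in the scene
  aspect: number;         // video width divided by video height
}

// Build A-Frame markup for a shared room with one "mirror" video plane.
// Adapter and server settings for networked-scene are intentionally omitted.
function mirrorSceneMarkup(cfg: MirrorConfig): string {
  // Keep the plane's proportions equal to the video's so the feed is not stretched.
  const heightMeters = cfg.widthMeters / cfg.aspect;
  return [
    `<a-scene networked-scene="room: ${cfg.room}; audio: true">`,
    `  <a-assets>`,
    `    <video id="${cfg.videoElementId}" autoplay playsinline></video>`,
    `  </a-assets>`,
    `  <a-video src="#${cfg.videoElementId}" width="${cfg.widthMeters}" height="${heightMeters}" position="0 1.6 -3"></a-video>`,
    `</a-scene>`,
  ].join("\n");
}
```

Calling `mirrorSceneMarkup({ room: "mirror", videoElementId: "venue-feed", widthMeters: 3, aspect: 2 })` yields a scene whose mirror plane is 3 m wide and 1.5 m tall; the incoming stream would still have to be attached to the `<video>` element by the web client.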

Challenges we ran into

There are a lot of technical challenges in building this kind of interaction:

- Assembling the VR space using the largely unfamiliar Networked A-Frame web framework

- Having to deal with the lack of bandwidth to send the video stream to multiple users

  - We solved this by using a Janus media router to stream to each client

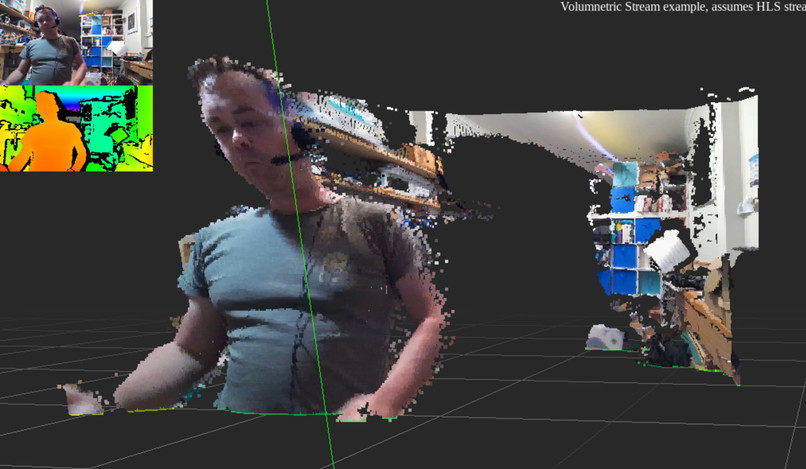

- Trying to connect the Azure Kinect RGB+Depth video stream as a volumetric input
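On the bandwidth challenge above, a small back-of-the-envelope calculation shows why routing through a media server helps. This is only a sketch: the function names are made up for illustration, and the bitrate and viewer count in the usage note are assumed numbers, not measurements from the event.

```typescript
// Upstream bandwidth needed at the venue, in kilobits per second.

// Peer-to-peer mesh: the venue machine uploads one copy of the stream per viewer.
function meshUploadKbps(bitrateKbps: number, viewers: number): number {
  return bitrateKbps * viewers;
}

// Media router: the venue machine uploads a single copy (if anyone is watching)
// and the server forwards it to every viewer.
function routedUploadKbps(bitrateKbps: number, viewers: number): number {
  return viewers > 0 ? bitrateKbps : 0;
}

// The fan-out cost does not disappear; it moves to the server's outbound link.
function routerEgressKbps(bitrateKbps: number, viewers: number): number {
  return bitrateKbps * viewers;
}
```

With an assumed 1500 kbps stream and 20 viewers, a mesh would need 30000 kbps of upload from the venue, while the routed setup needs 1500 kbps from the venue and pushes the 30000 kbps of fan-out onto the server's connection.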

Accomplishments that we’re proud of

Getting the architecture for Janus up and running, and building a framework that we can continue to iterate on. Also, the latency between virtual and in-person participants was low, which made it easy to talk to each other!

What we learned

We learned about developing with A-Frame and WebXR across multiple devices and about setting up a Janus server on an Ubuntu machine on AWS, and we experimented with point cloud mapping through Azure Kinect with GStreamer.
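For readers new to point clouds, the core idea behind turning a depth image into 3D points is back-projection through a pinhole camera model. The sketch below is a generic version of that step, not the project's GStreamer pipeline. The `Intrinsics` type and `depthToPoints` function are hypothetical, lens distortion is ignored, and real intrinsics would come from the camera's calibration. It assumes depth values in millimetres with 0 marking an invalid pixel, which is how Azure Kinect depth images are encoded.

```typescript
// Pinhole camera parameters in pixels (placeholder type; values come from calibration).
interface Intrinsics {
  fx: number; // focal length along x
  fy: number; // focal length along y
  cx: number; // principal point x
  cy: number; // principal point y
}

// Back-project a row-major depth image into a flat [x, y, z, x, y, z, ...] array in metres.
function depthToPoints(
  depthMm: Uint16Array,
  width: number,
  height: number,
  k: Intrinsics,
): Float32Array {
  const points: number[] = [];
  for (let v = 0; v < height; v++) {
    for (let u = 0; u < width; u++) {
      const d = depthMm[v * width + u];
      if (d === 0) continue; // no valid depth at this pixel
      const z = d / 1000;    // millimetres to metres
      // Pinhole model: scale the pixel's offset from the principal point by depth.
      points.push(((u - k.cx) * z) / k.fx, ((v - k.cy) * z) / k.fy, z);
    }
  }
  return Float32Array.from(points);
}
```

The resulting array could, for example, be used as a position attribute for a point-based mesh in the browser, with the matching RGB pixels supplying per-point colour.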

What’s next for Mirror Portals

We want to create more tools for linking the real world and the virtual. For example, instead of flat windows, we could use volumetric capture to really import the real world. It would also be possible to capture and record real-world events and objects: taking photos of the magic mirror, or scooping out portions of a volumetric display and turning them into preserved virtual objects.

In the other direction, we could add tools to the virtual world that reach out into the real world:

- Regions in VR space that represent Looking Glass displays. Place an object in that space, and it is shown to the people nearby. These objects could also remain present after the creator has left the space.

- If a video stream is coming from a telepresence robot, the VR world could have controls to drive the robot around.
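One simple way the display-region idea could work is an axis-aligned bounding box check: when an object is dropped, find which region (if any) contains its position and send it to the matching physical display. This is purely a sketch of a possible approach to future work; `Vec3`, `DisplayRegion`, `regionContaining`, and the region id in the usage note are invented for illustration.

```typescript
type Vec3 = { x: number; y: number; z: number };

// A box in VR space that stands in for one physical display (hypothetical shape).
interface DisplayRegion {
  id: string; // identifier of the physical display this region maps to
  min: Vec3;  // corner with the smallest coordinates
  max: Vec3;  // corner with the largest coordinates
}

// Return the first region whose box contains the point, or undefined if none does.
function regionContaining(p: Vec3, regions: DisplayRegion[]): DisplayRegion | undefined {
  return regions.find(
    (r) =>
      p.x >= r.min.x && p.x <= r.max.x &&
      p.y >= r.min.y && p.y <= r.max.y &&
      p.z >= r.min.z && p.z <= r.max.z,
  );
}
```

For a region `{ id: "lobby-display", min: { x: 0, y: 0, z: 0 }, max: { x: 1, y: 1, z: 1 } }`, an object placed at `(0.5, 0.5, 0.5)` would match it, while one at `(2, 0.5, 0.5)` would match nothing and stay purely virtual.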

Built with

- A-Frame

- Networked A-Frame

- Janus WebRTC Server

- Azure Kinect

- Vive Focus 3

- Oculus Quest

Built With

- aframe

- amazon-web-services

- azurekinect

- gstreamer

- janus

- node.js

- webrtc

- webxr
