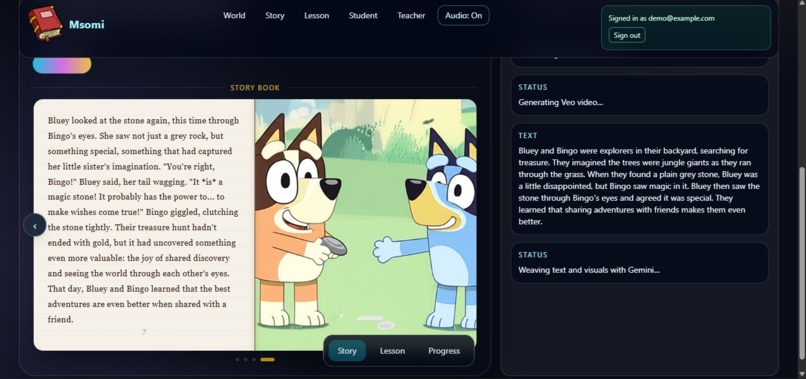

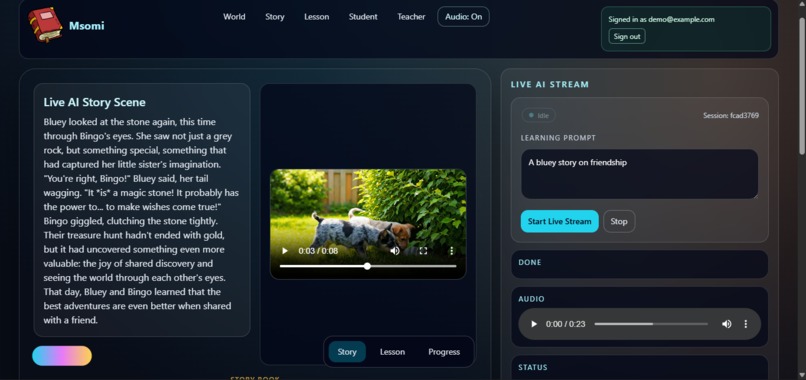

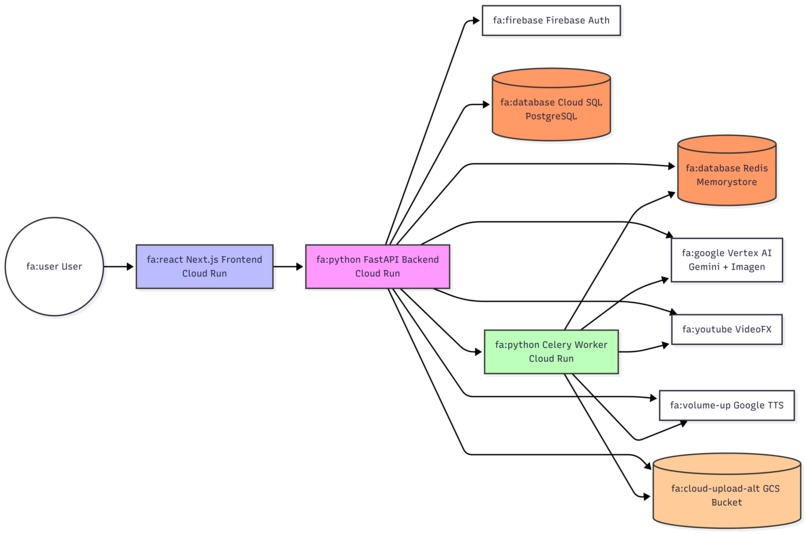

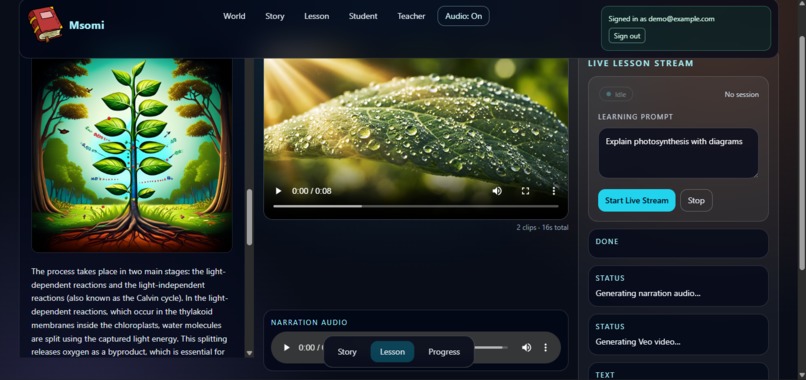

How we built it

Frontend: Next.js 14 (App Router) with TailwindCSS for layout, Framer Motion and React Spring for fluid animations, and React Three Fiber for 3D scene visualisations. Zustand manages all client state (auth session, stream events, quiz states). A custom useSSEStream hook connects to the backend streaming endpoint and feeds events into the learning store in real time.

Backend: We built the API with FastAPI. It handles user auth, story sessions, lessons, and analytics, each as its own router. Every request that touches user data first has its token verified through the Firebase Admin SDK. Instead of waiting for the AI to finish generating a full response, we stream it chunk by chunk using Server-Sent Events, so the frontend starts receiving content almost instantly. Celery workers backed by Redis handle heavier jobs such as image and video generation in the background, so the story doesn't freeze while media is being prepared. For AI, we used Gemini 2.5 Pro on Vertex AI for all text and story logic, Imagen 3 for scene illustrations, Google TTS for audio narration, and Veo for video. All generated media is uploaded to Google Cloud Storage and handed back to the frontend as signed URLs. For data, we used Firebase Auth for identity, Firestore for live session state and story choices, PostgreSQL (Cloud SQL) for structured data like quiz scores and lesson progress, and Redis for fast ephemeral state and the job queue.
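To make the chunk-by-chunk streaming concrete, here is a minimal sketch of how text chunks can be framed as Server-Sent Events. It is not the project's actual code: the function names, the event names ("text", "done"), and the payload fields are assumptions chosen for illustration.

```python
import json
from typing import Iterable, Iterator


def format_sse(event: str, data: dict) -> str:
    """Encode one SSE frame: an event name, a JSON payload, and a blank line.

    json.dumps escapes newlines inside strings, so the payload always fits
    on a single "data:" line.
    """
    payload = json.dumps(data, ensure_ascii=False)
    return f"event: {event}\ndata: {payload}\n\n"


def stream_story(chunks: Iterable[str]) -> Iterator[str]:
    """Yield one SSE frame per text chunk, then a final 'done' frame.

    `chunks` stands in for whatever the model client yields; the event
    names and payload shape here are hypothetical.
    """
    for index, text in enumerate(chunks):
        yield format_sse("text", {"index": index, "text": text})
    yield format_sse("done", {})
```

In FastAPI, a generator like this can be returned through StreamingResponse with media_type="text/event-stream", so each frame is flushed to the client as soon as it is produced.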

Infrastructure: Everything runs in Docker containers. On the cloud side, we deployed three separate Cloud Run services: one each for the frontend, the backend API, and the Celery worker. The database is Cloud SQL, the Redis cache is Cloud Memorystore, Docker images are stored in Artifact Registry, and all secrets (database URLs, API keys, Firebase credentials) live in Secret Manager. We also set up a VPC connector so the Cloud Run containers can reach Redis over its private IP.

Challenges we ran into

Deployment gave us a hard time. The standard gcloud auth configure-docker approach silently failed because Docker Desktop couldn't find docker-credential-gcloud on its PATH. We had to strip the credHelper from ~/.docker/config.json and authenticate directly with gcloud auth print-access-token | docker login --password-stdin.
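The config cleanup can also be scripted instead of edited by hand. The sketch below assumes the entries added by gcloud auth configure-docker live under Docker's credHelpers key with the value "gcloud"; the function names are hypothetical, and we are not claiming this is how the file was edited in the project.

```python
import json
from pathlib import Path


def strip_gcloud_helpers(config: dict) -> dict:
    """Return a copy of a Docker config without credential helpers set to gcloud."""
    cleaned = dict(config)
    helpers = {
        registry: helper
        for registry, helper in config.get("credHelpers", {}).items()
        if helper != "gcloud"
    }
    if helpers:
        cleaned["credHelpers"] = helpers
    else:
        # Drop the key entirely when no other helpers remain.
        cleaned.pop("credHelpers", None)
    return cleaned


def rewrite_docker_config(path: Path) -> None:
    """Apply strip_gcloud_helpers to a Docker config file in place."""
    config = json.loads(path.read_text())
    path.write_text(json.dumps(strip_gcloud_helpers(config), indent=2))
```

Calling rewrite_docker_config(Path.home() / ".docker" / "config.json") would apply it to the default config location; backing the file up first is a sensible precaution.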

The app ran fine in dev, but the Docker production build caught two TypeScript errors: Zustand's ReturnType resolving to unknown in strict mode (which we fixed by exporting an explicit AuthState type), and useSearchParams() in child components requiring Suspense boundaries, which export const dynamic = "force-dynamic" alone didn't satisfy.

We also had trouble running the Celery worker on Cloud Run. Cloud Run requires every container to listen on a port, but the worker is a pure queue consumer with no HTTP server. The first workaround used a shell script to start a health server alongside Celery, but the script had Windows CRLF line endings that silently broke execution on Linux. The fix was to replace the shell script with a pure Python entrypoint (worker_main.py) that starts a threaded HTTPServer on $PORT before handing off to Celery.

Creating the VPC connector conflicted with an existing GCP subnet, leaving the connector in a broken ERROR state. We had to delete it and recreate it with a different subnet.
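A rough sketch of what an entrypoint like worker_main.py can look like is shown here. It is an illustration under assumptions, not the project's file: the handler, the fallback port 8080, and the Celery app module name app.worker are all hypothetical, and we use ThreadingHTTPServer on a daemon thread as one way to get a "threaded HTTPServer".

```python
import os
import subprocess
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Answer every GET with 200 so the platform sees a listening container."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Silence per-request logging so worker logs stay readable.
        pass


def start_health_server(port: int) -> ThreadingHTTPServer:
    """Start the health server on a daemon thread and return it."""
    server = ThreadingHTTPServer(("0.0.0.0", port), HealthHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def main() -> int:
    """Bind $PORT first, then run the Celery worker as a child process."""
    start_health_server(int(os.environ.get("PORT", "8080")))
    # "app.worker" is a hypothetical module path for the Celery app.
    return subprocess.call(
        ["celery", "-A", "app.worker", "worker", "--loglevel=info"]
    )
```

The real entrypoint would end by calling main(). Running Celery as a child process, rather than replacing the current process, keeps the health server thread alive for as long as the worker runs.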

Finally, cost was a problem. The service will probably go offline, since video generation on Vertex AI consumes a lot of credits; even with the cost-saving features we implemented, the bill was still too high. On top of that, hosting applications on Google Cloud infrastructure is expensive, especially for a college student. (If you are testing, kindly reproduce the project yourself to experience the magic.)

Accomplishments that we're proud of

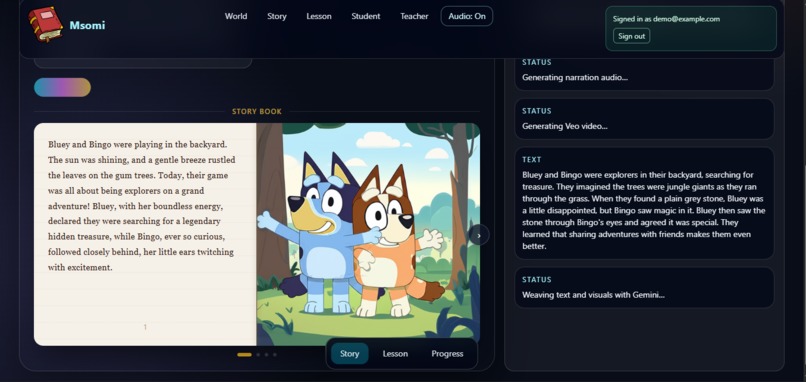

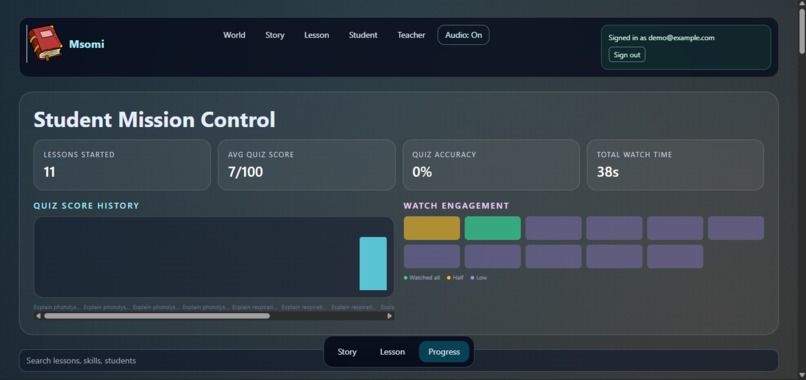

- Creating a real-time multimodal stream that weaves text, images, audio, and video together, all arriving live. The SSE architecture means the first word of a story appears in under a second.

- Building a fully serverless production deployment.

- Wiring Google Cloud together end to end.

- Creating branching AI narratives, where the story branches based on the student's choices and Gemini maintains narrative continuity across turns.

- Working across different time zones to create such an amazing project.

What we learned

- Everything in the project runs on GCP (AI, storage, database, cache, auth, secrets, networking) and has to be wired up correctly. Getting all of that working end to end was a real milestone. Getting one piece wrong (a missing secret, a broken VPC range, a missing IAM role) silently breaks the whole chain.

- Reaching Redis from Cloud Run requires a VPC connector, since Memorystore only exposes a private IP.

- Docker on Windows is an underrated difficulty of its own, but we pulled through.

- Building products on Google Cloud infrastructure is really efficient; it provides the perfect serverless solution.

What's next for Msomi

- Adding custom learning paths that let teachers create structured curricula, which Msomi fills with generated content aligned to a syllabus or learning objective.

- Adding voice input support so students can speak their story choices or ask follow-up questions, making it feel more conversational.

- Adding multilingual support.

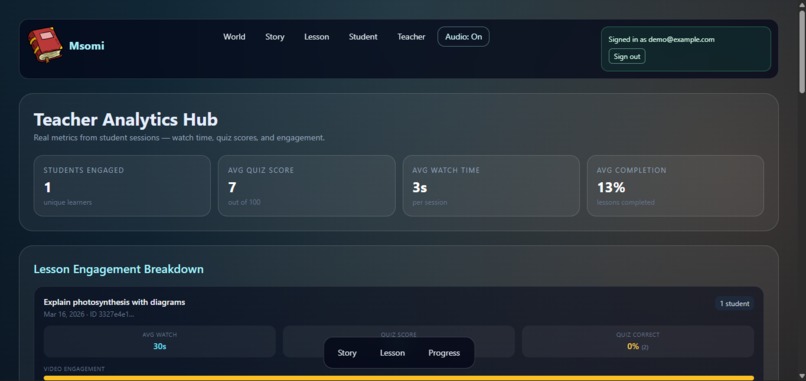

- Creating an aggregated teacher dashboard where a teacher can view analytics across a class: which concepts students are struggling with, which students need support, and where quiz scores are dropping.
