App icon

Home screen

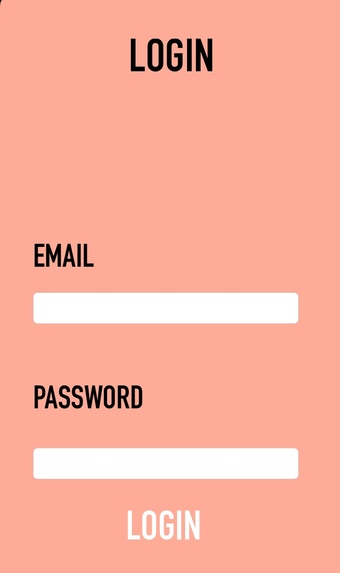

Login page

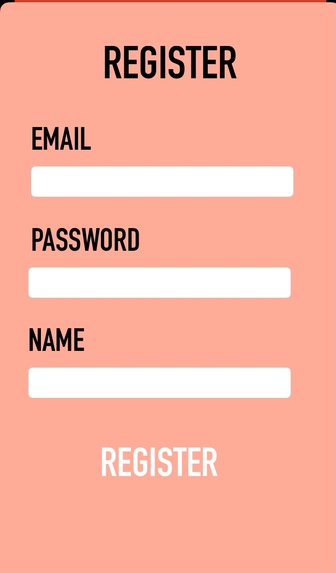

Register page

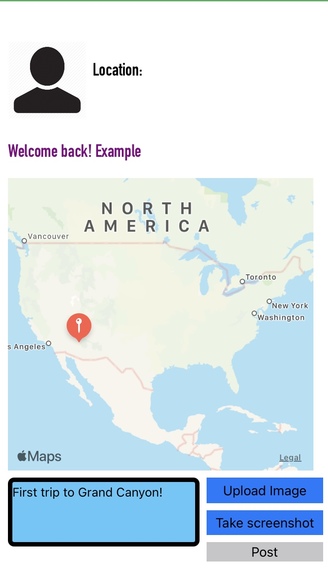

User page: add captions and select the point on the map that each caption is about. Uses the Radar.io reverse geocoding API.

Take screenshot

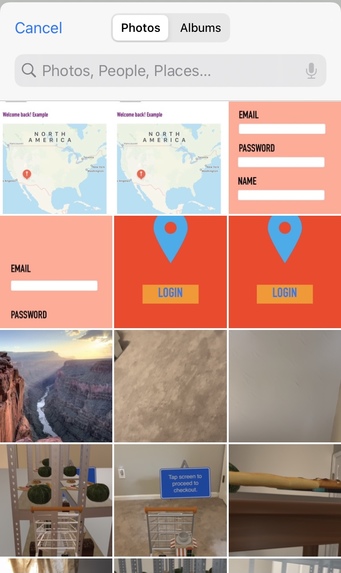

Upload image from image library

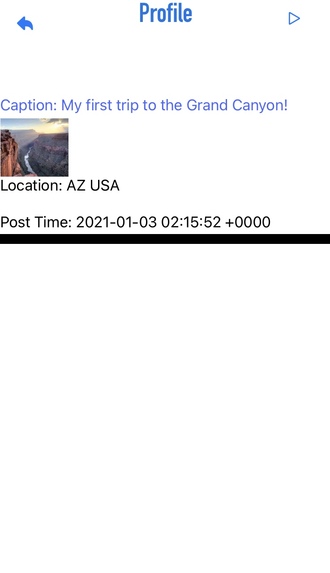

Profile page showing all posts, including location, images, date, and the AR feature

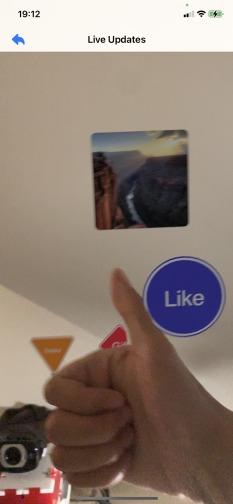

Augmented reality scene: interaction with hands

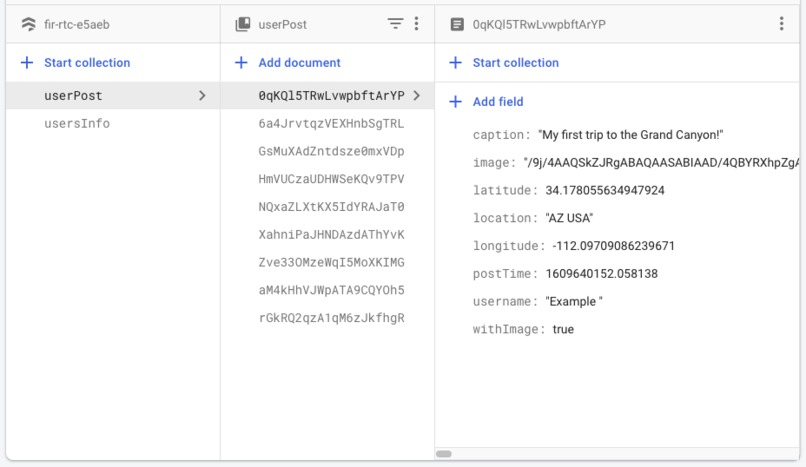

Storing user data in Google Firebase

Inspiration

With the new year coming up, people want a chance to look back on old memories, relive them, and make new ones for the year ahead. We wanted to bring people closer together and help them relive their memories by keeping all the places they went and the pictures they took in one place, and ultimately by using augmented reality to let them relive the moment.

What it does

Neomap lets users create their own accounts to store all their fun memories. Users select places on the map, add captions describing what happened (like an interactive journal), and take a picture or upload a screenshot to post and view later. When users come back to the app, they can see all their old memories and relive them by viewing them in 3D space through our augmented reality feature. Users can even like and comment on their own pictures using hand gestures!
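
To make "a post" concrete, here is one way to model a single memory as a `Codable` Swift value. This is a sketch under assumptions: the type name `MemoryPost`, its fields, and the JSON encoding are hypothetical, chosen only from the features listed above (caption, map location, photo, date). The write-up does not describe the app's actual Firebase schema.

```swift
import Foundation

// Hypothetical model of one journal entry. Field names are illustrative
// assumptions, not Neomap's actual schema.
struct MemoryPost: Codable, Equatable {
    let id: UUID
    let caption: String
    let latitude: Double
    let longitude: Double
    let placeName: String?   // e.g. filled in from reverse geocoding
    let imageURL: String?    // location of the uploaded photo, if any
    let createdAt: Date
}

// Encodes a post to JSON with ISO 8601 dates and a stable key order.
func encodePost(_ post: MemoryPost) throws -> Data {
    let encoder = JSONEncoder()
    encoder.dateEncodingStrategy = .iso8601
    encoder.outputFormatting = [.sortedKeys]
    return try encoder.encode(post)
}

// Decodes a post previously produced by encodePost(_:).
func decodePost(_ data: Data) throws -> MemoryPost {
    let decoder = JSONDecoder()
    decoder.dateDecodingStrategy = .iso8601
    return try decoder.decode(MemoryPost.self, from: data)
}

// Orders posts for a profile-style timeline, newest first.
func newestFirst(_ posts: [MemoryPost]) -> [MemoryPost] {
    posts.sorted { $0.createdAt > $1.createdAt }
}
```

Optional fields that are `nil` are simply left out of the encoded JSON. A document store such as Firestore could persist the same fields, but how Neomap actually maps them is not stated here.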

How we built it

We wrote the app mainly in Swift. We used the Radar.io API to implement our most important feature, the map: it lets users select the specific place they want to write about, and we used Radar's reverse geocoding API to turn the selected coordinates into an address. To save users' login credentials, photos, captions, and places, we used Google Cloud (Firebase). For the AR feature, we used ARKit and RealityKit, along with Apple's Vision framework and machine learning to detect hand gestures such as liking a photo.
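
The reverse geocoding step can be sketched as an HTTP request plus a small response decoder. This assumes Radar's REST endpoint `GET https://api.radar.io/v1/geocode/reverse` with a `coordinates=lat,lng` query parameter and the publishable key in the `Authorization` header; the team may instead have used Radar's iOS SDK. The response type models only `addresses[].formattedAddress`, so check field names against Radar's current documentation. The helper names `makeReverseGeocodeRequest` and `firstAddress` are hypothetical.

```swift
import Foundation
#if canImport(FoundationNetworking)
import FoundationNetworking  // URLRequest lives here on Linux
#endif

// Builds a request for Radar's reverse geocoding endpoint (endpoint, query
// parameter and header are assumptions based on Radar's public REST docs).
func makeReverseGeocodeRequest(latitude: Double, longitude: Double,
                               publishableKey: String) -> URLRequest? {
    var components = URLComponents(string: "https://api.radar.io/v1/geocode/reverse")
    components?.queryItems = [
        URLQueryItem(name: "coordinates", value: "\(latitude),\(longitude)")
    ]
    guard let url = components?.url else { return nil }
    var request = URLRequest(url: url)
    request.httpMethod = "GET"
    request.setValue(publishableKey, forHTTPHeaderField: "Authorization")
    return request
}

// Minimal slice of the response: only the fields this sketch reads.
struct ReverseGeocodeResponse: Decodable {
    struct Address: Decodable {
        let formattedAddress: String?
    }
    let addresses: [Address]
}

// Returns the first formatted address in a response body, or nil if the
// body doesn't decode or contains no addresses.
func firstAddress(from data: Data) -> String? {
    guard let response = try? JSONDecoder().decode(ReverseGeocodeResponse.self, from: data) else {
        return nil
    }
    return response.addresses.first?.formattedAddress
}
```

In an app, the request would be sent with `URLSession` when the user taps a map point, and the returned address shown next to the caption.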

Challenges we ran into

We ran into lots of errors but ultimately worked through them. The hardest part was implementing the AR feature, which is what sets our app apart from other journal apps. We also had difficulty combining the 3D AR scenes with the app's other pages. In addition, it was the first time some of us had used the MVVM (Model-View-ViewModel) design pattern, which had a learning curve at first but made maintenance and debugging much easier.
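
The MVVM split can be illustrated in a few lines. This is a framework-free sketch with hypothetical names (`Post`, `PostStore`, `ProfileViewModel`). A SwiftUI app would more typically publish state through `ObservableObject` and `@Published`; here a plain callback stands in for that so the example runs without UIKit or SwiftUI.

```swift
import Foundation

// Model: plain data with no UI knowledge.
struct Post {
    let caption: String
    let placeName: String
    let date: Date
}

// Storage abstraction, so the view model can be tested without a backend.
protocol PostStore {
    func fetchPosts() -> [Post]
}

// ViewModel: turns models into display-ready strings for the view.
final class ProfileViewModel {
    private let store: PostStore
    private let formatter: DateFormatter
    // The view observes changes through onChange.
    private(set) var rows: [String] = [] {
        didSet { onChange?(rows) }
    }
    var onChange: (([String]) -> Void)?

    init(store: PostStore) {
        self.store = store
        let formatter = DateFormatter()
        formatter.dateFormat = "yyyy-MM-dd"
        formatter.locale = Locale(identifier: "en_US_POSIX")
        formatter.timeZone = TimeZone(identifier: "UTC")
        self.formatter = formatter
    }

    // Loads posts, newest first, and formats one row per post.
    func load() {
        rows = store.fetchPosts()
            .sorted { $0.date > $1.date }
            .map { "\(formatter.string(from: $0.date)) - \($0.placeName): \($0.caption)" }
    }
}
```

Because the view model depends only on the `PostStore` protocol, it can be exercised with an in-memory stub, which is one reason the pattern tends to make debugging easier.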

Accomplishments that we're proud of

We are proud of fully implementing the Radar.io reverse geocoding API, which lets users choose exactly which place each memory is about. Creating an interactive AR feature was also really cool, since it lets users see the memory again and "relive" it. Furthermore, we made some optimizations in the AR scene that make hand pose detection much less laggy.
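
The write-up doesn't say which optimizations reduced the lag, so the following shows only one common approach, not necessarily the team's: run hand pose detection on every Nth camera frame and never start a new Vision request while the previous one is still running. `HandPoseThrottle` is a hypothetical helper.

```swift
import Foundation

// Decides whether a camera frame should be sent to hand pose detection.
// Runs detection on every `interval`-th frame and skips frames while a
// previous request is still in flight.
final class HandPoseThrottle {
    private let interval: Int
    private var frameCount = 0
    private var inFlight = false
    private let lock = NSLock()  // frames and results may arrive on different threads

    init(interval: Int) {
        precondition(interval > 0, "interval must be positive")
        self.interval = interval
    }

    // Call once per frame, e.g. from ARSessionDelegate's session(_:didUpdate:).
    // Returns true if the caller should start a detection request now.
    func shouldProcessFrame() -> Bool {
        lock.lock(); defer { lock.unlock() }
        frameCount += 1
        guard !inFlight, frameCount % interval == 0 else { return false }
        inFlight = true
        return true
    }

    // Call when the detection request finishes, whether it succeeded or failed.
    func finish() {
        lock.lock(); defer { lock.unlock() }
        inFlight = false
    }
}
```

A larger `interval` lowers the cost per second of detection at the price of slower gesture response, so the value is a trade-off to tune on device.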

What we learned

We learned how to integrate Radar.io into Swift applications, which was really cool since the API offered so many features. The reverse geocoding feature was the most important one for us, and we learned how to integrate it with our app. We also learned how to place 2D images in a 3D space, create hand gestures to interact with those images, and, overall, integrate augmented reality into the app.
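
Gestures such as "like" can be recognized from the joint positions Vision reports. On iOS, running a `VNDetectHumanHandPoseRequest` yields `VNHumanHandPoseObservation` values, and `try observation.recognizedPoint(.thumbTip)` and `.indexTip` give normalized locations with a confidence. The sketch below takes those values as plain numbers so it runs without Vision. The pinch gesture, its mapping to "like", and both thresholds are illustrative assumptions, not Neomap's actual gesture set.

```swift
import Foundation

// A fingertip in normalized image coordinates ([0, 1] on each axis),
// mirroring the location and confidence Vision reports for a hand joint.
struct FingerPoint {
    let x: Double
    let y: Double
    let confidence: Double
}

enum HandGesture: Equatable {
    case pinch    // could be mapped to "like" (hypothetical mapping)
    case open
    case unknown  // a joint is missing or below the confidence cutoff
}

// Classifies a pinch by the distance between thumb tip and index tip.
// Thresholds are illustrative defaults, not tuned values.
func classify(thumbTip: FingerPoint?, indexTip: FingerPoint?,
              pinchThreshold: Double = 0.05,
              minConfidence: Double = 0.3) -> HandGesture {
    guard let thumb = thumbTip, let index = indexTip,
          thumb.confidence >= minConfidence,
          index.confidence >= minConfidence else {
        return .unknown
    }
    let distance = hypot(thumb.x - index.x, thumb.y - index.y)
    return distance < pinchThreshold ? .pinch : .open
}
```

Checking confidence first keeps partially occluded hands from triggering a false "like".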

What's next for Neomap

The next feature for Neomap would be a friends feature, in which you could see your friends' posts and interact with them in an AR environment, using our hand pose machine learning library to like, dislike, and heart each post, making Neomap an interactive, AR-themed social media app.
