Inspiration

We started our ideation process by looking at how AI has become progressively better at mimicking human interaction, especially the voice. At the same time, we wanted it to have a physical form, because that gives the object people interact with a soul, or something they can place sentimental value on. We decided to build a machine that people with social anxiety can use to practice dating, since dating can be a daunting task for them.

What it does

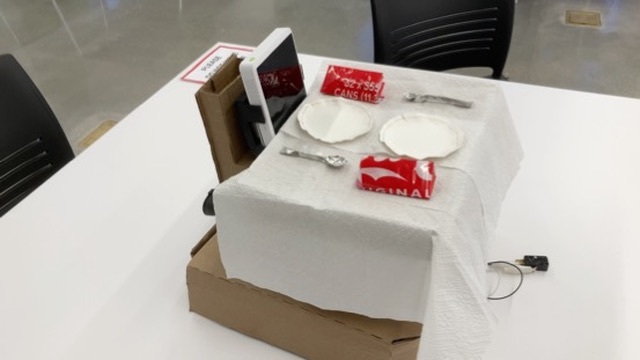

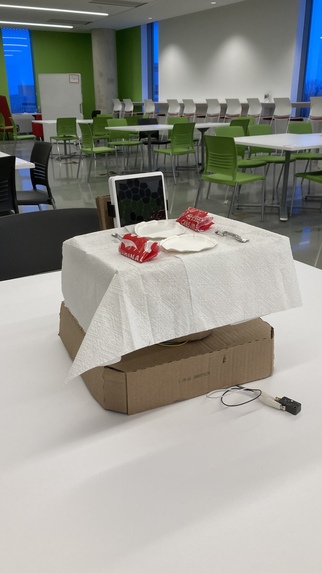

OLI scans any object of your choice and creates a unique personality. This persona is projected into a physical "dinner date" model, allowing you to chat with them in an immersive, real-life setting.

How we built it

The system can be split into three sections: voice, display, and movement.

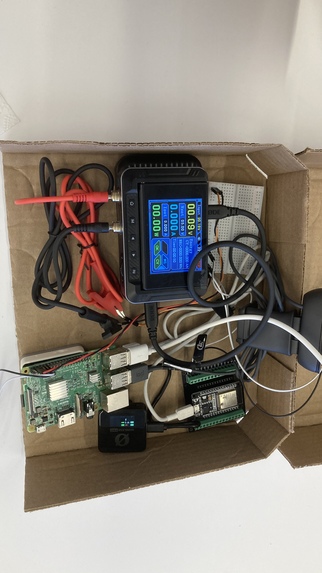

The voice and display are hosted on a Raspberry Pi 3B. We used the Gemini API to create our persona from an image taken with our Logitech camera, and ElevenLabs to generate a realistic voice. Additionally, we adapted our code to support the RODE Wireless mic system to improve the voice input, and we use an M5 Stack as a speaker for design reasons.

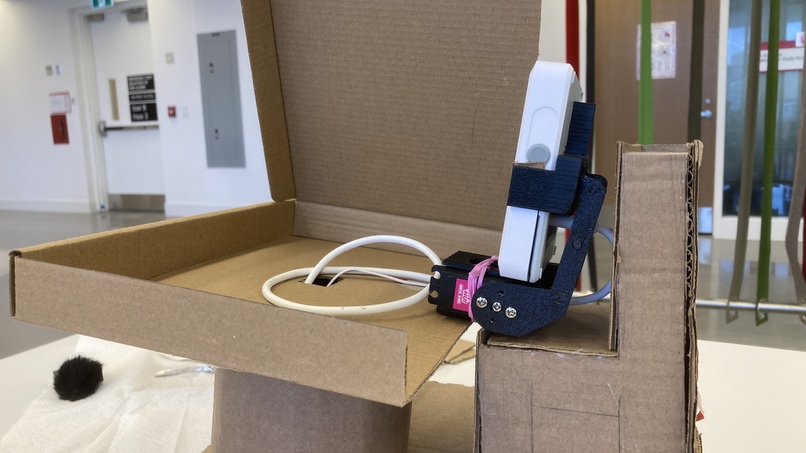

The movement system is controlled by an ESP-32, which is in turn controlled by the Raspberry Pi over UART (USB serial). The ESP-32 is connected to a servo and, optionally, an RGB backlit display.
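As a rough illustration of the Pi-to-ESP-32 link, the sketch below frames a servo angle as a newline-terminated text command and parses it back. The `SERVO <angle>` format, the 0 to 180 degree range, and both function names are assumptions made for illustration, not the protocol the project actually used.

```python
def encode_servo_command(angle: float) -> bytes:
    """Clamp the angle to 0..180 and frame it as an ASCII line (hypothetical format)."""
    clamped = max(0, min(180, round(angle)))
    return f"SERVO {clamped}\n".encode("ascii")


def decode_servo_command(line: bytes) -> int:
    """Parse a framed command back into an angle, mirroring what the receiving side would do."""
    keyword, value = line.decode("ascii").split()
    if keyword != "SERVO":
        raise ValueError(f"unexpected command: {keyword!r}")
    return int(value)
```

On the Pi, the encoded bytes could then be written to the USB serial port, for example with pyserial's `serial.Serial(port, baudrate).write(...)`; the port name and baud rate depend on the setup.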

The Raspberry Pi (and, indirectly, the ESP-32), the servo, and the SenseCap Indicator D1 are all powered from a power bank. Specifically, the Raspberry Pi and the servo are connected to a 5V portable power supply, which is in turn connected to the power bank.

The servo also has a custom-designed 3D-printed arm attached directly to the SenseCap Indicator D1, so that the screen can move dynamically, making the machine feel more lively.

Challenges we ran into

Throughout our hack, our biggest challenge was integrating our backend API with all of our hardware, e.g., routing the voice produced by ElevenLabs to our M5 module over Wi-Fi.

It was also hard to balance design cleanliness with user experience, as we tried to reduce the number of buttons a user needs to interact with, making the device simpler and more intuitive to use.

Accomplishments that we're proud of

- Delivering a fully interactive, voice-driven romantic simulation

- Creating a dynamic “interest scoring” system that evolves during conversation

- Achieving smooth synchronization between AI responses and servo movement

- Designing a 3D printed arm that allows linear motion within the time limit
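The interest scoring and servo synchronization listed above can be pictured as a running score that is nudged after each conversational turn and then mapped to a servo position. This is only a minimal sketch: the 0 to 100 range, the starting value of 50, the per-turn `delta`, and the 60 to 120 degree servo range are all assumptions, not values from the project.

```python
from dataclasses import dataclass


@dataclass
class InterestTracker:
    """Running interest score kept within 0..100 (range and start value are assumptions)."""
    score: float = 50.0

    def update(self, delta: float) -> float:
        """Apply a per-turn change (e.g. derived from the AI's reply) and clamp the result."""
        self.score = max(0.0, min(100.0, self.score + delta))
        return self.score

    def lean_angle(self, min_angle: float = 60.0, max_angle: float = 120.0) -> float:
        """Map the score linearly onto a hypothetical servo range: higher interest, larger angle."""
        return min_angle + (max_angle - min_angle) * self.score / 100.0
```

Computing the angle from the same score that drives the conversation is one simple way to keep the movement in step with the AI's responses.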

What we learned

Throughout our hack, we found ourselves growing fonder of our project, which further validates our point about giving a voice AI a physical form to interface with.

What's next for Object Love Interface

Next, we can expand support for post-conversation analysis and data summaries, and enable more physical customization options for OLI.

Built With

- c++

- elevenlabs

- esp32

- express.js

- gemini

- javascript

- m5-stack

- python

- raspberry-pi

- sense-cap
