Inspiration

Every major real-estate decision in San Francisco carries regulatory risk — open violations, unpermitted work, stalled permits, routing bottlenecks. But the data lives in fragmented city systems that require manual cross-referencing across multiple municipal portals. We're building for a specific class of professionals — investors, developers, title companies, underwriters — who run that research daily and whose decisions are worth millions. The question was: what if an agent did it for them, got smarter with each query, and showed its work in real time?

What it does

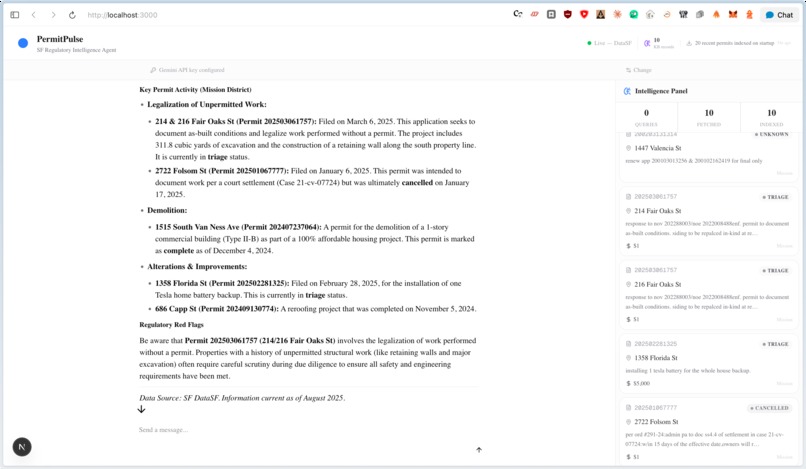

PermitPulse is an autonomous regulatory-intelligence agent for San Francisco real estate. Ask it a question — "What permits have been filed in the Mission recently?" or "Are there open violations at 811 Valencia?" — and it:

- Searches its knowledge base first for previously indexed context (powered by Senso)

- Fetches live records from DataSF if fresh data is needed — building permits, DBI complaints, Notices of Violation

- Indexes everything it fetches back into the knowledge base, so the next query on the same area starts richer

- Streams the answer progressively, with a live activity trail showing each tool invocation as it happens, and a side panel where permit and violation cards appear in real time as the agent retrieves them

The result is a self-improving regulatory intelligence loop: the product gets more useful with every query.
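The search-first loop above can be sketched in Python. This is an illustrative sketch, not PermitPulse's code. `KnowledgeBase` is a hypothetical in-memory stand-in for Senso. The rule "fetch live data only when the knowledge base returns too few hits" is an assumed freshness policy; a real one would probably also look at record age.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class KnowledgeBase:
    """Hypothetical in-memory stand-in for the Senso knowledge layer."""
    docs: dict = field(default_factory=dict)  # area (lowercased) -> list of records

    def search(self, area: str) -> list:
        return list(self.docs.get(area.lower(), []))

    def ingest(self, area: str, records: list) -> None:
        bucket = self.docs.setdefault(area.lower(), [])
        seen = {r["id"] for r in bucket}
        for r in records:
            # Skip records already indexed so repeated fetches don't duplicate context.
            if r["id"] not in seen:
                bucket.append(r)
                seen.add(r["id"])


def answer_query(area: str, kb: KnowledgeBase,
                 fetch_live: Callable[[str], list], min_hits: int = 1) -> dict:
    hits = kb.search(area)                 # 1. knowledge base first
    used_live = False
    if len(hits) < min_hits:               # 2. assumed policy: go live only when context is thin
        kb.ingest(area, fetch_live(area))  # 3. index what was fetched
        hits = kb.search(area)
        used_live = True
    return {"hits": hits, "used_live": used_live}


# Usage with a fake fetcher standing in for DataSF:
live_calls = []


def fake_fetch(area: str) -> list:
    live_calls.append(area)
    return [{"id": "P-1", "kind": "permit", "area": area}]


kb = KnowledgeBase()
first = answer_query("Mission", kb, fake_fetch)   # goes to the live source
second = answer_query("mission", kb, fake_fetch)  # answered from the knowledge base
```

The second query never touches the fetcher, which is the "next query starts richer" behavior in miniature.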

How we built it

- Agent orchestration: Railtracks, with tools defined as `@rt.function_node`, the agent as `rt.agent_node`, and the flow as `rt.Flow`. Four tools: fetch permits, fetch violations, fetch permit details, and search knowledge base.

- LLM: Google Gemini 3.1 Flash Lite Preview via litellm, with multi-round tool calling

- Knowledge layer: Senso Context OS — every DataSF result is normalized and ingested; future queries on the same area benefit from accumulated context

- Backend: FastAPI with a real-time SSE streaming endpoint (`/api/chat/stream`) that emits `step`, `permits`, `violations`, `answer`, and `done` events as the agent works, making every tool invocation visible

- Frontend: Next.js 16 + assistant-ui + AI SDK v6 in a split-pane layout: chat on the left for Q&A, and a live intelligence panel on the right that populates with permit and violation cards as the SSE stream arrives

- Deployment: DigitalOcean App Platform — separate Docker containers for backend and frontend, auto-deployed on push
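Multi-round tool calling generally follows one loop: ask the model, run whatever tools it requests, feed the results back, and stop when it replies in plain text. The Python sketch below shows that loop without Railtracks or litellm and does not reproduce either library's API. The four tool bodies, the simplified message shape, and `scripted_model` (a stub standing in for the Gemini call) are all hypothetical.

```python
import json
from typing import Callable


# Hypothetical stand-ins for the four tools; real versions would call DataSF or Senso.
def fetch_permits(area: str) -> list:
    return [{"permit_number": "P-1", "area": area}]


def fetch_violations(address: str) -> list:
    return [{"complaint_number": "C-9", "address": address}]


def fetch_permit_details(permit_number: str) -> dict:
    return {"permit_number": permit_number, "status": "filed"}


def search_knowledge_base(query: str) -> list:
    return []


TOOLS: dict = {f.__name__: f for f in
               (fetch_permits, fetch_violations, fetch_permit_details, search_knowledge_base)}


def run_agent(model: Callable[[list], dict], question: str,
              on_step: Callable[[str, dict], None] = lambda name, args: None,
              max_rounds: int = 5) -> str:
    """Loop until the model replies without tool calls (simplified message format)."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_rounds):
        reply = model(messages)
        calls = reply.get("tool_calls") or []
        if not calls:
            return reply["content"]
        messages.append({"role": "assistant", "tool_calls": calls})
        for call in calls:
            on_step(call["name"], call["arguments"])  # a natural hook for UI step events
            result = TOOLS[call["name"]](**call["arguments"])
            messages.append({"role": "tool", "name": call["name"],
                             "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_rounds")


def scripted_model(messages: list) -> dict:
    """Stub LLM: search the knowledge base, then fetch permits, then answer."""
    results = [m for m in messages if m["role"] == "tool"]
    if not results:
        return {"tool_calls": [{"name": "search_knowledge_base",
                                "arguments": {"query": "Mission permits"}}]}
    if len(results) == 1:
        return {"tool_calls": [{"name": "fetch_permits",
                                "arguments": {"area": "Mission"}}]}
    permits = json.loads(results[-1]["content"])
    return {"content": f"Found {len(permits)} permit(s) in the Mission."}


steps = []
answer = run_agent(scripted_model, "What permits were filed in the Mission?",
                   on_step=lambda name, args: steps.append(name))
```

`max_rounds` bounds the loop so a model that keeps requesting tools cannot spin forever.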

Challenges we ran into

Making the agent's work visible. The original version returned a JSON blob after the agent finished — all the interesting work was hidden behind a spinner. We rebuilt the backend to emit SSE events in real time, so users see each tool running as it happens. Getting those events to integrate cleanly with assistant-ui's text stream transport — embedding step markers that the frontend strips before rendering — required careful coordination across layers.
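On the wire, an SSE response is plain text. Each event is an `event:` line naming its type, one or more `data:` lines, and a blank line. The Python sketch below frames events of the kind listed for `/api/chat/stream`. The payload shapes are assumptions. `parse_sse` is a deliberately minimal reader for testing, not a full SSE parser, because it expects exactly one `data:` line per event.

```python
import json
from typing import Any, Iterable, Iterator, List, Tuple


def sse_event(event: str, payload: Any) -> str:
    """Frame one Server-Sent Event: an event line, a data line, then a blank line."""
    data = json.dumps(payload)  # compact JSON contains no raw newlines, so one data line suffices
    return f"event: {event}\ndata: {data}\n\n"


def stream_run(events: Iterable[Tuple[str, Any]]) -> Iterator[str]:
    """Emit each (event, payload) pair as it is produced, then a closing done event."""
    for event, payload in events:
        yield sse_event(event, payload)
    yield sse_event("done", {})


def parse_sse(raw: str) -> List[Tuple[str, Any]]:
    """Minimal reader for the frames above (one event line and one data line each)."""
    parsed = []
    for block in raw.strip().split("\n\n"):
        fields = dict(line.split(": ", 1) for line in block.splitlines())
        parsed.append((fields["event"], json.loads(fields["data"])))
    return parsed


# Hypothetical payloads for a short run:
frames = stream_run([
    ("step", {"tool": "fetch_permits", "status": "running"}),
    ("permits", [{"permit_number": "P-1"}]),
    ("answer", {"text": "Found 1 permit."}),
])
raw = "".join(frames)
```

In FastAPI, a generator like `stream_run` can be returned through `StreamingResponse(..., media_type="text/event-stream")`, so each frame reaches the browser as soon as it is yielded.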

Infinite re-render loops. assistant-ui's `useAuiState` selector triggers a re-render whenever its return value changes by reference. Returning a parsed array from the selector produced a new reference on every call, which sent React into an update loop and raised React error #185 (maximum update depth exceeded) the moment the agent responded. The fix: return the raw JSON string (a stable primitive) from the selector and parse it with `useMemo` outside the selector.
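The failure mode can be modeled in a few lines of Python. This is a simplified model, not assistant-ui's implementation. `object_is` approximates JavaScript's `Object.is`: primitives compare by value, and arrays and objects compare by identity. It ignores edge cases such as `NaN`. `count_rerenders` is a hypothetical helper that re-runs a selector against unchanged state.

```python
import json
from typing import Any, Callable


def object_is(a: Any, b: Any) -> bool:
    """Rough model of JavaScript's Object.is: primitives by value, objects by identity."""
    primitives = (str, int, float, bool, type(None))
    if isinstance(a, primitives) and type(a) is type(b):
        return a == b
    return a is b


def count_rerenders(selector: Callable[[dict], Any], state: dict, ticks: int) -> int:
    """Re-run a selector against unchanged state and count results that look 'new'."""
    rerenders = 0
    prev = selector(state)
    for _ in range(ticks):
        nxt = selector(state)
        if not object_is(prev, nxt):
            rerenders += 1  # in React, each of these would schedule another render
        prev = nxt
    return rerenders


state = {"permits_json": json.dumps([{"permit_number": "P-1"}])}


def parse_in_selector(s: dict) -> list:
    return json.loads(s["permits_json"])  # a fresh list on every call


def raw_in_selector(s: dict) -> str:
    return s["permits_json"]  # the same string every call
```

The parsed-array selector reports a change on every tick even though the data never changed, while the string selector reports none. With the string as the dependency, `useMemo` only re-parses when the JSON itself changes.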

DataSF quirks. The Socrata SODA API behaves differently across datasets — field names, filter syntax, and pagination are inconsistent. We had to handle dataset-specific differences in the fetcher layer to get clean, joinable results across permits, complaints, and violations.
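One way to contain those differences is a per-dataset config that maps each dataset's columns onto one shared record shape, plus one query builder and one pagination loop. The Python sketch below assumes that approach. The dataset IDs and column names are placeholders, not verified DataSF schemas. `$where`, `$order`, `$limit`, and `$offset` are standard SoQL query parameters.

```python
from urllib.parse import urlencode

# Placeholder config: the dataset IDs and column names below are illustrative
# assumptions, not verified DataSF schemas. Each dataset maps onto one shared shape.
DATASETS = {
    "permits": {"id": "PERMITS_DATASET_ID",
                "fields": {"id": "permit_number", "filed": "filed_date", "address": "street_name"}},
    "violations": {"id": "NOV_DATASET_ID",
                   "fields": {"id": "complaint_number", "filed": "date_filed", "address": "address"}},
}


def soda_url(kind: str, where: str, limit: int = 1000, offset: int = 0) -> str:
    """Build a SODA query URL using the SoQL $where/$order/$limit/$offset parameters."""
    cfg = DATASETS[kind]
    params = {"$where": where, "$order": f"{cfg['fields']['filed']} DESC",
              "$limit": limit, "$offset": offset}
    return f"https://data.sfgov.org/resource/{cfg['id']}.json?{urlencode(params)}"


def normalize(kind: str, row: dict) -> dict:
    """Map a dataset-specific row onto one shared shape for joining and indexing."""
    f = DATASETS[kind]["fields"]
    return {"kind": kind,
            "id": row.get(f["id"]),
            "filed": (row.get(f["filed"]) or "")[:10],  # keep the YYYY-MM-DD part of an ISO timestamp
            "address": row.get(f["address"])}


def paginate(fetch_page, page_size: int = 1000):
    """Yield rows page by page until a short (or empty) page signals the end."""
    offset = 0
    while True:
        page = fetch_page(offset, page_size)
        yield from page
        if len(page) < page_size:
            return
        offset += page_size
```

Keeping the differences in data rather than in branching code means adding a dataset is a config change, and every downstream step (joins, knowledge-base ingestion, UI cards) sees the same keys.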

Accomplishments that we're proud of

- A genuinely self-improving loop: every query expands the knowledge base, so the second question about the Mission district is answered faster and with richer context than the first

- Real-time SSE streaming that makes the agent feel alive — each tool invocation animates in as it happens, not a spinner followed by a wall of text

- The split-pane UI: chat on the left, a live data panel on the right where permit and violation cards appear as the agent fetches them — it reads like a research tool, not a chatbot

- Clean integration of all four sponsor tools — Railtracks, Senso, assistant-ui, and DigitalOcean — each doing real work in the core product loop, not just a demo mention

What we learned

Streaming changes the product feel more than almost any other single change. The same answer that feels like "wait, then text" becomes "watch the agent think" when you surface the intermediate steps. Showing work builds trust and makes an agent feel capable rather than magical.

We also learned that embedding structured data as invisible markers inside a text stream is a practical way to pass rich side-channel information — permit records, step status, violation counts — through a transport that only speaks text, without rebuilding the transport layer.
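A minimal sketch of that idea in Python follows. The marker format is a hypothetical choice: an STX control character, a JSON object, and an ETX control character. `json.dumps` escapes control characters inside strings, so a payload can never contain a raw delimiter. The splitter buffers across chunks because a marker can arrive split over two network reads. A TypeScript frontend would need the equivalent logic before handing text to the chat renderer.

```python
import json
import re

# Hypothetical marker format: STX + JSON + ETX. json.dumps escapes control
# characters inside strings, so a payload never contains a raw STX/ETX.
START, END = "\x02", "\x03"
MARKER = re.compile(START + "([^" + END + "]*)" + END)


def embed_marker(kind: str, payload) -> str:
    """Server side: wrap structured data so it can ride inside a text stream."""
    return START + json.dumps({"kind": kind, "payload": payload}) + END


class MarkerSplitter:
    """Client side: separate visible text from markers across arbitrary chunk boundaries."""

    def __init__(self) -> None:
        self._buffer = ""

    def feed(self, chunk: str):
        self._buffer += chunk
        markers = [json.loads(body) for body in MARKER.findall(self._buffer)]
        text = MARKER.sub("", self._buffer)
        cut = text.find(START)  # an unterminated marker: hold it until its END arrives
        if cut == -1:
            self._buffer = ""
            return text, markers
        self._buffer = text[cut:]
        return text[:cut], markers
```

Only complete markers are parsed and removed. Text before a partial marker is released immediately, so the visible answer keeps streaming while the side-channel data waits for its closing delimiter.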

What's next for PermitPulse

- Expand jurisdictions: The same architecture ports to any city with a Socrata-backed open data portal — Los Angeles, Chicago, New York. SF is the proof of concept for a national product.

- Proactive monitoring: Instead of only answering queries, PermitPulse should watch a saved list of addresses and alert investors when new permits or violations are filed.

- Deeper data joins: Cross-referencing permit contacts (contractors, architects) with violation history to surface pattern-of-practice risk at the entity level.

- Spatial queries: "Show me everything within 500 feet of this parcel" — visualized alongside the chat as the agent works.
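A radius query like "within 500 feet" reduces to a distance test. One way to sketch it is a haversine filter on the client, assuming each record carries latitude and longitude. The `lat`/`lon` keys and the `within_feet` helper are hypothetical. Socrata's SoQL also offers a `within_circle` function that could push the same filter to the server, avoiding downloading records only to discard them.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters
FEET_TO_M = 0.3048


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def within_feet(center: tuple, records: list, feet: float = 500) -> list:
    """Keep records whose hypothetical lat/lon keys fall inside the radius."""
    radius_m = feet * FEET_TO_M  # 500 ft is 152.4 m
    lat0, lon0 = center
    return [r for r in records if haversine_m(lat0, lon0, r["lat"], r["lon"]) <= radius_m]
```

At city scale a flat-earth approximation would also work, but haversine stays correct without tuning and costs little for a few thousand records.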

Built With

- app

- assistant-ui

- contextos

- digitalocean

- railtracks

- senso
