Inspiration

To create, we first need to acquire knowledge, and the way we do so is fundamentally the same as it was a thousand years ago. The faster and more effectively we can gain it, the more freedom we have to invent, to build, to innovate.

To do so, it is imperative to automate the monotonous, repetitive tasks that inhibit creativity and consume time we cannot recover.

Instead of leaving you to think about how to find more time, Kairos gives you more time to think: the liberty to develop the quintessential aspects of the human intellect.

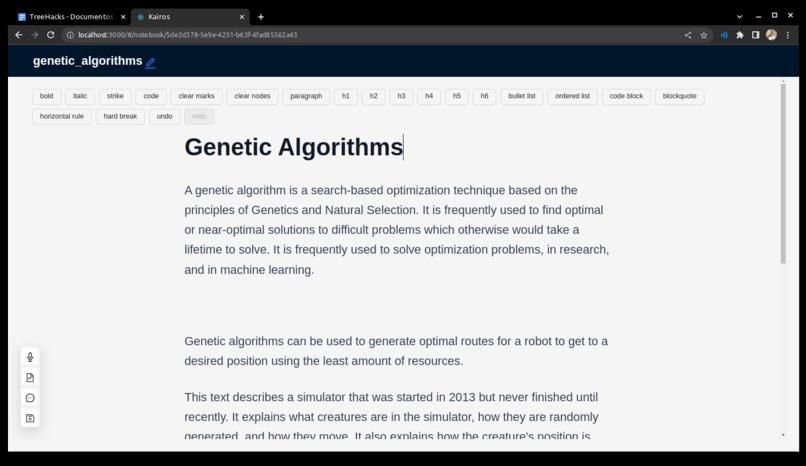

What it does

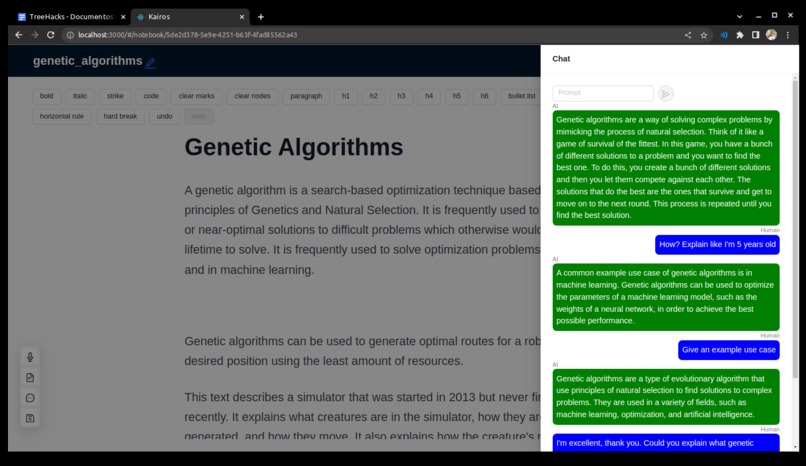

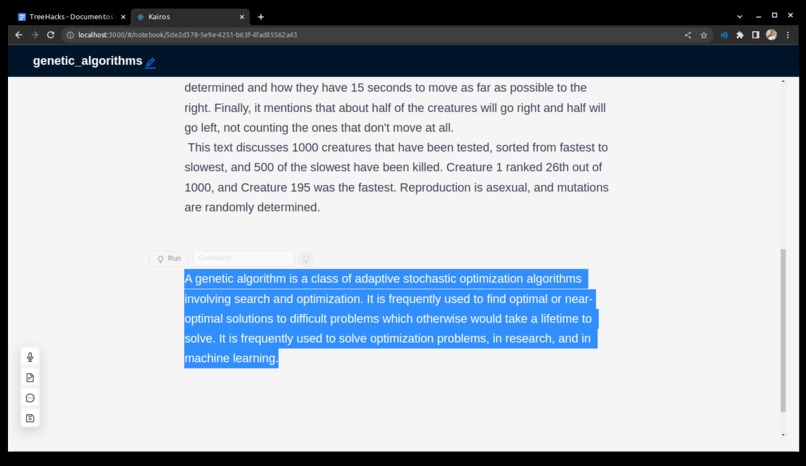

- Assisted writing: Suggests and tailors generated text to fit the context the user provides. It draws on the information and claims available in the knowledge base to produce factual, relevant text.

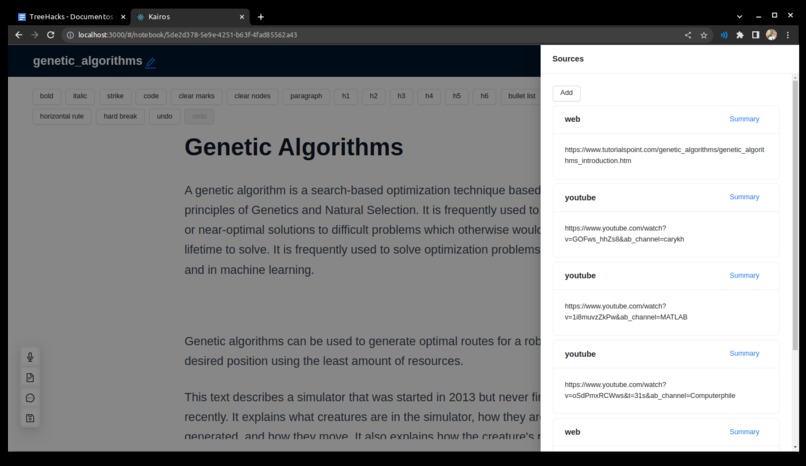

- Unlimited contextual knowledge and retrieval: The user can add as many sources as they want. Kairos encodes information in a dense vector space and retrieves data points (i.e., information, claims, facts) by computing the semantic similarity between the current query or result of interest and the existing knowledge.

- Discrete reasoning: The model is capable of step-by-step reasoning and can show its train of thought. In practice, the model is able to determine that to accomplish Task A, it also needs to do Tasks A1, A2 and A3.

- Factual generation: Because the model samples information from trusted sources, the contextual information and search capabilities help reduce “hallucination”.

- Reasoning and combining claims from different sources (i.e., papers, videos, conferences, etc.): The model is able to gather information from different sources and combine them to create new information.

- Interactive knowledge consumption: The conversational capabilities allow the user to ask follow-up questions and request further tasks, fundamentally changing the historical, one-directional learning paradigm (i.e., just reading and absorbing).

- Summaries and actionables: The model can extract the key points or actionable information from any given source.

- Brainstorming: The model is capable of looking at the current notebook’s content and the knowledge database to suggest ways to advance the writing process.

How we built it

Powered by OpenAI’s Davinci model, Kairos combines and extends several state-of-the-art technologies and platforms that, working together, give the user an innovative and effective experience.

We taught the model to interact with the world through tools such as a user-defined database, Google’s API, and Wolfram Alpha, which makes for a grounded, trustworthy experience.

With an embeddings-based knowledge database for all the user-given sources, we extracted the features and semantic meaning of the data and stored them efficiently for future retrieval and querying. We compute semantic similarity by taking the cosine distance between the query’s embedding and the embedding of each stored sample.
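The similarity computation described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the project's actual code: the function names, the toy three-dimensional vectors, and the in-memory list standing in for the knowledge database are all assumptions made for the example.

```python
import math

def cosine_distance(a, b):
    """Return 1 - cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)

def nearest_samples(query, samples, k=2):
    """Return the k (text, distance) pairs closest to the query embedding."""
    scored = [(text, cosine_distance(query, vec)) for text, vec in samples]
    scored.sort(key=lambda pair: pair[1])  # smallest distance first
    return scored[:k]

# Hypothetical toy embeddings; real ones have hundreds of dimensions
# and would come from an embedding model rather than being typed by hand.
knowledge = [
    ("claim about transformers", [0.9, 0.1, 0.0]),
    ("claim about cooking", [0.0, 0.2, 0.9]),
    ("claim about attention", [0.8, 0.3, 0.1]),
]
query_embedding = [1.0, 0.2, 0.0]
top = nearest_samples(query_embedding, knowledge, k=2)
```

A smaller distance means the stored sample points in nearly the same direction as the query, so sorting in ascending order puts the most semantically similar samples first. With many sources, a vector index would replace the linear scan used here.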

With prompt engineering, we encouraged the model to reason step by step, so that it can determine when and where to use a tool or query the knowledge database.
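One common way to set this up is a prompt that asks the model to alternate between reasoning and tool calls, paired with a small parser that runs whichever tool the model names. The sketch below shows that pattern under stated assumptions: the prompt wording, the `Action: tool[input]` format, the tool names, and the hard-coded model output are all hypothetical and are not taken from the project's prompts.

```python
import re

# Hypothetical prompt template; the actual wording used by Kairos is not known.
PROMPT_TEMPLATE = """Answer the question step by step.
You may use these tools: {tool_names}.
Use this format:
Thought: your reasoning about what to do next
Action: tool_name[tool input]
Observation: the tool's result
(repeat Thought/Action/Observation as needed)
Final Answer: the answer to the question

Question: {question}
"""

ACTION_PATTERN = re.compile(r"^Action:\s*(\w+)\[(.*)\]\s*$", re.MULTILINE)

def build_prompt(question, tools):
    """Fill the template with the question and the available tool names."""
    names = ", ".join(sorted(tools))
    return PROMPT_TEMPLATE.format(tool_names=names, question=question)

def run_action(model_output, tools):
    """Find the first Action line in the model output and run that tool."""
    match = ACTION_PATTERN.search(model_output)
    if match is None:
        return None  # the model produced no tool call
    name, tool_input = match.group(1), match.group(2)
    if name not in tools:
        return f"Unknown tool: {name}"
    return tools[name](tool_input)

# Stand-in tools; real ones would query a database or an external service.
tools = {
    "knowledge_base": lambda q: f"[stored claims matching '{q}']",
    "calculator": lambda expr: str(sum(float(t) for t in expr.split("+"))),
}

# Hand-written stand-in for what the language model might return.
fake_output = "Thought: I need to add the two numbers.\nAction: calculator[2 + 3.5]"
observation = run_action(fake_output, tools)
```

In a full loop, the observation would be appended to the prompt and sent back to the model, which then either issues another action or writes its final answer.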

Finally, all of this is built into a performant, multithreaded codebase.
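How the threading is organised is not described here, but a typical use in this kind of system is to run several slow, I/O-bound lookups at the same time. The following is a minimal sketch of that idea with a thread pool; `slow_lookup`, `gather`, and the source names are hypothetical, and the sleep merely simulates network latency.

```python
from concurrent.futures import ThreadPoolExecutor
import time

def slow_lookup(source):
    """Stand-in for an I/O-bound call such as a web search or an API request."""
    time.sleep(0.1)  # simulated latency
    return f"result from {source}"

def gather(sources, max_workers=4):
    """Query all sources concurrently; results keep the order of `sources`."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(slow_lookup, sources))

sources = ["knowledge base", "web search", "calculator"]
results = gather(sources)
```

Because the lookups spend most of their time waiting, running them in threads lets the waits overlap instead of adding up, which keeps the interface responsive while the model gathers information.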

Challenges we ran into

- Dependency on large language models: Meta very recently showed how to teach mid-sized language models to use tools, but the code has not been released yet, and replicating the results within a single hackathon would have been infeasible. We therefore still depend on large models.

- Prompt engineering: LLMs are black boxes and we cannot inspect their inner state, so we had to tweak the prompts and input formats manually.

Accomplishments that we're proud of

- Unlimited, user-provided contextual information

- Factual text generation

- Having a model interact with and gather information from the real world “autonomously”, since the model itself decides what to query and when to use the tools

What we learned

By combining multiple tools and state-of-the-art technologies, it is feasible to maximize the efficiency with which humans gather information. That information can then become the basis for creative and innovative work, which requires time that was previously unavailable.

References

- Augmented Language Models: a Survey

- Toolformer: Language Models Can Teach Themselves to Use Tools

- ReAct: Synergizing Reasoning and Acting in Language Models

What's next for Kairos

Multimodal learning (https://www.deepmind.com/blog/tackling-multiple-tasks-with-a-single-visual-language-model)

Replicating Meta's result (achieving new SOTA in several tasks with mid-sized language models)

Built With

- embeddings

- faiss

- gpt3

- langchain

- python

- pytorch

- react

- serpapi

- threading

- transformers

- typescript

- whisper

- wolfram-technologies

- zero-shot
