Inspiration

In today's digital ecosystem, users constantly exchange personal data for access to online services — often without realizing the scale or value of what they are giving away. Consent has become routine, but understanding has not.

We were driven by a central question:

If personal data fuels billion-dollar business models, why are users unaware of its value and impact?

This motivated us to build a system that brings transparency to the data economy — helping users see, quantify, and understand how their data is being collected and monetized.

What We Built

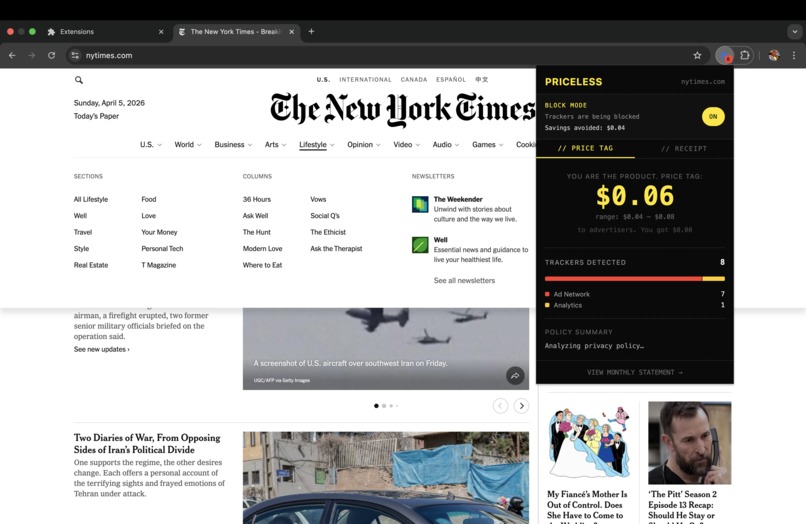

A Chrome extension with an embedded AI and machine learning engine that audits websites in real time and converts complex privacy signals into simple, actionable insights. Tracker detection and ML inference run locally in the browser; the only external call is to the Claude API for privacy policy analysis.

Core capabilities include:

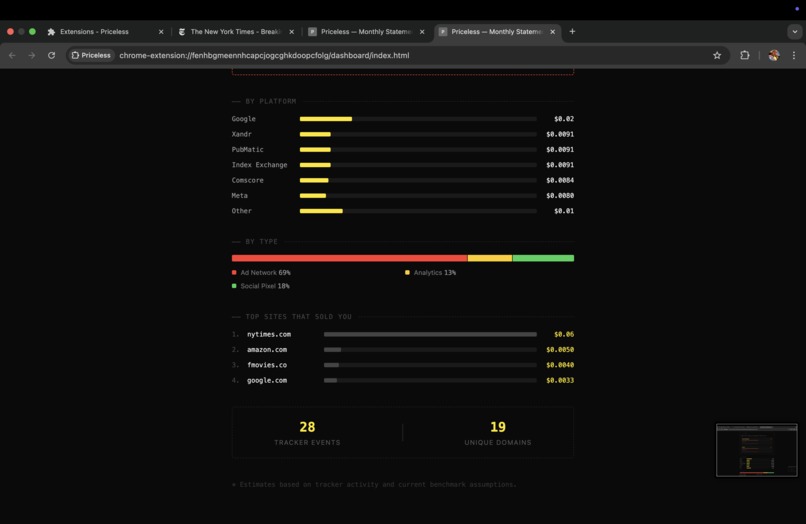

- Real-time detection of trackers, cookies, and third-party requests

- ML-based estimation of the economic value of user data, with confidence intervals

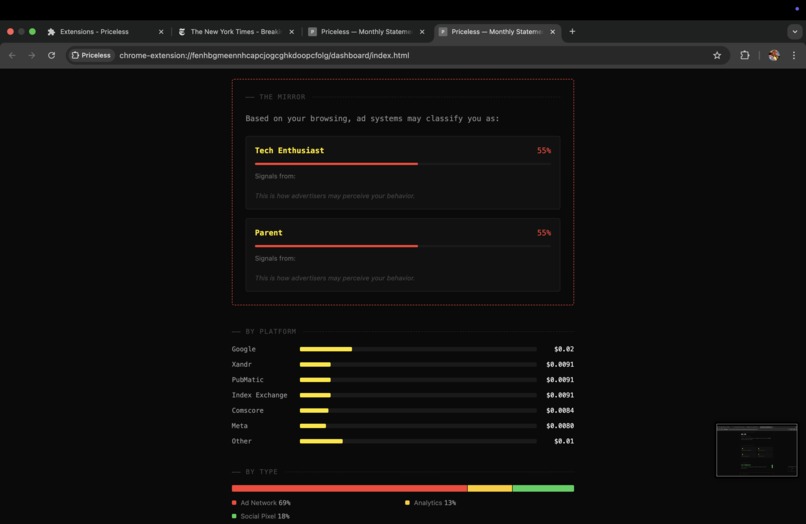

- Reconstruction of the audience profile that ad systems would build from the user's browsing history

- AI-powered summarization of privacy policies into concise, human-readable insights

- Contradiction detection — flags when a site's policy claims contradict its observed tracker behavior

- Policy change detection — alerts users when a privacy policy is quietly updated

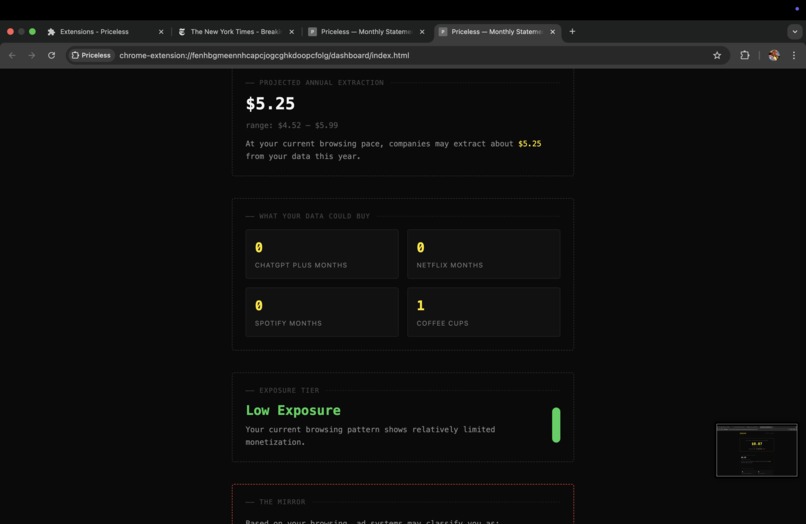

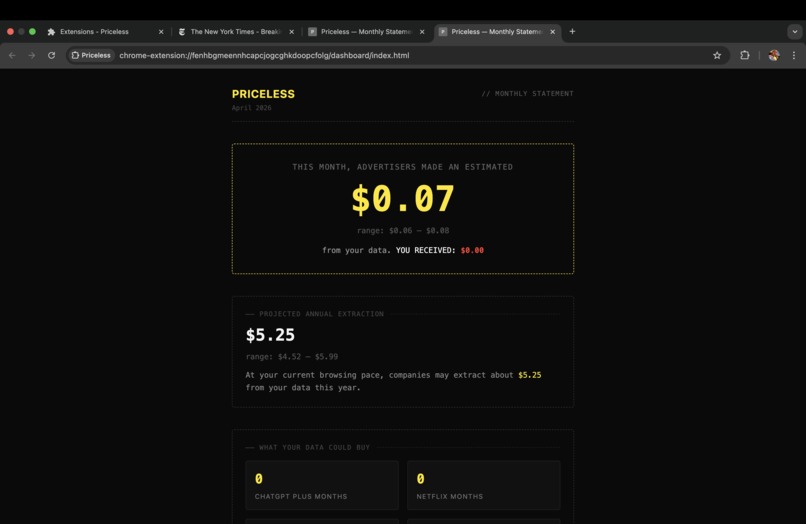

- A dashboard that aggregates and visualizes data exposure across the month

We model the estimated value of user data per tracker event as:

$$ V = \sum_{i=1}^{n} (w_i \cdot d_i) $$

where:

- $d_i$ represents detected signals (page category, tracker density, device type, geo signal, time of day)

- $w_i$ represents weights learned from IAB CPM benchmarks and WhoTracksMe tracker data
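As a worked illustration of the weighted sum above, the sketch below computes $V$ for a single tracker event. The numeric signal encodings and the weights are hypothetical placeholders chosen for readability; they are not the learned values, and the trained network described later is not a plain linear model.

```python
# Hypothetical encodings d_i for one tracker event (illustrative values only).
signals = {
    "page_category": 0.8,
    "tracker_density": 0.5,
    "device_type": 1.0,
    "geo_signal": 0.6,
    "time_of_day": 0.3,
}

# Hypothetical weights w_i; in the project these are learned, not hand-set.
weights = {
    "page_category": 0.004,
    "tracker_density": 0.002,
    "device_type": 0.001,
    "geo_signal": 0.003,
    "time_of_day": 0.0005,
}


def estimate_value(signals: dict[str, float], weights: dict[str, float]) -> float:
    """Return V = sum_i w_i * d_i. Every signal must have a matching weight."""
    return sum(weights[name] * value for name, value in signals.items())


value = estimate_value(signals, weights)
print(f"Estimated value for this event: ${value:.5f}")
```

With these placeholder numbers the terms are 0.0032 + 0.001 + 0.001 + 0.0018 + 0.00015, so the event is valued at about $0.00715.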

How We Built It

The system is self-contained within the Chrome extension, with no separate server or backend. All ML inference and storage happen on-device; the only outbound call is to the Claude API for privacy policy analysis.

Browser Extension Layer (Chrome MV3)

- Content scripts detect third-party tracker requests using `PerformanceObserver` and resource timing APIs

- The background service worker orchestrates all inference, storage, and API calls

- `declarativeNetRequest` powers the block mode with dynamic rule injection
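The third-party check in the first bullet can be sketched as follows. The extension itself runs in JavaScript, where each resource timing entry exposes the request URL; this Python version only illustrates the classification step. The helper names `site_key` and `is_third_party` are hypothetical, and comparing the last two hostname labels is a simplification we assume here for brevity.

```python
from urllib.parse import urlsplit


def site_key(hostname: str) -> str:
    """Approximate the registrable domain as the last two hostname labels.

    Simplification: this is wrong for suffixes such as co.uk; a real check
    would consult the Public Suffix List.
    """
    labels = hostname.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def is_third_party(page_url: str, request_url: str) -> bool:
    """True when the request goes to a different site than the page."""
    page_host = urlsplit(page_url).hostname or ""
    request_host = urlsplit(request_url).hostname or ""
    return site_key(page_host) != site_key(request_host)


page = "https://news.example.com/article"
print(is_third_party(page, "https://cdn.example.com/app.js"))
print(is_third_party(page, "https://tracker.adnetwork.net/pixel.gif"))
```

The first call compares `example.com` with `example.com` and is treated as first-party; the second compares `example.com` with `adnetwork.net` and is flagged.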

Machine Learning Layer (ONNX Runtime Web — runs in the service worker)

Valuation model:

- PyTorch regression network: `17 features → 64 → 32 → 2 outputs`

- Trained on WhoTracksMe tracker density data and IAB CPM benchmarks

- Outputs a low/mid/high confidence interval per tracker event

Mirror model:

- PyTorch multi-label classifier: `URL → BoW → LSA (64-dim) → 128 → 64 → 8 sigmoid outputs`

- Trained on 1.27M URL samples from the Curlie dataset

- Reconstructs which of 8 IAB audience segments an ad system would assign to the user
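The two ends of the mirror model pipeline can be sketched like this: turning a URL into a bag of words, and turning sigmoid outputs into a multi-label segment assignment. The LSA projection and the dense layers in between are omitted. The tokenization rule, the segment names, the logits, and the thresholds are all hypothetical and only illustrate the shape of the computation.

```python
import math
import re
from collections import Counter


def url_to_bow(url: str) -> Counter:
    """Split a URL into lowercase alphabetic tokens and count them."""
    return Counter(re.findall(r"[a-z]+", url.lower()))


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def assign_segments(names, logits, thresholds):
    """Keep every segment whose probability clears its own threshold."""
    return [
        name
        for name, logit, threshold in zip(names, logits, thresholds)
        if sigmoid(logit) >= threshold
    ]


bow = url_to_bow("https://www.example.com/sports/football-scores")
print(bow["sports"], bow["football"])

# Hypothetical segment names and model outputs, shortened to three classes.
segments = assign_segments(
    names=["sports", "finance", "travel"],
    logits=[2.0, -1.0, 0.2],
    thresholds=[0.5, 0.5, 0.6],
)
print(segments)
```

Because each class is thresholded independently, a URL can land in several segments at once or in none, which is what distinguishes a multi-label classifier from a softmax over mutually exclusive classes.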

Both models are exported to ONNX and run entirely via WASM inside the service worker — no GPU, no server.

AI Layer (Claude API)

- Privacy policy text is fetched, hashed with SHA-256, and analyzed by Claude on first visit or when the policy changes

- Structured JSON output includes:

  - Plain-English summary

  - Boolean policy claims

  - Consent dark pattern score

  - Change summary

- All results are cached in `chrome.storage.local`, so Claude is only called when necessary
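The hash-then-cache logic described above can be sketched as follows. A plain dictionary stands in for `chrome.storage.local`, the `analysis` value stands in for whatever the language model returns, and the function names are hypothetical.

```python
import hashlib


def policy_fingerprint(policy_text: str) -> str:
    """Return the SHA-256 hex digest of the policy text."""
    return hashlib.sha256(policy_text.encode("utf-8")).hexdigest()


def needs_analysis(cache: dict[str, dict], domain: str, policy_text: str) -> bool:
    """True on first visit, or when the stored hash differs from the current one."""
    cached = cache.get(domain)
    return cached is None or cached["hash"] != policy_fingerprint(policy_text)


def store_result(cache: dict[str, dict], domain: str, policy_text: str, analysis: dict) -> None:
    """Save the analysis alongside the hash of the text it was computed from."""
    cache[domain] = {"hash": policy_fingerprint(policy_text), "analysis": analysis}


cache: dict[str, dict] = {}
text_v1 = "We never sell your data."
print(needs_analysis(cache, "example.com", text_v1))   # first visit
store_result(cache, "example.com", text_v1, {"summary": "placeholder"})
print(needs_analysis(cache, "example.com", text_v1))   # unchanged policy
print(needs_analysis(cache, "example.com", text_v1 + " Except to partners."))  # edited policy
```

Comparing digests rather than full text keeps the stored record small and makes a policy edit of any size show up as a mismatch.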

Processing pipeline per page visit:

- Content script detects tracker requests and scores the cookie consent banner for dark patterns

- Service worker runs the ONNX valuation model to assign a dollar value with confidence interval

- Service worker fetches and hashes the site's privacy policy; calls Claude if the hash has changed

- Contradiction engine cross-references policy claims against observed tracker categories

- All events are stored locally; dashboard aggregates them into the monthly statement

- Mirror model runs against the full browsing history to reconstruct the user's ad profile
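The contradiction step in the pipeline above can be sketched as a lookup from policy claims to the tracker categories that would contradict them. The claim keys, the category names, and the mapping itself are hypothetical; the write-up does not specify the actual rules.

```python
# Hypothetical mapping: boolean policy claim -> tracker categories that contradict it.
CONTRADICTING_CATEGORIES = {
    "no_third_party_sharing": {"advertising", "data_broker"},
    "no_cross_site_tracking": {"advertising", "social_media"},
}


def find_contradictions(policy_claims: dict[str, bool], observed_categories: set[str]) -> list[dict]:
    """Flag each claim the policy makes that observed tracker categories contradict."""
    findings = []
    for claim, asserted in policy_claims.items():
        if not asserted:
            continue  # the policy does not make this claim, so nothing to contradict
        conflicts = CONTRADICTING_CATEGORIES.get(claim, set()) & observed_categories
        if conflicts:
            findings.append({"claim": claim, "observed": sorted(conflicts)})
    return findings


claims = {"no_third_party_sharing": True, "no_cross_site_tracking": False}
observed = {"advertising", "analytics"}
print(find_contradictions(claims, observed))
```

Here only the first claim is asserted by the policy, and the observed `advertising` category conflicts with it, so a single finding is reported.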

What We Learned

- Running ONNX models inside a Chrome MV3 service worker requires specific WASM configuration (`wasm-unsafe-eval` CSP, single-threaded execution, explicit WASM path resolution)

- Privacy-related data is highly unstructured; preprocessing and feature engineering matter more than model complexity

- Combining language models with local ML creates a strong hybrid system — Claude handles open-ended text understanding, ONNX handles low-latency structured inference

- Users respond more strongly to quantitative, dollar-denominated insights than to abstract privacy warnings

Challenges We Faced

- Chrome MV3's Content Security Policy blocks WebAssembly by default, which required adding `wasm-unsafe-eval` and configuring ONNX Runtime Web for single-threaded WASM execution

- Lack of labeled ground-truth datasets for data valuation required building a training set from WhoTracksMe tracker density statistics combined with IAB CPM benchmarks

- Class imbalance in the mirror training dataset (some audience segments 30× more common than others) required weighted loss functions and per-class confidence thresholds

- Privacy policy formats vary wildly across sites, making reliable text extraction and consistent LLM output structure a significant engineering challenge
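One common way to handle the class imbalance mentioned above is to scale the positive term of the binary cross-entropy by the ratio of negatives to positives for each class, the same convention used by the `pos_weight` argument of PyTorch's `BCEWithLogitsLoss`. The sketch below shows that idea in plain Python. Whether the project used this exact weighting is an assumption, and the label counts are made up.

```python
import math


def positive_weights(label_counts: list[int], total_samples: int) -> list[float]:
    """Per-class weight = negatives / positives, so rare segments count more."""
    return [(total_samples - count) / count for count in label_counts]


def weighted_bce(probabilities, targets, pos_weights) -> float:
    """Mean binary cross-entropy over classes, with positive terms scaled per class.

    Probabilities must lie strictly between 0 and 1.
    """
    losses = [
        -(w * y * math.log(p) + (1 - y) * math.log(1 - p))
        for p, y, w in zip(probabilities, targets, pos_weights)
    ]
    return sum(losses) / len(losses)


# Made-up counts: one segment is 30x more common than the other.
class_weights = positive_weights(label_counts=[300, 10], total_samples=1000)
print(class_weights)
print(weighted_bce([0.5, 0.5], [1, 1], [1.0, 3.0]))
```

With these counts the rare segment gets a weight of 99 against roughly 2.33 for the common one, so a missed positive on the rare class costs far more during training.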

Built With

- chrome-extension-mv3

- chrome.storage

- claude-api

- declarativenetrequest

- iab

- javascript

- onnx-runtime-web

- python

- pytorch

- react

- tailwind-css

- vite

- web-crypto-api

- webassembly

- whotracksme
