Inspiration

As college students who have published research and have a strong interest in academics, we love reading and learning from research papers. However, scientific jargon and dense text make even fascinating papers inaccessible to non-experts. In our eyes, the most important outcome of reading a paper is to walk away with an intuitive, comprehensive understanding of its key ideas and a greater curiosity to learn more, not to feel unmotivated and unprepared to explore cutting-edge fields. Taking inspiration from the popular 3Blue1Brown channel, we created ReelResearch to synthesize research papers and visualize them intuitively in interactive animations tailored to the user's skill level, so you can gain insight whether you are a graduate student refining your understanding or a high schooler curious about the field.

What it does

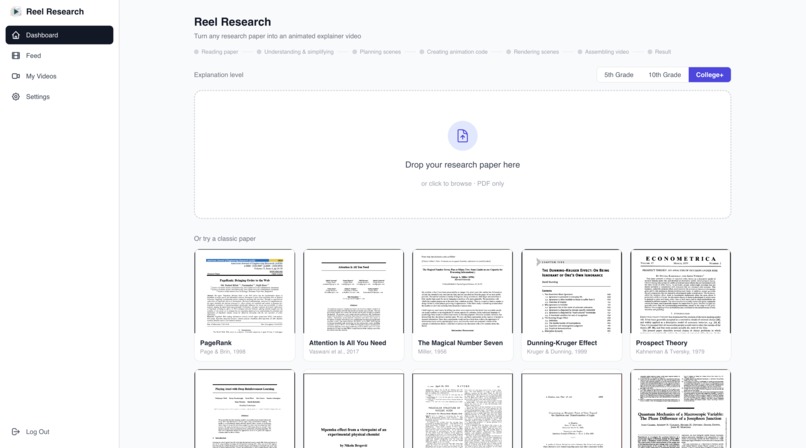

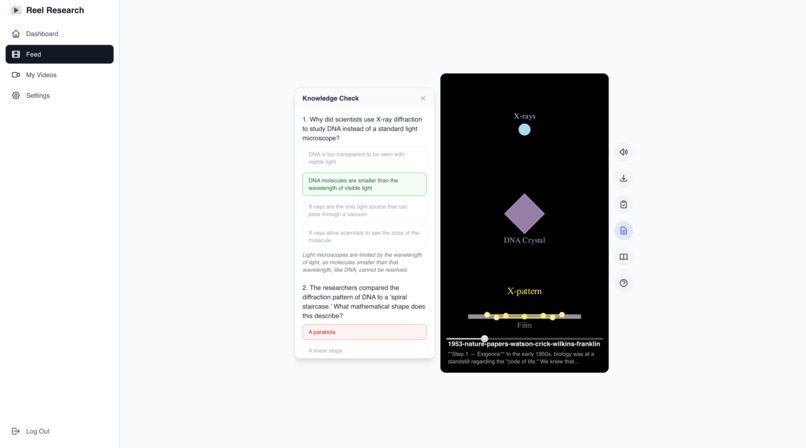

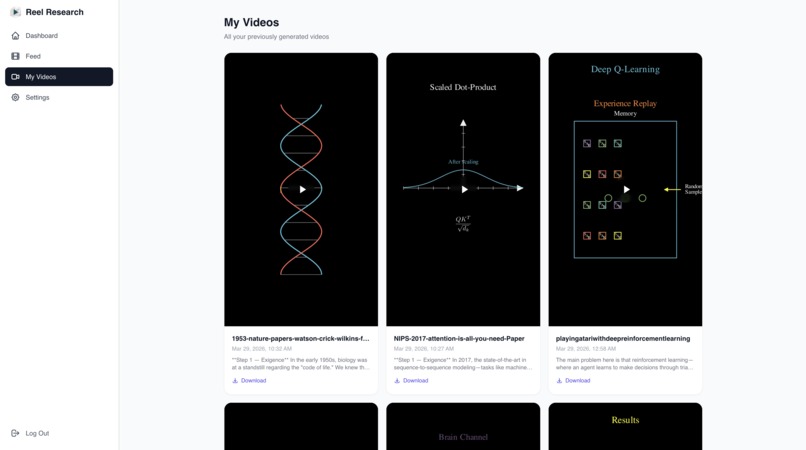

ReelResearch lets a user upload a research paper, select the grade level of the explanatory video (5th grade, 10th grade, or College+), and generate a 1-2 minute video that pairs a narrator with visual animations (graphs, diagrams, data visualizations, and motion graphics) designed to give simple intuition for complicated topics. In addition to generating video and audio, ReelResearch offers a quiz about the video, a knowledge summary of the video, a list of similar papers to read, and a chatbot that answers follow-up questions.

How we built it

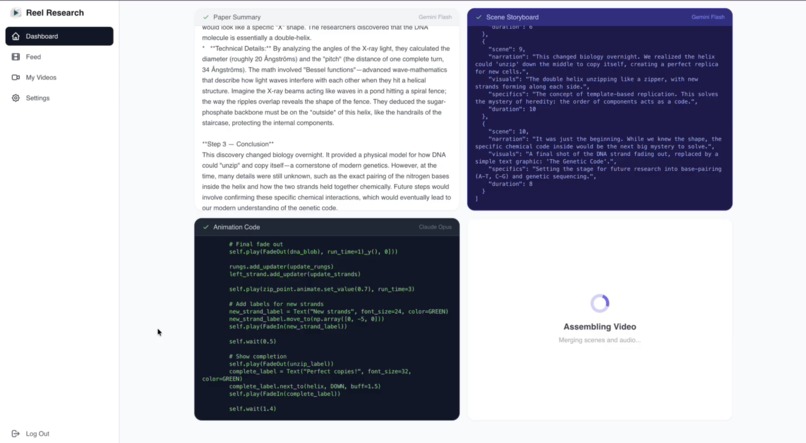

The frontend is built with React and the backend with Django. The pipeline uses three AI models: Gemini for summarization and narration scripting, Claude Opus for generating animation code, and ElevenLabs for text-to-speech. The animation is rendered server-side and merged with the audio via FFmpeg. Clerk handles authentication.
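As a rough illustration of the final merge step, the sketch below builds an FFmpeg command that combines a rendered animation with a narration track. The function names and file paths are hypothetical, and the choice to copy the video stream and encode the audio as AAC is an assumption, not necessarily what ReelResearch does.

```python
import subprocess
from typing import List


def build_mux_command(video_path: str, audio_path: str, output_path: str) -> List[str]:
    """Build an ffmpeg argument list that muxes one video and one audio file.

    Hypothetical helper: the flag choices here are assumptions for illustration.
    """
    return [
        "ffmpeg",
        "-y",                # overwrite the output file if it already exists
        "-i", video_path,    # input 0: the rendered animation
        "-i", audio_path,    # input 1: the text-to-speech narration
        "-map", "0:v:0",     # take the first video stream from input 0
        "-map", "1:a:0",     # take the first audio stream from input 1
        "-c:v", "copy",      # keep the video stream as-is (no re-encode)
        "-c:a", "aac",       # encode the narration as AAC
        "-shortest",         # stop when the shorter of the two streams ends
        output_path,
    ]


def merge_audio_video(video_path: str, audio_path: str, output_path: str) -> None:
    """Run ffmpeg; raises CalledProcessError if ffmpeg exits with an error."""
    subprocess.run(build_mux_command(video_path, audio_path, output_path), check=True)
```

Keeping the command construction separate from the `subprocess.run` call makes the arguments easy to inspect or log without FFmpeg installed.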

Challenges we faced

Challenge 1: The narrator’s speech often wasn’t aligned with a relevant animation. To fix this, we designed our program to produce a storyboard that specifies both the animations and the words to be spoken within concrete time stamps.

Challenge 2: The animations often produced overlapping shapes and misaligned objects, among other visual bugs. We fixed this by standardizing both how the AI models produce animation instructions and how those instructions are implemented.

Challenge 3: Our model struggled to elaborate on the key concepts in a paper. It focused on presenting the problem and its solution, missing the in-between steps that explain precisely why the solution works and how the scientists discovered it. By adjusting the models to identify and summarize the key "aha!" moments that make the research novel, we enabled the animations to build an intuitive understanding of the mechanisms underlying the solution.
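The storyboard idea from Challenge 1 can be sketched as a small data structure that ties each stretch of narration to an animation and a time window. The `Scene` fields and the `validate_storyboard` helper below are hypothetical names chosen for illustration; the actual storyboard format used in ReelResearch is not described here.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Scene:
    """One storyboard entry (hypothetical schema)."""
    start: float      # seconds from the beginning of the video
    end: float        # seconds from the beginning of the video
    narration: str    # words the narrator speaks during this window
    animation: str    # description of what is drawn during this window


def validate_storyboard(scenes: List[Scene], total_duration: float) -> List[str]:
    """Return a list of timeline problems; an empty list means the timeline is consistent."""
    problems = []
    cursor = 0.0  # where the next scene is expected to start
    for i, scene in enumerate(scenes):
        if scene.end <= scene.start:
            problems.append(f"scene {i}: end must be after start")
        if abs(scene.start - cursor) > 1e-6:
            kind = "overlaps previous scene" if scene.start < cursor else "leaves a gap"
            problems.append(f"scene {i}: {kind}")
        cursor = max(cursor, scene.end)
    if cursor > total_duration:
        problems.append("storyboard runs past total duration")
    return problems
```

For example, a scene that starts before the previous one has finished is reported as an overlap, which is the kind of speech and animation mismatch the storyboard is meant to rule out.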

What we learned

This hackathon was the first for three of our four team members, so for many of us it was the first time designing a full-stack application and using technologies like React and Postgres. It was also the biggest project three of us have ever built, so we learned how to work in a large Python codebase. In addition, we learned how to engineer prompts for complex code and script requests and how to use the APIs for AI models like Claude and Gemini.

Built With

- api

- claude/gemini

- clerk

- css

- django

- elevenlabs

- ffmpeg

- postgresql

- python

- react-native
