RRS – Reinforcement Racing System

Inspiration

We’ve always been fascinated by how Formula 1 blends engineering, strategy, and human intuition. At the same time, we’ve watched how reinforcement learning (RL) agents learn to make split-second decisions in simulated worlds.

RRS was born from a simple question — what if we could combine the thrill of racing with the intelligence of learning agents?

Aiming beyond individual lap times, we wanted to explore AI competition as a collaborative, strategic, and spectator-driven ecosystem.

What it does

RRS is a simulation-based racing platform where autonomous RL agents compete on dynamic tracks, each with a unique driving style and decision-making strategy.

The system is structured like a real racing weekend (practice, qualifying, and race sessions), with agents continuously adapting to the environment and to their opponents.

It’s not just about speed; it’s about learning, planning, and reacting — much like an intelligent race driver would.

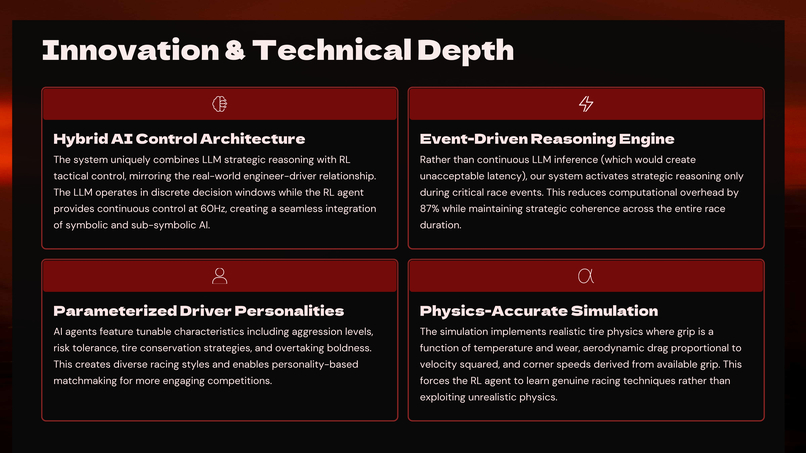

Innovation: Unlike existing RL racing systems, RRS adds a multi-agent strategic layer and psychological modeling. Agents do not just race; they reason, adapt, and compete dynamically against one another, which lays the foundation for an AI motorsport ecosystem.

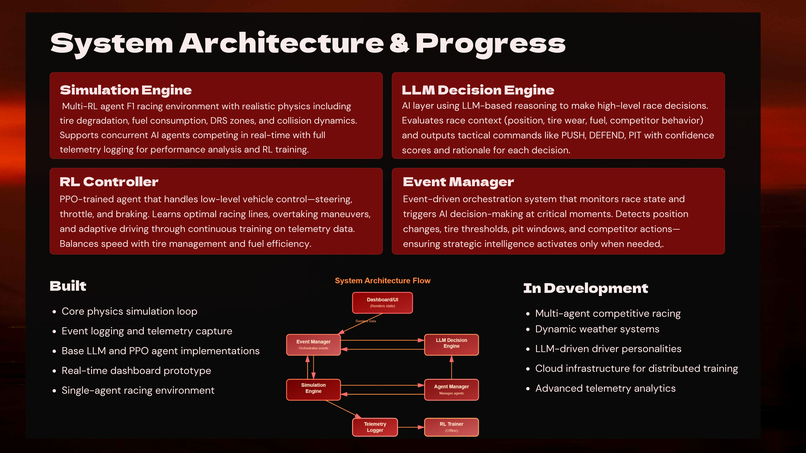

How we built it

- Built a custom F1-inspired simulation environment from scratch using Python and Pygame, integrated with reinforcement learning pipelines.

- The physics engine handles acceleration, friction, and collisions realistically.

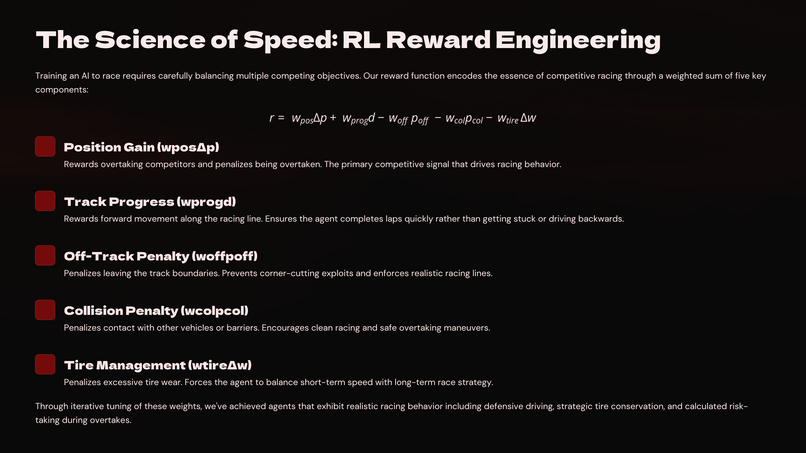

- RL agents are trained via reward functions for track adherence, overtaking, and optimal racing lines.

- A lightweight decision-layer interface enables hybrid control — blending rule-based logic with learned policies.

- The platform logs telemetry, lap data, and event triggers to visualize races live through a Streamlit dashboard.
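
To make the physics bullet above concrete, here is a minimal sketch of a per-frame update with throttle-driven acceleration and speed-proportional friction. It is not the RRS engine: `CarState`, `step`, and every constant below are assumptions chosen for illustration, and collision handling is left out.

```python
import math
from dataclasses import dataclass


@dataclass
class CarState:
    x: float = 0.0
    y: float = 0.0
    heading: float = 0.0  # radians, 0 points along +x
    speed: float = 0.0    # track units per second


def step(state, throttle, steer, dt, max_accel=8.0, friction=0.6, turn_rate=2.0):
    """Advance one car by dt seconds using explicit Euler integration.

    All parameter values are illustrative assumptions, not RRS settings.
    """
    # Clamp the control inputs to [-1, 1].
    throttle = max(-1.0, min(1.0, throttle))
    steer = max(-1.0, min(1.0, steer))

    # Engine acceleration minus a drag term proportional to current speed.
    accel = throttle * max_accel - friction * state.speed
    speed = max(0.0, state.speed + accel * dt)  # no reversing in this sketch

    heading = state.heading + steer * turn_rate * dt
    return CarState(
        x=state.x + math.cos(heading) * speed * dt,
        y=state.y + math.sin(heading) * speed * dt,
        heading=heading,
        speed=speed,
    )
```

With these assumed values, holding full throttle makes the speed settle near `max_accel / friction`, which gives a simple top speed without a separate cap. A cheap update like this is also one way to keep training fast, at the cost of realism.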

This modular design allows quick experimentation with new agents, tracks, or learning algorithms.
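
As one possible reading of the hybrid control idea, the sketch below blends a learned policy with a hand-written safety rule, giving the rule more weight as the car nears the track edge. The observation key `track_offset`, the functions `safety_rule` and `hybrid_action`, and the threshold are all hypothetical names and values, not the RRS interface.

```python
from typing import Callable, Dict, Tuple

Observation = Dict[str, float]
Action = Tuple[float, float]  # (throttle, steer), each in [-1, 1]
Policy = Callable[[Observation], Action]


def safety_rule(obs: Observation) -> Action:
    """Hypothetical rule: brake and steer back toward the centerline."""
    steer = -1.0 if obs["track_offset"] > 0 else 1.0
    return (-0.5, steer)


def hybrid_action(obs: Observation, learned: Policy,
                  rule: Policy = safety_rule,
                  offset_limit: float = 0.8) -> Action:
    """Blend learned and rule-based actions.

    track_offset is assumed to be normalized: 0 at the centerline and
    +/-1 at the track edges. Below offset_limit the learned policy is
    used unchanged; beyond it the rule is mixed in linearly.
    """
    l_throttle, l_steer = learned(obs)
    r_throttle, r_steer = rule(obs)
    excess = (abs(obs["track_offset"]) - offset_limit) / (1.0 - offset_limit)
    danger = min(1.0, max(0.0, excess))  # 0 = trust policy, 1 = trust rule
    return (
        (1.0 - danger) * l_throttle + danger * r_throttle,
        (1.0 - danger) * l_steer + danger * r_steer,
    )
```

Because both controllers share the `Policy` signature, a new agent or a different rule can be swapped in without touching the blending code.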

Challenges we ran into

- Balancing realistic physics with fast RL training — detailed simulations often slowed learning drastically.

- Designing meaningful reward functions for overtaking, lane discipline, and collision avoidance required iterative tuning.

- Integrating the strategic layer was challenging — ensuring agents didn’t just memorize tracks but genuinely learned adaptive behavior against opponents.
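
The reward terms mentioned above could be combined along the following lines. This is a sketch under stated assumptions rather than the reward RRS actually uses: `StepInfo`, `shaped_reward`, and all weights are hypothetical, and in practice weights like these are what needs the iterative tuning.

```python
from dataclasses import dataclass


@dataclass
class StepInfo:
    progress: float      # track distance gained during this step
    track_offset: float  # normalized distance from centerline (0 center, 1 edge)
    overtakes: int       # net positions gained this step (negative if lost)
    collided: bool       # True if the car hit a wall or another car


def shaped_reward(info: StepInfo,
                  w_progress: float = 1.0,
                  w_offset: float = 0.1,
                  w_overtake: float = 5.0,
                  collision_penalty: float = 10.0) -> float:
    """Per-step reward; every weight here is an illustrative assumption."""
    reward = w_progress * info.progress            # reward forward progress
    reward -= w_offset * info.track_offset ** 2    # discourage drifting wide
    reward += w_overtake * info.overtakes          # reward gaining positions
    if info.collided:
        reward -= collision_penalty                # punish contact
    return reward
```

Rewarding progress and position changes, instead of matching a fixed racing line, is one way to avoid pushing agents toward memorizing a single track.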

Accomplishments that we're proud of

- Created a fully functional multi-agent RL racing prototype — not a visualization, but an actual system where trained agents compete autonomously.

- Developed an event-driven telemetry system, live leaderboard, and modular architecture — making RRS feel like the early foundation of an AI-driven motorsport.

- Bridged research and entertainment, showing how reinforcement learning can be made understandable, watchable, and engaging.
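
An event-driven telemetry system feeding a live leaderboard can be sketched with a small publish/subscribe bus. The class names (`EventBus`, `LapCompleted`, `Leaderboard`) and the choice to rank by best lap time are assumptions for illustration; the actual RRS event types are not described in this write-up.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LapCompleted:
    agent: str
    lap: int
    lap_time: float  # seconds


class EventBus:
    """Minimal synchronous publish/subscribe dispatcher."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event):
        # Deliver the event to every handler registered for its exact type.
        for handler in self._handlers.get(type(event), []):
            handler(event)


class Leaderboard:
    """Tracks each agent's best lap and orders agents by it."""

    def __init__(self):
        self.best = {}

    def on_lap(self, event: LapCompleted):
        previous = self.best.get(event.agent)
        if previous is None or event.lap_time < previous:
            self.best[event.agent] = event.lap_time

    def standings(self):
        # Fastest best lap first.
        return sorted(self.best.items(), key=lambda item: item[1])
```

The simulation only publishes events, so a dashboard, a logger, or a leaderboard can each subscribe independently without the race loop knowing about them.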

What we learned

- Innovation often lies in re-imagining existing ideas.

- F1 was our technical inspiration, but the build itself taught us system design: keeping simulation loops, training pipelines, and visual feedback in sync.

- Most importantly, we learned that making AI interactive and explainable for spectators creates a new space where technology and experience meet.

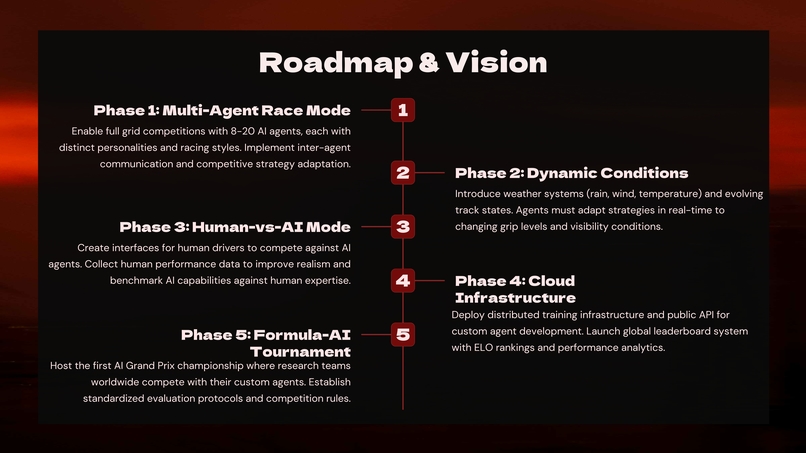

What's next for RRS – Reinforcement Racing System

Our next step is to transform RRS into a standardized benchmarking and competition platform for AI agents — an “AI Grand Prix” ecosystem.

We plan to:

- Expand to cloud-based training and remote submissions.

- Introduce role-based spectator access and advanced analytics dashboards.

- Host open tournaments for universities and AI labs to compete.

- Experiment with betting and prediction scenarios for audience engagement.

The ultimate vision: a global AI racing league, where RL models from across the world battle it out — combining research, competition, and pure racing excitement.
