Inspiration

Humans often misread sarcasm. Does AI do the same? It turns out that while the latest models have gotten better at catching sarcastic phrasing, they still have some way to go.

We told ChatGPT that "the E in DMV stands for efficiency," hinting that the DMV is notoriously inefficient. However, ChatGPT completely missed the joke, stating, "No, DMV does not have an E." While frontier models have made strides in natural language, they still stumble on sarcastic phrasing, a major blind spot for agentic reliability and robust AI safety.

Evidently, AI has room to grow in sarcasm detection, a point on which multiple research papers through 2026 agree. A Nature paper published by V. Grover in 2026 reported that NLP models detect sarcasm significantly better when emojis and text are combined, with the emoji serving as a multimodal anchor for tone. If we can build a model that accurately predicts an emoji from a piece of text, we can automate the curation of a highly effective training dataset for LLMs that are weak at sarcasm.

What it does

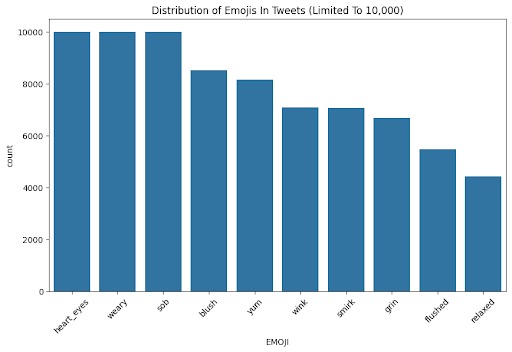

We use the Twitter emoji dataset provided by StrataScratch in the following ways:

- Explore and clean the data.

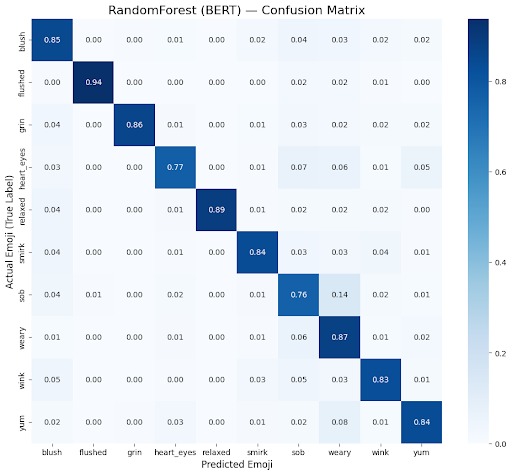

- Build a k-NN approach that predicts emojis using vector embeddings and cosine similarity.

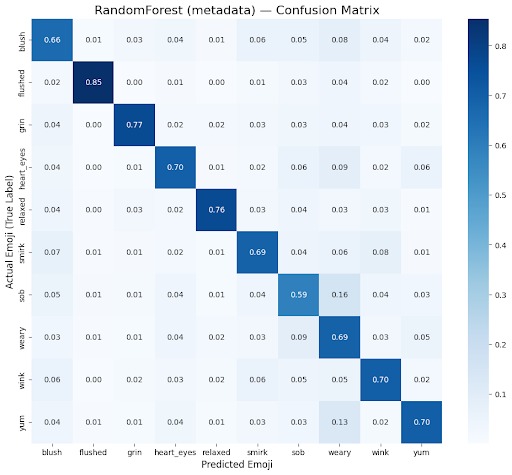

- Build a random forest classifier that predicts emojis using 10+ features identified during data exploration.

- Compare and contrast the two approaches. (Does the semantic knowledge in text embeddings matter?)

How we built it

Embedding model

We used a local BERT model to embed each Twitter post into a vector. We then compare that vector with the emoji vectors using cosine similarity and predict the emoji whose vector is closest.
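As a minimal sketch of the nearest-emoji lookup described here, the snippet below ranks emojis by cosine similarity to a post vector. The 3-dimensional vectors are made-up stand-ins for real BERT embeddings, and the function names `cosine_similarity` and `predict_emoji` are hypothetical, not taken from our codebase.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def predict_emoji(post_vector, emoji_vectors):
    """Return the emoji whose vector is most similar to the post vector."""
    return max(
        emoji_vectors,
        key=lambda emoji: cosine_similarity(post_vector, emoji_vectors[emoji]),
    )

# Toy vectors (assumption): real embeddings would have hundreds of dimensions.
emoji_vectors = {
    "😂": [0.9, 0.1, 0.0],
    "😍": [0.1, 0.9, 0.1],
    "😭": [0.0, 0.2, 0.9],
}
post_vector = [0.8, 0.3, 0.1]

predicted = predict_emoji(post_vector, emoji_vectors)
print(predicted)
```

Cosine similarity ignores vector length and compares direction only, which is why it is a common choice for comparing embeddings of texts with different lengths.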

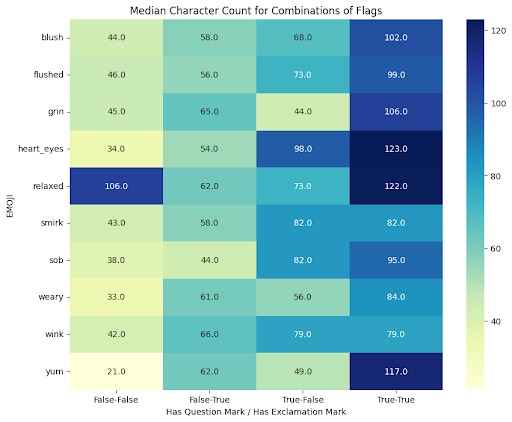

Random Forest Model

We engineered features for the Random Forest model from the Twitter posts. By plotting how the presence of certain words and punctuation marks differs across emojis, we identified useful features for the classifier. We also looked for patterns in word counts, character counts, and other numerical properties to derive more features. Lastly, we iterated through the posts grouped by emoji to find the common words for each emoji. The presence of these common words became another set of features for the classifier.
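The feature types described here could be extracted roughly as follows. This is a sketch under assumptions: the tokenizer, the feature names, and the helpers `common_words_per_emoji` and `extract_features` are hypothetical, and the four example posts are invented. The resulting dictionaries would still need to be turned into a numeric matrix before being handed to a random forest implementation.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and keep runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", text.lower())

def common_words_per_emoji(posts, labels, top_n=1):
    """Count words per emoji label and return the top_n words for each."""
    counters = {}
    for text, emoji in zip(posts, labels):
        counters.setdefault(emoji, Counter()).update(tokenize(text))
    return {
        emoji: [word for word, _ in counter.most_common(top_n)]
        for emoji, counter in counters.items()
    }

def extract_features(text, keywords):
    """Build numeric and keyword-presence features for one post."""
    tokens = tokenize(text)
    features = {
        "word_count": len(tokens),
        "char_count": len(text),
        "exclamations": text.count("!"),
        "questions": text.count("?"),
    }
    token_set = set(tokens)
    for word in keywords:
        features[f"has_{word}"] = int(word in token_set)
    return features

# Invented toy data (assumption), not rows from the real dataset.
posts = [
    "love love this song",
    "love you",
    "great, another monday",
    "monday again, great great",
]
labels = ["😍", "😍", "🙄", "🙄"]

common = common_words_per_emoji(posts, labels, top_n=1)
keywords = sorted({word for words in common.values() for word in words})
features = extract_features("I love Mondays!", keywords)
print(common)
print(features)
```

In practice, very frequent function words ("the", "a") would likely need to be filtered out before picking common words, otherwise they dominate every emoji's list.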

Challenges we ran into

The naive interpretation is that an emoji at the end of a tweet expresses the tweet's sentiment, but we found cases where the emoji is tangential to the meaning of the written prose. It took us a long time to conclude that emojis carry semantic meaning of their own. Because they do, they can be used to tease out context that the prose does not provide, which led to our deep dive into AI and sarcasm.

The embedding model was easier to build and run because the model had already been trained. Embeddings are also more general than features hand-engineered from text for a random forest. During data exploration, we spent a substantial amount of time on feature engineering for our random forest. Ultimately, the simplicity of the random forest was not enough to beat the semantic knowledge of relatively small embedding models.

Accomplishments that we're proud of

- Cleaned a ~225K-row dataset of messy tweets, hashtags, URLs, etc. into one training-ready CSV, removing noise and directly improving model accuracy.

- Developed an automated dataset splitter, data rotation, and other tools to support model training.

- Discovered 15+ unique features relating tweets to emojis during data exploration with Python visualization tools like Matplotlib and Seaborn, enabling feature engineering for Random Forest / XGBoost.

- Trained the Random Forest and embedding models, achieving weighted F1-scores of 71.57% and 85.12%, respectively.
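To illustrate the cleaning step in the first bullet, here is a small sketch of a tweet cleaner. The specific rules are assumptions for illustration (drop URLs and @mentions, keep the hashtag word but drop the `#`, collapse whitespace), and `clean_tweet` is a hypothetical name, so the actual pipeline may differ.

```python
import re

URL_RE = re.compile(r"https?://\S+|www\.\S+")
MENTION_RE = re.compile(r"@\w+")
HASHTAG_RE = re.compile(r"#(\w+)")
WHITESPACE_RE = re.compile(r"\s+")

def clean_tweet(text):
    """Strip common tweet noise and normalize whitespace."""
    text = URL_RE.sub(" ", text)        # remove links
    text = MENTION_RE.sub(" ", text)    # remove @user mentions
    text = HASHTAG_RE.sub(r"\1", text)  # keep the hashtag word, drop '#'
    return WHITESPACE_RE.sub(" ", text).strip()

raw = "Loving this @bob   #Monday https://t.co/abc123 !!"
cleaned = clean_tweet(raw)
print(cleaned)
```

Keeping the hashtag word rather than deleting it is a design choice: hashtags often carry the tone of the tweet, which matters when the goal is to predict an emoji.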

What we learned

- Prioritize data exploration: We improved our models by interpreting confusion matrices and relating them back to our initial data exploration and our understanding of the dataset.

- Complex does not always mean better: Initially we built simple BERT transformers to replicate frontier models, but they performed poorly because of the computational cost and our inability to complete training. This pushed us toward simple yet effective models like Random Forest, XGBoost, and embeddings.
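To make the confusion-matrix reading in the first bullet concrete, the sketch below counts (true, predicted) pairs, lists the most frequent mistakes, and computes a support-weighted F1-score, the kind of metric we report for our models. The labels and predictions are invented toy data, and the helper names are hypothetical.

```python
from collections import Counter

def confusion_counts(y_true, y_pred):
    """Count how often each (true label, predicted label) pair occurs."""
    return Counter(zip(y_true, y_pred))

def most_confused(y_true, y_pred, top_n=3):
    """Return the most frequent misclassified (true, predicted) pairs."""
    counts = confusion_counts(y_true, y_pred)
    errors = Counter({pair: c for pair, c in counts.items() if pair[0] != pair[1]})
    return errors.most_common(top_n)

def weighted_f1(y_true, y_pred):
    """Average per-class F1, weighting each class by its true-label count."""
    counts = confusion_counts(y_true, y_pred)
    support = Counter(y_true)
    total = len(y_true)
    score = 0.0
    for label, n in support.items():
        tp = counts[(label, label)]
        fp = sum(c for (t, p), c in counts.items() if p == label and t != label)
        fn = n - tp
        denom = 2 * tp + fp + fn
        f1 = 2 * tp / denom if denom else 0.0
        score += f1 * n / total
    return score

# Invented toy labels (assumption), not results from our models.
y_true = ["😂", "😂", "😂", "😍", "😍", "😭"]
y_pred = ["😂", "😂", "😍", "😍", "😍", "😂"]

score = weighted_f1(y_true, y_pred)
mistakes = most_confused(y_true, y_pred, top_n=2)
print(round(score, 4))
print(mistakes)
```

Pairs that show up at the top of `most_confused` are good candidates to revisit in data exploration, since they point at emojis whose tweets look alike to the model.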

What's next for Sarcasji

We have already identified multiple additional Twitter datasets where we can run both of our Sarcasji models. The outputs of these models will serve as a targeted, high-quality dataset to fine-tune future LLMs, directly improving their ability to comprehend nuance and sarcasm.

Furthermore, we do not need to limit ourselves to just tweets. Sarcasji can be deployed on Instagram, Reddit, and Facebook posts to capture different dialects of internet sarcasm. This will allow us to create a massive, cross-platform dataset to train production-ready models that understand context across diverse social environments.

Built With

- bert

- embedding

- pytorch

- randomforest

- scikit-learn

- stratascratch
