Inspiration

When we first heard the theme of acceleration, we decided to focus on a group of people who not only rarely get the chance to accelerate, but who are also often overlooked in discussions. With recent advances in AI computer vision, we chose the deaf community and asked how we could help make the world more accessible for them.

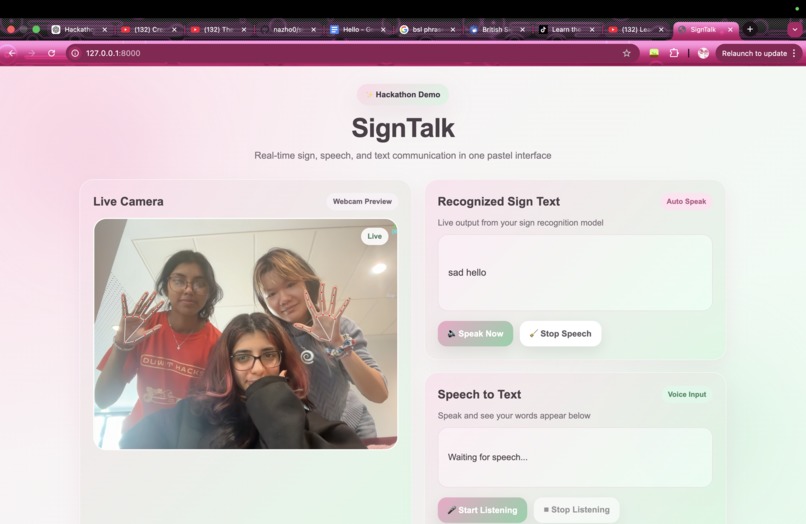

What it does

Our project watches a user perform sign language and translates it into speech in real time. It also listens to other people and translates their speech into text, so the user can hold conversations comfortably and consistently.

How we built it

We built Sign2Talk using Python, OpenCV, and MediaPipe for hand tracking, trained our models with scikit-learn, and connected everything to a web interface using Flask, JavaScript, and the Web Speech API for real-time speech output.
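As a rough illustration of the hand-tracking step, here is a minimal sketch of how tracked hand landmarks might be turned into features for a classifier. It assumes the 21 landmarks per hand that MediaPipe Hands reports (with the wrist at index 0), given as plain (x, y, z) tuples. The helper name `landmarks_to_features` and the normalisation scheme are our own assumptions for illustration, not necessarily what Sign2Talk does.

```python
import math

NUM_LANDMARKS = 21  # MediaPipe Hands reports 21 landmarks per hand; index 0 is the wrist


def landmarks_to_features(landmarks):
    """Turn 21 (x, y, z) hand landmarks into a 63-value feature vector.

    Hypothetical helper: positions are re-expressed relative to the wrist and
    scaled by the hand's size, so the features do not depend on where the hand
    is in the frame or how close it is to the camera.
    """
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")

    wrist_x, wrist_y, wrist_z = landmarks[0]
    centered = [(x - wrist_x, y - wrist_y, z - wrist_z) for x, y, z in landmarks]

    # Largest distance from the wrist, used as the scale of the hand.
    scale = max(math.sqrt(x * x + y * y + z * z) for x, y, z in centered)
    if scale == 0:
        scale = 1.0  # degenerate input (all points identical); avoid dividing by zero

    # Flatten to [x0, y0, z0, x1, y1, z1, ...].
    return [value / scale for point in centered for value in point]
```

Feature vectors like these, paired with the sign each one represents, could then be passed to the `fit` method of a scikit-learn classifier. Which features and which model the project actually uses is not stated here, so treat this only as one plausible shape for the pipeline.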

Challenges we ran into

We ran into several problems gathering and analysing the training data, and in connecting the trained model to our web page.

Accomplishments that we're proud of

We are very proud that our AI model can already recognise some simple words and phrases.

What we learned

We learned how to use computer vision and hand landmarking to make the model training process significantly easier than alternatives such as training YOLOv5.
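One way to see why landmarks simplify training: an object detector such as YOLOv5 learns from image pixels, whereas a landmark-based model only has to separate short numeric vectors, and even a very simple classifier can do that. The sketch below is a hand-written nearest-neighbour classifier used as a stand-in for a scikit-learn model. The function name, the labels, and the 4-value vectors are made up for illustration (real landmark vectors would have 63 values, from 21 landmarks with 3 coordinates each).

```python
import math


def nearest_label(sample, training_set):
    """Return the label of the training vector closest to `sample`.

    `training_set` is a list of (feature_vector, label) pairs.
    """
    best_label, best_distance = None, math.inf
    for features, label in training_set:
        distance = math.dist(sample, features)  # Euclidean distance
        if distance < best_distance:
            best_label, best_distance = label, distance
    return best_label


# Made-up 4-value vectors standing in for real 63-value landmark vectors.
training_set = [
    ([0.0, 0.1, 0.9, 1.0], "hello"),
    ([0.1, 0.0, 1.0, 0.9], "hello"),
    ([0.9, 1.0, 0.1, 0.0], "thanks"),
    ([1.0, 0.9, 0.0, 0.1], "thanks"),
]

# Classify a new, unseen vector by its closest training example.
prediction = nearest_label([0.8, 0.9, 0.2, 0.1], training_set)
```

With real data, the training set would come from landmark vectors recorded while someone performs each sign.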

What's next for Sign2Talk

In the future, glasses with built-in cameras could automatically detect sign language in day-to-day life and translate it so that only the wearer can hear the translation.
