Inspiration

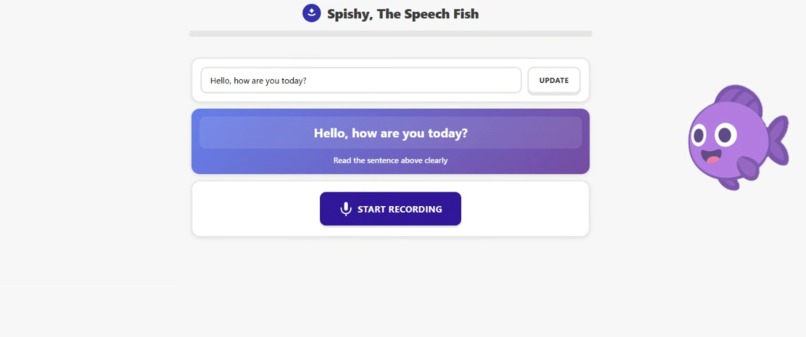

Our inspiration comes from an appreciation of speech, whose power we only recently came to realize. Most of us take it for granted, but without it communication becomes arduous and slow, limiting what we can do and undermining our quality of life. We believe that everyone ought to be able to communicate seamlessly, and we want to help bridge that gap for the hard of hearing. That is the goal of our solution, which takes additional inspiration from Duolingo's model to gamify the process and presents our application as a caring character: Spishy, the speech fish.

What it does

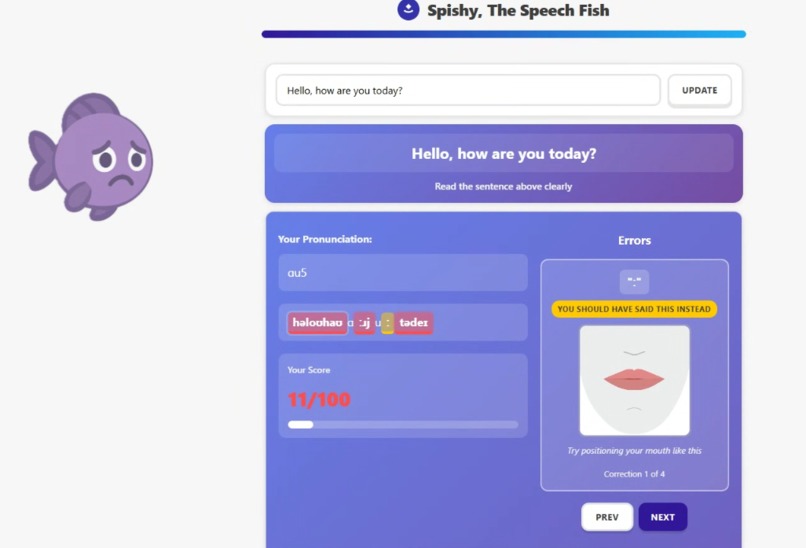

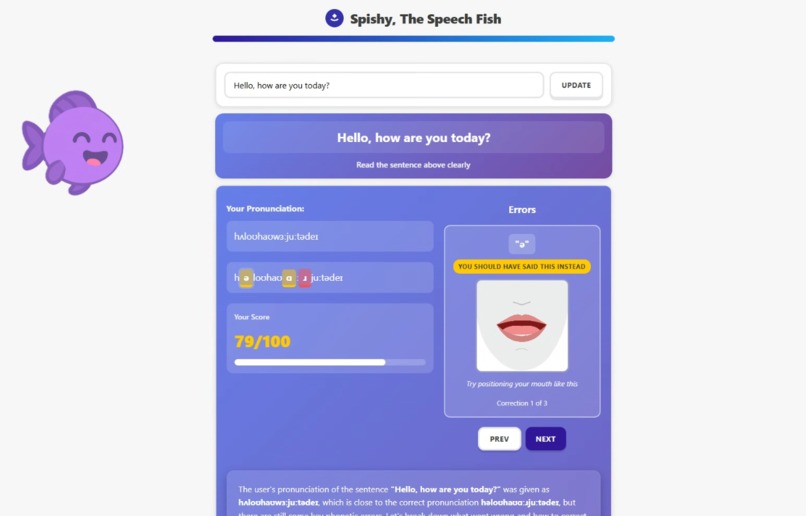

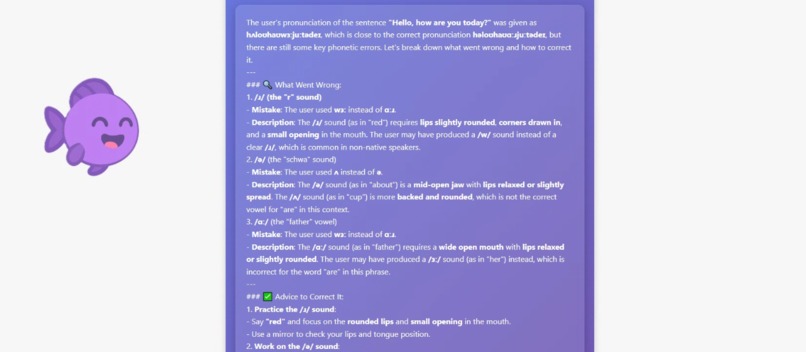

Human speech can be described in terms of phonemes: distinct sound units represented by symbols, much like an alphabet, and often seen in dictionaries (e.g., "fɪʃ", the phonemic transcription of "fish"). Spishy helps users refine their speech by analyzing it at the phoneme level and offering precise feedback and corrections that target specific sounds. The application presents words for the user to say, then records and evaluates each attempt. It then offers feedback in two forms: images that illustrate how to shape the mouth for a given sound (visemes), and natural language feedback that explains the mechanics of articulation and provides examples and tricks for mastering pronunciation without relying on hearing.

How we built it

Starting with a reference text, we use eSpeak-NG to generate the target phonemes. Then, we use wav2vec2, a speech model that transcribes audio input into phonemes, to generate the user's phonemes. We then use Levenshtein distance and alignment to pinpoint the errors in the user's phonemes. Spishy looks up visemes and instructions for the sounds that need improvement, and uses Qwen3-8B to generate personalized feedback further detailing how to master articulation.
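To illustrate the alignment step, here is a minimal sketch of Levenshtein alignment over two phoneme sequences. It is not our actual code: the function name `align_phonemes`, the edit labels, and the unit costs are assumptions made for this example, and it uses plain Python rather than a Levenshtein library.

```python
from typing import List, Optional, Tuple

# (kind, expected phoneme, spoken phoneme); None marks a missing side.
Edit = Tuple[str, Optional[str], Optional[str]]


def align_phonemes(target: List[str], user: List[str]) -> Tuple[int, List[Edit]]:
    """Return the Levenshtein distance and one optimal alignment (hypothetical helper)."""
    n, m = len(target), len(user)

    # dist[i][j] = edit distance between target[:i] and user[:j].
    dist = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        dist[i][0] = i
    for j in range(1, m + 1):
        dist[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if target[i - 1] == user[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,        # target phoneme was omitted
                dist[i][j - 1] + 1,        # extra phoneme was spoken
                dist[i - 1][j - 1] + cost  # match or substitution
            )

    # Walk back from the bottom-right corner to recover the edits.
    edits: List[Edit] = []
    i, j = n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0:
            cost = 0 if target[i - 1] == user[j - 1] else 1
            if dist[i][j] == dist[i - 1][j - 1] + cost:
                kind = "match" if cost == 0 else "substitute"
                edits.append((kind, target[i - 1], user[j - 1]))
                i, j = i - 1, j - 1
                continue
        if i > 0 and dist[i][j] == dist[i - 1][j] + 1:
            edits.append(("delete", target[i - 1], None))
            i -= 1
        else:
            edits.append(("insert", None, user[j - 1]))
            j -= 1
    edits.reverse()
    return dist[n][m], edits


if __name__ == "__main__":
    # "fish" pronounced with a final "s" instead of "ʃ".
    distance, edits = align_phonemes(["f", "ɪ", "ʃ"], ["f", "ɪ", "s"])
    print(distance, edits)
```

Each non-matching edit identifies a sound to target, which is the kind of information that could then be used to select a viseme and an instruction.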

Challenges we ran into

We ran into a core problem in linguistics: the mapping between graphemes (text) and phonemes is non-deterministic. Converting between text and phonemes is difficult, and it became an obstacle when we tried to locate errors in the non-phonemized text. Additionally, deploying Qwen3-8B for natural language feedback required extra debugging to reduce memory usage. Our solution was to run it through Ollama with 8-bit quantization, which let us run inference within our memory limits without compromising on generation quality. Last but not least, since the feedback provided to the user carries a lot of information (e.g., visemes, feedback text, indices, types of errors), getting the frontend to agree with the backend proved challenging and required close coordination between the people developing each part.
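One way to make the frontend and backend agree is to pin down the feedback payload as an explicit schema. The sketch below is only an illustration under assumed names: `PhonemeError`, `FeedbackPayload`, and every field in them are hypothetical and do not describe the format Spishy actually uses.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class PhonemeError:
    """One mispronounced sound (hypothetical schema)."""
    index: int               # position in the target phoneme sequence
    kind: str                # "substitute", "insert", or "delete"
    expected: Optional[str]  # target phoneme, None for an inserted sound
    actual: Optional[str]    # spoken phoneme, None for an omitted sound
    viseme_image: Optional[str] = None  # assumed path to a mouth-shape image


@dataclass
class FeedbackPayload:
    """Everything the frontend needs to render one attempt (hypothetical schema)."""
    word: str
    target_phonemes: List[str]
    user_phonemes: List[str]
    errors: List[PhonemeError] = field(default_factory=list)
    feedback_text: str = ""

    def to_json(self) -> str:
        # ensure_ascii=False keeps IPA symbols readable in the output.
        return json.dumps(asdict(self), ensure_ascii=False)


if __name__ == "__main__":
    payload = FeedbackPayload(
        word="fish",
        target_phonemes=["f", "ɪ", "ʃ"],
        user_phonemes=["f", "ɪ", "s"],
        errors=[PhonemeError(index=2, kind="substitute", expected="ʃ", actual="s")],
        feedback_text="Placeholder text standing in for the generated feedback.",
    )
    print(payload.to_json())
```

With a single definition like this, both sides can be developed against the same field names and types instead of discovering mismatches at integration time.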

Accomplishments that we're proud of

We're especially proud of being able to carry out speech evaluation at the highly granular level of phonemes. We think that our frontend is both aesthetically pleasing and functional, offering our users a good experience that empowers them as much as possible. Our multimodal feedback (e.g., visemes, natural language feedback, specific targeted phonemes) is another point that we're proud of, since we think that this wealth of information could truly help the hard of hearing learn to speak autonomously.

What we learned

After relying on a single machine for inference with wav2vec2 and Qwen3-8B, we learned that this was not the best strategy: at some points in the project, meaningful work could only be done on that one machine, which wasted our team's capacity. In addition, our solution turned out to be highly monolithic because we used pywebview, which tightly coupled our backend and frontend code and made independent development of the two sides nearly impossible. Pywebview, though convenient, offered a poor debugging experience, lacking hot reload and any way to inspect the generated HTML and CSS. Last but not least, the frontend and backend teams should communicate better to agree on how information is formatted and passed.

What's next for Spishy - The Speech Trainer

We think that the gaming experience could be greatly improved, following in the footsteps of Duolingo. We could integrate games and levels of increasing complexity, challenging users to improve their speaking while giving them practical phrases to use. We could also develop models that analyze rhythm and intonation, offering advice to align these with common speech. This application could also serve people learning to speak other languages, as the phoneme symbols that we use, those of the International Phonetic Alphabet (IPA), work universally. Perhaps one day we could solve the non-deterministic mapping between phonemes and graphemes, tackling at last the main issue that haunted us in this project.

Built With

- css

- espeak

- html

- javascript

- levenshtein

- ollama

- python

- pywebview

- transformers
