Latch — Catch Drift Before the Auditor Does

AI-powered configuration drift detection for biotech cloud infrastructure.

Inspiration

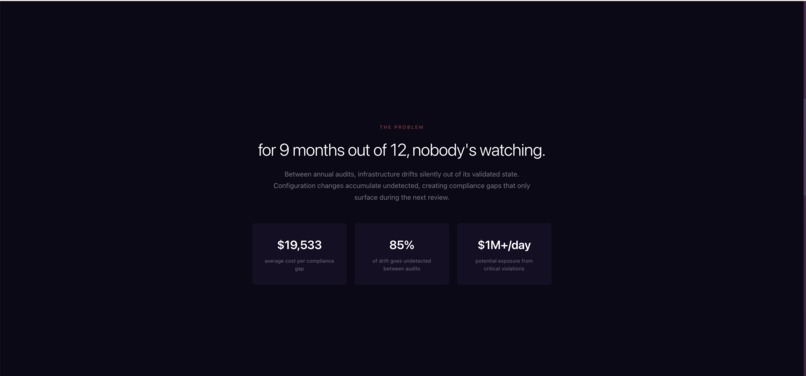

Anyone who's worked with databases and cloud infrastructure knows the pain: configurations silently shift over time, and nobody notices until something breaks. A security setting gets toggled during a sprint. An access permission is tweaked under deadline pressure. A firewall rule is loosened for debugging and never restored. Individually, none of it feels like a big deal. But the gap between "something changed" and "someone noticed" is where real problems live.

We experienced this firsthand working with cloud environments — the frustrating realization that what's running in production doesn't quite match what you think is running. We started asking: where does this problem hurt the most? Where is the cost of a missed configuration change not just annoying, but genuinely catastrophic?

The answer was biotech. Companies developing therapeutics run their cloud infrastructure under FDA oversight, governed by GxP: the family of "good practice" regulations and guidelines (including 21 CFR Part 11 and 21 CFR Part 211) that require validated production systems to stay in their validated state, with every change controlled and documented. If an FDA auditor walks in and your system doesn't match the version you validated, that's not a bug; it's a compliance finding that can delay a drug approval. Biotech is growing fast, its teams need better infrastructure to manage the complexity of regulatory and laboratory work, and the tooling just isn't there yet.

We built Latch to close that gap — a platform that continuously watches cloud configurations, catches drift the moment it happens, and gives teams everything they need to respond before an auditor ever has to ask.

How We Built It

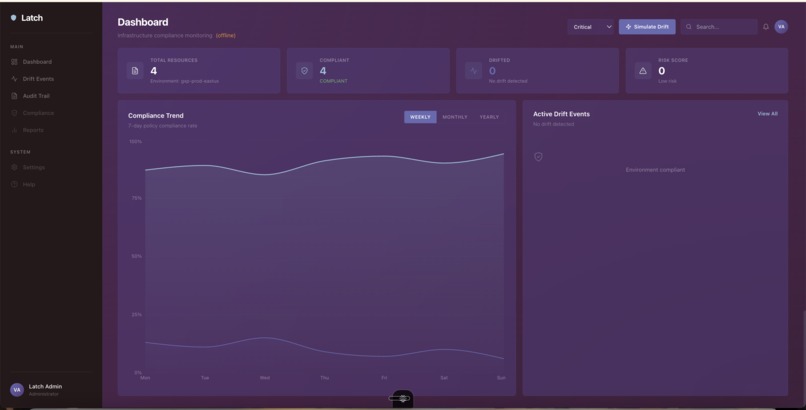

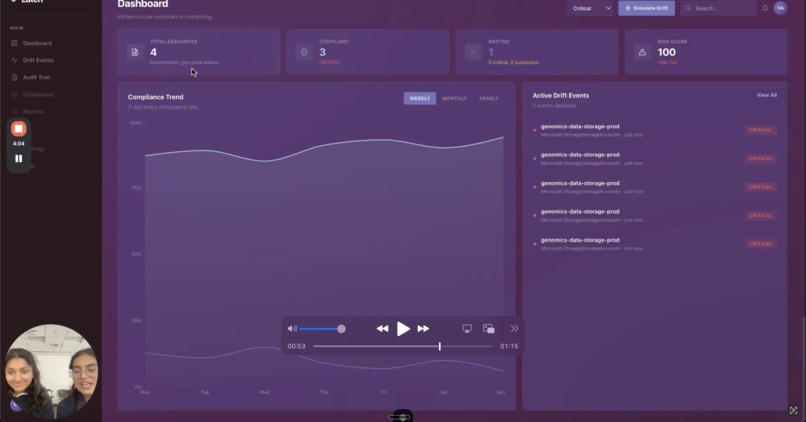

We started with the frontend. Building out the React dashboard first gave us clarity on what the user experience needed to be: a QA manager should be able to open Latch and immediately see which environments are clean, which have drifted, and what's being done about it. Designing the interface first forced us to define the core workflows — what data needs to surface, what actions need to be available, and how urgency gets communicated visually.

From there, we mapped out what the backend needed to do: poll cloud resource configurations on a schedule, store snapshots, diff them against validated baselines, classify each difference by regulatory severity, and trigger the right response. The question became: how do we build that entirely on Azure?
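As a rough illustration of the "diff against validated baselines" step, here is a minimal sketch that compares two nested configuration snapshots and emits one record per changed leaf value. The function name `diff_config`, the `MISSING` sentinel, and the record shape are assumptions made for this example, not Latch's actual comparison engine, and it assumes snapshots are JSON-like dictionaries with string keys.

```python
from typing import Any

# Sentinel marking a key that exists on only one side of the comparison.
MISSING = object()


def diff_config(
    baseline: dict[str, Any], current: dict[str, Any], prefix: str = ""
) -> list[dict[str, Any]]:
    """Return one record per leaf value that differs from the baseline."""
    changes: list[dict[str, Any]] = []
    for key in sorted(set(baseline) | set(current)):
        path = f"{prefix}.{key}" if prefix else key
        old = baseline.get(key, MISSING)
        new = current.get(key, MISSING)
        if isinstance(old, dict) and isinstance(new, dict):
            # Recurse so each difference is reported at its most specific path.
            changes.extend(diff_config(old, new, path))
        elif old != new:
            changes.append({"path": path, "baseline": old, "current": new})
    return changes


# Hypothetical snapshots: encryption was switched off and an RDP rule was added.
validated = {
    "storage": {"encryption": "enabled", "tls": "1.2"},
    "nsg": {"allow_ssh": False},
}
observed = {
    "storage": {"encryption": "disabled", "tls": "1.2"},
    "nsg": {"allow_ssh": False, "allow_rdp": True},
}
drift = diff_config(validated, observed)
```

Reporting dotted paths rather than whole objects keeps each drift record small enough to classify and cite individually.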

We matched each backend requirement to the best available Azure service:

- Azure Functions (timer-triggered) poll cloud resource configurations every 5 minutes — storage encryption settings, IAM permissions, network security groups, container versions, database configs. Read-only credentials ensure Latch never modifies what it monitors.

- Azure Cosmos DB stores both real-time configuration snapshots and the long-term audit trail. Every state, every diff, every classification decision is timestamped and immutable.

- Azure OpenAI (GPT-4o) powers the classification layer. Each detected difference is evaluated through a GxP-aware prompt that classifies it into one of three tiers:

| Tier | Meaning |
|---|---|
| Allowed | Expected operational change (e.g., auto-scaling) |
| Suspicious | Unplanned deviation requiring review |
| Critical | GxP violation that triggers immediate remediation |

The model doesn't just classify — it cites the specific FDA regulation that applies, giving QA managers exactly what they need for an inspection response.

- Azure AI Search augments classification with a semantic search index over the FDA regulation corpus. Before GPT-4o classifies a drift event, Latch retrieves the most relevant regulatory passages from the index and passes them to the model as grounding context, making classifications explainable and audit-defensible.

- Azure Event Grid routes drift events by severity, decoupling detection from remediation and enabling the dashboard to update in real time the moment a drift is classified.

- GitHub serves as our repository layer. When Latch detects Critical or Suspicious drift, it automatically opens a pull request containing the exact Terraform code to restore the compliant state, a pre-written GxP change justification, and a link back to the drift event in the dashboard, with the QA manager tagged as the required approver.

Critically, Latch never auto-merges. Auto-applying a configuration change without human approval would itself be a GxP violation. The human stays in the loop. The paperwork is just already done.
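To make the shape of such a pull request concrete, the sketch below assembles the pieces described above into a plain dictionary without calling GitHub. Everything here is illustrative: the `DriftEvent` fields, the branch naming, the `auto_merge` flag, and the dashboard URL are hypothetical, and the real request would still have to be sent through the GitHub API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftEvent:
    """Hypothetical drift record; field names are assumptions for this sketch."""
    event_id: str
    resource: str
    path: str
    baseline: str
    current: str
    tier: str
    citation: str


def build_remediation_pr(
    event: DriftEvent, terraform_patch: str, qa_reviewer: str, dashboard_url: str
) -> dict:
    """Assemble PR fields for a drift event; only reviewable tiers get a PR."""
    if event.tier not in {"Suspicious", "Critical"}:
        raise ValueError(f"no remediation PR for tier {event.tier!r}")
    body = "\n".join([
        "GxP change justification",
        f"Resource: {event.resource}",
        f"Setting: {event.path}",
        f"Validated value: {event.baseline}",
        f"Observed value: {event.current}",
        f"Regulatory reference: {event.citation}",
        f"Drift event: {dashboard_url}/{event.event_id}",
        "",
        "Proposed Terraform change:",
        terraform_patch,
    ])
    return {
        "title": f"[{event.tier}] Restore {event.resource} {event.path} to validated baseline",
        "head": f"latch/remediate-{event.event_id}",
        "base": "main",
        "body": body,
        "reviewers": [qa_reviewer],
        # Never merged automatically: a human approver must sign off.
        "auto_merge": False,
    }


event = DriftEvent("evt-001", "storage-account", "encryption", "enabled",
                   "disabled", "Critical", "passage-1")
pr = build_remediation_pr(event, 'encryption = "enabled"', "qa-manager",
                          "https://dashboard.example/events")
```

Keeping the justification and the code change in the same artifact is what lets the approval itself double as the audit record.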

The full data flow:

Azure Functions (poll cloud resources)

→ Cosmos DB (store configuration snapshot)

→ Comparison Engine (diff vs. validated baseline)

→ Azure OpenAI + AI Search (GxP classification)

→ Event Grid (route by severity)

→ GitHub API (auto-open remediation PR)

→ Cosmos DB (update audit trail)

→ React Dashboard (real-time status)
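The "route by severity" step in this flow can be pictured with a small in-process stand-in. This is not Event Grid code; `SeverityRouter` and the handler names are hypothetical, and the sketch only shows how publishing by tier lets detection stay unaware of which consumers react to an event.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class SeverityRouter:
    """Toy publish/subscribe router keyed on classification tier."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, tier: str, handler: Handler) -> None:
        self._handlers[tier].append(handler)

    def publish(self, event: dict) -> int:
        """Deliver the event to every handler for its tier; return the count."""
        handlers = self._handlers.get(event["tier"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


audit_log: list[str] = []
pr_queue: list[str] = []

router = SeverityRouter()
# Every tier is recorded in the audit trail.
for tier in ("Allowed", "Suspicious", "Critical"):
    router.subscribe(tier, lambda e: audit_log.append(e["id"]))
# Only reviewable tiers are queued for a remediation PR.
for tier in ("Suspicious", "Critical"):
    router.subscribe(tier, lambda e: pr_queue.append(e["id"]))

delivered_allowed = router.publish({"id": "evt-1", "tier": "Allowed"})
delivered_critical = router.publish({"id": "evt-2", "tier": "Critical"})
```

Adding a new consumer, such as a dashboard notifier, would then mean one more subscription rather than a change to the detection code.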

What We Learned

Building an agentic system taught us more than we expected. Latch isn't just answering questions — it's observing an environment, making classification decisions grounded in regulatory context, and taking action by opening pull requests with remediation code. Designing that autonomous pipeline, where AI detects a problem, reasons about its severity, and initiates the right response without a human prompting it, was a fundamentally different challenge than building a chatbot or a standard API integration. It pushed us to think carefully about when the system should act on its own and when it must defer to a human.

There is so much room to simplify how regulated industries work. Biotech QA managers spend an enormous amount of time on manual comparisons, audit documentation, and chasing down configuration changes after the fact. The workflows are painful and largely unchanged. Building Latch made us realize how much opportunity exists to take complex, high-stakes operational work and make it dramatically easier — not by replacing the human judgment, but by eliminating the busywork around it so people can focus on the decisions that actually matter.

The Azure integration was genuinely rewarding. Getting five Azure services — Functions, Cosmos DB, OpenAI, AI Search, and Event Grid — talking to each other in a live feedback loop, all updating a React frontend in near real-time, was the most technically satisfying part of the build. Making the round trip from detection to classification to dashboard update happen in under 60 seconds required careful orchestration, and seeing it work end-to-end was a highlight for the whole team.

LLMs can do real compliance work — but only when grounded. Pairing Azure OpenAI with Azure AI Search over the actual FDA regulation corpus was the architectural decision that made classifications defensible rather than just plausible. Without retrieval-augmented generation, the model's outputs were reasonable but not citable. With it, every classification comes with the specific regulatory passage that supports it.
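One way to enforce that grounding in code is sketched below: retrieved passages are placed in the prompt with identifiers, and any model answer whose citation does not point at one of those passages is rejected. The function names, the JSON response shape, and the passage identifiers are assumptions for illustration; the calls to Azure OpenAI and Azure AI Search are deliberately left out, and the passage text is a placeholder rather than real regulation text.

```python
import json

TIERS = ("Allowed", "Suspicious", "Critical")


def build_messages(change: dict, passages: list[dict]) -> list[dict]:
    """Build a chat-style message list that embeds the retrieved passages."""
    context = "\n\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    system = (
        "Classify the configuration change as Allowed, Suspicious, or Critical. "
        "Cite exactly one passage id from the provided context. "
        'Reply as JSON with keys "tier", "citation", and "rationale".'
    )
    user = (
        f"Regulatory passages:\n{context}\n\n"
        f"Detected change:\n{json.dumps(change, sort_keys=True)}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def parse_classification(raw: str, passages: list[dict]) -> dict:
    """Validate the model's JSON reply against the passages it was given."""
    result = json.loads(raw)
    if result.get("tier") not in TIERS:
        raise ValueError(f"unknown tier: {result.get('tier')!r}")
    known_ids = {p["id"] for p in passages}
    # A non-Allowed classification is only accepted with a checkable citation.
    if result["tier"] != "Allowed" and result.get("citation") not in known_ids:
        raise ValueError("citation must reference a retrieved passage")
    return result


passages = [{"id": "passage-1", "text": "(retrieved regulation text goes here)"}]
change = {"path": "storage.encryption", "baseline": "enabled", "current": "disabled"}
messages = build_messages(change, passages)
```

Rejecting uncited or mis-cited answers turns "plausible" output into something a reviewer can trace back to a specific passage.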

Challenges We Faced

Domain complexity. Few people know 21 CFR Part 11, GxP validation principles, and cloud infrastructure configuration all at once, and accurately modeling that intersection in prompts took significant iteration. Getting GPT-4o to reliably distinguish "Suspicious" from "Allowed" required careful prompt engineering and RAG grounding.

Defining the right scope of "drift." Not every configuration difference is a compliance event. Auto-scaling triggers look like drift. Temporary debug changes look like drift. Building classification logic to distinguish operational noise from genuine GxP violations — without creating alert fatigue — was the core product design challenge.
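One possible way to cut noise before classification, shown here purely as an assumption and not as how Latch does it, is to separate changes on settings that are expected to fluctuate from everything else. The pattern list and the `split_noise` helper are hypothetical; the separated changes are returned rather than discarded so they can still be written to the audit trail.

```python
from fnmatch import fnmatchcase

# Hypothetical paths that are expected to change during normal operation.
EXPECTED_VOLATILE = ("*.autoscale.instance_count", "*.last_modified")


def split_noise(
    changes: list[dict], volatile_patterns: tuple[str, ...] = EXPECTED_VOLATILE
) -> tuple[list[dict], list[dict]]:
    """Split changes into (operational noise, candidates for classification)."""
    noise: list[dict] = []
    candidates: list[dict] = []
    for change in changes:
        if any(fnmatchcase(change["path"], p) for p in volatile_patterns):
            noise.append(change)
        else:
            candidates.append(change)
    return noise, candidates


changes = [
    {"path": "vmss.autoscale.instance_count", "baseline": 2, "current": 4},
    {"path": "storage.encryption", "baseline": "enabled", "current": "disabled"},
]
noise, candidates = split_noise(changes)
```

A static list like this cannot recognize a temporary debug change, which is why the harder cases still need the grounded classifier and human review.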

Keeping humans in the loop without creating friction. Latch had to thread a needle: automate enough that QA managers don't drown in manual work, but preserve enough human oversight that the remediation workflow is itself GxP-compliant. The PR-based approval model was our solution — low friction, full auditability.

Orchestrating a live demo under time pressure. Getting Azure Functions, Cosmos DB, OpenAI, Event Grid, and the GitHub API to talk to each other reliably in a live sub-60-second loop, with a clean dashboard on top, was genuinely hard within a hackathon timeline.

Built With

- azure

- cli

- cosmosdb

- fastapi

- functions

- github

- javascript

- openai

- python

- react

- rest

- tailwind

- vercel

- vite
