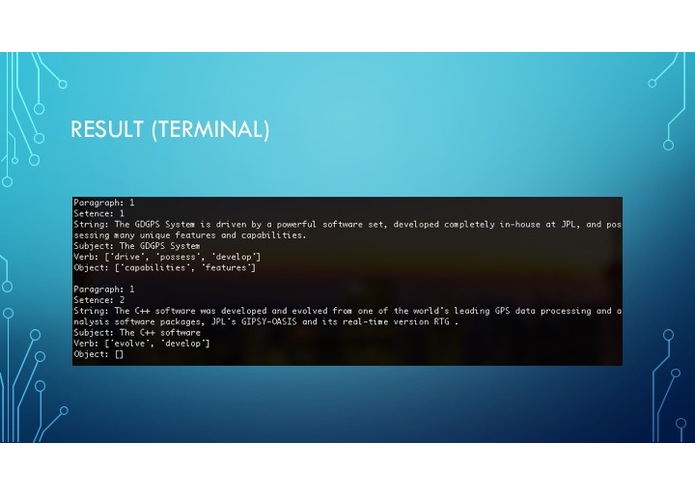

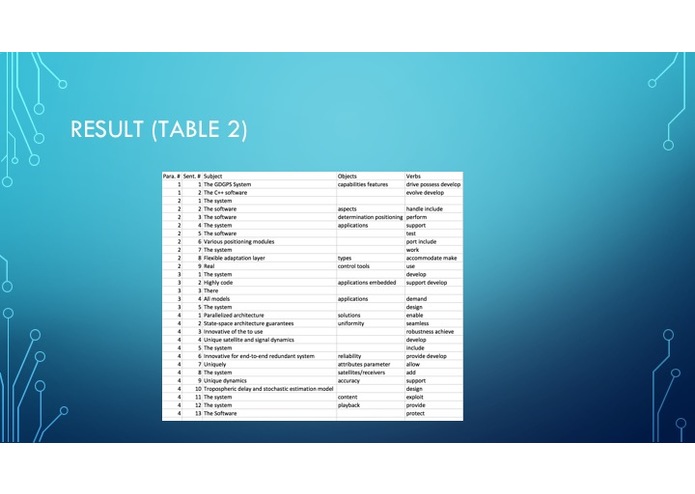

What it does

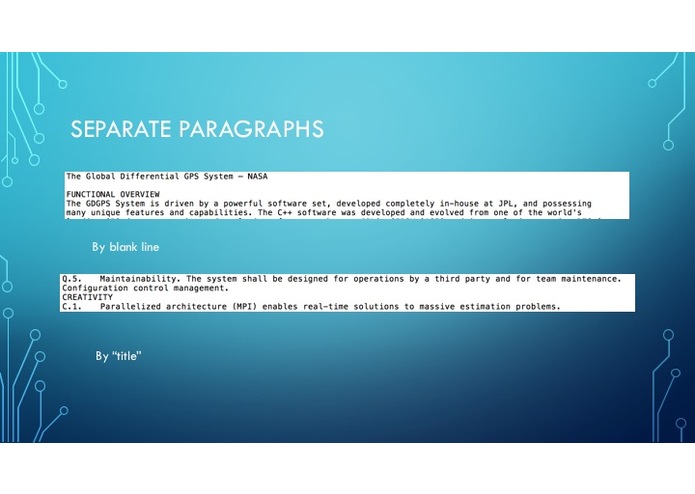

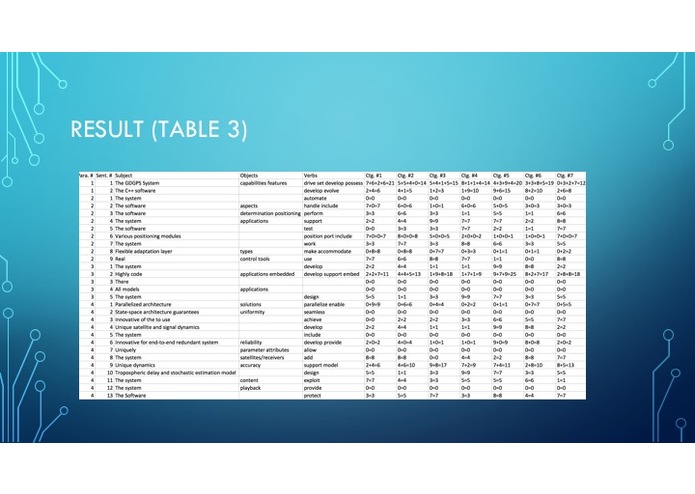

The program analyzes a block of text. It uses the NLTK library to split the text into paragraphs and sentences, then uses the Stanford dependency parser to find the subject, object, and verbs of a given sentence, along with the frequency (score) of verbs in different categories.
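As a rough illustration of the extraction step, the sketch below picks subjects, objects, and verbs out of dependency triples and counts verbs per category. It assumes triples shaped like `((head, head_tag), relation, (dependent, dependent_tag))`, which is the form NLTK's dependency graphs yield from `triples()`. The example triples are written by hand, and the relation sets, the function names, and the `"motion"` category are hypothetical, not taken from our project.

```python
from collections import Counter

# Assumed relation labels (Stanford / Universal Dependencies style).
SUBJECT_RELS = {"nsubj", "nsubjpass"}
OBJECT_RELS = {"dobj", "obj", "iobj"}


def extract_roles(triples):
    """Collect subjects, objects, and verbs from dependency triples."""
    subjects, objects, verbs = [], [], []
    for (head, head_tag), rel, (dep, dep_tag) in triples:
        if rel in SUBJECT_RELS:
            subjects.append(dep)
        elif rel in OBJECT_RELS:
            objects.append(dep)
        # Penn Treebank verb tags all start with "VB".
        for word, tag in ((head, head_tag), (dep, dep_tag)):
            if tag.startswith("VB") and word not in verbs:
                verbs.append(word)
    return subjects, objects, verbs


def verb_category_counts(verbs, categories):
    """Count verbs per category; `categories` maps verb -> category name."""
    counts = Counter()
    for verb in verbs:
        category = categories.get(verb.lower())
        if category is not None:
            counts[category] += 1
    return counts


# Hand-written triples for "The dog chased the cat" (illustrative only).
example_triples = [
    (("chased", "VBD"), "nsubj", ("dog", "NN")),
    (("dog", "NN"), "det", ("The", "DT")),
    (("chased", "VBD"), "dobj", ("cat", "NN")),
    (("cat", "NN"), "det", ("the", "DT")),
]

subjects, objects, verbs = extract_roles(example_triples)
counts = verb_category_counts(verbs, {"chased": "motion"})
```

The category lookup here matches on the surface form of the verb; a real pipeline would likely lemmatize first so that "chased" and "chases" fall into the same bucket.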

How I built it

We used Python as our implementation language because we are most comfortable with it and it offers more string-handling methods. We used NLTK extensively and studied its documentation, and we used the Stanford parser for the parsing functionality that the NLTK library lacks.

Challenges I ran into

• Separate paragraphs by blank lines and titles (all upper case)
• Divide sentences by periods, and sometimes by line breaks
• Delete irrelevant sentences and paragraphs
• Find and implement a parser to identify subjects and objects
• Export the results into a .csv file elegantly
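The splitting and export challenges above can be sketched with the standard library alone. This is a minimal sketch, not our actual code: it assumes titles are lines whose letters are all upper case, that sentences end at a period followed by whitespace or at a line break, and that the CSV has one row per sentence. The function names and column names are hypothetical.

```python
import csv
import io
import re


def split_paragraphs(text):
    """Split on blank lines and drop all-uppercase title lines."""
    paragraphs = []
    for block in re.split(r"\n\s*\n", text.strip()):
        lines = [ln.strip() for ln in block.splitlines() if ln.strip()]
        # str.isupper() is True when every cased character is upper case.
        body = [ln for ln in lines if not ln.isupper()]
        if body:
            paragraphs.append("\n".join(body))
    return paragraphs


def split_sentences(paragraph):
    """Split after a period followed by whitespace, and at line breaks."""
    parts = re.split(r"(?<=\.)\s+|\n+", paragraph)
    return [p.strip() for p in parts if p and p.strip()]


def to_csv(paragraphs):
    """Return CSV text with one row per sentence."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["paragraph", "sentence_index", "sentence"])
    for p_idx, paragraph in enumerate(paragraphs, start=1):
        for s_idx, sentence in enumerate(split_sentences(paragraph), start=1):
            writer.writerow([p_idx, s_idx, sentence])
    return buffer.getvalue()


sample = (
    "INTRODUCTION\n\n"
    "The dog ran. The cat slept\nBirds sang.\n\n"
    "SECOND PART\nIt rained."
)
paragraphs = split_paragraphs(sample)
csv_text = to_csv(paragraphs)
```

Splitting on periods is deliberately naive: abbreviations such as "Dr." would be cut in the wrong place, which is one reason to use a trained sentence tokenizer like the one NLTK provides.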

Accomplishments that I'm proud of

We got it done. The program separates paragraphs and sentences cleanly, even in very strange scenarios.

What I learned

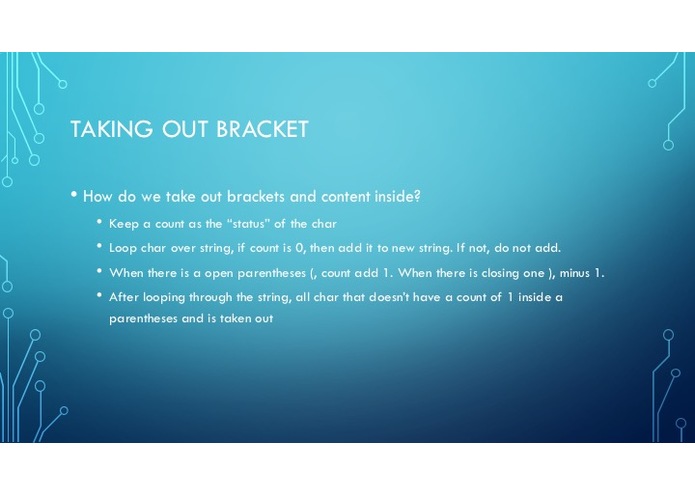

How to take out text inside parentheses, and the logic behind it. How to use NLTK and the parser API. How a computer analyzes natural language.
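One way to express the parentheses logic is a depth counter: characters are kept only while no parenthesis is open, which also handles nesting. This is a sketch of that idea rather than our exact implementation, and the function name `strip_parentheses` is hypothetical.

```python
def strip_parentheses(text):
    """Remove parenthesized spans, including nested ones."""
    depth = 0
    kept = []
    for ch in text:
        if ch == "(":
            depth += 1
        elif ch == ")":
            if depth > 0:
                depth -= 1
            else:
                kept.append(ch)  # an unmatched ")" is left in place
        elif depth == 0:
            kept.append(ch)
    # Collapse runs of whitespace (including line breaks) left behind.
    return " ".join("".join(kept).split())


cleaned = strip_parentheses("The parser (from Stanford (NLP group)) works well.")
```

An unclosed "(" causes everything after it to be dropped, so input with unbalanced parentheses may need a check before this step.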
