Genum is an open-source PromptOps platform that lets teams develop, test, version, deploy, and run GenAI instructions the same way they ship production code.

Think:

Genum = Cursor + GitHub + CI/CD + Execution + FinOps — for prompts

| Genum Layer | What it does |

|---|---|

| Data | Text and Blobs |

| LLM | Multi-vendor + Customm LLM |

| Prompt IDE | Write & refine instructions |

| Testing | Test-First approach, Strict + semantic regression tests |

| Versioning | Git-style history & releases |

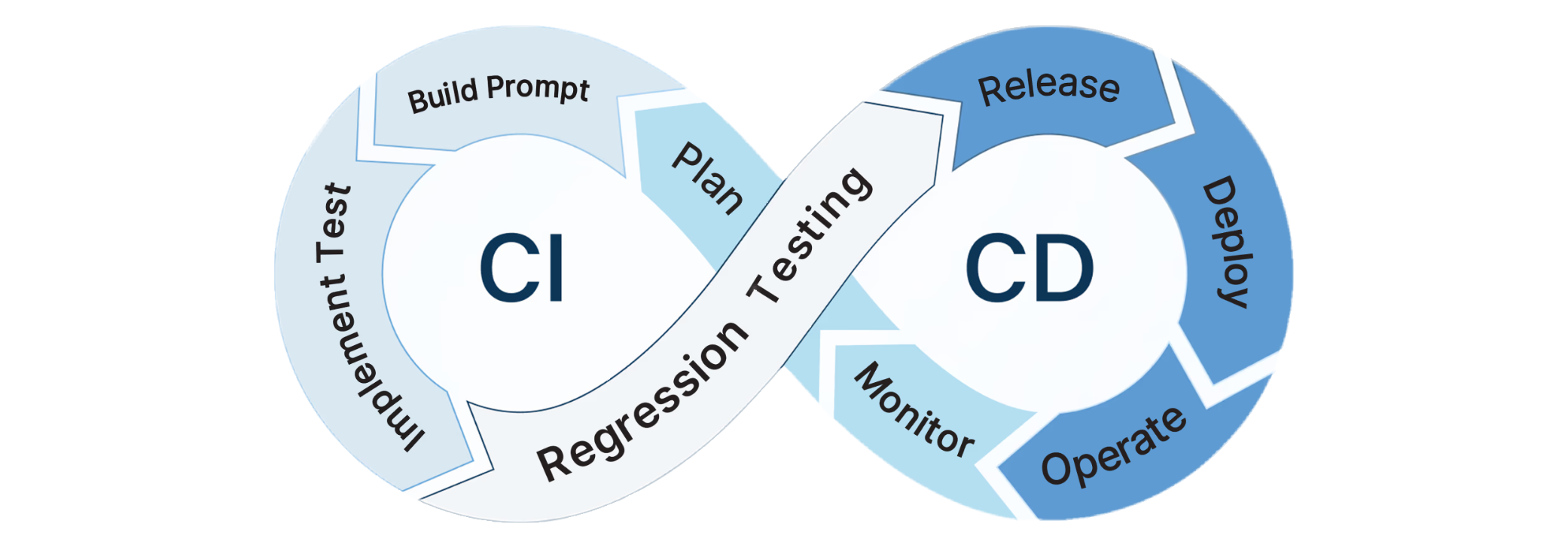

| CI/CD | Block deployments on failures |

| Execution & Integration | APIs & Custon Nodes |

| FinOps | Cost, latency, usage visibility |

If prompts are business logic, Genum is the system that makes them testable, reproducible, and safe to deploy.

Most GenAI systems fail in enterprise automation for one simple reason:

prompts are not treated like code.

In real systems, prompts are often:

- edited manually

- validated ad-hoc

- deployed without regression tests

- trusted because they “seem to work”

This leads to:

- silent behavior drift

- broken automations

- unpredictable downstream effects

Genum exists to bring software delivery discipline to GenAI instructions.

Nothing is deployed unless it passes tests defined by the operator.

Genum:

- does not enforce runtime policies

- does not make autonomous decisions

- does not run “AI controlling AI”

All validation happens before deployment using:

- synthetic datasets

- real historical inputs

- explicit expected outputs

Production only executes already verified logic.

Genum provides a full PromptOps lifecycle:

1. Write a prompt instruction + output schema

2. Define a test suite (strict + semantic)

3. Run tests locally or in CI

4. Commit only if all tests pass

5. Deploy a locked, versioned instruction

6. Execute it via API or integration nodes

Prompts become first-class, versioned artifacts — not inline text inside apps, agents, or workflows.

In Genum, tests are the source of truth.

A test includes:

- input (text, context, tool state)

- expected outcome

- validation method

Validation types:

- Strict — schema, exact match, flags

- Semantic — LLM-based judge used only as a test assertion

Semantic judges are never runtime authorities.

They answer one question only:

Does the output satisfy the expectation defined by the operator?
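The test anatomy above can be sketched as a small data structure. This is a minimal illustration, not Genum's actual data model: `PromptTest`, `run_test`, and the `Judge` callable are hypothetical names, and the judge is a plain function standing in for an LLM call so the sketch runs offline.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any, Callable, Optional

# Hypothetical judge signature: (actual output, operator expectation) -> pass/fail.
# A real judge would wrap an LLM call; here it is any callable.
Judge = Callable[[Any, Any], bool]


@dataclass(frozen=True)
class PromptTest:
    """Hypothetical test record: input, expected outcome, validation method."""
    input_text: str
    expected: Any
    validation: str  # "strict" or "semantic"


def run_test(test: PromptTest, actual: Any, judge: Optional[Judge] = None) -> bool:
    """Return True when the actual output satisfies the operator's expectation."""
    if test.validation == "strict":
        # Strict: the output must equal the expected value exactly.
        return actual == test.expected
    if test.validation == "semantic":
        # Semantic: the judge only answers pass/fail for this assertion.
        if judge is None:
            raise ValueError("semantic tests require a judge")
        return bool(judge(actual, test.expected))
    raise ValueError(f"unknown validation method: {test.validation!r}")
```

The judge appears only as an argument to `run_test`, which mirrors the rule that it acts as a test assertion and never as a runtime authority.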

Instruction

Extract order-related signals from emails.

Test input

"Please cancel order 45821. We no longer need delivery."

Expected output

    {
      "order_cancelled": true,
      "order_id": 45821
    }

Deployment is blocked unless:

- schema is valid

- values match expectations

- or a semantic assertion confirms equivalence
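One way to read these conditions is: the schema must be valid, and the values must either match exactly or be confirmed equivalent by a semantic assertion. The sketch below implements that reading for the order example. The schema format (field name mapped to a Python type), `may_deploy`, and the `semantic_judge` callable are assumptions made for illustration, not Genum's implementation.

```python
import json

# Hypothetical schema format: field name -> required Python type.
ORDER_SCHEMA = {"order_cancelled": bool, "order_id": int}
EXPECTED = {"order_cancelled": True, "order_id": 45821}


def schema_valid(output: dict, schema: dict) -> bool:
    """True when output has exactly the schema's fields with the right types."""
    if set(output) != set(schema):
        return False
    # bool is a subclass of int in Python, so compare types exactly.
    return all(type(output[name]) is wanted for name, wanted in schema.items())


def may_deploy(raw_output: str, semantic_judge=None) -> bool:
    """Gate: valid schema AND (exact match OR semantic equivalence)."""
    try:
        output = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    if not isinstance(output, dict) or not schema_valid(output, ORDER_SCHEMA):
        return False
    if output == EXPECTED:
        return True
    # The judge is consulted only here, as a test assertion.
    return semantic_judge is not None and bool(semantic_judge(output, EXPECTED))
```

For example, `may_deploy('{"order_cancelled": true, "order_id": "45821"}')` is rejected because `order_id` arrives as a string rather than an integer.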

For a support bot, tests can assert:

- which tool must be called

- required arguments

- call order

- whether the bot must not answer directly

Example semantic rule:

“The bot must not provide an answer without calling order_status.”

If violated, the instruction cannot be deployed.
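The tool-call assertions listed above can also be checked deterministically when a trace of the bot's turn is available. The following is a sketch under that assumption: `ToolCall`, `BotTurn`, and `check_turn` are hypothetical names, and the trace format is invented for illustration. It is a strict stand-in for the semantic rule, not a description of how Genum evaluates it.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    arguments: dict = field(default_factory=dict)


@dataclass
class BotTurn:
    """Hypothetical trace of one bot turn: ordered tool calls plus the answer."""
    tool_calls: list
    answer: str = ""


def check_turn(turn, required_order, required_args):
    """Return a list of violations; an empty list means the turn passes."""
    violations = []
    called = [call.name for call in turn.tool_calls]

    # Which tools must be called. Answering without them breaks the
    # "must not answer directly" rule.
    for tool in required_order:
        if tool not in called:
            violations.append(f"missing call to {tool}")

    # Call order: first occurrences of the required tools must be ascending.
    positions = [called.index(tool) for tool in required_order if tool in called]
    if positions != sorted(positions):
        violations.append("required tools called out of order")

    # Required arguments for each call that was made.
    for call in turn.tool_calls:
        for arg in required_args.get(call.name, ()):
            if arg not in call.arguments:
                violations.append(f"{call.name} is missing argument {arg}")

    return violations
```

A turn that answers with no tool calls yields `["missing call to order_status"]` when `order_status` is required, so the instruction would not pass the gate.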

Genum is not:

- a runtime policy engine

- an agent framework

- an autonomous decision system

- a creative prompt playground

Genum is delivery infrastructure for GenAI business logic.

Genum keeps GenAI logic separate from runtime orchestration.

You:

- develop & validate logic in Genum

- inject a specific, versioned release into runtime systems

Delivery options:

- Public API — retrieve pinned versions of verified logic

- Genum Nodes — connect verified logic to automation platforms

Runtime stays stable. AI logic evolves safely through releases.
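The idea of pinning can be illustrated with a small in-memory model. This is not the Genum Public API: `ReleaseRegistry`, `publish`, and `get` are hypothetical names, used only to show why a runtime that always names an explicit version is unaffected by later releases.

```python
class ReleaseRegistry:
    """Hypothetical in-memory stand-in for a store of verified releases."""

    def __init__(self):
        self._releases = {}

    def publish(self, prompt_id: str, version: str, instruction: str) -> None:
        """Store a release; an existing version is locked and cannot be replaced."""
        key = (prompt_id, version)
        if key in self._releases:
            raise ValueError(f"{prompt_id}@{version} is already released and locked")
        self._releases[key] = instruction

    def get(self, prompt_id: str, version: str) -> str:
        """Runtime lookup always names an explicit version; there is no 'latest'."""
        return self._releases[(prompt_id, version)]


registry = ReleaseRegistry()
registry.publish("order-signals", "1.0.0", "Extract order-related signals from emails.")
registry.publish("order-signals", "1.1.0", "Extract order and refund signals from emails.")

# A runtime pinned to 1.0.0 keeps receiving the same instruction after 1.1.0 ships.
pinned = registry.get("order-signals", "1.0.0")
```

Upgrading then becomes an explicit change of the pinned version in the runtime system, rather than a side effect of editing a prompt.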

Genum keeps your business logic vendor-agnostic, with out-of-the-box support for major commercial providers plus support for custom LLMs.

Genum includes a FinOps layer that tracks:

- usage per prompt, model, and version

- latency characteristics

- cost per execution

This enables:

- model comparisons

- cost-quality trade-offs

- predictable AI operating costs
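As a rough sketch of what such tracking makes possible, the snippet below groups execution records by prompt, model, and version, then reports run counts, average latency, and cost. The `Execution` record and `summarize` function are hypothetical, and the model names and numbers are made-up sample data, not measurements.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Execution:
    """Hypothetical record of one prompt execution."""
    prompt: str
    model: str
    version: str
    latency_ms: float
    cost_usd: float


def summarize(executions):
    """Aggregate usage, latency, and cost per (prompt, model, version)."""
    groups = defaultdict(list)
    for e in executions:
        groups[(e.prompt, e.model, e.version)].append(e)
    return {
        key: {
            "runs": len(rows),
            "avg_latency_ms": mean(r.latency_ms for r in rows),
            "avg_cost_usd": mean(r.cost_usd for r in rows),
            "total_cost_usd": sum(r.cost_usd for r in rows),
        }
        for key, rows in groups.items()
    }


# Made-up sample data for two hypothetical models running the same release.
sample = [
    Execution("order-signals", "model-a", "1.0.0", 100.0, 0.25),
    Execution("order-signals", "model-a", "1.0.0", 300.0, 0.75),
    Execution("order-signals", "model-b", "1.0.0", 50.0, 0.5),
]
report = summarize(sample)
```

Comparing the entries for `model-a` and `model-b` on the same prompt version is the kind of cost-quality trade-off the list above refers to, provided both pass the same test suite.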

Best fit

- platform & backend engineers

- automation & integration teams

- organizations deploying GenAI into production

- teams that treat AI behavior as code

Probably not for

- creative prompting

- live experimentation

- agent demos without test discipline

    git clone https://github.com/genumai/genum.git
    cd genum
    docker-compose up -d

Docs: https://docs.genum.ai

Genum does not try to make AI smarter.

It makes AI testable.

If behavior cannot be tested, it should not be deployed.

Genum is open source.

- Website: https://genum.ai

- Docs: https://docs.genum.ai

- Community: https://community.genum.ai

- Reddit: https://www.reddit.com/r/PromptEngineering/comments/1qnn6bu/genum_testfirst_promptops_for_enterprise_genai/

Contributions and critical feedback are welcome.