PerceptionAI

Detecting Human Emotion Through Voice and Language

Inspiration

As a student of psychology and computer science, I’ve always been fascinated by how humans communicate emotion through tone, rhythm, and subtle cues in speech. In therapy, counseling, and even daily conversations, emotion recognition plays a critical role in understanding what someone truly feels beyond the words they use.

That inspired me to build PerceptionAI — a system that listens to live audio and interprets emotions in real time, blending my background in psychology with modern AI.

What It Does

PerceptionAI listens to live speech through your microphone and analyzes both:

Acoustic signals (tone, pitch, energy, speech rate)

Linguistic meaning (the words and sentiment themselves)

It then fuses these two into valence–arousal coordinates — a psychological model that measures:

Valence → how positive or negative an emotion is

Arousal → how intense or calm it is

From there, it classifies a single dominant emotion such as Happy, Calm, Angry, Sad, Excited, or Worried — and returns a readable emotional summary in real time.

If both valence and arousal are near 0, it simply responds with “Neutral” to avoid over-interpreting flat or ambiguous speech.
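As a rough illustration of that last step, here is a minimal sketch of how a valence–arousal point could be turned into one of the labels above. The value ranges (valence in [-1, 1], arousal in [0, 1]), the 0.35 and 0.7 cut-offs, and the function name `classify_emotion` are assumptions made for this example; the project's actual thresholds may differ.

```python
def classify_emotion(valence: float, arousal: float) -> str:
    """Map a (valence, arousal) point to one label.

    Assumes valence in [-1, 1] and arousal in [0, 1]; the cut-offs
    below are illustrative, not the project's tuned values.
    """
    # Near the origin there is no clear emotion to report.
    if abs(valence) < 0.1 and arousal < 0.1:
        return "Neutral"
    if valence >= 0:
        # Positive emotions, ordered from high to low arousal.
        if arousal >= 0.7:
            return "Excited"
        return "Happy" if arousal >= 0.35 else "Calm"
    # Negative emotions, ordered from high to low arousal.
    if arousal >= 0.7:
        return "Angry"
    return "Worried" if arousal >= 0.35 else "Sad"


print(classify_emotion(0.8, 0.9))    # Excited
print(classify_emotion(-0.6, 0.1))   # Sad
print(classify_emotion(0.05, 0.02))  # Neutral
```

Splitting the plane by the sign of valence first and then by arousal bands keeps the mapping easy to read and to adjust when two neighbouring labels feel too close.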

How I Built It

Frontend:

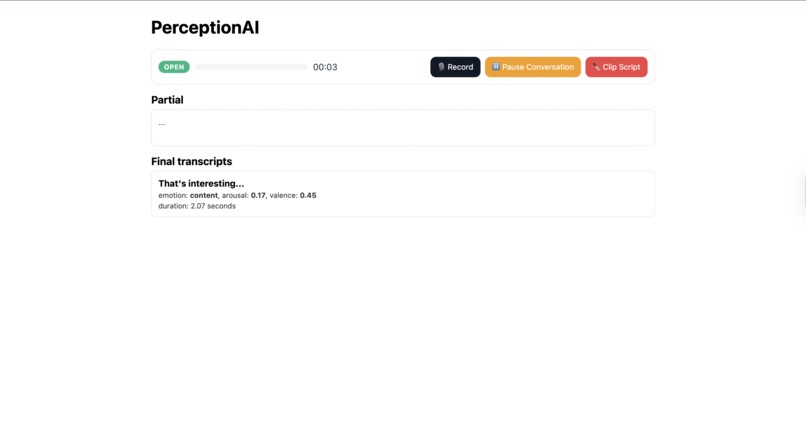

Built with React + TypeScript + Vite, featuring a clean dashboard that visualizes emotion states in real time.

WebSocket connections stream audio frames to the backend.

Displays live emotion updates (text label, valence, arousal graph).

Backend:

Developed using FastAPI (Python) for real-time WebSocket streaming.

The fish.audio API provides speech-to-text transcription, with a local Whisper model (faster-whisper) as the fallback.

Sentiment & Emotion Analysis: implemented a custom valence–arousal mapping inspired by affective psychology research.

The system merges:

Lexical cues → emotion-laden words (“happy,” “angry,” “calm,” etc.)

Prosodic features → loudness (RMS), pitch variance, and speech rate

Intensity modifiers → exclamation marks, ALL-CAPS emphasis, and intensifiers (“very,” “so,” “extremely”)
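To make the text side of this concrete, here is a small sketch of lexicon-based scoring with intensity modifiers. The four-word lexicon, its scores, the 0.1 boost per modifier, and the name `score_text` are all hypothetical; prosodic features are left out because they come from the audio signal rather than the transcript.

```python
import re

# Hypothetical mini-lexicon: word -> (valence in [-1, 1], arousal in [0, 1]).
LEXICON = {
    "happy": (0.8, 0.6),
    "calm": (0.5, 0.1),
    "angry": (-0.7, 0.9),
    "sad": (-0.7, 0.2),
}
INTENSIFIERS = {"very", "so", "extremely"}


def score_text(text: str) -> tuple[float, float]:
    """Return (valence, arousal) for a transcript using lexical cues only."""
    tokens = re.findall(r"[A-Za-z']+", text)
    hits = [LEXICON[t.lower()] for t in tokens if t.lower() in LEXICON]
    if not hits:
        return 0.0, 0.0  # no emotion-laden words found

    # Average the scores of all emotion-laden words.
    valence = sum(v for v, _ in hits) / len(hits)
    arousal = sum(a for _, a in hits) / len(hits)

    # Each intensifier, exclamation mark, and ALL-CAPS word adds a fixed
    # (assumed) arousal boost; valence is left unchanged.
    boost = 0.1 * sum(t.lower() in INTENSIFIERS for t in tokens)
    boost += 0.1 * text.count("!")
    boost += 0.1 * sum(len(t) > 1 and t.isupper() for t in tokens)
    return valence, min(1.0, arousal + boost)


print(score_text("I am happy"))
print(score_text("I am SO ANGRY!"))
```

In this sketch the modifiers only raise arousal, on the assumption that emphasis signals intensity rather than a change in how positive or negative the speaker feels.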

Fusion Layer: The fuse.py module combines text-based scores with acoustic features into a single emotional state. If both |valence| < 0.1 and arousal < 0.1, the system automatically labels the result as Neutral, since a point that close to the origin of the valence–arousal plane does not indicate a clear emotion.
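The fusion step might look roughly like the sketch below. It is not the contents of fuse.py: the weighted average, the choice to take valence from text alone, the `text_weight` parameter, and the `FusedState` type are assumptions for illustration. Only the 0.1 Neutral threshold comes from the description above.

```python
from dataclasses import dataclass

NEUTRAL_THRESHOLD = 0.1  # from the Neutral rule described above


@dataclass(frozen=True)
class FusedState:
    valence: float    # clamped to [-1, 1]
    arousal: float    # clamped to [0, 1]
    is_neutral: bool


def fuse(text_valence: float, text_arousal: float,
         acoustic_arousal: float, text_weight: float = 0.5) -> FusedState:
    """Blend text and acoustic scores into one state (illustrative weights)."""
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be between 0 and 1")

    # Assumption: valence comes from the words, arousal from both channels.
    valence = max(-1.0, min(1.0, text_valence))
    arousal = text_weight * text_arousal + (1.0 - text_weight) * acoustic_arousal
    arousal = max(0.0, min(1.0, arousal))

    is_neutral = abs(valence) < NEUTRAL_THRESHOLD and arousal < NEUTRAL_THRESHOLD
    return FusedState(valence, arousal, is_neutral)


print(fuse(0.05, 0.0, 0.1))  # quiet, flat speech
print(fuse(0.8, 0.6, 1.0))   # positive words spoken loudly
```

A single weight keeps the trade-off between channels explicit, which helps when tuning for noisy microphones where the acoustic signal is less trustworthy.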

Tech Stack Overview

| Layer | Tools / Frameworks |
| --- | --- |
| Frontend | React, TypeScript, Vite |
| Backend | Python 3.13, FastAPI, WebSockets |
| Audio Processing | fish.audio API / faster-whisper |
| NLP | Custom Valence-Arousal Model, TextBlob |
| Visualization | Recharts, Framer Motion |
| Hosting | Local prototype (expandable to AWS / GCP) |

Challenges I Ran Into

Real-time WebSocket instability: ensuring reliable bidirectional audio streaming between the browser and backend required carefully tuned buffering and reconnect logic.

Mapping subtle emotions: translating numeric valence/arousal values into human-readable labels (e.g., distinguishing “calm” from “content”) was non-trivial.

API limits: fish.audio sometimes returned 402 Payment Required, so I implemented a graceful fallback to local Whisper speech-to-text.

Noise sensitivity: emotion detection can be heavily influenced by microphone quality and background sounds, so I had to normalize RMS levels dynamically.
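One way to approach the dynamic RMS normalization mentioned above is to scale each frame's loudness by the loudest level seen in a recent window. The sketch below assumes frames arrive as lists of float samples in [-1, 1]; the class name `RollingRmsNormalizer`, the window size, and the noise floor are hypothetical choices, not the project's actual values.

```python
import math
from collections import deque


def rms(samples: list[float]) -> float:
    """Root-mean-square level of one frame of samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


class RollingRmsNormalizer:
    """Express each frame's RMS relative to the recent peak level."""

    def __init__(self, window: int = 50, floor: float = 1e-4):
        self.history = deque(maxlen=window)  # recent RMS levels
        self.floor = floor                   # avoids dividing by ~0 in silence

    def normalize(self, samples: list[float]) -> float:
        level = rms(samples)
        self.history.append(level)
        reference = max(max(self.history), self.floor)
        return min(1.0, level / reference)


normalizer = RollingRmsNormalizer(window=3)
print(normalizer.normalize([0.5, -0.5]))    # loudest frame so far
print(normalizer.normalize([0.25, -0.25]))  # half as loud as the recent peak
```

Because the reference follows the recent peak, a quiet microphone and a loud one produce comparable values once a few frames have been seen.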

Accomplishments That I'm Proud Of

Built a fully working live emotion analyzer in under 48 hours.

Designed a psychologically grounded model that maps valence–arousal data to intuitive emotions.

Integrated a smooth React + FastAPI + WebSocket pipeline for continuous, low-latency analysis.

Created a self-contained fallback pipeline using local Whisper when API access fails.

Learned to combine human psychology and machine intelligence in a meaningful way.

What I Learned

The value of combining quantitative AI signals (probabilities, polarity) with qualitative human interpretation.

How emotion models from psychology (like Russell’s Circumplex Model) can be directly encoded in modern AI applications.

The importance of fallback and fault tolerance when integrating third-party APIs.

That designing UX for something as abstract as emotion is its own kind of empathy challenge.
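The fallback lesson above (try the hosted speech-to-text service first, then a local model) can be sketched as a small helper that walks through a list of transcribers in order. The two stub functions stand in for the real fish.audio and Whisper calls, whose actual client code is not shown here; `transcribe_with_fallback` and the stub names are hypothetical.

```python
from typing import Callable, Optional, Sequence

Transcriber = Callable[[bytes], str]


def transcribe_with_fallback(audio: bytes,
                             transcribers: Sequence[Transcriber]) -> str:
    """Return the first successful transcript, trying each backend in order."""
    last_error: Optional[Exception] = None
    for transcribe in transcribers:
        try:
            return transcribe(audio)
        except Exception as exc:  # e.g. an HTTP 402 from a hosted API
            last_error = exc
    raise RuntimeError("all transcribers failed") from last_error


# Stubs standing in for the real backends (placeholders, not real API calls).
def remote_stub(audio: bytes) -> str:
    raise RuntimeError("402 Payment Required")


def local_stub(audio: bytes) -> str:
    return "hello"


print(transcribe_with_fallback(b"\x00\x01", [remote_stub, local_stub]))
```

Keeping the backends behind one callable signature means the rest of the pipeline never needs to know which service produced the transcript.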

What’s Next for PerceptionAI

Emotion Timeline View: visualize emotional changes throughout an entire conversation or therapy session.

Speaker Diarization: detect emotions per speaker in multi-person conversations.

Fine-tuned models: integrate a lightweight transformer to predict emotions directly from audio embeddings.

Web & Mobile App: deploy as a cross-platform application using React Native or Electron.

Clinical Integration: explore use in tele-therapy or mental-health chat tools to help practitioners better gauge patient mood.

Built With

- fastapi

- fish.audio

- python

- react

- textblob

- typescript

- vite

- websockets
