Inspiration

The inspiration for this project comes from Cursor, Kiro, and Claude. Many tools help developers with coding and other development work, but I want to help QA teams test with AI in plain English.

What it does

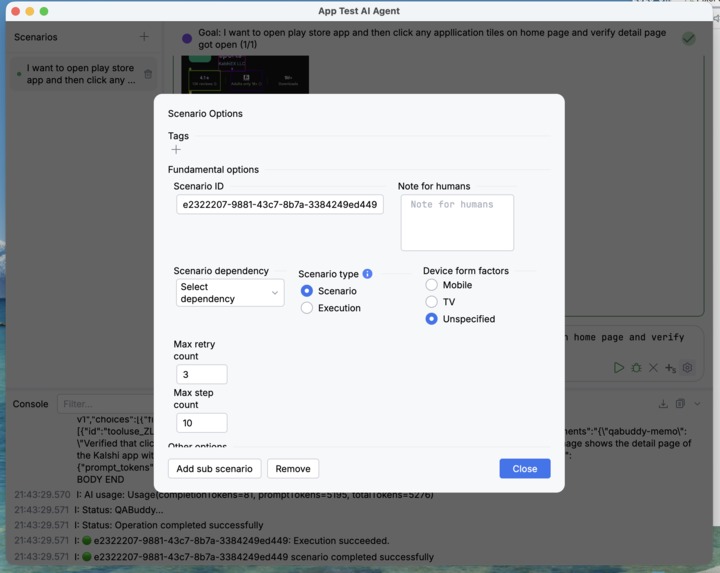

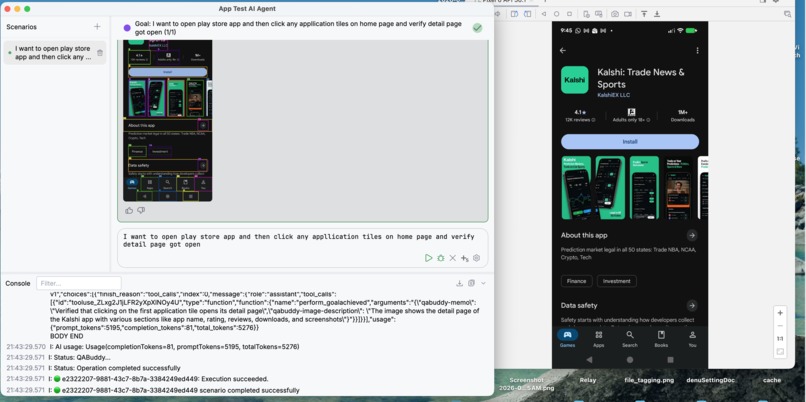

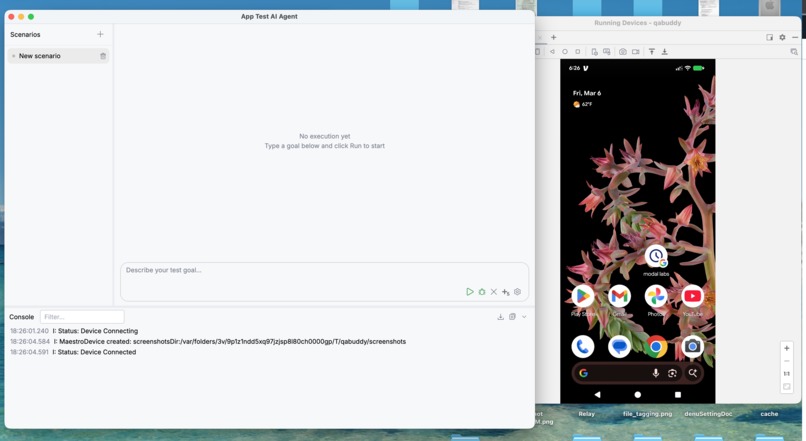

QABuddy is an AI agent testing framework that automates end-to-end testing of modern applications using AI. Traditional UI testing frameworks break easily when UI changes occur (A/B tests, dialogs, ads, layout changes). QABuddy solves this by enabling AI agents to interact with the application as a real user, making tests more robust. The framework allows complex testing tasks to be broken into smaller dependent scenarios, making AI-driven testing more predictable and scalable.

Key capabilities include:

- AI-driven UI interaction across Android, iOS, Web, and TV

- Scenario decomposition to manage complex testing flows

- Natural language test goals instead of fragile selectors

- Integration with Maestro YAML flows for deterministic setup

- AI-powered image assertions to verify UI states

- MCP tool support to interact with external systems

- CLI + UI workflow for engineers and QA teams

- Parallel test execution with sharding for CI pipelines

This allows teams to test real user behavior on real devices using AI agents.
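To illustrate scenario decomposition, here is a minimal sketch of ordering dependent scenarios so that each one runs only after the scenarios it depends on. The `Scenario` class, its `dependsOn` field, and `executionOrder` are hypothetical names chosen for this example, not the framework's actual API.

```kotlin
// Hypothetical model: Scenario, dependsOn, and executionOrder are
// illustrative assumptions, not QABuddy's actual API.
data class Scenario(
    val id: String,
    val goal: String,
    val dependsOn: List<String> = emptyList()
)

// Returns the scenarios in an order where each one comes after its
// dependencies (depth-first topological sort).
// Throws on unknown scenario ids and on dependency cycles.
fun executionOrder(scenarios: List<Scenario>): List<Scenario> {
    val byId = scenarios.associateBy { it.id }
    val done = LinkedHashSet<String>()   // finished ids, in execution order
    val inProgress = HashSet<String>()   // ids currently on the DFS stack

    fun visit(id: String) {
        if (id in done) return
        val scenario = requireNotNull(byId[id]) { "Unknown scenario: $id" }
        check(inProgress.add(id)) { "Dependency cycle at: $id" }
        scenario.dependsOn.forEach { visit(it) }
        inProgress.remove(id)
        done.add(id)
    }

    scenarios.forEach { visit(it.id) }
    return done.map { byId.getValue(it) }
}

fun main() {
    val order = executionOrder(
        listOf(
            Scenario("checkout", "Buy the first item in the cart", listOf("add-to-cart")),
            Scenario("add-to-cart", "Add any item to the cart", listOf("login")),
            Scenario("login", "Log in with the test account")
        )
    )
    println(order.map { it.id }) // [login, add-to-cart, checkout]
}
```

Each small scenario keeps a plain-English goal, and the ordering step stays deterministic even though the steps inside a scenario are decided by the AI.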

How we built it

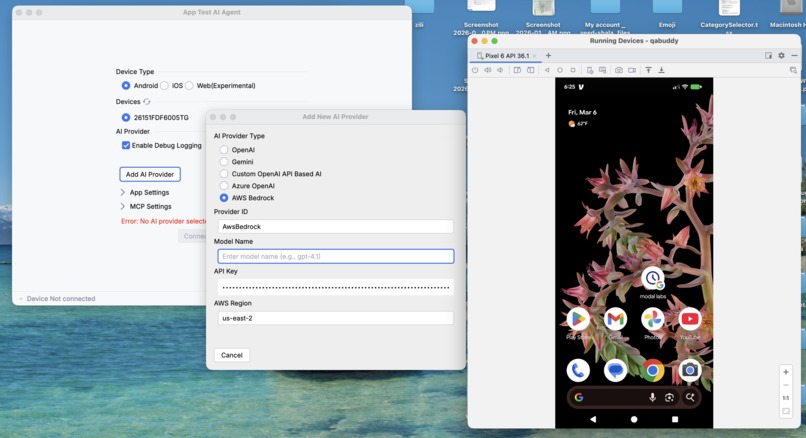

QaBuddy is built as a modular Kotlin framework (97.8% Kotlin) using a Gradle multi-module architecture with dedicated modules for core logic, AI integration (OpenAI/Gemini/Azure), CLI, MCP client, UI, and web reporting. Inspired by OkHttp's interceptor pattern, we designed pluggable interfaces (QABuddyAi, QABuddyDevice, QABuddyInterceptor) so users can swap AI providers, target OSes, and customize behavior. For device interaction, we leveraged the Maestro library to support Android, iOS, and Web. The orchestration layer breaks complex test goals into dependent scenarios defined in YAML, while the AI agent decides actions based on optimized UI trees and annotated screenshots. We also integrated Roborazzi for AI-powered image assertions and added MCP support for external tool integration. Core components include:

- AI Agent Engine. The AI agent analyzes:

- the UI tree

- annotated screenshots

- the test goal

Then it decides the next action (tap, type, navigate, etc.). Supported AI providers include:

- AWS Nova

- OpenAI

- Gemini

- OpenAI-compatible APIs
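The OkHttp-style interceptor idea mentioned above could look roughly like the sketch below. All types here (`AgentAi`, `ActionInterceptor`, `RealChain`, `Screen`, `Action`) are illustrative stand-ins: the real signatures of QABuddyAi and QABuddyInterceptor are not shown in this write-up, so treat this as an assumption about the shape of the pattern, not the framework's API.

```kotlin
// Illustrative stand-ins only; not the framework's real types.
sealed interface Action {
    data class Tap(val target: String) : Action
    data class Type(val text: String) : Action
    object Done : Action
}

data class Screen(val uiTree: String, val goal: String)

// Stand-in for an AI provider: maps the current screen and goal to an action.
fun interface AgentAi {
    fun decide(screen: Screen): Action
}

// OkHttp-style interceptor: may rewrite the input, call the rest of the
// chain, and inspect or replace the resulting action.
fun interface ActionInterceptor {
    fun intercept(chain: Chain): Action

    interface Chain {
        val screen: Screen
        fun proceed(screen: Screen): Action
    }
}

class RealChain(
    private val interceptors: List<ActionInterceptor>,
    private val index: Int,
    override val screen: Screen,
    private val ai: AgentAi
) : ActionInterceptor.Chain {
    override fun proceed(screen: Screen): Action =
        if (index < interceptors.size) {
            interceptors[index].intercept(RealChain(interceptors, index + 1, screen, ai))
        } else {
            ai.decide(screen) // end of the chain: ask the AI provider
        }
}

fun decideWithInterceptors(
    screen: Screen,
    interceptors: List<ActionInterceptor>,
    ai: AgentAi
): Action = RealChain(interceptors, 0, screen, ai).proceed(screen)

fun main() {
    // Fake provider for the sketch: taps "Login" if visible, otherwise finishes.
    val fakeAi = AgentAi { screen ->
        if ("Login" in screen.uiTree) Action.Tap("Login") else Action.Done
    }
    // Interceptor that drops ad nodes from the UI tree before the AI sees it.
    val dropAds = ActionInterceptor { chain ->
        val cleaned = chain.screen.uiTree.lines()
            .filterNot { "ad_banner" in it }
            .joinToString("\n")
        chain.proceed(chain.screen.copy(uiTree = cleaned))
    }
    val action = decideWithInterceptors(
        Screen(uiTree = "Button: Login\nView: ad_banner", goal = "Log in"),
        listOf(dropAds),
        fakeAi
    )
    println(action) // Tap(target=Login)
}
```

Because the provider sits at the end of the chain, swapping AI providers or adding behavior such as logging or UI tree filtering does not require changes to the agent loop itself.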

Challenges we ran into

The biggest challenge was making AI agents reliable — they'd click wrong buttons, open unrelated apps, or get stuck in loops on complex screens. We tackled this with stuck-screen detection, UI tree optimization (filtering noise so the AI understands the screen better), and a scenario decomposition approach that breaks large tasks into smaller, more predictable steps. Supporting multiple form factors (phone, tablet, TV with D-pad navigation) and OSes added significant complexity. Balancing speed with accuracy was also tough — AI-driven testing is inherently slower than traditional approaches, so we had to introduce sharding and result caching to keep execution times practical.
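As a rough illustration of stuck-screen detection, the sketch below flags the agent as stuck when the same screen has been observed several steps in a row. `StuckScreenDetector`, its `threshold` parameter, and the use of a hash of the UI tree as the screen fingerprint are assumptions made for this example; the write-up does not describe how the framework actually detects stuck screens.

```kotlin
// Hypothetical helper: reports "stuck" once the same screen fingerprint has
// been observed `threshold` times in a row.
class StuckScreenDetector(private val threshold: Int = 3) {
    private var lastFingerprint: Int? = null
    private var repeats = 0

    // Call once per agent step with the current (already filtered) UI tree.
    // Returns true once the screen has stayed unchanged for `threshold` steps.
    fun observe(uiTree: String): Boolean {
        // A hash is a cheap fingerprint; collisions are possible but unlikely.
        val fingerprint = uiTree.hashCode()
        repeats = if (fingerprint == lastFingerprint) repeats + 1 else 1
        lastFingerprint = fingerprint
        return repeats >= threshold
    }
}

fun main() {
    val detector = StuckScreenDetector(threshold = 3)
    val screens = listOf("home", "cart", "cart", "cart")
    println(screens.map { detector.observe(it) }) // [false, false, false, true]
}
```

When the detector fires, the orchestrator can stop the scenario early or ask the AI for a different action instead of repeating the same one.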

Accomplishments that we're proud of

We are proud of making AI agent testing accessible to non-engineers through a visual UI for scenario creation while keeping a powerful code interface for developers. The scenario dependency system that decomposes complex tasks is a meaningful contribution: it is what lets AI testing scale to larger flows. Supporting three OSes, multiple AI providers, TV D-pad navigation, Maestro YAML integration, and MCP-based external tool access in a single open-source framework is something we have not seen another tool offer with this level of customization. Achieving a 5/5 fidelity rating on real devices and a 4/5 maintainability score (tests written in natural language that survive UI changes) validates the core approach.

What we learned

We learned that AI agents alone aren't enough: orchestration and task decomposition are what make them practical. Raw AI capability matters less than how you structure and constrain the problem. We also learned that accessibility metadata is crucial for AI understanding of UIs, which led us to create the [[aihint:...]] format for apps to provide domain-specific context. On the engineering side, designing for extensibility from day one (via interfaces and interceptors) paid off enormously when adding new AI providers and platforms. Finally, we learned that hybrid workflows, which combine deterministic Maestro flows for setup with AI-driven exploration for testing, deliver the best reliability.
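As a small illustration of how an agent might pick up such hints, the sketch below pulls the hint text out of an accessibility string. It assumes that a hint is whatever sits between `[[aihint:` and the next `]]`; the exact grammar of the format, the helper name `extractAiHints`, and the sample hint text are all assumptions made for this example.

```kotlin
// Assumption: a hint is any text between "[[aihint:" and the next "]]".
val aiHintPattern = Regex("""\[\[aihint:(.*?)\]\]""")

// Returns every hint found in the given accessibility text, trimmed.
fun extractAiHints(accessibilityText: String): List<String> =
    aiHintPattern.findAll(accessibilityText)
        .map { it.groupValues[1].trim() }
        .toList()

fun main() {
    // The hint content here is invented purely for illustration.
    val text = "Total [[aihint:price shown in USD]]"
    println(extractAiHints(text)) // [price shown in USD]
}
```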

What's next for QaBuddy

Publishing to Maven Central for easy adoption is the immediate priority. Beyond that, we plan to expand AI provider support (including locally-hosted models via Ollama), improve speed through smarter caching and parallel agent execution, and add more robust reporting and analytics. We want to deepen MCP integration so QaBuddy can verify backend state, check logs, and interact with CI/CD pipelines during tests. Community-driven contributions for new device types and AI providers are a key growth path. Longer term, as AI models improve, we expect QaBuddy's speed and reliability scores to rise naturally, making AI agent testing a practical default for teams everywhere.

Built With

- ai

- amazon-web-services

- android

- ios

- kotlin

- nova

- web
