Inspiration

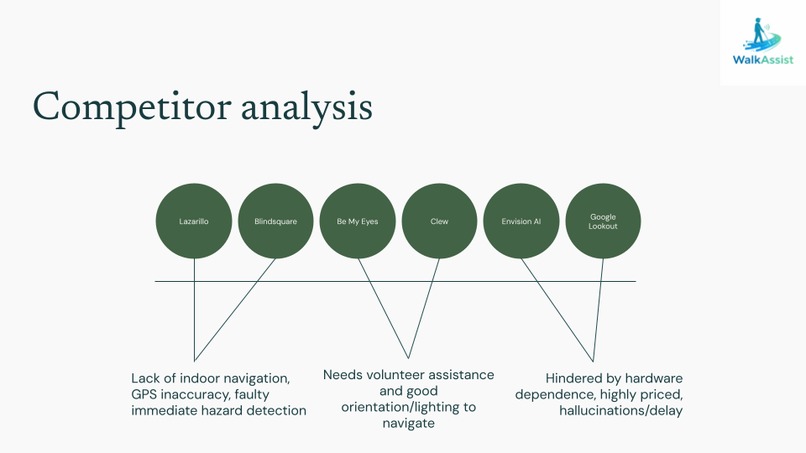

Boulder is an extremely difficult place to navigate for individuals with visual impairments due to hazardous weather conditions, steep walkways, and constant foot and vehicle traffic. Hoping to make Boulder more accessible for people with disabilities, our team explored the applications and tools currently used to aid visually impaired individuals in their everyday lives. Through this research, we saw a significant disconnect between current implementations and real-world adaptability: many solutions rely on preset routes or do not work in real time. To address this shortcoming, we decided to build a real-time AI application that uses a live video feed from a pair of glasses to output auditory information about the user's surroundings. Using an AI-based approach that combines depth-estimation and object-categorization models, we developed a real-time product that provides an additional level of clarity and information for people traversing real-world environments.

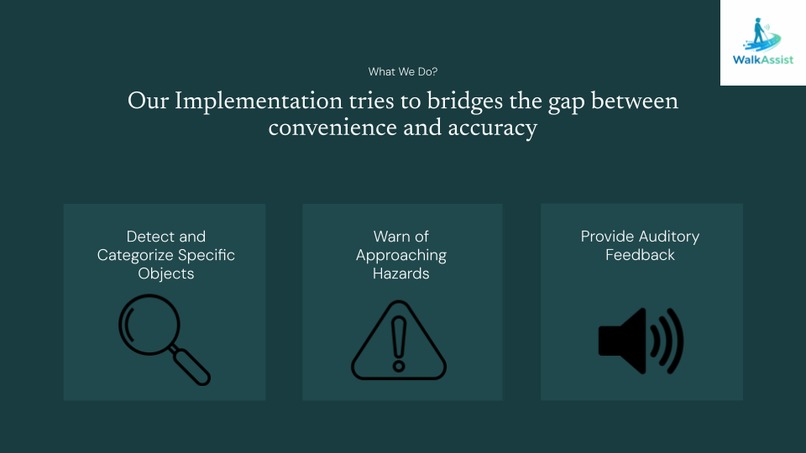

What it does

This application of computer vision and object detection models takes a multi-faceted approach to giving visually impaired individuals more information, helping them navigate dangerous spaces. While the live feed is active, the application tracks the relative distances between the user and surrounding objects. When a human or any other selected detection object enters the frame, the user hears a voice line that indicates the object's type and general location. Additionally, if an unrecognized object comes close, a separate audio indicator alerts the user. Finally, the software reports incoming hazards that come within a set range, allowing for preemptive detection when the environment changes. This combination of visual and detection technologies helps users understand their surroundings more deeply and move around more confidently.
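
As an illustration of how a detection could be turned into a voice line, here is a minimal sketch. It assumes each detection carries a label and the horizontal extent of its bounding box in pixels, and it splits the frame into thirds to pick a general location. The `Detection` class, the function names, and the one-third thresholds are hypothetical choices for this example, not taken from the project's code.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One detected object (hypothetical structure for this sketch)."""
    label: str    # object type, e.g. "person"
    x_min: float  # left edge of the bounding box, in pixels
    x_max: float  # right edge of the bounding box, in pixels


def horizontal_position(det: Detection, frame_width: float) -> str:
    """Classify the box center as left, ahead, or right using frame thirds."""
    center_ratio = ((det.x_min + det.x_max) / 2) / frame_width
    if center_ratio < 1 / 3:
        return "left"
    if center_ratio > 2 / 3:
        return "right"
    return "ahead"


def voice_line(det: Detection, frame_width: float) -> str:
    """Build the short phrase that would be handed to a TTS engine."""
    position = horizontal_position(det, frame_width)
    if position == "ahead":
        return f"{det.label} ahead"
    return f"{det.label} on your {position}"
```

For a 640-pixel-wide frame, a person whose box spans pixels 0 to 100 would be announced as being on the user's left, while a box centered in the frame would be announced as ahead.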

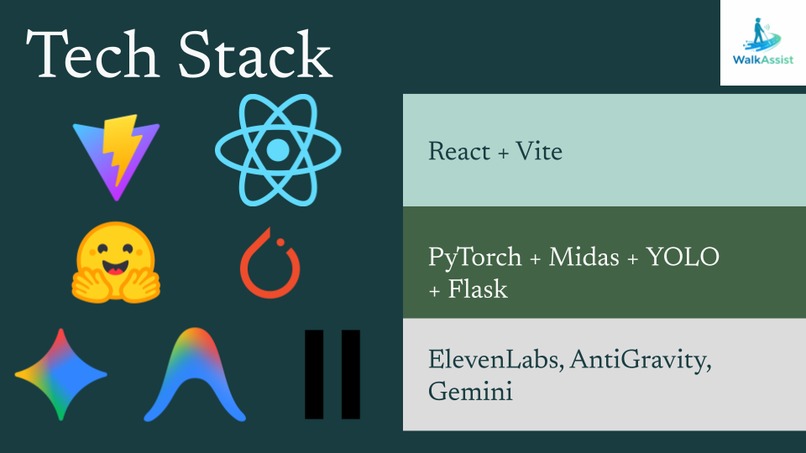

How we built it

Our project required a combination of computer vision models that drew bounding boxes around known objects and estimated their relative distance from the point of reference. We then tied this into a voice agent that provides live text-to-speech (TTS) output conveying relevant information and warnings. To combine these technologies, we spent a lot of time documenting the interactions between the live feeds and the models, including tracking run time and memory efficiency. This gave us a basic model that took camera footage and output raw relative depth data, and that could be connected to a mobile camera application. We then had to tune the pipeline so that it produced consistent detection metrics and sufficient confidence scores for object detection. Next, we tied everything together with a backend server that uploads new object detections and the information to be spoken aloud by our TTS agent. Finally, we had to optimize for speed: the application has to output information almost as soon as it is received, which added another layer of testing and iteration.
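
One way to decide which detections are worth speaking is to drop low-confidence results and avoid repeating the same label too often. The sketch below shows that idea under stated assumptions: detections arrive as `(label, confidence)` pairs, and the `AnnouncementFilter` class, the 0.5 confidence threshold, and the 3-second cooldown are all hypothetical values chosen for illustration rather than the settings used in the project.

```python
import time


class AnnouncementFilter:
    """Selects which detected labels should be spoken (illustrative sketch)."""

    def __init__(self, min_confidence=0.5, cooldown_s=3.0, clock=time.monotonic):
        self.min_confidence = min_confidence  # assumed threshold
        self.cooldown_s = cooldown_s          # assumed repeat interval
        self._clock = clock                   # injectable for testing
        self._last_spoken = {}                # label -> time it was last spoken

    def select(self, detections):
        """Return the labels from one frame that should be announced now.

        `detections` is an iterable of (label, confidence) pairs.
        """
        now = self._clock()
        to_speak = []
        for label, confidence in detections:
            if confidence < self.min_confidence:
                continue  # too uncertain to announce
            last = self._last_spoken.get(label)
            if last is not None and now - last < self.cooldown_s:
                continue  # announced recently, stay quiet
            self._last_spoken[label] = now
            to_speak.append(label)
        return to_speak
```

Keeping this filter separate from the detection models means the thresholds can be adjusted without touching the vision code, and the returned labels can be passed to whichever TTS engine is in use.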

Challenges we ran into

Throughout development we ran into many problems with the available technologies and models. The largest was the portability of our camera hardware, which required us to use live camera footage from a mobile application. Additionally, our models only provided relative depths, which meant that we couldn’t define any real-world distances without further calculation. To work around this, we assumed typical sizes for known objects and used them to estimate the general distance between the user and each object. We also had a lot of trouble keeping the program running in real time because of the slow video feed in our web application, which required us to separate the visual information from the voice lines. Because our implementation had so many moving parts, we worked right up to the deadline to make sure it was reliable and met our engineering expectations, which required some compromises. By limiting our functionality to only the most critical systems, we narrowed the project’s scope and completed it in time for project presentations. Despite all of these problems, our team persevered through active collaboration and communication, leaving us with a satisfactory final product.
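
The size-based workaround can be illustrated with the standard pinhole camera relation: distance ≈ focal length (in pixels) × real object height ÷ bounding-box height (in pixels). The sketch below is a minimal example of that relation, not the project's actual calculation. The `ASSUMED_HEIGHTS_M` table, its values, and the function name are hypothetical, and the focal length in pixels is assumed to be known from the camera.

```python
# Rough, illustrative real-world heights in meters (assumed values).
ASSUMED_HEIGHTS_M = {
    "person": 1.7,
    "car": 1.5,
    "bicycle": 1.0,
}


def estimate_distance_m(label, bbox_height_px, focal_length_px):
    """Estimate distance to an object from its bounding-box height.

    Uses the pinhole camera relation:
        distance = focal_length_px * real_height_m / bbox_height_px
    Returns None when the object type has no assumed size or the box
    height is not positive.
    """
    real_height_m = ASSUMED_HEIGHTS_M.get(label)
    if real_height_m is None or bbox_height_px <= 0:
        return None
    return focal_length_px * real_height_m / bbox_height_px
```

With a focal length of 1000 pixels, a person whose bounding box is 340 pixels tall would be estimated at roughly 5 meters away. The estimate is only as good as the assumed height, so it gives a general sense of distance rather than a precise measurement.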

Accomplishments that we’re proud of

We’re extremely proud that we managed a well-organized repository throughout the entire competition despite having so many moving parts. Our team also came into the hackathon with some beginner members, and we were still able to get up to speed with the competition’s expectations and adapt to last-minute technical difficulties. Despite the difficult technical implementation and the various challenges we encountered, our team stuck it out and kept to our initial intentions throughout the process. Overall, our commitment to detail, efficiency, and teamwork allowed us to overcome these obstacles and complete an in-depth technical implementation that stayed true to our project motivations.

What we learned

Because of the complexity of our solution and its many moving parts, we learned a great deal about emerging AI technologies and how best to optimize and use these tools. We also had to work against an extremely tight deadline, which led us to use a number of generative AI agents to supplement our normal coding workflow. Finally, we learned about real-world product creation: how to develop only the most necessary features for an MVP, and then build out slowly from that point into a more in-depth solution. Looking forward, we hope to learn more about other, more efficient agents and LLMs so that we can apply them to our work.

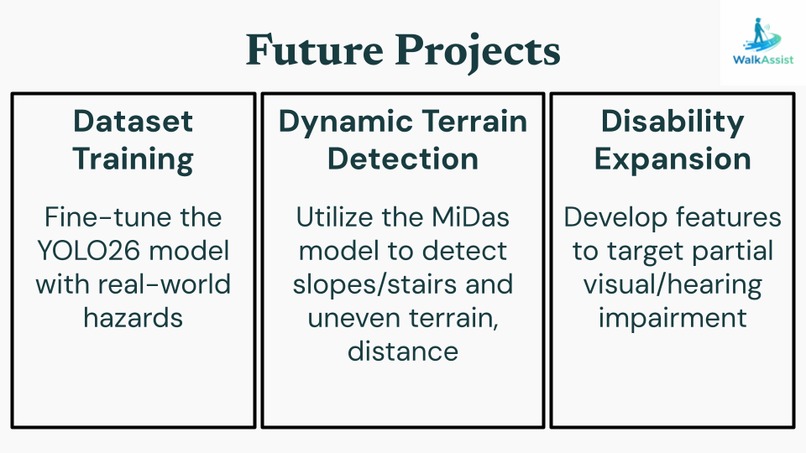

What’s next for WalkAssist

For our next steps, we hope to train our models to recognize more detection categories, such as icy spots and different road conditions. We also hope to expand into other types of accessibility for other disabilities, in the hope of providing a broader solution to the lack of accessibility. There are also many avenues for leveraging new types of technology, including distance metrics or other sensors, to track exact distances from the user more accurately. In terms of technical refinements, we also hope to cut down on latency and improve our algorithms to handle more edge cases and noisy locations.
