Inspiration

Every year, 5 billion smartphones are discarded worldwide — powerful ARM processors and HD cameras rotting in landfills. Simultaneously, 90% of schools globally cannot afford educational robots ($300–$1,000 each). I asked: what if the phone in the trash was the robot? That contradiction became BrainBrick.

What it does

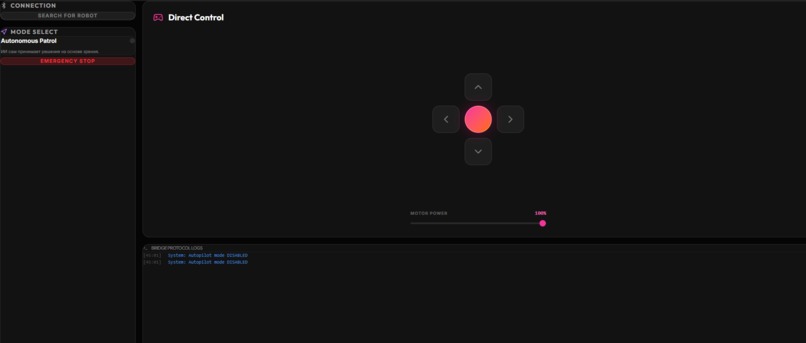

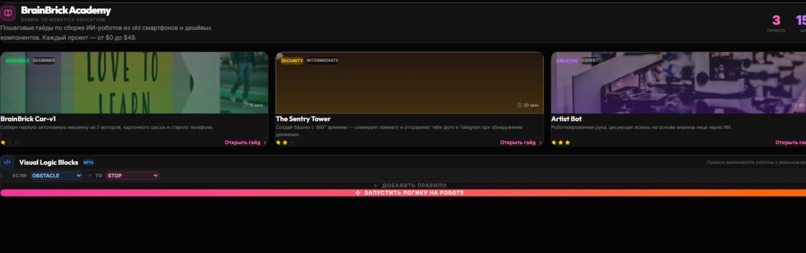

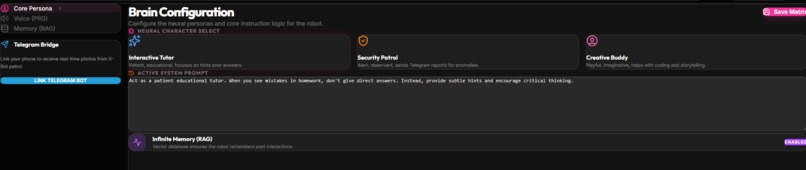

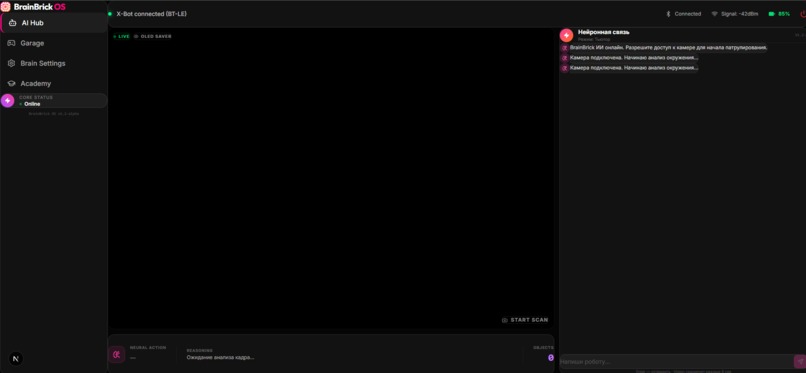

BrainBrick is a platform that transforms any old Android smartphone into the AI brain of a $15 autonomous robot. The BrainBrick OS app streams the camera to a vision LLM (Qwen2.5-VL), which returns JSON navigation commands ({"action":"FORWARD","speed":50}). The app parses them and sends byte-code via Web Bluetooth to an ESP32 microcontroller that drives the motors — closing a full agentic loop from perception to action. Users can switch between AI personas (Tutor, Security Guard, Creative Buddy), enable OLED power-save mode, build robot logic visually with Logic Blocks, and connect Telegram alerts for autonomous patrol.
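The parse-and-encode step described above can be sketched roughly as follows. This is a minimal illustration, not the app's actual protocol: the two-byte frame layout, the opcode values, and every action other than `FORWARD` are assumptions made for the example, as are the names `parseCommand` and `encodeCommand`.

```typescript
// Hypothetical action set: only FORWARD appears in the write-up.
type Action = "STOP" | "FORWARD" | "BACKWARD" | "LEFT" | "RIGHT";

interface NavCommand {
  action: Action;
  speed: number; // percent, 0 to 100
}

// Assumed opcode table; the real BrainBrick byte-code is not published.
const OPCODES: Record<Action, number> = {
  STOP: 0x00,
  FORWARD: 0x01,
  BACKWARD: 0x02,
  LEFT: 0x03,
  RIGHT: 0x04,
};

const SAFE_STOP: NavCommand = { action: "STOP", speed: 0 };

// Parse the model's JSON text; anything malformed becomes a safe STOP.
function parseCommand(raw: string): NavCommand {
  try {
    const data: unknown = JSON.parse(raw);
    if (typeof data !== "object" || data === null) return SAFE_STOP;
    const { action, speed } = data as { action?: unknown; speed?: unknown };
    if (typeof action !== "string") return SAFE_STOP;
    if (!Object.prototype.hasOwnProperty.call(OPCODES, action)) return SAFE_STOP;
    if (typeof speed !== "number" || !Number.isFinite(speed)) return SAFE_STOP;
    return { action: action as Action, speed };
  } catch {
    return SAFE_STOP;
  }
}

// Encode as [opcode, speed], clamping speed into 0..100 so it fits one byte.
function encodeCommand(cmd: NavCommand): Uint8Array {
  const speed = Math.max(0, Math.min(100, Math.round(cmd.speed)));
  return new Uint8Array([OPCODES[cmd.action], speed]);
}
```

In a browser, the resulting `Uint8Array` is the kind of payload that would be handed to a Web Bluetooth characteristic write. Falling back to STOP on any parse failure keeps a bad model reply from moving the motors.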

How we built it

- Frontend/OS: Next.js 16 + TypeScript, Web Bluetooth API, Framer Motion
- AI Engine: OpenRouter API → qwen/qwen2.5-vl-72b-instruct:free for vision, qwen/qwen3-30b-a3b:free for chat
- Hardware Bridge: ESP32 + L298N motor driver, custom byte-code protocol over BLE
- State sync: localStorage bridge between AI Hub and Garage for autopilot commands
- Agentic Loop: Camera frame → base64 JPEG → API → JSON action → Bluetooth TX
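The "base64 JPEG → API" step of the loop might look something like the sketch below, which only builds the request body. It assumes OpenRouter's OpenAI-compatible chat-completions format with an `image_url` content part carrying a data URL; the function name `buildVisionRequest`, the user prompt text, and the interface names are illustrative and not taken from the project.

```typescript
type ContentPart =
  | { type: "text"; text: string }
  | { type: "image_url"; image_url: { url: string } };

interface ChatMessage {
  role: "system" | "user";
  content: string | ContentPart[];
}

interface VisionRequest {
  model: string;
  messages: ChatMessage[];
}

// Build the JSON body for one camera frame.
// `frame` may be raw base64 or a full data URL (e.g. from canvas.toDataURL).
function buildVisionRequest(frame: string, systemPrompt: string): VisionRequest {
  const url = frame.startsWith("data:")
    ? frame
    : `data:image/jpeg;base64,${frame}`;
  return {
    model: "qwen/qwen2.5-vl-72b-instruct:free",
    messages: [
      { role: "system", content: systemPrompt },
      {
        role: "user",
        content: [
          // Illustrative prompt; the project's real prompt is not shown.
          { type: "text", text: "Return one JSON navigation command for this frame." },
          { type: "image_url", image_url: { url } },
        ],
      },
    ],
  };
}
```

The returned object would then be serialized with `JSON.stringify` and sent as the body of a POST request to the OpenRouter chat-completions endpoint, with the API key in an `Authorization: Bearer` header.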

Challenges we ran into

- Free vision models often return Markdown-wrapped JSON instead of raw JSON. Solved with regex cleaning and a safe fallback.
- localStorage accessed during Next.js SSR caused crashes. Fixed by moving reads into useEffect.
- The Web Bluetooth API is Chromium-only, so we designed a WiFi bridge fallback for iOS.
- Keeping the $15 BOM target while including enough sensors for meaningful autonomy.
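As an illustration of the first challenge, regex cleaning with a safe fallback could be sketched as below. This is one plausible approach, not the project's actual code; `extractJson` and `parseModelReply` are hypothetical names, and the fence marker is built with `repeat` only so the example can sit inside a fenced block.

```typescript
// Three backticks, the Markdown code-fence marker.
const FENCE = "`".repeat(3);

// Matches a fenced block, optionally tagged "json", and captures its body.
const FENCED_BLOCK = new RegExp(FENCE + "(?:json)?\\s*([\\s\\S]*?)" + FENCE, "i");

// Pull the most likely JSON text out of a model reply.
function extractJson(reply: string): string {
  const fenced = reply.match(FENCED_BLOCK);
  if (fenced) return (fenced[1] ?? "").trim();
  // No fence: take the outermost {...} span if one exists.
  const start = reply.indexOf("{");
  const end = reply.lastIndexOf("}");
  return start !== -1 && end > start ? reply.slice(start, end + 1) : reply.trim();
}

// Parse the reply, returning `fallback` whenever no valid JSON is found.
function parseModelReply<T>(reply: string, fallback: T): T {
  try {
    return JSON.parse(extractJson(reply)) as T;
  } catch {
    return fallback;
  }
}
```

A caller would typically pass a harmless default such as `{ action: "STOP", speed: 0 }` as the fallback, so an unparseable reply stops the robot instead of crashing the loop. Note that this only checks that the text is valid JSON, not that it has the expected fields.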

Accomplishments that we're proud of

- Full agentic loop working in browser: camera → LLM → JSON → Bluetooth → motors
- OLED Saver Mode with animated robot eyes, a live demo moment that judges will remember
- Complete BrainBrick OS with 4 functional modules built in under 48 hours
- Validated bill of materials under $12 per unit
- Visual Logic Blocks editor: no-code robot programming for children

What we learned

- Prompt engineering for structured JSON output from vision models is harder than it looks
- The Web Bluetooth API is surprisingly powerful for browser-to-hardware communication without native apps
- "E-waste" framing resonates strongly: the same hardware story that sounds wasteful becomes inspiring when reframed as a resource

What's next for BrainBrick

- AeroClaw: gesture control via MediaPipe hand tracking (code prototyped, integration in progress)
- RAG Memory: Pinecone/ChromaDB vector DB so the robot remembers each child over years
- On-device AI: Phi-3-mini / MobileNet quantized models for fully offline, privacy-first operation
- Teacher Dashboard: B2B school analytics (learning progress from child-robot conversations)
- Creator Kit v2: $49 kit with servo arms, ultrasonic sensors, and LED rings

Built With

- bluetooth

- esp32

- framer

- l298n

- localstorage

- mediapipe

- motion

- next.js

- openrouter

- qwen2.5-vl

- tailwindcss

- typescript

- web
