Inspiration

The spark for CampusCast came directly from the trenches of university student associations. Organizing major events—like a recent Annual General Meeting—highlighted a massive operational bottleneck: the sheer amount of time student committees waste manually drafting promotional emails, brainstorming flyer concepts, and writing detailed event scripts. Student leaders are managing tight weekend class schedules and academic workloads, so spending hours on repetitive marketing tasks is draining. I wanted to build an AI agent that acts as a digital creative director to automate this chaos, allowing organizers to focus on the actual event execution.

What it does

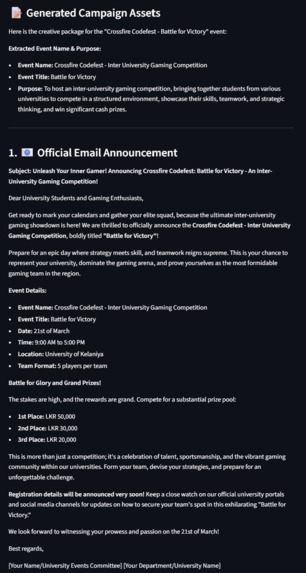

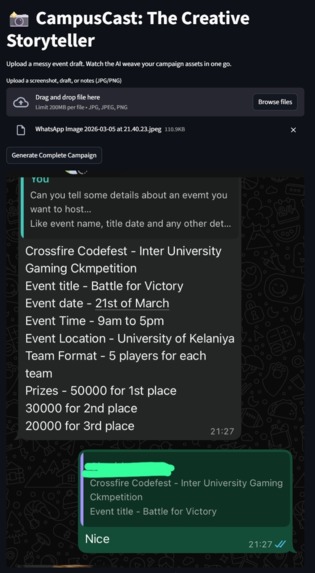

CampusCast is a multimodal "Creative Storyteller" agent designed to turn university event planning into a one-click creative workflow. Users provide a simple prompt about an upcoming event (like a guest lecture, a society meeting, or a student talent show). Leveraging Gemini's native interleaved output capabilities, the agent conceptualizes and weaves together a cohesive, mixed-media promotional package. It generates engaging social media copy and formats structured announcement scripts for event moderators, all delivered in one fluid, real-time stream.

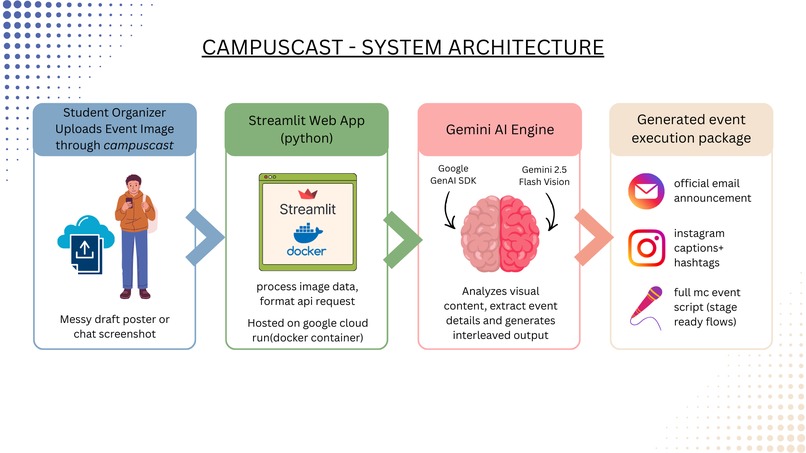

How I built it

I developed the core engine in Python, using the Google GenAI SDK to interact directly with the Gemini model and focusing on its multimodal generation capabilities. For the frontend, I built a lightweight UI with Streamlit, which kept iteration fast.
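A minimal sketch of what such a core engine could look like, not the project's actual code. It assumes the `google-genai` package, whose client exposes `client.models.generate_content_stream(model=..., contents=...)`. The model name, the prompt wording, and the helper names `build_prompt` and `stream_package` are hypothetical.

```python
from typing import Iterator

# Hypothetical model name; the write-up does not say which Gemini model was used.
MODEL_NAME = "gemini-2.0-flash"


def build_prompt(event_description: str) -> str:
    """Wrap a short event description in instructions for a promo package."""
    return (
        "You are a creative director for a university student association.\n"
        f"Event: {event_description.strip()}\n"
        "Write, in order: (1) social media copy, "
        "(2) an announcement script for the event moderator."
    )


def stream_package(client, event_description: str) -> Iterator[str]:
    """Yield text chunks as they arrive so a UI can render them incrementally.

    `client` is expected to behave like `google.genai.Client`; it is passed in
    so the function can also be exercised with a stand-in object.
    """
    chunks = client.models.generate_content_stream(
        model=MODEL_NAME,
        contents=build_prompt(event_description),
    )
    for chunk in chunks:
        if chunk.text:  # skip chunks that carry no text
            yield chunk.text
```

With the real SDK, the client would typically be created with `from google import genai` and `genai.Client()`, and a Streamlit page could pass the generator to `st.write_stream(...)` to display the text as it streams in.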

To satisfy the cloud requirements and ensure reliable hosting, I containerized the entire application. Drawing on my experience with Linux environments, I used an Ubuntu-based workflow to build and test the Docker images locally before deploying the backend directly to Google Cloud Run.
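One detail that commonly matters when moving a container from a local machine to Cloud Run is the listening port: Cloud Run passes it in the `PORT` environment variable (8080 by default). The sketch below assumes the Streamlit UI runs inside the same container and that the entry script is called `app.py`; both are assumptions, as is the helper name `streamlit_command`.

```python
from typing import Mapping


def streamlit_command(env: Mapping[str, str], script: str = "app.py") -> list[str]:
    """Build the argv a container entrypoint could use to start the UI."""
    # Cloud Run injects PORT; fall back to 8080 for local runs.
    port = env.get("PORT", "8080")
    return [
        "streamlit", "run", script,
        "--server.port", port,
        "--server.address", "0.0.0.0",  # listen on all interfaces in the container
        "--server.headless", "true",    # do not try to open a browser
    ]
```

A small launcher script could call `streamlit_command(os.environ)` and hand the result to `os.execvp`, so the same image works locally and on Cloud Run without a hard-coded port.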

Challenges I ran into

Time management was my biggest hurdle. With crucial university exams looming in mid-March, I had a very tight, unforgiving window to conceptualize, build, and deploy this project. I had to be ruthless with my scope, strictly adhering to a rapid development cycle to ensure I had a working prototype deployed on Google Cloud before hitting the books.

Technically, mastering the precise prompting required to consistently trigger Gemini's interleaved text-and-image outputs without breaking the application flow took significant trial and error.
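Part of keeping the application flow intact is handling responses defensively, since a reply may contain text only, images only, or no parts at all. The sketch below assumes the response shape used by the `google-genai` SDK, where each candidate has `content.parts` and each part carries either `text` or `inline_data` (with raw bytes in `inline_data.data`). The `PromoPackage` container and `collect_parts` helper are hypothetical names, not the project's code.

```python
from dataclasses import dataclass, field


@dataclass
class PromoPackage:
    """Text blocks and image bytes pulled out of one interleaved response."""
    text_blocks: list[str] = field(default_factory=list)
    images: list[bytes] = field(default_factory=list)


def collect_parts(response) -> PromoPackage:
    """Sort response parts into text and images, tolerating missing pieces."""
    package = PromoPackage()
    for candidate in response.candidates or []:
        content = candidate.content
        if content is None or not content.parts:
            continue  # nothing usable in this candidate
        for part in content.parts:
            if part.text:
                package.text_blocks.append(part.text)
            elif part.inline_data is not None and part.inline_data.data:
                package.images.append(part.inline_data.data)
    return package
```

With this shape, the UI can check whether `images` is empty and fall back to a text-only layout instead of failing. Requesting interleaved output in the first place is usually done through the request configuration (in `google-genai`, a `response_modalities` setting on a model that supports image output), which is outside this sketch.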

Accomplishments that I am proud of

I am incredibly proud of successfully deploying a fully functional, multimodal AI agent to Google Cloud under extreme time pressure. Moving beyond simple text-in/text-out interactions to actually generating a cohesive, mixed-media stream (text, scripts) feels like a massive leap forward. Seeing the app generate a complete promotional package for a campus event in seconds was a huge win.

What I learned

I gained deep, practical experience working with the Google GenAI SDK and understanding the nuances of multimodal AI. I learned that structuring prompts for interleaved output requires a completely different mindset than standard text generation. I also solidified my cloud deployment skills, learning how to bridge the gap between a local development environment and a live, scalable containerized application on Google Cloud Run.

What's next for CampusCast

In the short term, surviving exams! Looking ahead, I want to integrate CampusCast directly with university communication channels, allowing it to automatically draft and schedule emails or social media posts. I also plan to explore Gemini's audio and video capabilities to generate complete promotional voiceovers and teaser trailers for massive campus events.
