Inspiration

We were interested in how people struggle with everyday smartphone tasks, especially older users who often get stuck and need to call family members for help. This inspired us to build a live AI agent that can watch the screen and guide users step by step so they can complete tasks on their own.

What it does

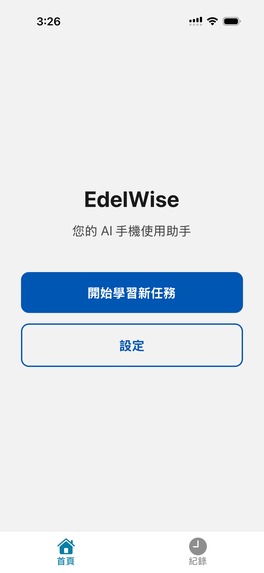

EdelWise is the user's trusty technology companion for navigating smartphone apps! When a user starts a task they are unfamiliar with, such as sending photos to friends, EdelWise listens to their voice and observes the current screen to understand what they are trying to do. A live AI agent then provides simple, step-by-step guidance to help them complete the task. In effect, EdelWise becomes a personal technology coach, guiding users through confusing app interactions and helping them gain confidence using their devices.

How we built it

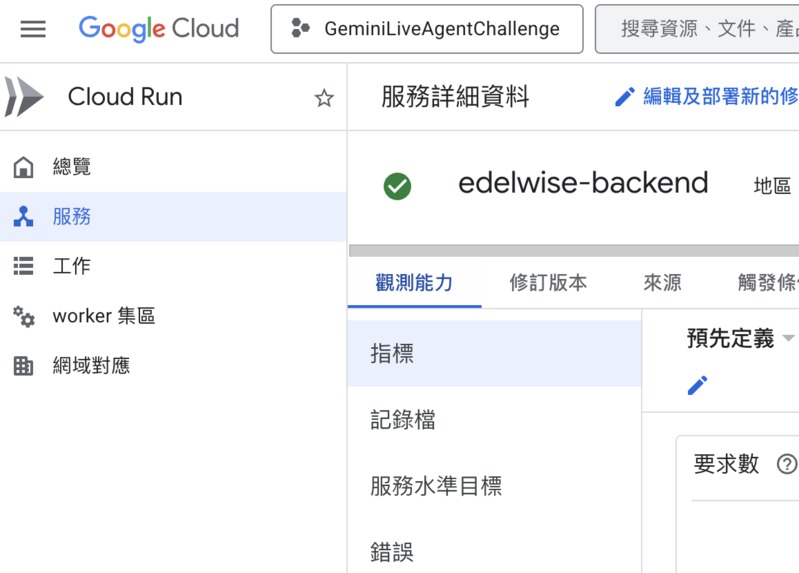

Using the Gemini Live API and Google Cloud services, we built a real-time multimodal AI agent that can understand both the user’s voice and the current smartphone screen. Our EdelWise mobile client captures voice input and periodic screenshots, which are streamed to a backend service that manages the session context and task state. The backend connects through GCP to the Gemini API, which analyzes the screen content and the conversation to determine the user’s current step and generate the next instruction. This lets the agent guide the user through the task one step at a time.
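To make the "session context and task state" idea concrete, here is a minimal sketch of how a backend could track which step the user is on. This is not our actual backend code. The names `Step`, `GuidanceSession`, `observe`, and `next_instruction` are hypothetical, and the screen labels (`"home"`, `"gallery"`, and so on) are stand-ins for whatever the model would report after analyzing a screenshot. No Gemini call is made here; the screen label is simply passed in.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    screen: str        # hypothetical label for the screen where this step happens
    instruction: str   # what the agent would say to the user on that screen


@dataclass
class GuidanceSession:
    goal: str
    steps: list[Step]
    current: int = 0                                   # index of the step the user is on
    history: list[str] = field(default_factory=list)   # every screen label seen so far

    def observe(self, screen: str) -> None:
        """Record the latest screen label and move to the matching step, if any."""
        self.history.append(screen)
        for index, step in enumerate(self.steps):
            if step.screen == screen:
                self.current = index
                return
        # Unknown screen: keep the current step so the user is not misdirected.

    def next_instruction(self) -> str:
        """Return the instruction for the step the user is currently on."""
        return self.steps[self.current].instruction


def demo() -> list[str]:
    """Walk through a made-up photo-sharing task, including one unexpected screen."""
    session = GuidanceSession(
        goal="send a photo to a friend",
        steps=[
            Step("home", "Open the Photos app."),
            Step("gallery", "Tap the photo you want to send."),
            Step("photo_view", "Tap the Share button."),
            Step("share_sheet", "Choose your friend's name."),
        ],
    )
    spoken = []
    for screen in ["home", "gallery", "settings", "photo_view"]:
        session.observe(screen)
        spoken.append(session.next_instruction())
    return spoken
```

In this sketch an unrecognized screen (the `"settings"` label in `demo`) leaves the current step unchanged, so the agent would repeat the last instruction rather than guess. A real system would likely ask the model for recovery guidance at that point.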

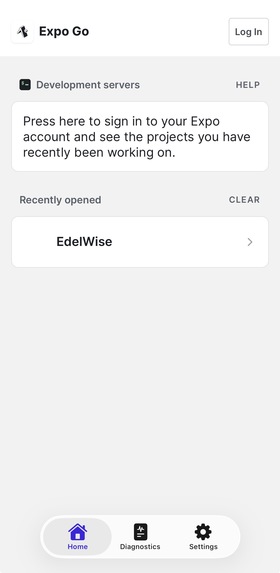

On the front end, we built a lightweight mobile interface that enables voice interaction, screen capture, and real-time guidance. We use Expo so that we can deploy on both Android and iOS. Our system presents instructions through both voice responses and simple on-screen prompts so users can easily follow each step without feeling overwhelmed. Our interface keeps the interaction simple and supportive, translating complex AI reasoning and screen analysis into clear guidance that helps users successfully complete the task.

Challenges we ran into

One big challenge was getting the GCP API key. At first we could not access the key, so we could not connect our system to the Gemini services. We had to spend a lot of time checking permissions and settings before it finally worked.

Another big problem came up when my teammate and I were coding at the same time. When we merged our work on different parts of the system, our Git repository sometimes had conflicts, which broke our code until we resolved them. It took us a lot of time to make sure our changes could be merged safely.

We also had trouble when we first connected to Google services like speech and other cloud tools. Setting everything up and making the services talk to each other took longer than we expected. Overall, integrating all these cloud services was harder than we thought.

Accomplishments that we're proud of

One thing we are proud of is that we actually built a working live AI agent. It can listen to the user’s voice and look at the phone screen at the same time, then figure out what the user is trying to do. The agent can understand the current step and give simple instructions to help the user move forward. We are also proud that the system does not control the phone for the user. Instead, it slowly guides them step by step so they can finish the task by themselves. Another thing we are happy about is that we managed to connect many different parts together, like the Gemini Live API, Google Cloud services, and our mobile app. Getting voice, screen understanding, and real-time guidance to work together was not easy, but in the end we made it work for our demo.

What we learned

During this project, we learned that building a real AI system is harder than we first thought. Many small things took a long time, like setting up cloud services, fixing API connections, and solving permission problems. We also learned that when many parts work together, like voice, screen understanding, and backend logic, even a small bug can stop everything. But we also saw how helpful multimodal AI can be. When the agent can hear the user and see the screen at the same time, it understands the situation much better and can give clearer help.

What's next for EdelWise

(1) To improve the screen understanding system so the agent can recognize more phone screens and guide users more accurately step by step. (2) To expand EdelWise to support more apps and everyday tasks, so older adults can get help with many common things they want to do on their phones.
