Inspiration

Autonomous vehicle perception systems need to be tested against a huge range of edge cases, but building those scenarios manually is slow, expensive, and hard to scale. We wanted to make that process faster and more accessible by letting someone describe a driving test in plain English and then automatically turning it into realistic 3D environments, detection outputs, and safety analysis. SynthDrive was inspired by the idea that AV testing should be as programmable and iterative as software testing.

What it does

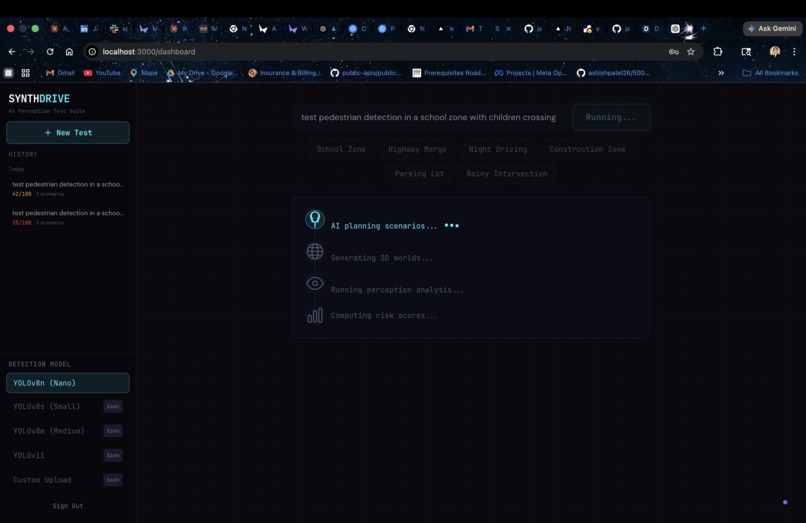

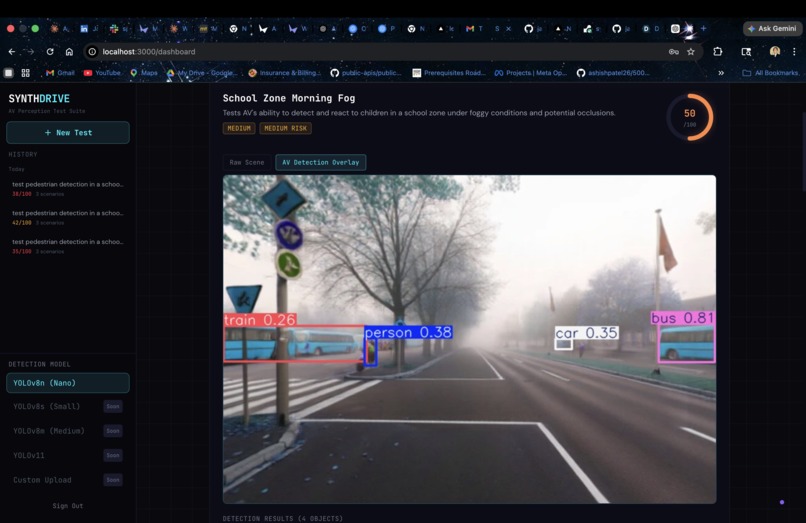

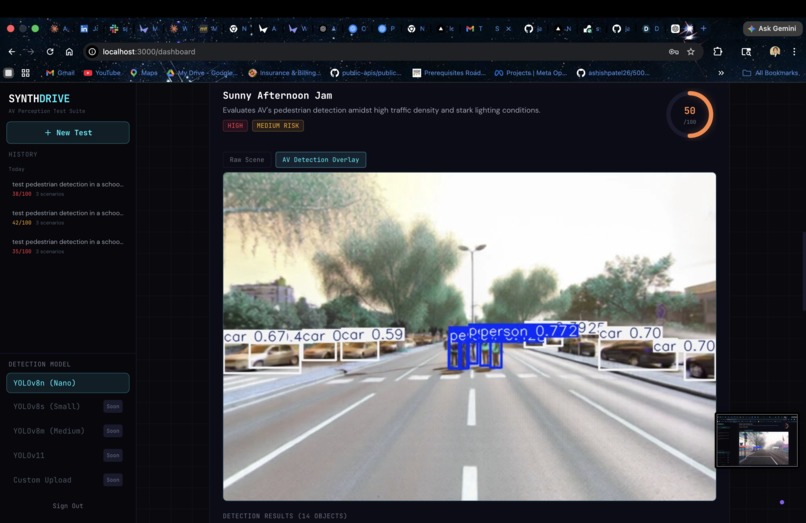

SynthDrive is an AI-powered AV perception test suite that generates synthetic 3D driving environments from natural language prompts, then evaluates how well an autonomous vehicle perception stack handles each one. A user can enter a prompt like “test pedestrian detection at night,” and the system generates multiple scenario variations, creates a 3D world for each one, runs YOLOv8 object detection on the resulting panoramas, and uses GPT-4o to produce a perception score, risk level, findings, and recommendations for each scene. The result is a full pipeline for quickly stress-testing AV perception in realistic and diverse conditions.
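As an illustration of the per-scene output described above, here is a minimal Python sketch of how an analysis returned as JSON text might be parsed and validated before it reaches the dashboard. The field names (`perception_score`, `risk_level`, `findings`, `recommendations`), the 0 to 100 score range, and the risk labels are all assumptions made for this example, not the project's actual schema.

```python
import json
from dataclasses import dataclass
from typing import List

# Assumed risk labels; the real project may use a different set.
RISK_LEVELS = {"low", "medium", "high", "critical"}


@dataclass
class SceneAnalysis:
    perception_score: float  # assumed to be on a 0-100 scale
    risk_level: str
    findings: List[str]
    recommendations: List[str]


def parse_analysis(raw: str) -> SceneAnalysis:
    """Parse a JSON analysis string and raise ValueError if it is malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"analysis is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("analysis must be a JSON object")

    score = data.get("perception_score")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise ValueError("perception_score must be a number")
    if not 0 <= score <= 100:
        raise ValueError("perception_score must be in [0, 100]")

    risk = str(data.get("risk_level", "")).lower()
    if risk not in RISK_LEVELS:
        raise ValueError(f"unknown risk_level: {risk!r}")

    findings = data.get("findings", [])
    recommendations = data.get("recommendations", [])
    for name, value in (("findings", findings), ("recommendations", recommendations)):
        if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
            raise ValueError(f"{name} must be a list of strings")

    return SceneAnalysis(float(score), risk, findings, recommendations)
```

Rejecting malformed responses at this boundary means the rest of the pipeline only ever sees a well-typed `SceneAnalysis`, and a bad response can be retried or reported instead of breaking the UI.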

How we built it

We built SynthDrive as a full-stack web app using Next.js 14, TypeScript, and Tailwind CSS for the frontend and API routes, with a separate FastAPI Python microservice for computer vision inference. The pipeline uses GPT-4o to expand a short user input into multiple detailed AV test scenarios, the World Labs Marble API to generate persistent 3D driving worlds and panorama images, and YOLOv8 to detect objects inside those scenes. After detection, we use GPT-4o again to analyze the results and generate a perception difficulty score, risk classification, and safety-focused recommendations. On the frontend, we present each scenario with an embedded 3D world viewer, raw vs. annotated panorama comparison, detection tables, and AI-generated analysis in a mission-control style dashboard.
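To make the hand-off from detection to analysis more concrete, here is a rough Python sketch of how raw detections could be condensed into a summary before being sent to the language model. It is not the project's actual code: the function name `summarize_detections`, the `(class_name, confidence)` tuple format, the 0.25 confidence threshold, and the idea of a per-scenario list of expected classes are assumptions made for illustration.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Detection = Tuple[str, float]  # (class_name, confidence); assumed format


def summarize_detections(
    expected: Iterable[str],
    detections: Iterable[Detection],
    min_conf: float = 0.25,  # assumed threshold, tune per model
) -> Dict[str, object]:
    """Compare confident detections against the classes a scenario expects."""
    # Count only detections at or above the confidence threshold.
    counts = Counter(name for name, conf in detections if conf >= min_conf)

    expected_set = set(expected)
    found = sorted(c for c in expected_set if counts[c] > 0)
    missed = sorted(expected_set - set(found))

    # Fraction of expected classes seen at least once (not per-object recall).
    class_recall = len(found) / len(expected_set) if expected_set else 1.0

    return {
        "counts": dict(counts),
        "found": found,
        "missed": missed,
        "class_recall": class_recall,
    }
```

For example, with expected classes `["person", "car", "traffic light"]` and detections containing a confident `person` and `car` but no `traffic light`, the summary lists `traffic light` under `missed`. A compact summary like this is easier for a language model to reason about than a raw list of bounding boxes.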

Challenges we ran into

One major challenge was orchestrating a multi-step pipeline where each layer depended on the previous one. Marble world generation is asynchronous and required polling until each world finished, which made reliability and state management important. Another challenge was making outputs from multiple AI systems work together cleanly: getting consistently structured JSON from GPT-4o, matching the AV-relevant object classes each scenario expected against the YOLO detections, and handling failures gracefully when a world generation step or detection result did not line up with what the scenario called for. We also had to balance speed and quality, which is why we used Marble 0.1-mini and YOLOv8n, the smallest YOLOv8 variant, for a faster demo-ready experience.
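The polling pattern mentioned above can be sketched generically in Python. This is a hedged illustration, not the Marble API: `fetch_status` stands in for whatever call returns a job's current status, and the `"state"` key with values `"done"` and `"failed"` is a hypothetical response shape chosen for the example.

```python
import time
from typing import Any, Callable, Dict


class WorldGenerationError(RuntimeError):
    """Raised when the (hypothetical) status reports a failed job."""


def poll_until_done(
    fetch_status: Callable[[], Dict[str, Any]],
    interval_s: float = 5.0,
    timeout_s: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, Any]:
    """Call fetch_status repeatedly until the job finishes, fails, or times out."""
    deadline = clock() + timeout_s
    while True:
        status = fetch_status()
        state = status.get("state")  # assumed field name
        if state == "done":
            return status
        if state == "failed":
            raise WorldGenerationError(status.get("error", "world generation failed"))
        # Any other state is treated as still in progress.
        if clock() >= deadline:
            raise TimeoutError(f"job not finished after {timeout_s} seconds")
        sleep(interval_s)
```

Passing `sleep` and `clock` as parameters keeps the loop testable without real waiting, and the explicit deadline prevents a stuck job from blocking the rest of the pipeline indefinitely.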

Accomplishments that we're proud of

We’re proud that SynthDrive connects four distinct layers into one seamless workflow: natural language scenario planning, 3D world generation, computer vision evaluation, and AI safety analysis. Instead of just generating pretty scenes, the system produces usable engineering feedback with scores, missed-object summaries, and risk reports. We’re also proud of the full-stack experience: users can go from a short text prompt to an interactive dashboard with 3D worlds, panorama comparisons, detection overlays, and downloadable assets in one place. Most importantly, the project shows how generative AI can become a practical tool for scientific and engineering validation, not just content creation.

What we learned

We learned how powerful multi-model AI pipelines can be when each model has a clearly defined role. LLMs were useful not only for generation, but also for structuring and interpreting outputs from other systems. We also learned that synthetic world generation can serve as a real testing interface for engineering workflows when combined with computer vision and clear evaluation metrics. From a systems perspective, we learned a lot about coordinating asynchronous APIs, parallelizing long-running tasks, and building a frontend that makes complex technical results understandable at a glance.
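One common way to parallelize long-running tasks like these in Python is `asyncio.gather` with a concurrency cap, so that one failed scenario does not cancel the others. The sketch below is illustrative only: `run_scenarios`, the `process` callback, and the limit of three concurrent jobs are assumptions, not a description of how SynthDrive schedules its work.

```python
import asyncio
from typing import Any, Awaitable, Callable, List, Tuple


async def run_scenarios(
    scenarios: List[str],
    process: Callable[[str], Awaitable[Any]],
    max_concurrency: int = 3,  # assumed limit to stay within API rate limits
) -> Tuple[List[Tuple[str, Any]], List[Tuple[str, BaseException]]]:
    """Run process() on every scenario concurrently and separate successes from failures."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(scenario: str) -> Any:
        # Only max_concurrency scenarios are processed at the same time.
        async with semaphore:
            return await process(scenario)

    # return_exceptions=True returns raised exceptions as results
    # instead of propagating the first one and abandoning the rest.
    outcomes = await asyncio.gather(
        *(guarded(s) for s in scenarios), return_exceptions=True
    )

    succeeded = [(s, o) for s, o in zip(scenarios, outcomes) if not isinstance(o, BaseException)]
    failed = [(s, o) for s, o in zip(scenarios, outcomes) if isinstance(o, BaseException)]
    return succeeded, failed
```

Because `asyncio.gather` preserves input order, each outcome can be paired back with its scenario, which lets a dashboard show partial results alongside a clear error for any scenario that failed.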

What's next for SynthDrive

Next, we want to make SynthDrive more realistic, scalable, and useful for AV teams. That includes supporting more advanced perception models beyond YOLOv8, expanding into segmentation and tracking, adding temporal testing across video sequences instead of single panoramas, and building a larger scenario library for common AV failure cases like occlusions, emergency vehicles, glare, and rare pedestrian behavior. We also want to improve benchmarking by comparing multiple perception systems side by side, storing historical test runs, and turning SynthDrive into a repeatable regression testing platform for autonomous driving safety. The long-term vision is to help engineers generate and evaluate hundreds of edge-case driving scenarios far faster than traditional manual simulation workflows.

Built With

- next.js-14

- numpy

- openai-gpt-4o

- opencv

- pillow

- react

- tailwind

- typescript

- ultralytics

- yolov8
