by Malcolm Murray

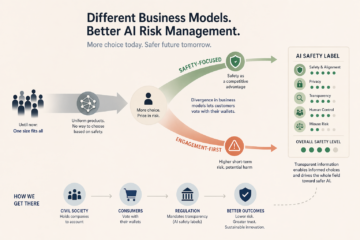

The AI market continues to evolve and surprise. In recent months, Anthropic withheld their latest model Mythos, OpenAI made a U-turn and started experimenting with ads, and Meta bought a “social network for AIs”. This could point to increased divergence in AI companies’ business models. While this might increase AI risk to society in the short term, it is likely a good thing for managing risks in the longer term. It should be encouraged.

AI products have up until now been strikingly uniform

Until now, the AI market has been “one size fits all”. All main providers operate by the same playbook and offer similar products. After ChatGPT was launched, similar chatbots quickly followed from Anthropic and xAI. After Anthropic’s success with Claude Code, its competitors quickly launched copycat products. Each time a major model is released, it inevitably shoots to the top of leaderboards; just as inevitably, it is shortly thereafter dethroned.

The only difference so far has been “open-source” versus “closed-source” models. OpenAI, Anthropic and others have mostly released models as closed source. This means the company hosts the model and the user accesses it through an interface (e.g. a chat window). Revenue in this model comes from product subscriptions. Conversely, companies such as Meta have chosen to release their models mostly as open source. This means the user can run the model locally and make adjustments to it. Revenue in this case comes from hosting, consulting and partnerships. However, even this distinction has become blurrier. OpenAI has released its first open-source model in many years, and Meta backtracked on its “open-sourcing-to-AGI” strategy and released a closed model.

Increased product differentiation allows customers to take safety into account

Greater differentiation of products and business models would help manage AI risks by making it easier to “price in risk”, a finance term for letting customers take risk into account in their purchases. Other industries allow customers to “vote with their wallet”. When a consumer buys a household appliance, its energy rating shows its energy efficiency. Groceries have nutrition ratings and cars have safety ratings. In financial markets, ratings from S&P or Moody’s mean the buyer clearly knows how much risk they take on.

Recent events suggest potential differentiation in the making

Up until now, nothing similar has existed for AI. Products are uniform and the customer has no way of choosing based on safety. Recent events suggest this may now be changing.

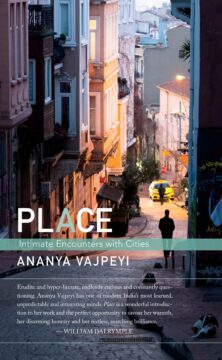

In the early 1990s, I began listening to qawwali in a serious way. In 1994 I happened upon a recording of one of the great performances of the Pakistani maestro, Nusrat Fateh Ali Khan. He must have been addressing an audience outside South Asia because he began the concert with a sentence in English (of sorts). “Now we are singing,” he announced in his gravelly voice and thick Punjabi accent, “a poetry in the Persian.” Without further preamble he and his troupe began to sing. For many years now I’ve tried to correct the sentence in my mind. Poetry in the Persian. Poetry in Persian. A poem in the Persian. A poem in Persian. But it never sounds quite right, except in Nusrat’s idiosyncratic grammar: Now we are singing a poetry in the Persian.

Set over a single weekend, Thammika Songkaeo’s novel

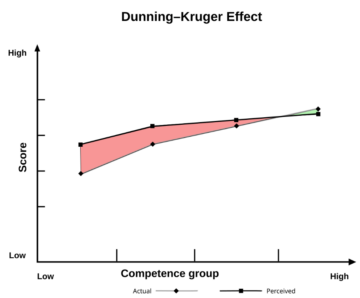

The Debunking Handbook, 2020,

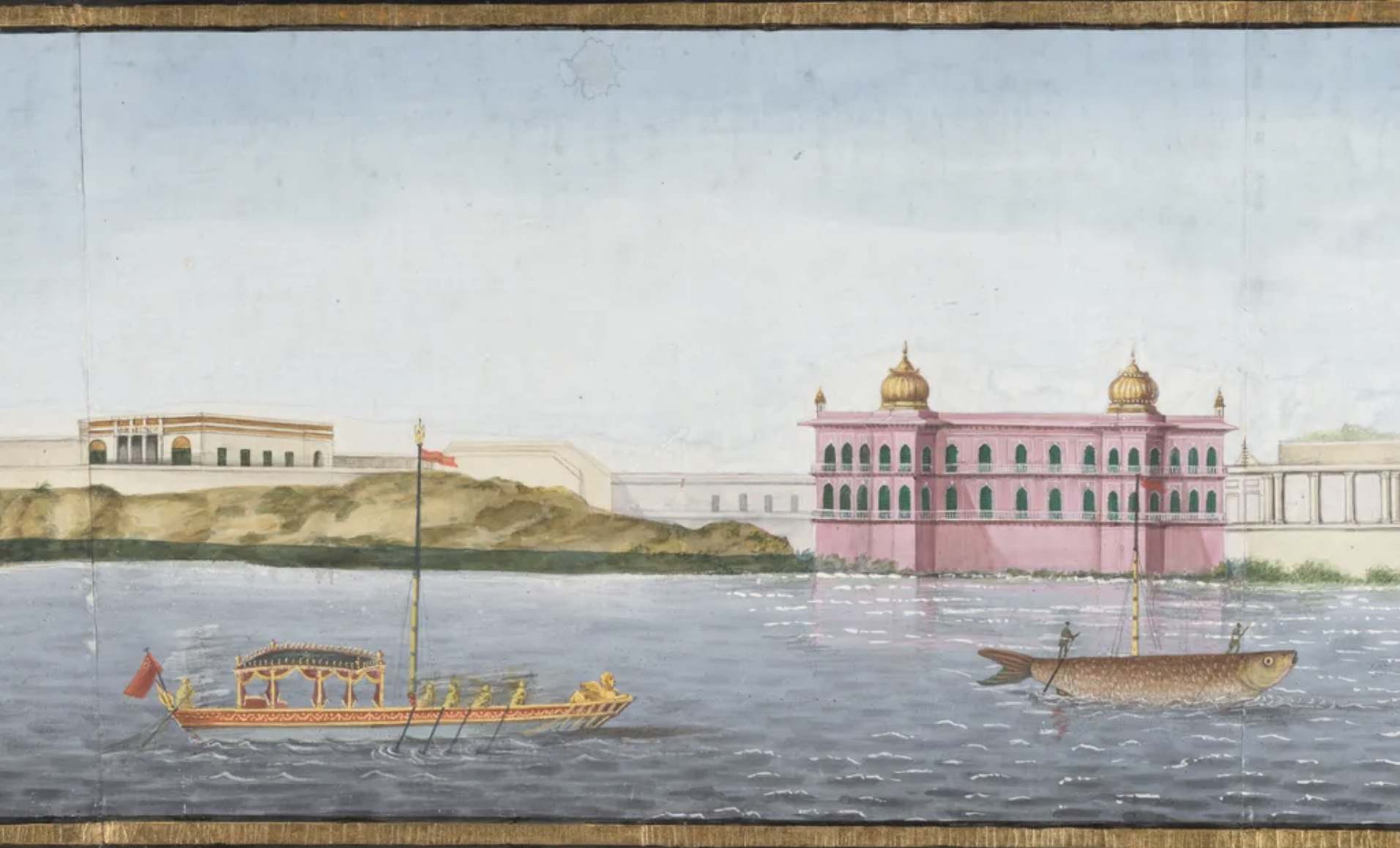

Artist not known. Panorama of Lucknow From The Gomti, 1821-1826. (Detail from a scroll 31 cm x 1128 cm.)

On Thursday this week I will join two of my colleagues—the mezzo Annina Haug and the pianist Edward Rushton—to present a program of poems by French authors to a private audience. We are staging our concert in Zurich, at the home of a descendant of one of those authors, the renowned Swiss-French clown and musician
