Executive Summary

For over a decade, SOAR platforms defined SOC automation through static playbooks. That model has reached its ceiling: coverage caps at 30–40%, maintenance scales linearly, and novel attacks go uninvestigated.

The AI Autonomous SOC is a fundamentally different architecture. A purpose-trained cybersecurity LLM investigates every alert autonomously: tracing attack paths, correlating across the full stack, and generating bespoke response playbooks at runtime.

D3 Security’s Morpheus AI is the operational standard for this model. It runs multi-dimensional Attack Path Discovery on every alert, reaches L2 depth in under 2 minutes, and maintains self-healing integrations across 800+ tools.

Key question: What is the structural difference between SOAR and an AI SOC — and why does it determine whether your SOC investigates 30% of alerts or 100%?

The SOC Reality in Numbers

Enterprise SOCs face a structural capacity problem. Alert volumes grow exponentially while analyst teams remain flat. The gap is not operational — it is architectural. Static playbook-based automation cannot bridge it.

| Metric | Industry Finding | Source |
|---|---|---|
| Daily alert volume | 4,400+ per enterprise; 10,000+ for large organizations | Industry benchmarks, 2025 |
| Alerts never investigated | 40% of total volume | SACR 2025 (300+ CISOs) |
| Confirmed threats ignored | 61% of SOC teams have missed real compromises | SOC analyst surveys, 2024–2025 |
| Analyst burnout | 70%+ report burnout | Multiple industry surveys |
| SOAR coverage ceiling | 30–40% maximum | Gartner; operational data |
| Morpheus AI triage time | < 2 min per alert at L2 depth | D3 Security production data |

The capacity gap is architectural, not operational. More SOAR playbooks, better SOC staffing, and faster tools cannot overcome the structural limits of static automation.

What Is SOAR?

SOAR — Security Orchestration, Automation, and Response — automates incident response through static, predefined playbooks. A SOAR architect designs multi-step workflows (250–500 steps) that execute the same logic every time an alert matches a rule.
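
To make the rule-matching model concrete, here is a minimal Python sketch of static playbook dispatch. The `Alert` class, the `PLAYBOOKS` table, and the alert type names are illustrative assumptions, not any vendor's actual schema. It shows how an alert with no matching rule falls through without any automation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Alert:
    alert_type: str
    source: str


# Hypothetical rule table: alert type -> predefined playbook.
PLAYBOOKS: dict[str, Callable[[Alert], str]] = {
    "phishing_email": lambda a: f"quarantine message reported by {a.source}",
    "malware_detected": lambda a: f"isolate host flagged by {a.source}",
}


def dispatch(alert: Alert) -> Optional[str]:
    """Run the playbook for this alert type, or return None if no rule matches."""
    playbook = PLAYBOOKS.get(alert.alert_type)
    return playbook(alert) if playbook else None


alerts = [
    Alert("phishing_email", "mail-gateway"),
    Alert("malware_detected", "edr"),
    Alert("unusual_oauth_grant", "identity-provider"),  # no playbook exists
]
results = [dispatch(a) for a in alerts]

# Alert types that received no automation at all.
unhandled = [a.alert_type for a, r in zip(alerts, results) if r is None]
```

In this sketch the third alert returns `None`: nothing runs for it until someone designs, tests, and deploys a new playbook.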

What SOAR Does Well

- Standardizes response procedures across the SOC

- Orchestrates tool-to-tool actions without manual copy-paste

- Documents steps for compliance and audit trails

- Reduces analyst toil on routine, predictable tasks

Five Structural Limitations of SOAR

| Limitation | What Happens | Operational Impact |
|---|---|---|
| SOAR Architect Dependency | Every new workflow requires a specialist to design and test 100+ steps. | 6–8 week latency between threat discovery and playbook deployment. |
| Static Coverage Ceiling | SOAR can only respond to alerts it was explicitly programmed to handle. | 60–70% of daily alerts receive no automation; manual analyst review required. |
| Integration Brittleness | Tool updates, API changes, or credential rotation breaks playbooks silently. | Maintenance overhead grows 15–20% annually; failed automations undetected. |
| No Investigation Capability | SOAR cannot independently correlate signals or trace attack chains. | Analysts still perform 60–80% of investigation work manually. |
| Linear Maintenance Scaling | Each new playbook adds operational burden; no learning or knowledge transfer. | Maintenance cost per playbook ≈ design cost. System becomes increasingly fragile. |

What Is an AI SOC?

An AI Autonomous SOC replaces static playbooks with a purpose-trained cybersecurity LLM that performs autonomous investigation and response. The model investigates every alert — not just the ones matching a predefined rule.

How an AI SOC Works

The AI SOC performs vertical and horizontal correlation automatically. Vertical correlation traces a single alert back to root indicators — IP reputation, domain registration patterns, TLS certificates. Horizontal correlation finds signals across the full stack: network logs, endpoint telemetry, cloud events, identity systems. Attack path tracing connects these signals into a narrative that explains what happened and what to do.
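
The two correlation directions can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the event fields, the `IP_REPUTATION` lookup, and the function names are hypothetical, and a production system would reason over far richer data than shared IPs and usernames.

```python
# Hypothetical events from different layers of the stack.
events = [
    {"source": "network", "ip": "203.0.113.7", "user": None, "detail": "outbound beacon"},
    {"source": "endpoint", "ip": "203.0.113.7", "user": "jdoe", "detail": "powershell spawned"},
    {"source": "identity", "ip": None, "user": "jdoe", "detail": "mfa reset"},
    {"source": "cloud", "ip": "198.51.100.2", "user": "asmith", "detail": "bucket listing"},
]

# Hypothetical enrichment data standing in for a reputation service.
IP_REPUTATION = {"203.0.113.7": "known C2"}


def vertical(alert):
    """Vertical correlation: enrich one alert with root indicators (IP reputation only here)."""
    return {"ip_reputation": IP_REPUTATION.get(alert["ip"], "unknown")}


def horizontal(alert, all_events):
    """Horizontal correlation: gather events that share an IP or user, transitively."""
    seen_ips, seen_users = {alert["ip"]}, {alert["user"]}
    related = []
    changed = True
    while changed:
        changed = False
        for e in all_events:
            if e in related:
                continue
            if (e["ip"] and e["ip"] in seen_ips) or (e["user"] and e["user"] in seen_users):
                related.append(e)
                seen_ips.add(e["ip"])
                seen_users.add(e["user"])
                changed = True
    return related


alert = events[0]
indicators = vertical(alert)
related = horizontal(alert, events)

# Attack path tracing: order the linked signals into a readable narrative.
narrative = " -> ".join(f"{e['source']}: {e['detail']}" for e in related)
```

Here the network alert links to the endpoint event through the shared IP, and then to the identity event through the shared user, while the unrelated cloud event stays out of the narrative.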

Core AI SOC Capabilities

| Capability | Description | Why It Matters |
|---|---|---|
| Attack Path Discovery | Traces threats across every data source without predefined rules. | Catches novel attacks and attack variations SOAR cannot recognize. |
| Contextual Playbook Generation | Generates bespoke, one-time response workflows based on investigation findings. | No waiting for architects. Response generated in seconds, not weeks. |
| Self-Healing Integrations | Detects and corrects API breaks, auth failures, and credential drift automatically. | Eliminates 70%+ of SOAR maintenance overhead. |
| Continuous Learning | Model improves across the entire SOC population, not isolated playbooks. | Threat intelligence shared across all customers. Response quality improves continuously. |

The fundamental shift: SOAR automates tasks. An AI SOC automates outcomes.

SOAR vs. AI SOC: Head-to-Head

This comparison shows why the AI SOC model fundamentally changes SOC economics. It is not an incremental improvement — it is a structural shift.

| Dimension | Traditional SOAR | AI SOC (Morpheus AI) |
|---|---|---|
| Automation Model | Task automation via static playbooks | Outcome automation via LLM reasoning |
| Alert Coverage | 30–40% of daily volume | 100% of alerts investigated |
| Investigation Depth | Orchestrates predefined steps only | L2 analyst depth on every alert |
| Playbook Creation | 6–8 weeks by SOAR architect | Seconds at alert time |
| Time to Triage | 20+ minutes (manual analyst review) | < 2 minutes (fully autonomous) |
| Integration Maintenance | Reactive, 15–20% annual overhead growth | Proactive self-healing, ~5% overhead |
| Handling Novel Threats | Requires new playbook design | Investigates autonomously, learns |
| Staffing Requirement | SOAR architect + analysts | Analysts only (AI provides expertise) |
| Scaling Model | Linear: more playbooks = more cost | Logarithmic: knowledge shared globally |
| Analyst Experience | Still manual on 60–70% of work | Focused on high-value investigation |
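
A quick back-of-the-envelope calculation shows what the triage-time row implies at the daily alert volume cited earlier. This sketch treats "20+ minutes" and "< 2 minutes" as point values of 20 and 2, and the 8-hour shift is an assumption introduced only for illustration.

```python
ALERTS_PER_DAY = 4_400   # daily alert volume cited in this paper
MANUAL_MINUTES = 20      # "20+ minutes" treated as exactly 20
AUTONOMOUS_MINUTES = 2   # "< 2 minutes" treated as exactly 2
SHIFT_HOURS = 8          # assumption: one analyst shift


def triage_hours(alerts: int, minutes_per_alert: float) -> float:
    """Total hours needed to triage every alert once."""
    return alerts * minutes_per_alert / 60


manual_hours = triage_hours(ALERTS_PER_DAY, MANUAL_MINUTES)
autonomous_hours = triage_hours(ALERTS_PER_DAY, AUTONOMOUS_MINUTES)

# Analyst shifts per day needed to manually triage every alert.
manual_shifts = manual_hours / SHIFT_HOURS
```

Under these assumptions, manually triaging every alert would take roughly 1,467 analyst-hours a day, or about 183 eight-hour shifts. The same volume at 2 minutes per alert is roughly 147 hours of machine processing time.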

Why SOAR Has Reached Its Ceiling

The market is signaling that static playbook automation cannot scale. Industry analysts, government agencies, and security leaders now openly acknowledge the limitations of the SOAR model.

Gartner — SOAR in the Trough of Disillusionment (2024 Hype Cycle)

Gartner’s 2024 Hype Cycle for Security confirms SOAR has entered the “Trough of Disillusionment.” Organizations report deployment challenges, playbook maintenance burden, and the realization that static automation cannot handle modern threat volume and complexity.

GigaOm Renames the Category (SOAR Radar → SecOps Automation Radar, 2025)

In 2025, GigaOm renamed its SOAR analysis to “SecOps Automation Radar,” explicitly moving beyond playbook-centric evaluation. The vendor landscape has shifted from “How do we build better SOAR?” to “What comes after SOAR?”

CISA and NSA Joint Guidance (May 2025)

CISA and NSA jointly published guidance stating that SOAR platforms cannot be deployed as “set-and-forget” solutions. Regular playbook audits, continuous integration testing, and aggressive investment in architect resources are now mandatory practices — revealing the true operational cost.

This is not a vendor failure. It is a structural failure. Static automation hits a hard ceiling at roughly 40% coverage. The question is no longer whether to move beyond SOAR; it is how fast.

The Natural Language Overlay Trap

Some SOAR vendors have added natural language interfaces on top of their existing playbook engines. This is not an AI SOC. It is a SOAR system with a friendlier user interface — the underlying architecture remains static and limited.

What NL Overlays Do vs. What They Don’t

| Capability | NL Overlay on SOAR | AI SOC (True AI) |
|---|---|---|
| Convert text to playbook steps | ✓ Yes | ✓ Yes |
| Investigate alert without predefined rule | ✗ No | ✓ Yes |
| Correlate signals across data sources | ✗ No | ✓ Yes |
| Reason about novel attacks | ✗ No | ✓ Yes |

A natural language interface is a convenience layer. It speeds up workflow authoring but does not change the underlying fact that only 30–40% of alerts can be covered. The LLM is present only at design time, not at investigation time.

A true AI SOC deploys the LLM at alert time. Every alert, every signal, every piece of context is fed to a trained cybersecurity model that reasons about the threat in real-time. The LLM is the investigation engine, not just a development tool.

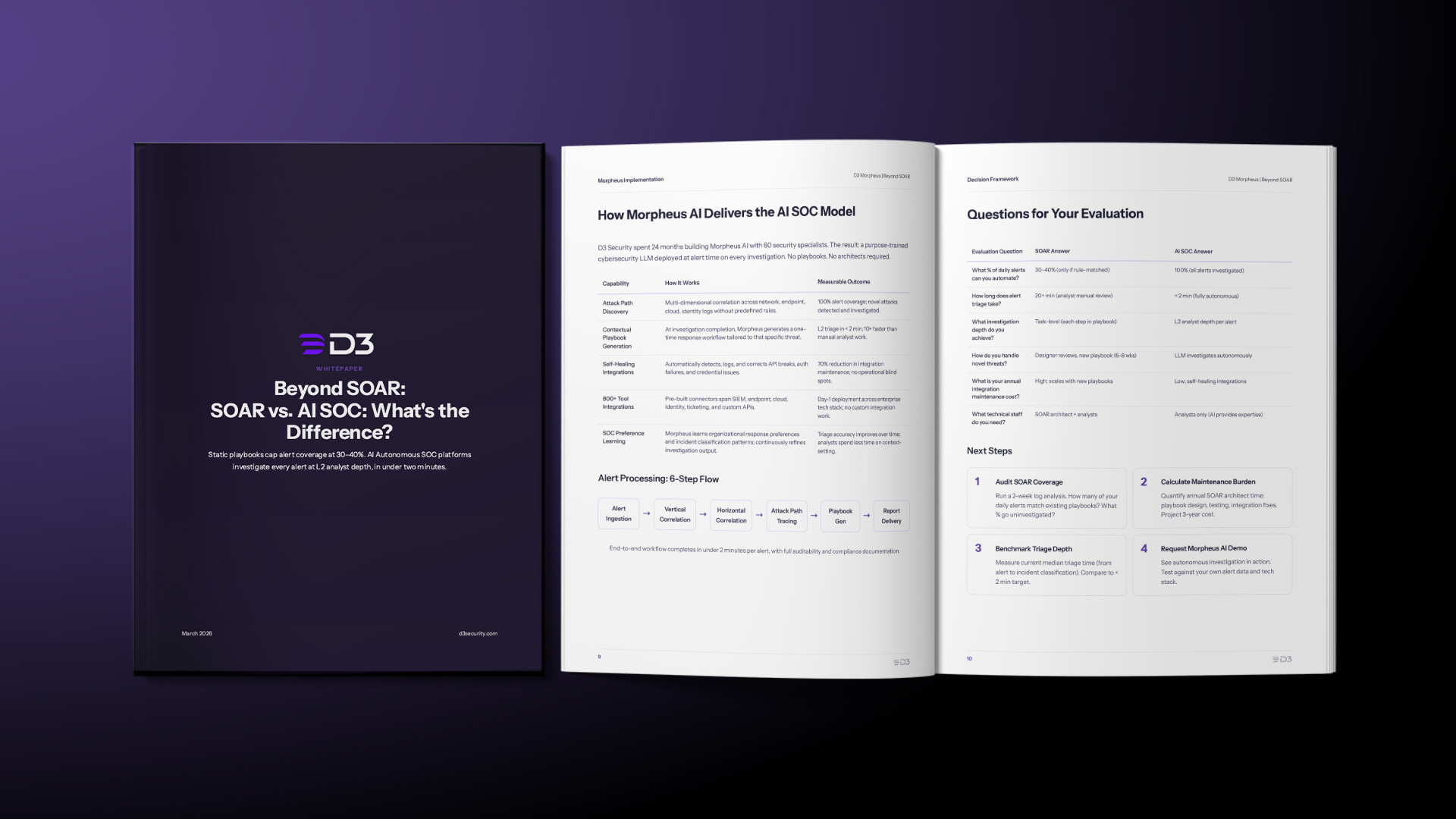

How Morpheus AI Delivers the AI SOC Model

D3 Security spent 24 months building Morpheus AI with 60 security specialists. The result: a purpose-trained cybersecurity LLM deployed at alert time on every investigation. No playbooks. No architects required.

| Capability | How It Works | Measurable Outcome |
|---|---|---|
| Attack Path Discovery | Multi-dimensional correlation across network, endpoint, cloud, identity logs without predefined rules. | 100% alert coverage; novel attacks detected and investigated. |
| Contextual Playbook Generation | At investigation completion, Morpheus generates a one-time response workflow tailored to that specific threat. | L2 triage in < 2 min; 10× faster than manual analyst work. |
| Self-Healing Integrations | Automatically detects, logs, and corrects API breaks, auth failures, and credential issues. | 70% reduction in integration maintenance; no operational blind spots. |
| 800+ Tool Integrations | Pre-built connectors span SIEM, endpoint, cloud, identity, ticketing, and custom APIs. | Day-1 deployment across enterprise tech stack; no custom integration work. |
| SOC Preference Learning | Morpheus learns organizational response preferences and incident classification patterns; continuously refines investigation output. | Triage accuracy improves over time; analysts spend less time on context-setting. |
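
The general idea behind a self-healing integration (detect the failure, log it, correct it, retry) can be illustrated with a small Python sketch. It is not Morpheus AI's actual mechanism: `FakeConnector`, `AuthExpired`, and `call_with_healing` are hypothetical names, and the only failure handled here is an expired credential.

```python
class AuthExpired(Exception):
    """Raised by the hypothetical connector when its credential is rejected."""


class FakeConnector:
    """Stand-in for a tool integration whose token has silently expired."""

    def __init__(self):
        self.token = "expired"
        self.refreshes = 0

    def refresh_token(self):
        self.token = "valid"
        self.refreshes += 1

    def call(self, action):
        if self.token != "valid":
            raise AuthExpired("401 from tool API")
        return f"{action}: ok"


def call_with_healing(connector, action, log, max_attempts=2):
    """Try the action; on an auth failure, log it, refresh the credential, and retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return connector.call(action)
        except AuthExpired as exc:
            log.append(f"attempt {attempt} failed: {exc}")
            connector.refresh_token()
    raise RuntimeError(f"{action} failed after {max_attempts} attempts")


log = []
connector = FakeConnector()
result = call_with_healing(connector, "block_ip", log)
```

The point of the sketch is that the failure is recorded rather than silent: the log holds one entry for the rejected call, and the action still completes on the second attempt.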

Alert Processing: 6-Step Flow

Alert Ingestion

Alerts from SIEM, EDR, cloud, and identity sources are ingested into the Morpheus platform.

Vertical Correlation

Each alert is traced back to root indicators — IP reputation, domain registration patterns, TLS certificates.

Horizontal Correlation

Signals are correlated across the full security stack: network logs, endpoint telemetry, cloud events, identity systems.

Attack Path Tracing

Connected signals are assembled into a threat narrative explaining what happened and what to do.

Playbook Generation

A bespoke, one-time response workflow is generated based on investigation findings.

Report Delivery

A full investigation report, with auditability and compliance documentation, is delivered to analysts.

End-to-end workflow completes in under 2 minutes per alert, with full auditability and compliance documentation.
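
The six steps above can be read as a pipeline in which each stage enriches a shared case record. The following Python sketch shows that shape only. Every function body is a placeholder, the field names are assumptions, and the real investigation logic at each stage is far more involved.

```python
def ingest(case):
    # Step 1: normalize the raw alert (fields are placeholders).
    case["alert"] = {"id": case["raw"]["id"], "ip": case["raw"]["ip"]}
    return case


def vertical_correlation(case):
    # Step 2: trace the alert back to root indicators.
    case["indicators"] = {"ip": case["alert"]["ip"]}
    return case


def horizontal_correlation(case):
    # Step 3: a real system would query the stack; this is a fixed stand-in.
    case["related_sources"] = ["network", "endpoint"]
    return case


def attack_path_tracing(case):
    # Step 4: assemble linked signals into a narrative.
    case["narrative"] = " -> ".join(case["related_sources"])
    return case


def playbook_generation(case):
    # Step 5: build a one-time response from the findings.
    case["playbook"] = [f"block {case['indicators']['ip']}"]
    return case


def report_delivery(case):
    # Step 6: package findings together with the audit trail.
    case["report"] = {
        "alert_id": case["alert"]["id"],
        "narrative": case["narrative"],
        "playbook": case["playbook"],
        "audit": list(case["audit"]),
    }
    return case


STAGES = [ingest, vertical_correlation, horizontal_correlation,
          attack_path_tracing, playbook_generation, report_delivery]


def run_pipeline(raw_alert):
    """Run all six stages in order, recording each stage name for auditability."""
    case = {"raw": raw_alert, "audit": []}
    for stage in STAGES:
        case["audit"].append(stage.__name__)
        case = stage(case)
    return case["report"]


report = run_pipeline({"id": "A-1", "ip": "203.0.113.7"})
```

Recording each stage name before it runs means the final report carries the complete sequence of steps, which is one simple way to keep the workflow auditable.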

Questions for Your Evaluation

| Evaluation Question | SOAR Answer | AI SOC Answer |
|---|---|---|
| What % of daily alerts can you automate? | 30–40% (only if rule-matched) | 100% (all alerts investigated) |
| How long does alert triage take? | 20+ min (analyst manual review) | < 2 min (fully autonomous) |
| What investigation depth do you achieve? | Task-level (each step in playbook) | L2 analyst depth per alert |
| How do you handle novel threats? | Designer reviews, new playbook (6–8 wks) | LLM investigates autonomously |
| What is your annual integration maintenance cost? | High; scales with new playbooks | Low; self-healing integrations |
| What technical staff do you need? | SOAR architect + analysts | Analysts only (AI provides expertise) |

Next Steps

Audit SOAR Coverage

Run a 2-week log analysis. How many of your daily alerts match existing playbooks? What % go uninvestigated?
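
A coverage audit of this kind reduces to two percentages. The sketch below assumes each exported alert record carries two boolean fields, `playbook_matched` and `investigated`. Those field names are hypothetical and would need to be mapped to whatever your SIEM or SOAR export actually provides.

```python
def coverage(alert_log):
    """Return the share of alerts matched by a playbook and the share never investigated."""
    total = len(alert_log)
    if total == 0:
        raise ValueError("alert log is empty")
    matched = sum(1 for a in alert_log if a["playbook_matched"])
    uninvestigated = sum(1 for a in alert_log if not a["investigated"])
    return {
        "matched_pct": 100 * matched / total,
        "uninvestigated_pct": 100 * uninvestigated / total,
    }


# Synthetic 10-alert sample: 3 matched a playbook, 6 were investigated.
sample_log = [
    {"playbook_matched": i < 3, "investigated": i < 6}
    for i in range(10)
]
summary = coverage(sample_log)
```

For this synthetic sample, `summary` reports 30% playbook coverage and 40% uninvestigated. Running the same function over two weeks of real alert exports gives the baseline the audit asks for.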

Calculate Maintenance Burden

Quantify annual SOAR architect time: playbook design, testing, integration fixes. Project 3-year cost.
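
A simple compounding projection is enough for a first estimate. In this sketch the base hours and hourly rate are made-up inputs, and the 20% growth rate is the upper end of the 15–20% annual maintenance growth cited earlier in this paper. Substitute your own measured figures.

```python
def project_cost(base_annual_hours, hourly_rate, annual_growth, years=3):
    """Return the projected maintenance cost for each year, compounding hours annually."""
    costs = []
    hours = base_annual_hours
    for _ in range(years):
        costs.append(hours * hourly_rate)
        hours *= 1 + annual_growth
    return costs


# Hypothetical inputs: 1,000 architect hours per year at $100 per hour.
yearly_costs = project_cost(base_annual_hours=1_000, hourly_rate=100, annual_growth=0.20)
three_year_total = sum(yearly_costs)
```

With these illustrative inputs the yearly costs come to about $100,000, $120,000, and $144,000, for a three-year total of about $364,000.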

Benchmark Triage Depth

Measure current median triage time (from alert to incident classification). Compare to < 2 min target.
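
Median triage time can be computed directly from paired timestamps. The sketch below assumes you can export, for each alert, the time it fired and the time it was classified. The sample records are synthetic.

```python
from datetime import datetime
from statistics import median

TARGET_MINUTES = 2  # the "< 2 min" target referenced above


def median_triage_minutes(records):
    """Median minutes from alert creation to incident classification."""
    durations = [(done - start).total_seconds() / 60 for start, done in records]
    return median(durations)


# Synthetic (alert_time, classified_time) pairs: 5, 20, and 35 minutes.
records = [
    (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 9, 5)),
    (datetime(2025, 1, 6, 10, 0), datetime(2025, 1, 6, 10, 20)),
    (datetime(2025, 1, 6, 11, 0), datetime(2025, 1, 6, 11, 35)),
]
current_median = median_triage_minutes(records)
meets_target = current_median < TARGET_MINUTES
```

The median is used rather than the mean so that a handful of long-running investigations does not distort the benchmark.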

Request Morpheus AI Demo

See autonomous investigation in action. Test against your own alert data and tech stack.