Inspiration

One of our team members has worked with children who live with physical disabilities. Despite limited fine motor control, many still retain full use of facial muscles and larger muscle groups. This inspired us to imagine a more inclusive way to use computers—one that doesn’t rely on a keyboard or mouse. Our mission: give users complete control using only their head movements, eye gestures, and voice.

What it does

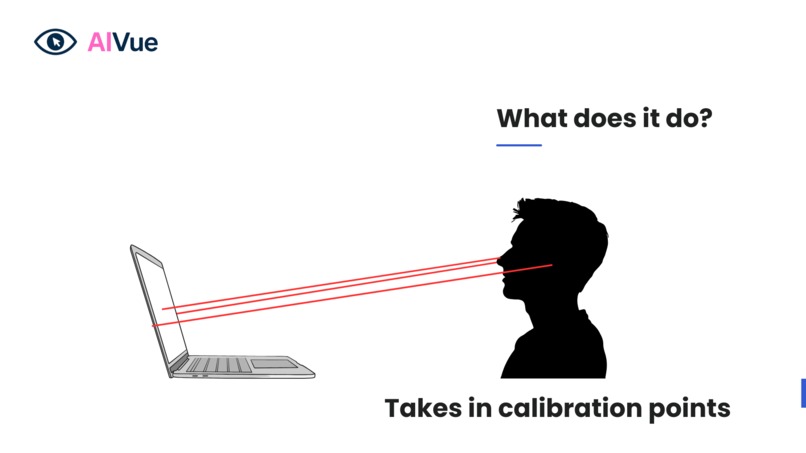

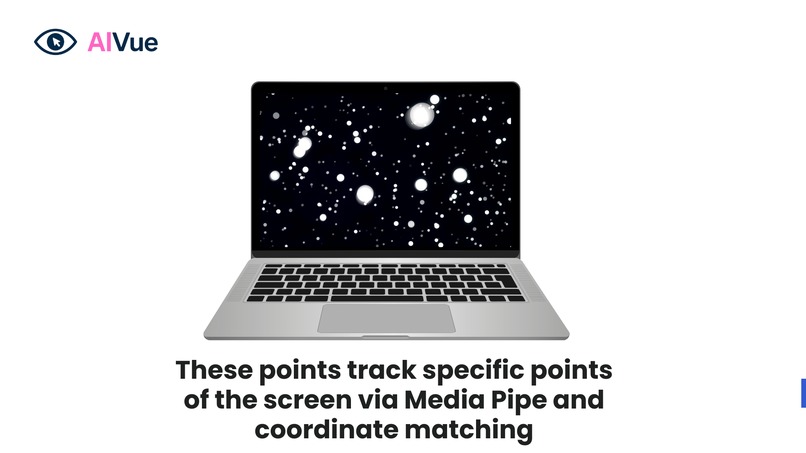

AIVue: Control with Vision is a hands-free computer interface that empowers individuals with physical disabilities. It combines:

• Eye and head tracking to move the mouse using just head motion

• Blink detection for left and right mouse clicks

• Speech-to-text voice commands using Vosk

• An intelligent agent powered by Gemini framework principles that understands intent and executes tasks like opening apps or browsing

• A MongoDB cloud backend that logs real-time data (such as eye visibility, click events, and head pitch) for debugging and adaptability

With AIVue, users can fully operate a computer without using their hands.
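
To make the blink-to-click idea concrete, here is a minimal sketch of one common heuristic, the eye aspect ratio (EAR). This write-up does not say which method AIVue actually uses, so treat this as an illustration only. The six-landmark ordering, the `0.2` threshold, the `3`-frame minimum, and the names `eye_aspect_ratio` and `BlinkDetector` are all assumptions; in practice the points would come from a face-landmark model such as MediaPipe.

```python
import math


def eye_aspect_ratio(pts):
    """Eye openness from six (x, y) landmarks ordered p1..p6.

    Assumed ordering: p1 and p4 are the eye corners, p2 and p3 lie on
    the upper lid, p6 and p5 lie on the lower lid directly below them.
    """
    p1, p2, p3, p4, p5, p6 = pts
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


class BlinkDetector:
    """Reports a deliberate blink when the eye reopens after a long closure."""

    def __init__(self, threshold=0.2, min_frames=3):
        self.threshold = threshold    # EAR below this counts as closed (assumed value)
        self.min_frames = min_frames  # filters out short, natural blinks (assumed value)
        self._closed_frames = 0

    def update(self, ear):
        """Feed one EAR value per video frame; returns True once per blink."""
        if ear < self.threshold:
            self._closed_frames += 1
            return False
        blinked = self._closed_frames >= self.min_frames
        self._closed_frames = 0
        return blinked
```

One detector per eye would allow a left-eye blink to trigger a left click and a right-eye blink to trigger a right click, with both thresholds tuned per user during calibration.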

How we built it

We used a combination of technologies to make AIVue possible:

• Python for core logic and integration

• MediaPipe and OpenCV for real-time face, eye, and head tracking

• PyQt6 for building a clean and intuitive user interface

• PyAutoGUI to control mouse behavior

• Vosk for offline speech recognition

• MongoDB Atlas hosted on Google Cloud to log user actions and enable cloud-based analytics

• Kalman filters to smooth cursor movement for a better user experience

• An intent-based system modeled on Gemini’s agentic architecture to enable natural language interaction
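
The cursor smoothing can be sketched as follows. This is a simplified, dependency-free version that runs one scalar Kalman filter per axis with a constant-position model; the project's actual filter design is not described here, and the class names and the two variance values are assumptions chosen for illustration.

```python
class ScalarKalman:
    """1-D Kalman filter that assumes the true position changes slowly."""

    def __init__(self, process_var=1e-3, measurement_var=1e-1):
        self.q = process_var      # how much the true position may drift per step
        self.r = measurement_var  # how noisy each tracked position is
        self.x = None             # current estimate
        self.p = 1.0              # variance of the estimate

    def update(self, z):
        if self.x is None:
            # First measurement: nothing to smooth against yet.
            self.x = float(z)
            return self.x
        # Predict: position is assumed unchanged, uncertainty grows.
        self.p += self.q
        # Correct: blend prediction and measurement by the Kalman gain.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


class CursorSmoother:
    """Smooths x and y independently."""

    def __init__(self, **kwargs):
        self.fx = ScalarKalman(**kwargs)
        self.fy = ScalarKalman(**kwargs)

    def update(self, x, y):
        return self.fx.update(x), self.fy.update(y)
```

The smoothed pair could then be passed to `pyautogui.moveTo(x, y)`. A larger `measurement_var` gives a steadier but laggier cursor, so the value is a trade-off between jitter and responsiveness.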

Challenges we ran into

• Ensuring reliable eye and blink detection under different lighting conditions

• Achieving smooth and responsive head tracking with minimal latency

• Designing a user interface simple enough for real-time accessibility

• Managing asynchronous data from both voice and vision pipelines

• Integrating cloud-based logging in real time while maintaining performance
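
One way to keep logging from slowing the tracking loop is to hand events to a background thread that writes them in batches. The sketch below shows that pattern under stated assumptions: `BackgroundLogger`, `batch_size`, and `flush_interval` are hypothetical names, and `sink` is any callable that accepts a list of events. With PyMongo, `collection.insert_many` could plausibly serve as the sink, though this is not confirmed as the project's approach.

```python
import queue
import threading


class BackgroundLogger:
    """Queues events from any thread and writes them in batches off-thread."""

    def __init__(self, sink, batch_size=50, flush_interval=0.5):
        self._sink = sink                      # callable taking a list of events
        self._batch_size = batch_size
        self._flush_interval = flush_interval  # seconds to wait before flushing a partial batch
        self._queue = queue.Queue()
        self._stop = object()                  # sentinel that ends the worker
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def log(self, event):
        """Called from the vision or voice pipeline; never blocks on I/O."""
        self._queue.put(event)

    def close(self):
        """Flush whatever is still queued and stop the worker."""
        self._queue.put(self._stop)
        self._thread.join()

    def _run(self):
        batch = []
        running = True
        while running:
            try:
                item = self._queue.get(timeout=self._flush_interval)
                idle = False
                if item is self._stop:
                    running = False
                else:
                    batch.append(item)
            except queue.Empty:
                idle = True
            # Flush when the batch is full, the queue went quiet, or we are stopping.
            if batch and (idle or not running or len(batch) >= self._batch_size):
                self._sink(batch)
                batch = []
```

Because both pipelines only call `log`, the same queue also gives a single ordered stream of voice and vision events.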

Accomplishments that we're proud of

• Successfully built a fully functional hands-free interface combining vision and voice

• Developed a real-time event logger to capture calibration, click, and movement data

• Created a modular and extensible system that adapts to different users’ needs

• Delivered a clean, fully integrated UI using PyQt6

• Enabled true accessibility for users who cannot use traditional input devices

What we learned

• How to integrate multiple input systems (gaze, gestures, and voice) into a single cohesive interface

• How to apply accessibility design principles in real-world software

• The importance of calibration and feedback loops in computer vision systems

• How to build agentic systems that adapt and respond intelligently to user intent

• Deepened our experience with Python, MediaPipe, OpenCV, Vosk, MongoDB, and PyQt6

What's next for AIVue: Control with Vision

• Expand click functionality to support dwell clicking and double-blink actions

• Add keyboard emulation for full text entry using only speech and gaze

• Train adaptive models that learn individual user patterns for better control

• Collaborate with accessibility communities to test, validate, and iterate on real-world use

• Open source AIVue so others can build on it and contribute
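
As a rough idea of how the planned dwell clicking might work, the sketch below fires a click once the cursor has stayed within a small radius for a set time. Nothing here reflects an existing AIVue implementation: `DwellClicker`, the 30-pixel radius, and the one-second dwell time are assumptions for illustration.

```python
import math


class DwellClicker:
    """Signals a click when the cursor rests in one spot long enough."""

    def __init__(self, radius=30.0, dwell_seconds=1.0):
        self.radius = radius                # pixels the cursor may wander (assumed value)
        self.dwell_seconds = dwell_seconds  # how long it must rest (assumed value)
        self._anchor = None
        self._start = 0.0
        self._fired = False

    def update(self, x, y, now):
        """Feed the cursor position and a timestamp in seconds.

        Returns True exactly once per dwell; the cursor must leave the
        radius before another click can fire.
        """
        if self._anchor is None or math.dist(self._anchor, (x, y)) > self.radius:
            # Cursor moved away: restart the dwell timer at the new spot.
            self._anchor = (x, y)
            self._start = now
            self._fired = False
            return False
        if not self._fired and now - self._start >= self.dwell_seconds:
            self._fired = True
            return True
        return False
```

In a live loop, `now` could come from `time.monotonic()`, and a `True` result could trigger `pyautogui.click()`.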
