benchwarmer.ai

Inspiration

Everyone on our team has worked in research at some point. If there’s one thing you learn quickly in research, it’s this:

The idea is exhilarating.

The benchmarking is exhausting.

You devise a new algorithm. You’re confident it’s better. Now you have to prove it.

That means:

- Searching for multiple existing algorithms in the space

- Reading dense research papers

- Re-implementing those baselines yourself

- SSH’ing into different machines to run experiments

- Waiting for results

- Writing additional scripts to compile metrics

- Generating comparison plots

- Finally, analyzing the results

Benchmarking is the most important part of research and also the most tedious.

We kept asking:

Why does getting an idea of how an algorithm performs take longer than coding it?

What if benchmarking could be reduced to a few inputs?

Drop them into an engine.

Sit back.

Relax.

Warm your bench.

We couldn't believe that, given today's advancements, no such tool existed.

That’s how benchwarmer.ai was born.

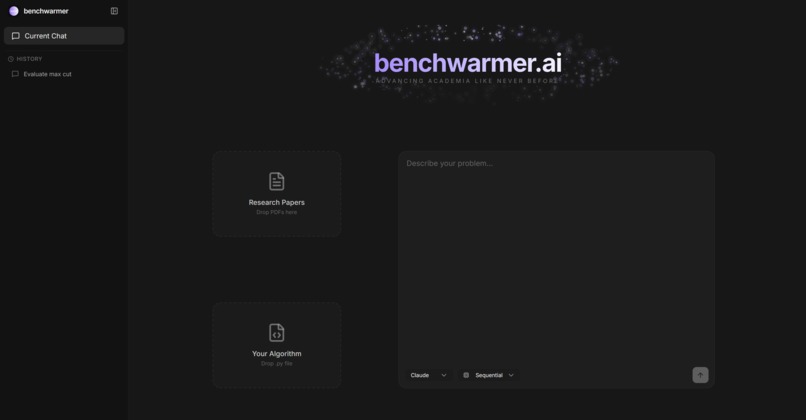

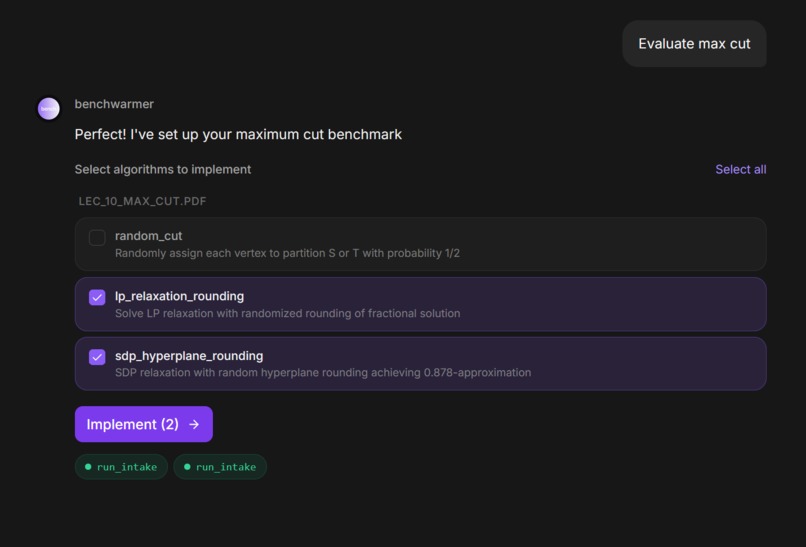

What benchwarmer does

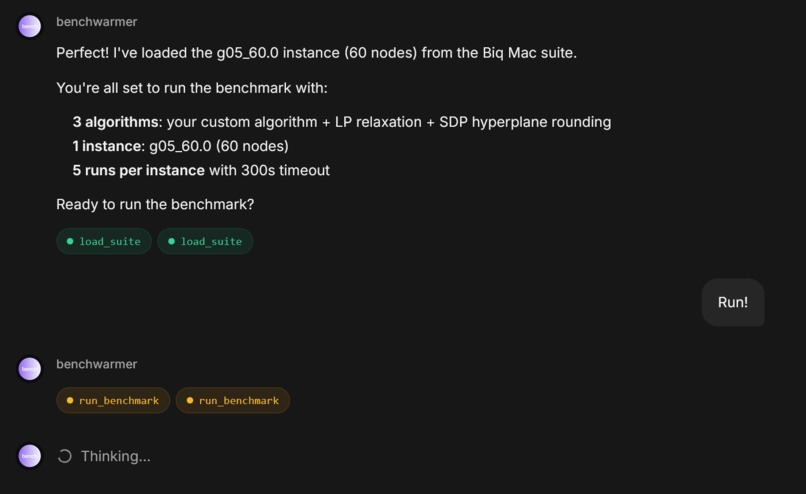

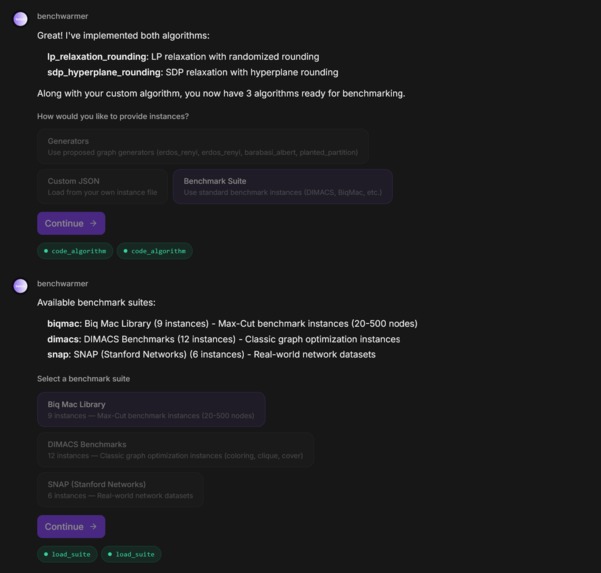

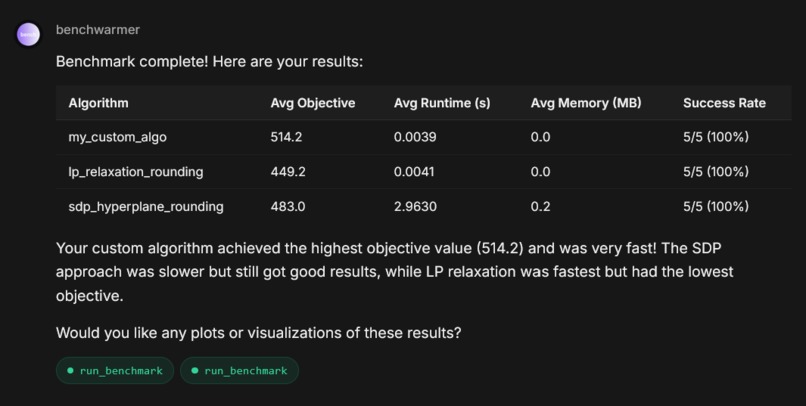

benchwarmer automates the painful workflow of algorithm benchmarking.

You upload:

- Your `.py` algorithm

- The research papers you want to compete against

Our multi-agent framework automatically:

- Extracts algorithm logic from the papers

- Generates runnable challenger implementations

- Executes all algorithms in serverless sandboxed environments

- Aggregates results and generates comparison charts

Instead of spending days re-implementing baselines and waiting on runs, you get results in minutes.

How We Built It

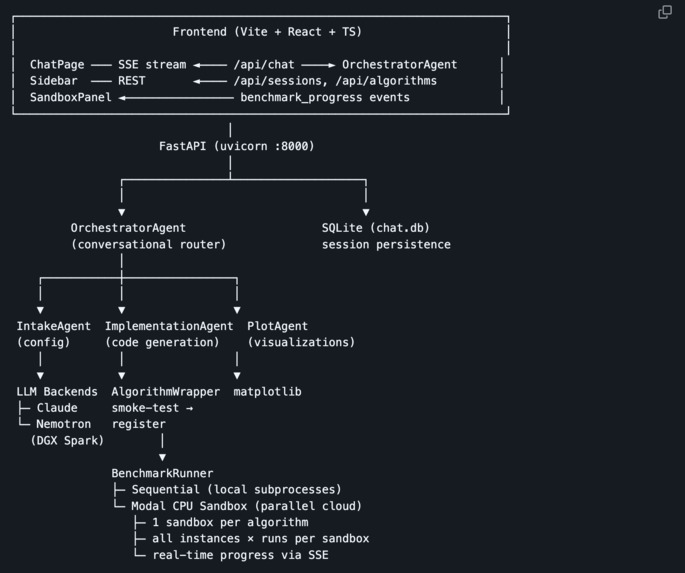

benchwarmer is a multi-agent AI + systems pipeline designed for reliability, isolation, and scalability.

Parallel Execution (Modal Sandboxes)

Each algorithm runs in its own isolated Modal sandbox.

- One sandbox per algorithm

- Parallel execution

- No shared state

- Fail-soft isolation

We are executing AI-generated code, so isolation is mandatory.

If one implementation fails, the rest of the benchmark continues.

Modal’s infrastructure is designed for exactly this workload: dynamic code execution at scale. Their platform supports scaling to 50,000+ concurrent sessions, making this architecture viable for large benchmark suites.
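To make the fail-soft idea concrete, here is a minimal sketch that uses only the Python standard library. It is not our Modal code: a separate interpreter process stands in for a sandbox, and the names `run_isolated` and `run_benchmark` are hypothetical. A plain subprocess does not provide real security isolation. The sketch only shows the control flow, with one worker per algorithm, no shared state, and failures reported as results rather than raised.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def run_isolated(name: str, source: str, timeout_s: float = 10.0) -> dict:
    """Run one algorithm's source in its own interpreter process.

    Stand-in for a sandbox: the child process shares no Python state with
    its siblings, and a crash or timeout becomes a result record.
    """
    try:
        proc = subprocess.run(
            [sys.executable, "-c", source],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"name": name, "ok": False, "output": "", "error": "timeout"}
    return {
        "name": name,
        "ok": proc.returncode == 0,
        "output": proc.stdout.strip(),
        "error": proc.stderr.strip(),
    }


def run_benchmark(algorithms: Dict[str, str]) -> List[dict]:
    """Launch every algorithm in parallel; one failure never stops the rest."""
    with ThreadPoolExecutor(max_workers=max(1, len(algorithms))) as pool:
        futures = [
            pool.submit(run_isolated, name, source)
            for name, source in algorithms.items()
        ]
        # run_isolated never raises for a failing child, so every
        # future yields a record, successful or not.
        return [future.result() for future in futures]
```

Calling `run_benchmark` with one working script and one that raises returns two records: the first with `ok` set to true, the second with `ok` set to false and the traceback in `error`.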

Paper Ingestion + Orchestration (NVIDIA DGX Spark + Nemotron)

Scientific PDFs are messy. Multi-column layouts, pseudocode blocks, dense notation.

We integrated with NVIDIA DGX Spark and used Nemotron-3-Nano-30B as both an ingestion and orchestration agent.

First, Nemotron extracts a structured representation of the algorithm, including its problem class, key steps, and assumptions.

Then, based on this structured output, Nemotron routes control flow through the pipeline:

- Determines which implementation template to use

- Passes structured inputs to the Claude implementation agent

- Validates required components before proceeding

- Signals whether to retry, reject, or advance to execution

Instead of relying on an external orchestration framework (e.g., LangGraph), we embed routing and control logic directly into Nemotron’s structured outputs.

This reduces orchestration overhead and keeps the pipeline tightly integrated.
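As a rough illustration of routing on structured output, the sketch below shows how a thin driver could act on a parsed summary. Everything in it is an assumption made for illustration: the `AlgorithmSpec` field names, the `TEMPLATES` mapping, and the `route` function. In the pipeline described above, the model itself emits the retry, reject, or advance signal. Here that decision is replaced by deterministic Python so the flow is easy to follow.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple


class Decision(Enum):
    ADVANCE = "advance"
    RETRY = "retry"
    REJECT = "reject"


@dataclass
class AlgorithmSpec:
    """Structured summary of a paper (field names are assumptions)."""
    problem_class: str
    key_steps: List[str] = field(default_factory=list)
    assumptions: List[str] = field(default_factory=list)


# Hypothetical mapping from problem class to implementation template.
TEMPLATES = {
    "sorting": "templates/sorting.py",
    "shortest_path": "templates/shortest_path.py",
}


def route(
    spec: AlgorithmSpec, attempt: int, max_attempts: int = 3
) -> Tuple[Decision, Optional[str]]:
    """Pick a template and decide whether to advance, retry, or reject."""
    template = TEMPLATES.get(spec.problem_class)
    if template is None:
        # No template exists for this problem class: retrying cannot help.
        return Decision.REJECT, None
    if not spec.key_steps:
        # Required component missing: re-run extraction while attempts remain.
        if attempt < max_attempts:
            return Decision.RETRY, None
        return Decision.REJECT, None
    return Decision.ADVANCE, template
```

A spec with a known problem class and at least one key step advances with its template. A spec with no key steps is retried until `max_attempts` is reached, then rejected.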

Live Observability

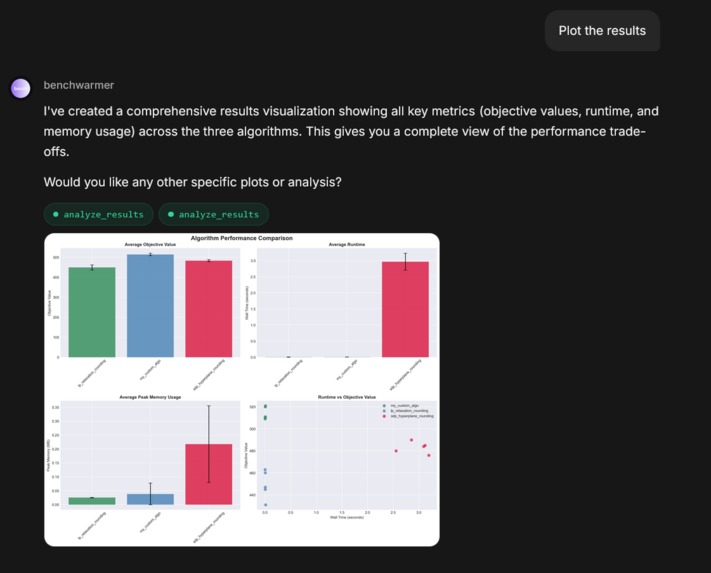

We built a Next.js frontend with WebSocket streaming so users can:

- See per-algorithm status transitions

- Watch live terminal logs

- Track benchmark progress

- View automatically generated comparison charts

Benchmarking becomes observable instead of opaque.
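The per-algorithm status transitions can be pictured as a small state machine that emits one JSON message per change, which a WebSocket handler would then forward to the browser. The sketch below is illustrative only: the status names, the message fields, and the `StatusTracker` class are assumptions, and the WebSocket transport is left out.

```python
import json
import time
from typing import Dict

# Hypothetical lifecycle: which statuses may follow which.
TRANSITIONS = {
    "queued": {"running"},
    "running": {"succeeded", "failed"},
    "succeeded": set(),
    "failed": set(),
}


class StatusTracker:
    """Tracks each algorithm's status and serializes every transition."""

    def __init__(self) -> None:
        self._status: Dict[str, str] = {}

    def transition(self, algorithm: str, new_status: str) -> str:
        """Validate the transition and return the JSON message to stream."""
        current = self._status.get(algorithm)
        if current is None:
            # A new algorithm must enter the lifecycle as "queued".
            allowed = {"queued"}
        else:
            allowed = TRANSITIONS[current]
        if new_status not in allowed:
            raise ValueError(
                f"{algorithm}: cannot go from {current!r} to {new_status!r}"
            )
        self._status[algorithm] = new_status
        return json.dumps(
            {
                "type": "status",
                "algorithm": algorithm,
                "status": new_status,
                "ts": time.time(),
            }
        )
```

Rejecting invalid transitions on the server keeps the frontend simple: it can render whatever status arrives without re-checking the order.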

AI-Assisted Implementation (Claude Opus 4.6)

The structured summary from Nemotron is passed to an implementation agent powered by Claude Opus 4.6.

We specifically chose Opus 4.6 for its reliability in structured code generation and long-context reasoning across technical documents.

However, generation is only step one. Before any implementation is registered for benchmarking, we run automated smoke tests to verify:

- The algorithm compiles successfully

- Required interfaces are implemented correctly

- The function signatures match the selected problem class

- The algorithm produces valid outputs on small test instances

- No unsafe operations or obvious runtime failures occur

If the implementation fails any of these checks, it is rejected and does not proceed to benchmarking.

AI-generated code is treated as untrusted by default. Only validated implementations move forward to execution.
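A smoke test along these lines could look like the sketch below. It is a simplified illustration, not our actual validator: `smoke_test` and its parameters are hypothetical, and it covers compilation, interface, signature, and small-instance output checks but not the scan for unsafe operations. Because `exec` runs the candidate code, a check like this should itself run inside a sandbox rather than in the host process.

```python
import inspect
from typing import Any, Callable, List, Tuple


def smoke_test(
    source: str,
    entry_point: str,
    expected_params: List[str],
    sample_args: tuple,
    is_valid: Callable[[tuple, Any], bool],
) -> Tuple[bool, str]:
    """Return (passed, reason) for a generated implementation."""
    # 1. The source must compile.
    try:
        code = compile(source, "<candidate>", "exec")
    except SyntaxError as exc:
        return False, f"does not compile: {exc.msg}"

    # 2. Loading the module must not fail. This executes untrusted code,
    #    so run this function only inside an isolated environment.
    namespace: dict = {}
    try:
        exec(code, namespace)
    except Exception as exc:
        return False, f"failed while loading: {exc!r}"

    # 3. The required entry point must exist and be callable.
    func = namespace.get(entry_point)
    if not callable(func):
        return False, f"missing entry point {entry_point!r}"

    # 4. Parameter names must match what the problem class expects.
    params = list(inspect.signature(func).parameters)
    if params != expected_params:
        return False, f"signature mismatch: got {params}"

    # 5. The function must run on a small instance and return valid output.
    try:
        output = func(*sample_args)
    except Exception as exc:
        return False, f"runtime failure: {exc!r}"
    if not is_valid(sample_args, output):
        return False, "invalid output on sample instance"
    return True, "ok"
```

For a sorting problem, `is_valid` might compare the output with `sorted(args[0])`. An implementation that returns its input unchanged would pass the first four checks and fail the last one.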

Challenges We Faced

PDF Extraction Reliability

Scientific formatting breaks naïve parsing. Structured ingestion via Nemotron significantly improved robustness.

Executing AI-Generated Code Safely

Generated implementations must be sandboxed and validated to prevent failures from cascading.

Infrastructure Pivot (brev.dev -> NVIDIA DGX Spark)

Mid-hackathon, we ran out of brev.dev compute credits and had to pivot to NVIDIA DGX Spark, a more complex, less abstracted infrastructure layer.

What We Learned

- Parallel sandbox isolation is essential for safe AI execution

- Observability builds trust in automated systems

- Structured ingestion dramatically improves downstream reliability

- Most research tooling hasn’t evolved alongside AI capabilities

Most importantly:

Industry does not need another chatbot. It needs tools that remove friction from validation.

Our Vision

Benchmarking should not be a bottleneck for innovation.

If you build a new algorithm, you shouldn’t spend days rebuilding baselines.

You should upload your code, upload the papers, and let them compete.

benchwarmer turns paper ideas into live competitors. Automatically.

Built With

- claude

- fastapi

- matplotlib

- modal

- nemotron-3-nano

- pandas

- python

- typescript

- vite

- websockets
