Inspiration

We wanted to use biosignals and AI to reimagine music creation and expression.

What BioBeats does

BioBeats is a system of wearable sensors that channel biological signals for real-time music control and generation. The audio output is then processed in an AI studio to produce a finished audio track with lyrics.

How we built it

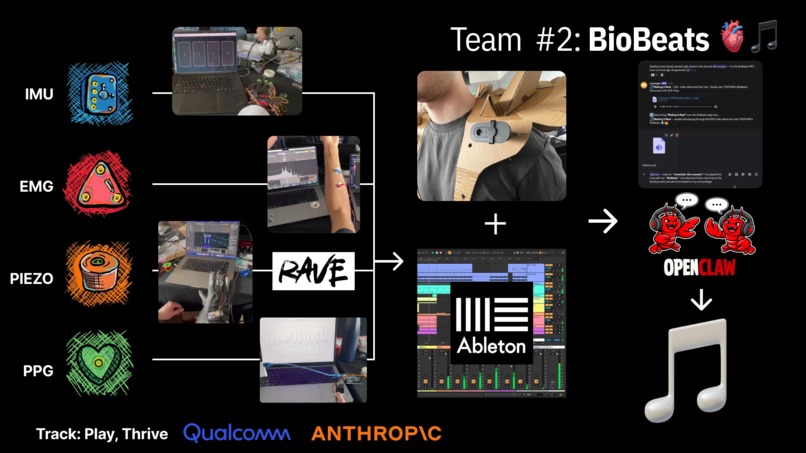

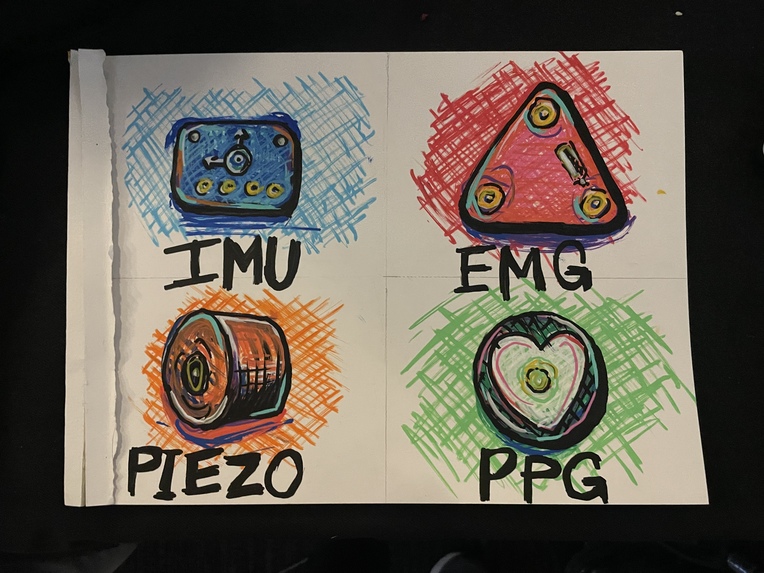

• IMU, PPG, EMG and Piezo: Sensors underlying our biosignal-based musical interface

• MaxMSP + AI models: For real-time parameter mapping and timbre transfer (RAVE: Realtime Audio Variational autoEncoder)

• AI Music Studio: Two OpenClaw agents powered by Claude Sonnet generate lyrics and audio (ACE-Step v1.5) from the biosignal audio output and environmental context (a photo from a mounted camera), then play the result through a wearable speaker
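To give a feel for the real-time parameter mapping mentioned above, here is a minimal Python sketch. In BioBeats the mapping runs in MaxMSP, so this is not our actual patch: the function names, the input and output ranges, and the smoothing factor are all assumptions chosen for illustration. It shows the general idea of clamping a sensor reading, rescaling it to a musical range, and smoothing it so that noisy biosignals do not cause jittery sound.

```python
from dataclasses import dataclass
from typing import Optional


def scale(value: float, in_lo: float, in_hi: float,
          out_lo: float, out_hi: float) -> float:
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping at the edges."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(1.0, max(0.0, t))  # clamp so out-of-range readings stay musical
    return out_lo + t * (out_hi - out_lo)


@dataclass
class Smoother:
    """Exponential moving average to tame noisy sensor readings."""
    alpha: float = 0.2              # hypothetical smoothing factor, 0 < alpha <= 1
    state: Optional[float] = None   # last smoothed value, None before the first sample

    def update(self, x: float) -> float:
        if self.state is None:
            self.state = x          # start from the first sample
        else:
            self.state += self.alpha * (x - self.state)
        return self.state


def heart_rate_to_tempo(bpm: float) -> float:
    """Hypothetical mapping: PPG heart rate (50-150 bpm) to track tempo (60-180 BPM)."""
    return scale(bpm, 50.0, 150.0, 60.0, 180.0)


def emg_to_cutoff(envelope: float) -> float:
    """Hypothetical mapping: normalized EMG envelope (0-1) to a filter cutoff in Hz."""
    return scale(envelope, 0.0, 1.0, 200.0, 8000.0)


if __name__ == "__main__":
    smoother = Smoother(alpha=0.5)
    for reading in (0.0, 1.0, 1.0):  # made-up EMG envelope samples
        print(emg_to_cutoff(smoother.update(reading)))
```

How the mapped values reach the synthesis engine (for example over a network or serial link) is left out here; the sketch only covers the scaling and smoothing step.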

Further details on our GitHub: https://github.com/MattJmt/BioBeats

Additional footage: https://www.instagram.com/reel/DWB-cfxM2K7/

What's next for BioBeats

This is just a prototype of a much bigger vision that we wanted to bring to the hack: a system of playful, modular biosignal components that can be worn in a plug-and-play fashion for musical experimentation and expression.

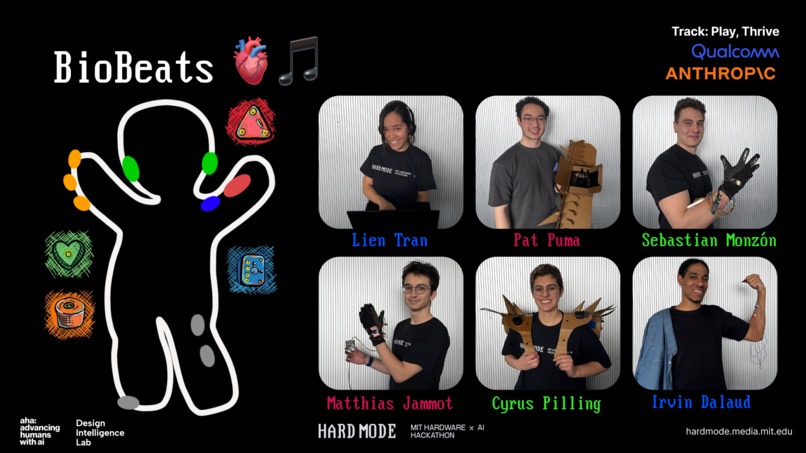

Team

Irvin Dalaud · Matthias Jammot · Cyrus Pilling · Patrick Puma · Sebastian Monzón · Lien Tran (LIÊNA)
