Inspiration

Every 65 seconds, someone in America develops Alzheimer's. Of the 6.9 million people living with the disease, millions are in the early-to-moderate stages, still independent enough to live at home, but starting to slip on critical daily tasks like taking the right medication. These patients don't need a full-time caretaker yet, but one wrong pill can mean an ER visit. Current solutions only catch mistakes after they happen. We wanted to build something that steps in before harm occurs, extending the window of safe independence for as long as possible.

What it does

Buddy is a real-time AI caregiver for people with mild-to-moderate Alzheimer's or other forms of dementia: patients who can still live independently but need a gentle safety net.

A camera (simulating future smart glasses) monitors the patient's interaction with their medication. The moment they reach for the wrong pill, Buddy:

- Detects the mismatch between what they grabbed and what they should take

- Intervenes with a calm, spoken instruction: "That's not the right one. Please take the red pill instead."

- Tracks whether the patient self-corrects

- Escalates if they don't, alerting the caregiver's dashboard and, for critical situations, triggering a phone call

It doesn't replace a caretaker. It delays the need for one, giving patients more years of dignity and independence while giving families peace of mind that someone is watching.

How we built it

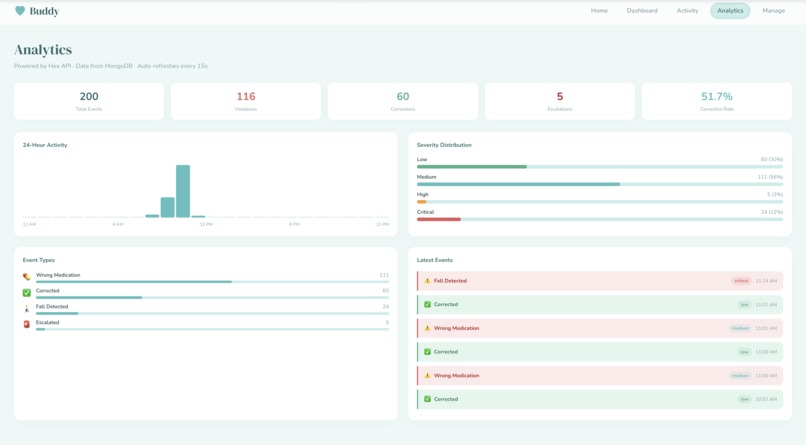

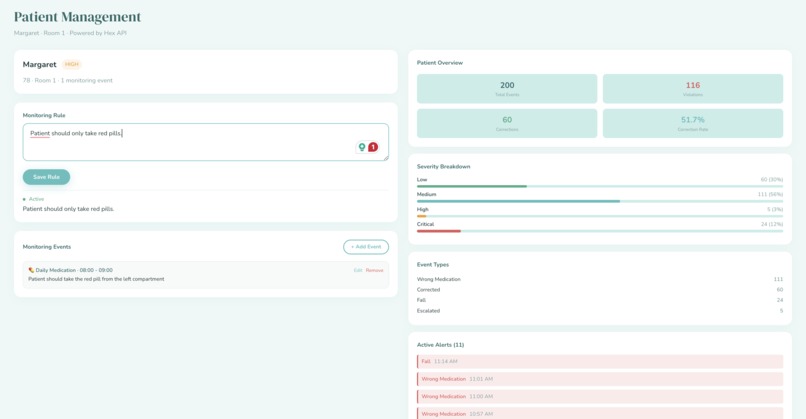

- Vision: Viam platform running three color detection models on a live camera feed. For the demo, we use colored M&Ms to simulate medications. Detections are ranked by confidence and written to a structured JSON output every second.

- Decision Engine: A Python state machine that compares observed vs. expected medication color. It handles alert cooldowns (5s anti-spam), correction detection, and auto-escalation after 15 seconds of no correction.

- Intervention: Gemini 2.5 Flash generates context-aware spoken instructions. ElevenLabs converts them to natural speech and plays audio instantly. For critical events like falls, pre-cached audio fires in under 300ms.

- Dashboard: A Next.js frontend deployed on Vercel. It polls the backend every 3 seconds, shows a live event timeline and notable snapshots, and lets caregivers set monitoring rules. Room 1 derives its status from real events; the rest demonstrate multi-patient scale.

- Backend: FastAPI with MongoDB Atlas for event storage, a WebSocket endpoint for real-time push, and async queues that keep the hot path (event to spoken word) under 800ms.
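The decision engine's behavior can be illustrated with a minimal sketch. The 5-second cooldown and 15-second escalation window come from the description above; the class name, the `step` method, the action strings, and the choice to keep the escalation timer running while nothing is in view are assumptions made for illustration, not the project's actual code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

ALERT_COOLDOWN_S = 5.0   # from the writeup: 5s anti-spam cooldown
ESCALATE_AFTER_S = 15.0  # from the writeup: escalate after 15s without correction


class State(Enum):
    OK = "ok"
    ALERTING = "alerting"
    ESCALATED = "escalated"


@dataclass
class DecisionEngine:
    """Hypothetical engine: compares observed vs. expected medication color."""
    expected: str
    state: State = State.OK
    wrong_since: Optional[float] = None
    last_alert: Optional[float] = None

    def step(self, observed: Optional[str], now: float) -> Optional[str]:
        """Return "alert", "corrected", "escalate", or None for no action."""
        if observed == self.expected:
            # Correct pill in view: reset, and report a correction if one was pending.
            was_wrong = self.state is not State.OK
            self.state, self.wrong_since, self.last_alert = State.OK, None, None
            return "corrected" if was_wrong else None

        if observed is not None and self.state is State.OK:
            # First sighting of a wrong pill starts the correction window.
            self.state, self.wrong_since = State.ALERTING, now

        if self.state is State.ALERTING:
            if now - self.wrong_since >= ESCALATE_AFTER_S:
                self.state = State.ESCALATED
                return "escalate"
            cooled_down = (
                self.last_alert is None
                or now - self.last_alert >= ALERT_COOLDOWN_S
            )
            if observed is not None and cooled_down:
                self.last_alert = now
                return "alert"
        return None
```

Feeding the engine one observation per second with a wrong color would produce an alert at most every 5 seconds, then a single escalation once 15 seconds pass without the expected color appearing.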

Challenges we ran into

- Data shape mismatch: The camera outputs detection objects, the logic engine expects structured dicts, and the frontend needs display-ready events. We built a transform layer to bridge all three without coupling any subsystem to another.

- Alert spam: Without throttling, one wrong pill triggered dozens of alerts per second. We solved this with a state machine that tracks cooldowns, correction windows, and escalation timers.

- Demo reliability over AI complexity: We deliberately chose color-based detection over complex object recognition. For a safety-critical system, a simple approach that works 100% of the time beats a sophisticated one that works 90% of the time.

- Designing for our audience: Patients with mild-to-moderate dementia can still understand and respond to instructions, but the window is narrow. Interventions had to be simple, calm, and immediate: not a notification they check later, but a voice that speaks to them right now.
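A transform layer of the kind described for the data shape mismatch might look like the following sketch. The `Detection` class is a hypothetical stand-in for the camera's detection objects, and the field names, the 0.5 confidence threshold, and the event shape are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass
class Detection:
    """Hypothetical stand-in for a camera detection object."""
    class_name: str
    confidence: float


def to_observation(
    detections: List[Detection], min_confidence: float = 0.5
) -> Optional[Dict[str, Any]]:
    """Reduce raw detections to the structured dict the logic engine consumes."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    if not candidates:
        return None
    best = max(candidates, key=lambda d: d.confidence)
    return {"color": best.class_name.lower(), "confidence": round(best.confidence, 2)}


def to_display_event(
    observation: Dict[str, Any], expected_color: str, timestamp: str
) -> Dict[str, str]:
    """Turn an engine-side observation into a display-ready dashboard event."""
    matches = observation["color"] == expected_color
    return {
        "timestamp": timestamp,
        "severity": "info" if matches else "warning",
        "title": "Correct medication" if matches else "Wrong medication",
        "detail": f"Saw {observation['color']}, expected {expected_color}",
    }
```

Because each function depends only on the shape of its input, the camera, the logic engine, and the dashboard never need to import one another's types.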

Accomplishments that we're proud of

- End-to-end in 24 hours: Camera sees wrong pill, voice says "stop," dashboard updates, caregiver gets notified. The full loop works.

- Sub-second intervention: From detection to spoken word in under 800ms. For cached emergency phrases, under 300ms.

- Clean architecture: Each subsystem runs independently. You can swap Viam for OpenCV, Gemini for GPT, or ElevenLabs for any TTS, and nothing breaks.

- Graceful degradation: If the backend goes down, the dashboard falls back to cached data. If Gemini is slow, the logic engine still tracks state. Nothing crashes.

- Built for real people: Every design decision was filtered through "would this actually help someone with mild Alzheimer's stay independent longer?"

What we learned

- Viam makes hardware prototyping shockingly fast: we had camera + detection models running in hours, not days.

- Event-driven architecture is the right pattern for real-time safety systems: async queues, fire-and-forget side effects, and a fast hot path.

- Simple beats clever for critical systems. Color detection isn't glamorous, but it doesn't hallucinate.

- Know your user. We scoped to mild-to-moderate dementia intentionally. Severe cases need human caretakers, and pretending otherwise would be irresponsible. Building for the right audience made every design choice clearer.
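The event-driven lesson (async queues, fire-and-forget side effects, a fast hot path) can be sketched with the standard library's `asyncio`. The function names, the event string, and the simulated 10 ms storage delay are assumptions for illustration; the real backend's queues and handlers are not shown in this writeup.

```python
import asyncio
from typing import List


async def speak(text: str, log: List[str]) -> None:
    """Hot path: stand-in for the text-to-speech call."""
    log.append(f"spoke: {text}")


async def side_effect_worker(queue: asyncio.Queue, log: List[str]) -> None:
    """Drain slow side effects (e.g. database writes) off the hot path."""
    while True:
        event = await queue.get()
        try:
            await asyncio.sleep(0.01)  # simulated slow I/O (assumed delay)
            log.append(f"stored: {event}")
        finally:
            queue.task_done()


async def handle_event(event: str, queue: asyncio.Queue, log: List[str]) -> None:
    queue.put_nowait(event)              # fire-and-forget: enqueue, do not await the write
    await speak(f"Alert: {event}", log)  # the spoken response does not wait on storage


async def main() -> List[str]:
    log: List[str] = []
    queue: asyncio.Queue = asyncio.Queue()
    worker = asyncio.create_task(side_effect_worker(queue, log))
    await handle_event("wrong_pill", queue, log)
    await queue.join()  # demo only: wait for the worker so the log can be inspected
    worker.cancel()
    return log
```

Running `asyncio.run(main())` returns a log in which the spoken alert is recorded before the storage write, which is the ordering that keeps slow side effects from delaying the intervention.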

What's next for Buddy

- Expanded hazard detection: Stove left on, wandering toward exits, prolonged inactivity, falls.

- Wearable form factor: Moving from a stationary camera to smart glasses for continuous, portable monitoring.

- Clinical validation: Partnering with memory care facilities to test with real patients in the mild-to-moderate stage.

- Caregiver scaling: One caregiver monitoring 10+ patients through a single dashboard, with intelligent prioritization.

- Progression tracking: As the system logs corrections over weeks and months, it can detect cognitive decline trends and alert families earlier.

Built With

- accelerometer

- arduino

- atlas

- css

- elevenlabs

- fastapi

- gemini

- gyroscope

- hex

- javascript

- microphone

- mongodb

- next.js

- node.js

- python

- react

- tailwind

- twilio

- typescript

- vercel

- viam

- webcam
