🚀 CacheFlow 💵

CacheFlow is an intelligent semantic caching layer that learns from your past MCP tool calls to make your future requests blazingly fast while cutting LLM costs and latency! ⚡️

- 🔍 Uses vector similarity search to match your query with previously executed tool call patterns 🧩

- 🤖 Employs LLM-powered refinement to adapt cached parameters for new contexts 🪄

- 🔗 Tracks MCP tool call chains and parameters to intelligently reassemble past workflows for similar tasks 🔮

💸 CacheFlow: Cache in, Cash out

What Does It Do?

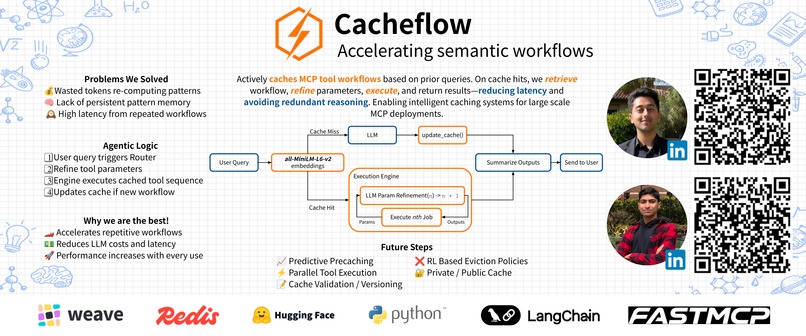

CacheFlow acts as a middleware MCP server that intercepts user requests, searches for semantically similar past queries, and reuses their tool call patterns when appropriate. Instead of reasoning through tool selection from scratch, it retrieves cached execution plans and adapts them to the current context, significantly reducing latency and token usage for repetitive workflows.

TL;DR: We create a "learned shortcuts" layer that captures common MCP workflows and makes them instantly reusable with context-aware parameter adaptation.

What Is It Useful For?

Accelerating repetitive workflows - When you frequently perform similar tasks with variations (e.g., "create a PR for the auth feature", "create a PR for the payments feature"), CacheFlow learns the tool call pattern once and reuses it with adapted parameters, bypassing expensive reasoning steps.

Reducing LLM costs and latency - Instead of running full tool-selection reasoning on every request, CacheFlow retrieves cached patterns via fast vector search and refines only the parameters. This cuts token usage and response time for common operations.
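To make "only parameters are refined" concrete, here is a minimal sketch of how a cached plan could be adapted to a new request. It is not CacheFlow's actual code: the helper names (`build_refinement_prompt`, `apply_refined_parameters`), the tool names, the prompt wording, and the hard-coded model reply are all assumptions for illustration.

```python
import json
from copy import deepcopy


def build_refinement_prompt(cached_query, cached_calls, new_query):
    """Build a prompt that asks the model to rewrite parameters only.

    Tool names and execution order come from the cache and stay fixed.
    The wording is illustrative, not CacheFlow's real prompt.
    """
    return (
        "A previous request was answered with the tool calls below.\n"
        f"Previous request: {cached_query}\n"
        f"Tool calls: {json.dumps(cached_calls)}\n"
        f"New request: {new_query}\n"
        "Return a JSON list with one parameter object per tool call, "
        "in the same order, adapted to the new request."
    )


def apply_refined_parameters(cached_calls, refined_params):
    """Merge refined parameters into a copy of the cached plan."""
    if len(refined_params) != len(cached_calls):
        raise ValueError("expected one parameter object per cached tool call")
    plan = deepcopy(cached_calls)  # never mutate the cached entry
    for call, params in zip(plan, refined_params):
        # Parameters the model did not mention keep their cached values.
        call["parameters"] = {**call["parameters"], **params}
    return plan


# Hypothetical cached plan for "create a PR for the auth feature".
cached_calls = [
    {"tool": "create_branch", "parameters": {"name": "feature/auth"}},
    {
        "tool": "create_pull_request",
        "parameters": {"title": "Add auth feature", "head": "feature/auth", "base": "main"},
    },
]

prompt = build_refinement_prompt(
    "create a PR for the auth feature",
    cached_calls,
    "create a PR for the payments feature",
)

# Stand-in for the model's reply; a real run would send `prompt` to an LLM.
refined = [
    {"name": "feature/payments"},
    {"title": "Add payments feature", "head": "feature/payments"},
]
plan = apply_refined_parameters(cached_calls, refined)
```

The model only has to produce a handful of parameter values rather than select tools, which is where the token savings would come from in a setup like this.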

Logic

- CacheDB: Redis-backed vector store using LangChain and HuggingFace embeddings (all-MiniLM-L6-v2). Stores user inputs as semantic vectors with associated tool call metadata (tool names, parameters, execution order).

- Engine: Execution engine that connects to GitHub Copilot's MCP API. Takes cached tool calls and uses an LLM to refine parameters based on the current user input.

- FastMCP Server: Main orchestration layer with two core tools:

  - route_user_input: Mandatory first-call tool that performs semantic search (similarity threshold 0.85). On cache hit, triggers Engine to execute refined tool calls.

  - update_cache: Post-execution tool that stores new query patterns with their tool call sequences.
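The interplay between the two tools can be sketched in plain Python. This is an in-memory stand-in under stated assumptions, not the real implementation: the `SemanticCache` and `CacheEntry` names are hypothetical, the store is a list rather than Redis, and `embed` is a toy bag-of-words function instead of all-MiniLM-L6-v2, so its similarity scores are not comparable to real embedding scores. Only the two tool names and the 0.85 threshold come from the description above.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field

SIMILARITY_THRESHOLD = 0.85  # threshold named in the description above


def embed(text):
    """Toy bag-of-words vector; a stand-in for a real sentence embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


@dataclass
class CacheEntry:
    user_input: str
    tool_calls: list  # ordered list of {"tool": ..., "parameters": ...}
    vector: Counter = field(init=False)

    def __post_init__(self):
        self.vector = embed(self.user_input)


class SemanticCache:
    """In-memory stand-in for the Redis-backed vector store."""

    def __init__(self, threshold=SIMILARITY_THRESHOLD):
        self.threshold = threshold
        self.entries = []

    def update_cache(self, user_input, tool_calls):
        """Store a query together with its ordered tool call sequence."""
        self.entries.append(CacheEntry(user_input, list(tool_calls)))

    def route_user_input(self, user_input):
        """Return the best cached plan if it clears the threshold."""
        query = embed(user_input)
        best, best_score = None, 0.0
        for entry in self.entries:
            score = cosine(query, entry.vector)
            if score > best_score:
                best, best_score = entry, score
        if best is not None and best_score >= self.threshold:
            return {"hit": True, "score": best_score, "tool_calls": best.tool_calls}
        return {"hit": False, "score": best_score, "tool_calls": []}


cache = SemanticCache()
cache.update_cache(
    "create a PR for the auth feature",
    [{"tool": "create_pull_request", "parameters": {"title": "Add auth feature"}}],
)
similar = cache.route_user_input("create a PR for the payments feature")
unrelated = cache.route_user_input("list open issues")
```

With this toy embedding, the payments request shares six of seven words with the cached auth request and clears the threshold, while the unrelated request shares none and falls through to normal tool selection. On a hit, the returned tool calls would be handed to the Engine for parameter refinement; on a miss, the client would run the tools itself and then call update_cache.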

List of Tools

- W&B Weave

- FastMCP

- LangChain

- HuggingFace

- Redis
