Inspiration

Many recreational sports are played without referees, leading to constant disputes over close calls. Whether it’s a line call in pickleball, a boundary in volleyball, or a shot in badminton, disagreements interrupt the game. We wanted to build an accessible, AI-powered solution that settles arguments instantly using just a phone camera — no extra hardware, no human ref needed.

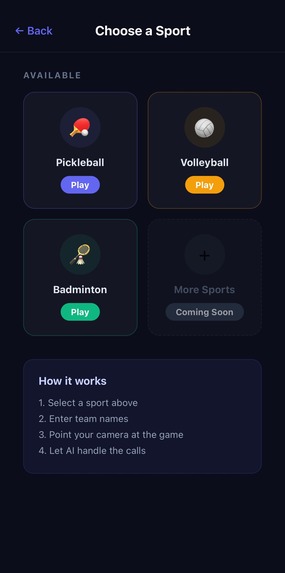

What it does

FairPlay is a mobile AI referee for sports. Point your phone at the court and the app uses real-time computer vision to:

- Track the ball

- Detect bounces or contacts

- Make IN/OUT line calls

- Automatically award points

- Announce decisions out loud via text-to-speech

No manual input needed during play.

How we built it

We built the mobile app with Expo and React Native, streaming camera frames over WebSocket to a Python FastAPI backend.

At the core of FairPlay is a real-time computer vision pipeline combining YOLO-based object detection with OpenCV processing:

- YOLO is used to detect and track the ball across frames with high speed and accuracy

- OpenCV handles image preprocessing (CLAHE), court line detection (Hough transforms), and geometric mapping

- Ball trajectories are analyzed using velocity and positional changes to detect bounce/contact events

- Detected bounce points are mapped onto court regions using point-in-polygon classification to determine IN/OUT calls
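The last two steps can be illustrated with a minimal, standard-library-only sketch. It is not FairPlay's actual code: the function names, the `min_speed` threshold, and the rectangular court are hypothetical. It assumes image coordinates where y grows downward, so a bounce shows up as the vertical velocity flipping from downward to upward, and it uses a standard ray-casting test for point-in-polygon classification.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def find_bounce_indices(track: List[Point], min_speed: float = 1.0) -> List[int]:
    """Return indices where vertical velocity flips from downward to upward.

    Assumes image coordinates (y grows downward), so a falling ball has vy > 0.
    min_speed (pixels per frame) is a hypothetical threshold to ignore jitter.
    """
    bounces = []
    for i in range(1, len(track) - 1):
        vy_before = track[i][1] - track[i - 1][1]
        vy_after = track[i + 1][1] - track[i][1]
        if vy_before > min_speed and vy_after < -min_speed:
            bounces.append(i)
    return bounces


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many polygon edges a ray to the right crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def call_bounce(p: Point, court: List[Point]) -> str:
    """Classify a bounce point as IN or OUT relative to a court polygon."""
    return "IN" if point_in_polygon(p, court) else "OUT"


# Example: a ball falls, bounces at index 2, then rises again.
track = [(0.0, 0.0), (1.0, 5.0), (2.0, 10.0), (3.0, 6.0), (4.0, 2.0)]
court = [(0.0, 0.0), (10.0, 0.0), (10.0, 20.0), (0.0, 20.0)]
bounce_indices = find_bounce_indices(track)
calls = [call_bounce(track[i], court) for i in bounce_indices]
```

Ray casting does not treat points exactly on an edge consistently, so a real line call would likely need an explicit tolerance for balls that touch the line.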

This hybrid approach combines deep learning (YOLO) with classical computer vision (OpenCV) to achieve both accuracy and low latency in real time.

Scoring logic runs server-side and pushes decisions back to the app instantly.
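As a rough illustration of server-side scoring, the sketch below turns an IN/OUT call into a score update and a JSON message that could be pushed back to the app. Everything here is an assumption for illustration: the `Scoreboard` class, the team labels `"A"` and `"B"`, the message fields, and the simplified rule that a ball landing IN awards the point to the team that hit it, while OUT awards it to the opponent. Real rules differ by sport.

```python
import json
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Scoreboard:
    """Hypothetical two-team scoreboard using simplified rally scoring."""

    scores: Dict[str, int] = field(default_factory=lambda: {"A": 0, "B": 0})

    def apply_call(self, call: str, last_hitter: str) -> str:
        """Award a point for an IN/OUT call and return a JSON decision message.

        Assumption: the ball landed on the opponent's side, so IN means the
        hitter wins the rally and OUT means the opponent does.
        """
        opponent = "B" if last_hitter == "A" else "A"
        winner = last_hitter if call == "IN" else opponent
        self.scores[winner] += 1
        return json.dumps(
            {"call": call, "point_to": winner, "scores": self.scores}
        )


# Example: team A hits a shot that lands IN, then hits one that lands OUT.
board = Scoreboard()
first = json.loads(board.apply_call("IN", last_hitter="A"))
second = json.loads(board.apply_call("OUT", last_hitter="A"))
```

Keeping the score on the server means every connected client receives the same decision message and nobody has to enter points manually.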

Challenges we ran into

False positives were the biggest challenge — bright objects, fast motion, and varying lighting conditions often interfered with detection.

We improved robustness by:

- Tuning YOLO confidence thresholds

- Adding brightness and saturation filtering with OpenCV

- Stabilizing detections across frames using temporal consistency

- Removing unreliable fallback court estimation
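One way to picture the temporal-consistency step is a small filter that only trusts a detection once the ball has been seen in enough recent frames and has not jumped implausibly far. This is a sketch under stated assumptions, not the project's implementation: the `TemporalFilter` class and its `window`, `min_hits`, and `max_jump` parameters are hypothetical.

```python
from collections import deque
from math import hypot
from typing import Deque, Optional, Tuple

Point = Tuple[float, float]


class TemporalFilter:
    """Accept a detection only if it is consistent with recent frames."""

    def __init__(self, window: int = 5, min_hits: int = 3, max_jump: float = 80.0):
        self.history: Deque[bool] = deque(maxlen=window)  # hit/miss per frame
        self.min_hits = min_hits  # hits required within the window
        self.max_jump = max_jump  # largest plausible move in pixels per frame
        self.last: Optional[Point] = None  # last accepted raw position

    def update(self, detection: Optional[Point]) -> Optional[Point]:
        """Feed one frame's detection (or None); return it if it looks reliable."""
        if detection is not None and self.last is not None:
            dx = detection[0] - self.last[0]
            dy = detection[1] - self.last[1]
            if hypot(dx, dy) > self.max_jump:
                detection = None  # implausible jump: treat as a miss
        self.history.append(detection is not None)
        if detection is not None:
            self.last = detection
        elif not any(self.history):
            self.last = None  # track lost for a full window: start over
        if detection is not None and sum(self.history) >= self.min_hits:
            return detection
        return None


# Example: three consistent frames, one outlier, then a consistent frame.
f = TemporalFilter(window=5, min_hits=3, max_jump=50.0)
frames = [(100.0, 100.0), (105.0, 102.0), (110.0, 104.0), (400.0, 400.0), (115.0, 106.0)]
outputs = [f.update(p) for p in frames]
```

The first two frames are held back until enough evidence accumulates, and the far-away outlier is dropped without breaking the track.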

Handling different sports with varying ball speeds and court layouts also required careful calibration.

Cross-platform camera capture (web vs iOS/Android) and WebSocket reliability over local networks required significant debugging.

Accomplishments that we're proud of

- A fully working real-time YOLO + OpenCV pipeline for sports officiating

- Accurate ball tracking and bounce detection across multiple sports

- End-to-end integration: camera → inference → decision → voice output

- Demonstrated with pickleball, badminton, and volleyball

- Achieved latency under 500 ms

What we learned

We learned that combining deep learning (YOLO) with classical computer vision (OpenCV) is extremely powerful for real-time systems.

YOLO excels at detection, while OpenCV enables precise spatial reasoning — and the combination makes reliable officiating possible.

The hardest problem is not detection itself, but keeping results consistent and reducing false positives in real-world environments.

What's next for FairPlay

- Further optimize and fine-tune the YOLO model for sports-specific accuracy

- Move inference on-device with CoreML / TFLite for offline use

- Expand support to additional sports with adaptable court detection

- Add video replay and decision visualization for close calls

- Improve robustness across lighting conditions and camera angles

Built With

- expo.io

- fastapi

- javascript

- numpy

- opencv

- python

- react-native

- uvicorn

- websocket
