About the Project

Inspiration

CancerCare was inspired by the urgent need for early cancer detection. Cancer remains one of the leading causes of death globally, and detecting it early can greatly improve survival rates. I wanted to develop a solution that uses AI and genomic insights to assist healthcare professionals in diagnosing cancer at early stages and offering personalized treatment options.

What I Learned

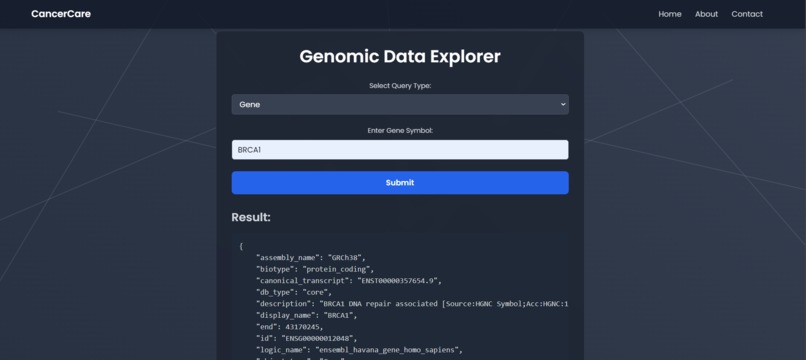

Throughout the development of CancerCare, I gained a deeper understanding of how to merge different types of data, including CT scan images and genomic sequences. I enhanced my knowledge of machine learning techniques, particularly in training convolutional neural networks (CNNs) for medical image classification. Additionally, working with genomic data provided me with insight into how genetic mutations influence treatment decisions, helping me explore the potential of precision medicine.

How I Built the Project

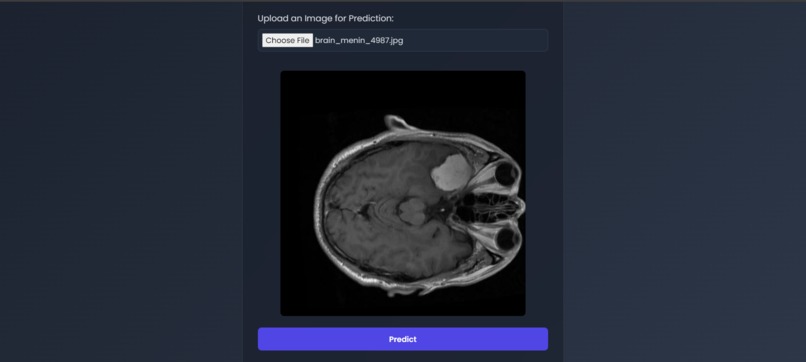

- Data Collection: I gathered over 100,000 CT scan images from publicly available datasets, categorized into 11+ cancer types, and integrated genomic sequencing data from cancer research databases.

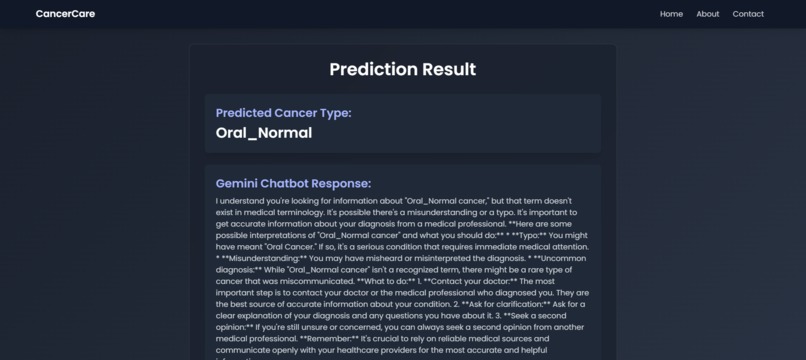

- Model Development: Using TensorFlow and Keras, I built and trained a CNN model to analyze CT scans and predict both cancer types and stages. I processed the genomic data with Python libraries such as Pandas and NumPy, then combined the results with the imaging model's output to offer genomic insights.

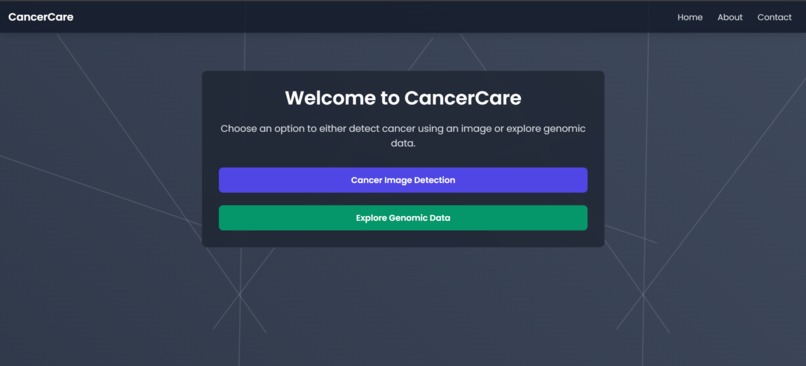

- Integration: I used Flask to build the web application that allows users to upload images and receive predictions. The app also provides genomic insights embedded in the results, offering personalized treatment options.

- User Interface: The front-end was designed using React to ensure an intuitive and user-friendly experience. I also integrated Contentstack to dynamically deliver cancer-related educational content and treatment information.
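To illustrate the step where a prediction and genomic insights are returned together, here is a minimal sketch in plain Python. It is not the project's actual code: the class labels, the `build_result` helper, the variant identifiers, and the annotation table are all hypothetical placeholders, and the probabilities stand in for the CNN's output.

```python
import json

# Hypothetical class labels; the real project covers 11+ cancer types.
CLASS_LABELS = ["type_a", "type_b", "type_c"]

# Placeholder annotation table, invented for illustration only.
INSIGHTS = {"VARIANT_1": "placeholder note for variant 1"}


def top_prediction(probabilities, labels=CLASS_LABELS):
    """Return the most likely label and its probability."""
    if len(probabilities) != len(labels):
        raise ValueError("one probability per label is required")
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    return labels[best], probabilities[best]


def build_result(probabilities, variants, insight_table):
    """Merge an image prediction with genomic annotations into one payload."""
    label, confidence = top_prediction(probabilities)
    # Keep only the variants that have an entry in the annotation table.
    insights = [insight_table[v] for v in variants if v in insight_table]
    return {
        "predicted_type": label,
        "confidence": round(confidence, 3),
        "genomic_insights": insights,
    }


# Stand-in model output and variant list for one uploaded scan.
result = build_result([0.1, 0.7, 0.2], ["VARIANT_1", "VARIANT_9"], INSIGHTS)
print(json.dumps(result, indent=2))
```

In a Flask app, a dictionary like `result` could be serialized as the JSON response for an upload request, so the front-end receives the prediction and the genomic notes in a single payload.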

Challenges I Faced

Data Integration:

Integrating CT scan images with genomic data was challenging due to differences in data structure. I overcame this by creating a normalization process that standardized the data formats and automated the integration.

Model Overfitting:

Initially, the model showed signs of overfitting. I addressed this by using regularization techniques such as dropout layers and data augmentation, which improved the model’s performance on unseen data.

Prediction Latency:

As the model became more complex, real-time prediction speeds were affected. I optimized the model architecture and applied model quantization, which significantly improved the inference time without compromising accuracy.
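To show the idea behind quantization, here is a minimal sketch of 8-bit affine quantization in plain Python. It is an illustration under my own assumptions, not the tooling used in the project: the `quantize` and `dequantize` helpers and the sample weights are hypothetical, and a real pipeline would normally rely on the framework's own quantization support.

```python
def quantize(weights, num_bits=8):
    """Map floats to signed integers; returns (ints, scale, zero_point)."""
    qmin = -(2 ** (num_bits - 1))
    qmax = 2 ** (num_bits - 1) - 1
    # The represented range must include 0.0 so that zero stays exact.
    lo = min(min(weights), 0.0)
    hi = max(max(weights), 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:
        scale = 1.0  # all weights are zero; any scale works
    zero_point = round(qmin - lo / scale)
    # Round each weight to the nearest step, shift it, and clamp to the range.
    ints = [max(qmin, min(qmax, round(w / scale) + zero_point)) for w in weights]
    return ints, scale, zero_point


def dequantize(ints, scale, zero_point):
    """Recover approximate float values from quantized integers."""
    return [(v - zero_point) * scale for v in ints]


# Hypothetical weights standing in for one layer of the model.
weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
q, scale, zp = quantize(weights)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, round(scale, 5), zp, round(max_error, 5))
```

Each 8-bit integer takes one byte instead of the four bytes of a 32-bit float, which is where the memory and speed savings come from. The price is a small rounding error per weight, on the order of one quantization step.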
