Cat-AR-pillar – Project Story

Inspiration

Heavy equipment inspections are critical for safety, compliance, and operational efficiency. However, daily inspections of machines such as excavators and tractors are often manual, repetitive, and prone to human error. Inspectors must reference checklists, document findings, and visually confirm components, all while navigating large, complex machinery.

Our inspiration came from observing how much time inspectors spend switching between physical checklists, tablets, and the machine itself. We asked:

What if inspection guidance and documentation could exist directly in the inspector’s field of view, without occupying their hands?

With the rise of wearable mixed reality devices like the Meta Quest 3 and the newly introduced Ray-Ban Meta Smart Glasses, we saw an opportunity to merge computer vision and AR into a hands-free inspection assistant.

Thus, Cat-AR-pillar was born: a Mixed Reality application designed to reduce inspection time and minimize mistakes when evaluating Caterpillar-style heavy machinery.

What it does

Cat-AR-pillar is a mixed reality system that:

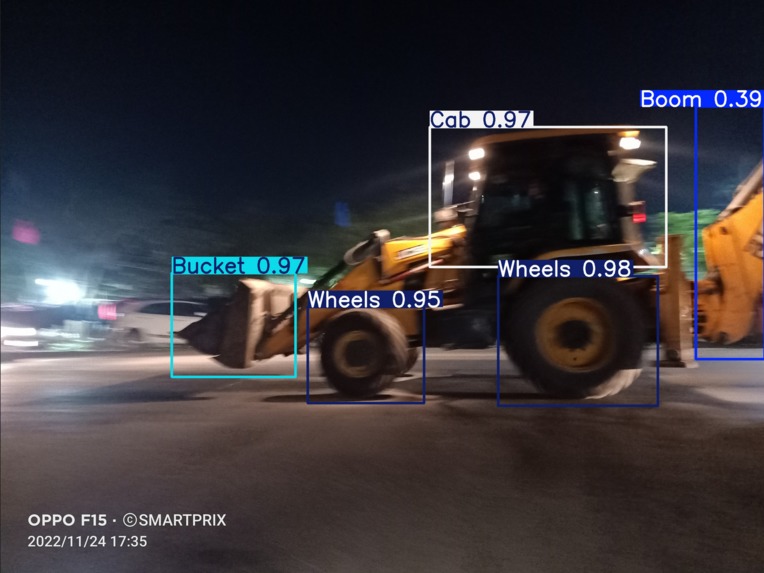

- Detects and segments major components of excavators and tractors for automatic inspection documentation.

- Classifies machine parts in real time.

- Overlays bounding boxes and labels in AR.

- Provides a hands-free inspection interface.

- Records inspection reports using speech-to-text and video snippets.

- Matches recordings to labeled parts, making it easy to filter the data later.

The Breakthrough: Automatic Part Recognition & Smart Logging

Traditional digital inspection tools still rely on manual input. Inspectors must select the machine part from a checklist before logging notes — adding time and increasing the chance of misclassification.

Cat-AR-pillar changes that.

Using real-time object detection, the system automatically identifies the component the inspector is looking at — whether it’s the cab, boom, stick, bucket, or wheels — and instantly categorizes notes under the correct section.

No dropdown menus. No manual tagging. No misplaced documentation.

Inspectors simply look at a part and speak or record. The system handles the rest.

This reduces friction, eliminates categorization errors, and significantly speeds up the inspection process — turning documentation into a seamless extension of vision rather than a separate task.

Manual selection still exists as a fallback, but the AR + object detection workflow is what unlocks true hands-free efficiency.
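The look-then-log idea can be sketched in a few lines of Python. This is a minimal illustration, not the app's actual code: the `Detection` type, the `part_in_focus` helper, the choice of the view center as the gaze point, and the 0.4 confidence threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Detection:
    """One detector output (hypothetical shape, for illustration only)."""
    label: str                               # e.g. "boom"
    confidence: float                        # detector score in [0, 1]
    box: Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max), normalized


def part_in_focus(
    detections: Sequence[Detection],
    gaze: Tuple[float, float] = (0.5, 0.5),  # assumed: center of the view
    min_confidence: float = 0.4,             # assumed threshold
) -> Optional[str]:
    """Return the label of the part under the gaze point, or None."""
    gx, gy = gaze
    hits = [
        d for d in detections
        if d.confidence >= min_confidence
        and d.box[0] <= gx <= d.box[2]
        and d.box[1] <= gy <= d.box[3]
    ]
    if not hits:
        return None  # caller falls back to manual part selection
    # Prefer the smallest box containing the gaze point, so a bucket
    # seen in front of a larger boom box is picked over the boom.
    smallest = min(hits, key=lambda d: (d.box[2] - d.box[0]) * (d.box[3] - d.box[1]))
    return smallest.label


detections = [
    Detection("boom", 0.9, (0.1, 0.1, 0.9, 0.9)),
    Detection("bucket", 0.8, (0.4, 0.4, 0.6, 0.6)),
]
focused = part_in_focus(detections)
```

When `part_in_focus` returns `None`, the interface would fall back to the manual selection described above.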

Object Detection and Segmentation

We trained a YOLOv12 Nano model on a custom, hand-labeled dataset of ~60 excavator images. Excavator datasets exist, but individual parts have been an overlooked CATegory. Despite the small dataset, the model can segment novel images of tractors and classify the parts as:

- Cab

- Boom

- Stick

- Bucket

- Wheels
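For readers unfamiliar with how hand-labeled segmentation data is usually stored for YOLO-family models, here is a small sketch of reading one label line in the common text format: a class index followed by normalized polygon coordinates. The class order in `CLASS_NAMES` and the `parse_label_line` helper are assumptions for illustration; the project's actual label files and class indices are not shown in this write-up.

```python
from typing import List, Tuple

# Assumed class order; the real index-to-name mapping may differ.
CLASS_NAMES = ["cab", "boom", "stick", "bucket", "wheels"]


def parse_label_line(line: str) -> Tuple[str, List[Tuple[float, float]]]:
    """Parse '<class> x1 y1 x2 y2 ...' into (class name, polygon points)."""
    fields = line.split()
    # One class index plus at least three (x, y) pairs gives an odd count >= 7.
    if len(fields) < 7 or len(fields) % 2 == 0:
        raise ValueError(f"malformed segmentation label: {line!r}")
    class_id = int(fields[0])
    coords = [float(value) for value in fields[1:]]
    points = list(zip(coords[0::2], coords[1::2]))
    return CLASS_NAMES[class_id], points


name, polygon = parse_label_line("3 0.25 0.5 0.75 0.5 0.75 0.875")
```

Here `name` is the part label and `polygon` is a list of `(x, y)` points in image-relative coordinates.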

The system runs entirely locally on-device, eliminating dependency on:

- Cloud APIs

- Jobsite Wi-Fi

- Cellular connectivity

This ensures reliability in remote or low-connectivity environments.

AR Interface

Using mixed reality:

- Machine components are highlighted in the inspector’s field of view.

- The inspector can track checklist progress visually.

- Looking at their non-dominant (left) hand triggers a floating interactive menu.

Users can:

- Log issues

- Use speech-to-text for comments

- Record short video snippets for documentation

The goal is to make the AR glasses function similarly to required safety glasses—minimally intrusive and fully hands-free.

How we built it

1. Model Development

Selected YOLOv12 Nano for:

- Small dataset compatibility

- Lightweight inference

- Edge deployment performance

Trained on 60 images with varied:

- Angles

- Lighting conditions

- Machine variants

Focused on generalization despite non-standardized excavator designs.

The nano model was ideal because:

- Limited training data

- Need for real-time inference

- Local-only processing requirement

- Reduced computational load for wearable devices

2. Mixed Reality Integration

- Built prototype on the Meta Quest 3.

Integrated:

- Real-time camera feed

- YOLO inference pipeline

- AR overlay rendering

Designed a gesture-based and gaze-based UI system.

Implemented hand-on-hand interaction tracking for menu activation.

3. Reporting System

- Built in-app inspection tracker.

Integrated:

- Speech-to-text transcription

- Short video recording

- Structured inspection logging

This allows future inspectors or maintenance teams to review precise contextual notes instead of vague written comments.
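A structured log of this kind could look roughly like the sketch below. The `InspectionNote` and `InspectionReport` types, their field names, and the sample machine ID and file path are hypothetical; they only illustrate how notes tagged with a part label can be filtered and serialized later.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class InspectionNote:
    part: str                         # label from the detector, e.g. "boom"
    transcript: str                   # speech-to-text output
    video_path: Optional[str] = None  # optional short clip
    flagged: bool = False             # True when the inspector logs an issue


@dataclass
class InspectionReport:
    machine_id: str
    notes: List[InspectionNote] = field(default_factory=list)

    def add(self, note: InspectionNote) -> None:
        self.notes.append(note)

    def for_part(self, part: str) -> List[InspectionNote]:
        """Return only the notes recorded against one part."""
        return [note for note in self.notes if note.part == part]

    def to_json(self) -> str:
        """Serialize the whole report, nested notes included."""
        return json.dumps(asdict(self), indent=2)


report = InspectionReport(machine_id="demo-excavator-01")
report.add(InspectionNote("boom", "Hydraulic line looks worn.", "clips/boom_01.mp4", True))
report.add(InspectionNote("cab", "Glass intact, mirrors clean."))
boom_notes = report.for_part("boom")
```

Keeping the part label on every note is what makes per-part filtering a one-line query.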

Challenges we ran into

1. Extremely Small Dataset

Training on only 60 images posed significant risk of overfitting. Machines were:

- In obscure poses

- Captured at inconsistent angles

- Non-standardized across models

We had to:

- Carefully tune augmentation

- Optimize anchor settings

- Validate performance on unseen tractor images
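One common way to check predictions on held-out images is intersection over union (IoU) between a predicted region and a hand-labeled one. The write-up does not state which metric was used, so the sketch below is an assumption, and it uses axis-aligned boxes as a simple stand-in for segmentation masks. The `box_iou` helper is a name chosen for this example.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    # Overlap width and height, clamped at zero for disjoint boxes.
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    intersection = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


predicted = (0.0, 0.0, 2.0, 2.0)
labeled = (1.0, 1.0, 3.0, 3.0)
score = box_iou(predicted, labeled)  # overlap area 1, union area 7
```

A prediction is typically counted as correct when its IoU with the labeled region exceeds a chosen threshold and the class matches.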

2. Model Generalization

Excavators vary widely in shape and configuration. Unlike standardized objects, components like booms and sticks can differ significantly between manufacturers.

Ensuring accurate segmentation across variations was difficult.

3. On-Device Constraints

Running everything locally meant:

- Strict memory constraints

- Real-time inference requirements

- No fallback to cloud compute

Balancing latency against accuracy was critical: we had to keep total latency low enough for seamless AR interaction while keeping the model accurate.
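A latency budget like this can be tracked with a small rolling monitor. The sketch below is illustrative only: the `LatencyMonitor` class, the `timed` helper, the 50 ms budget, and the 30-frame window are assumptions, not figures from the project.

```python
import time
from collections import deque
from typing import Any, Callable, Deque


class LatencyMonitor:
    """Rolling average of per-frame latency against a fixed budget."""

    def __init__(self, budget_ms: float = 50.0, window: int = 30) -> None:
        self.budget_ms = budget_ms                         # assumed budget
        self.samples: Deque[float] = deque(maxlen=window)  # oldest samples drop off

    def record(self, elapsed_ms: float) -> None:
        self.samples.append(elapsed_ms)

    def average_ms(self) -> float:
        return sum(self.samples) / len(self.samples) if self.samples else 0.0

    def over_budget(self) -> bool:
        return self.average_ms() > self.budget_ms


def timed(fn: Callable[..., Any], monitor: LatencyMonitor, *args: Any) -> Any:
    """Run fn(*args), record its wall-clock time in milliseconds, return its result."""
    start = time.perf_counter()
    result = fn(*args)
    monitor.record((time.perf_counter() - start) * 1000.0)
    return result


monitor = LatencyMonitor(budget_ms=50.0, window=3)
for elapsed in (40.0, 40.0, 40.0):
    monitor.record(elapsed)
within_budget = not monitor.over_budget()
```

If the rolling average drifts over budget, an app could respond by lowering input resolution or running inference on every other frame.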

4. UX Design in Mixed Reality

Designing an interface that:

- Doesn’t clutter vision

- Doesn’t obstruct safety

- Remains intuitive in industrial environments

was more difficult than expected.

Accomplishments that we're proud of

- Successfully trained a working detection model from only 60 images.

- Achieved real-time inference on a wearable device.

- Built a fully hands-free inspection system.

- Designed a practical, jobsite-ready AR interface.

- Created a scalable framework for future smart glasses deployment.

- Aligned the solution with emerging wearable AR hardware trends.

What we learned

- Lightweight models can be extremely powerful when tuned correctly.

- Edge AI is essential in industrial environments with unreliable connectivity.

- Mixed Reality UX requires simplicity above all else.

- Even small datasets can work with the right augmentation strategy.

- Hands-free interaction with object detection dramatically improves workflow efficiency.

We also learned that reducing cognitive load is just as important as reducing inspection time.

What's next for Cat-AR-pillar

- Expand dataset to improve robustness.

- Add more machine component classes.

- Implement damage detection (rust, cracks, leaks).

- Deploy on lightweight smart glasses like Ray-Ban Meta Smart Glasses.

- Integrate automated inspection report export.

- Introduce predictive maintenance analytics.

Our long-term vision is to transform industrial inspections into an intelligent, contextual, and fully hands-free experience—where safety glasses double as an AI-powered assistant.
