Inspiration

Walking out of our first NSBE National Convention in Baltimore, we had a problem every college student knows but nobody talks about. Over three days, we'd spoken to dozens of recruiters, engineers, and fellow students. We'd collected business cards, sent LinkedIn requests between sessions, and scribbled notes on conference programs. A Goldman recruiter mentioned summer applications opening in March. A Microsoft engineer said to email her directly. Someone from a startup described a role that sounded perfect.

By Monday morning, most of it was gone. Which Goldman recruiter was it? Was the Microsoft engineer named Sarah or Sandra? The business cards sat in a pile. The LinkedIn connections were names without context. The promises to follow up became vague memories. Your professional network is the single most important asset you build in college, and we were managing ours from memory. Students from well-connected families have parents who maintain these relationships for them. First-generation professionals like us don't have that safety net. Every lost connection is a lost opportunity. We built CircleBack because we needed it the morning after that career fair.

What it does

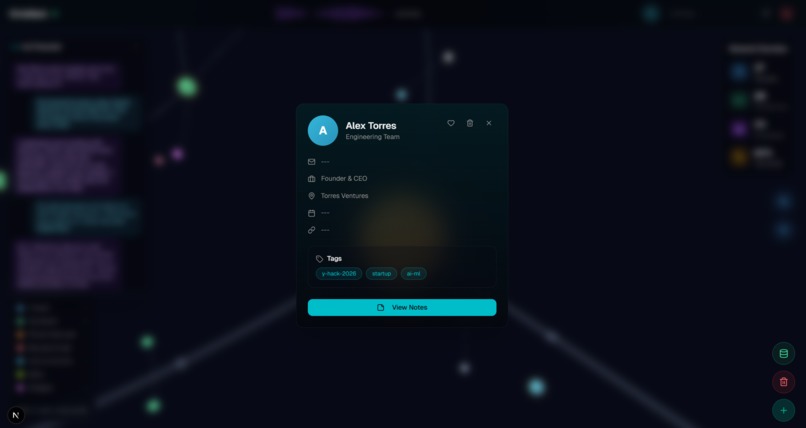

CircleBack is a voice-powered AI agent that turns the way you naturally talk about people into a structured, searchable knowledge graph. You just talk to it. "I met James Wright from Microsoft's Azure team at YHack. He's hiring for summer 2027 and he knows my friend David from NSBE." CircleBack extracts every detail, stores it in a knowledge graph, auto-tags James with the event you're at, and enriches his profile with real-time web data.

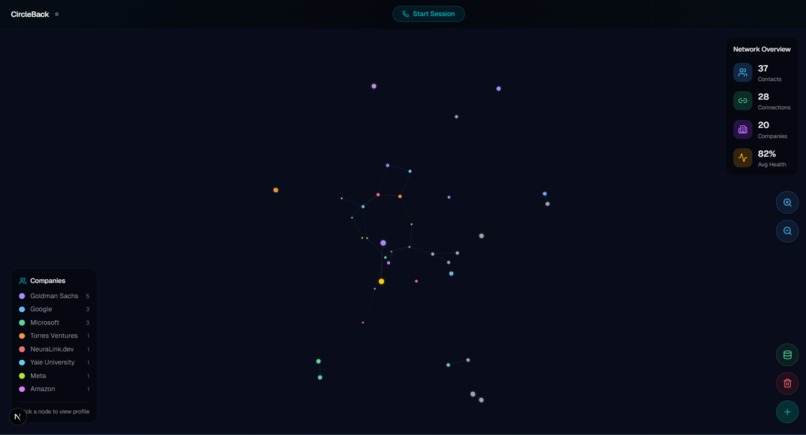

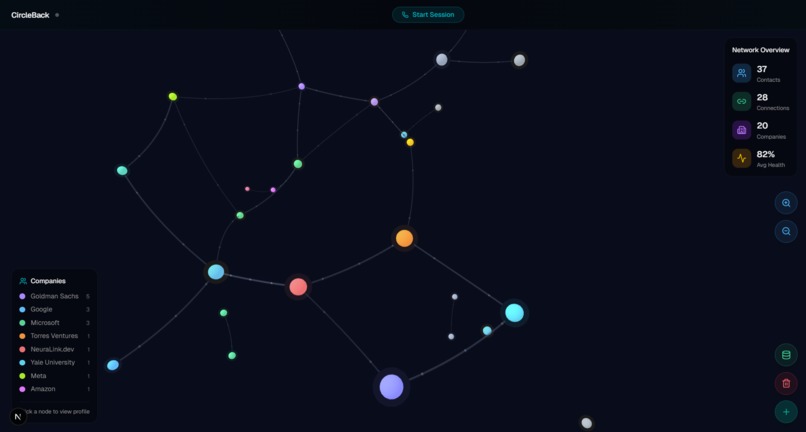

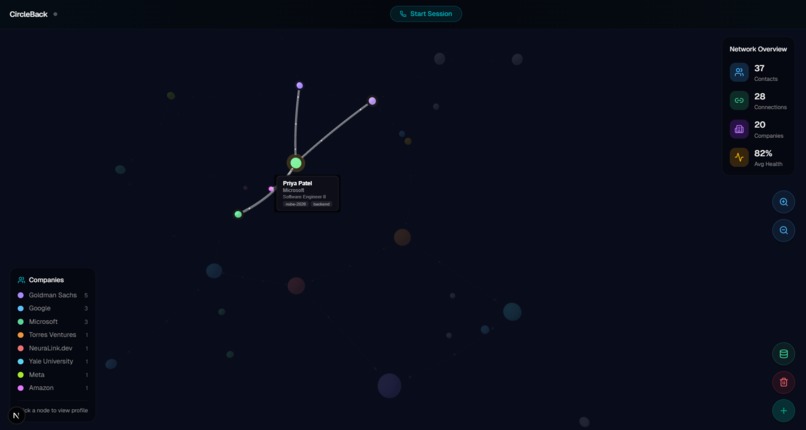

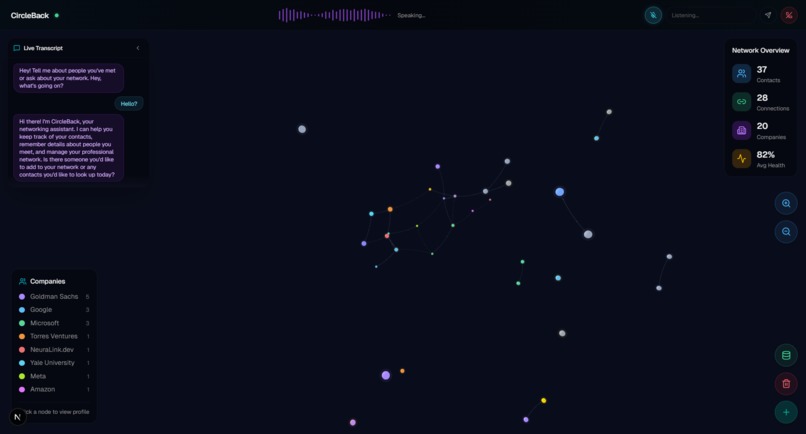

Later, you ask: "Who did I meet at NSBE last year?" or "Who in my network works in machine learning?" CircleBack searches your entire relationship history and gives you answers with full context: names, companies, how you met, what you talked about, and when to follow up. The frontend renders your entire network as an interactive 3D graph. You can see clusters form around events, companies, and shared interests. Click any node and see everything you know about that person. Your network stops being a list and becomes something you can actually explore and understand.
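
The agent first pulls out the event name and the time frame from a question like "Who did I meet at NSBE last year?". After that, the lookup can reduce to a plain MongoDB filter. The sketch below is hypothetical. The `event` and `met_at` field names and both helper functions are assumptions for illustration, not CircleBack's actual schema.

```python
import re
from datetime import date, datetime
from typing import Any


def met_at_event_query(event: str, year: int) -> dict[str, Any]:
    """Filter for interactions tagged with `event` that happened in `year`.

    The field names ("event", "met_at") are hypothetical.
    """
    return {
        # Case-insensitive prefix match, so "NSBE" also matches "NSBE National Convention".
        "event": {"$regex": "^" + re.escape(event), "$options": "i"},
        # Half-open date range covering the whole calendar year.
        "met_at": {"$gte": datetime(year, 1, 1), "$lt": datetime(year + 1, 1, 1)},
    }


def last_year_query(event: str, today: date) -> dict[str, Any]:
    """Resolve a relative phrase like "last year" against today's date."""
    return met_at_event_query(event, today.year - 1)
```

With pymongo, the result could be passed straight to `db.interactions.find(...)`. Resolving relative phrases like "last year" in code, rather than in the prompt, keeps the date arithmetic deterministic.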

How we built it

We built CircleBack in layers. The backend is a FastAPI server with MongoDB, where contacts, relationships, events, and interactions are stored as documents. Graph traversal is handled using MongoDB's $graphLookup with a bidirectional relationships view, so we can trace multi-hop connections and shortest paths without a dedicated graph database.
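
Here is a minimal sketch of how the bidirectional view and a multi-hop $graphLookup could be expressed as pymongo-style pipeline dictionaries. The collection names, the `from_id`/`to_id`/`strength` edge fields and both helper functions are assumptions. The source only says that a bidirectional relationships view feeds $graphLookup.

```python
from typing import Any

# Hypothetical names: each "relationships" document is assumed to look like
# {"_id": ObjectId, "from_id": ..., "to_id": ..., "strength": float}.
EDGE_COLLECTION = "relationships"
VIEW_NAME = "relationships_bidirectional"


def bidirectional_view_pipeline() -> list[dict[str, Any]]:
    """View pipeline that emits every stored edge once in each direction."""
    forward = {"$project": {"source": "$from_id", "target": "$to_id", "strength": 1}}
    reverse = {
        "$project": {
            # Give mirrored edges a distinct _id so $graphLookup treats them as separate documents.
            "_id": {"$concat": [{"$toString": "$_id"}, ":rev"]},
            "source": "$to_id",
            "target": "$from_id",
            "strength": 1,
        }
    }
    # $unionWith (MongoDB 4.4+) appends the same edges with endpoints swapped.
    return [forward, {"$unionWith": {"coll": EDGE_COLLECTION, "pipeline": [reverse]}}]


def reachable_within(start_id: Any, max_hops: int) -> list[dict[str, Any]]:
    """Pipeline on the contacts collection: edges reachable within max_hops."""
    if max_hops < 1:
        raise ValueError("max_hops must be at least 1")
    return [
        {"$match": {"_id": start_id}},
        {
            "$graphLookup": {
                "from": VIEW_NAME,
                "startWith": "$_id",
                "connectFromField": "target",
                "connectToField": "source",
                "as": "edges",
                # maxDepth 0 means "direct edges only", so hops = maxDepth + 1.
                "maxDepth": max_hops - 1,
                # Depth at which each edge was reached; gives minimum hop counts.
                "depthField": "depth",
            }
        },
    ]
```

With pymongo, the view could be created once with `db.create_collection(VIEW_NAME, viewOn=EDGE_COLLECTION, pipeline=bidirectional_view_pipeline())`. After that, `db.contacts.aggregate(reachable_within(contact_id, 2))` would return the contact along with every edge found within two hops. With this design, each relationship is written once, and the view makes it traversable from either end.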

The voice agent uses ElevenLabs Conversational AI with Claude 3.5 Sonnet as the reasoning model, connected to the browser through a WebSocket proxy. The backend tunnels audio and tool calls between the frontend and ElevenLabs, while the browser executes all 12 tools client-side, from adding contacts and creating relationships to querying the graph.
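
In this split, the backend relays messages and the browser runs the tools. At its core, that comes down to a dispatch table keyed by tool name. The sketch is written in Python for consistency with the other examples; in CircleBack this logic runs in the browser. The message shape (`type`, `name`, `call_id`, `params`), the two tool names and the in-memory store are all hypothetical, not ElevenLabs' actual wire format.

```python
import json
from typing import Any, Callable, Optional

# Hypothetical in-memory stand-in for the contact store.
CONTACTS: dict[str, dict[str, Any]] = {}


def add_contact(params: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical tool: create or update a contact by name."""
    name = params["name"]  # KeyError if the model omitted a required argument
    CONTACTS[name] = {"name": name, "company": params.get("company")}
    return {"added": name}


def search_contacts(params: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical tool: list contacts, optionally filtered by company."""
    company = params.get("company")
    hits = [c["name"] for c in CONTACTS.values() if company is None or c["company"] == company]
    return {"matches": sorted(hits)}


# Dispatch table: tool name -> handler. CircleBack has 12 tools; two are shown.
TOOLS: dict[str, Callable[[dict[str, Any]], dict[str, Any]]] = {
    "add_contact": add_contact,
    "search_contacts": search_contacts,
}


def handle_message(raw: str) -> Optional[str]:
    """Run a tool-call message and return the JSON reply, or None for other traffic."""
    msg = json.loads(raw)
    if msg.get("type") != "tool_call":
        return None  # audio and transcript frames are not handled here
    handler = TOOLS.get(msg["name"])
    if handler is None:
        result, is_error = {"error": f"unknown tool: {msg['name']}"}, True
    else:
        try:
            result, is_error = handler(msg.get("params", {})), False
        except (KeyError, ValueError) as exc:
            # Report bad arguments back to the agent instead of crashing the session.
            result, is_error = {"error": repr(exc)}, True
    return json.dumps(
        {"type": "tool_result", "call_id": msg["call_id"], "result": result, "is_error": is_error}
    )
```

Returning errors as results, rather than raising them, lets the model see what went wrong and ask the user a clarifying question.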

The frontend is a full-screen 3D force-directed graph built with Three.js, with nodes color-coded by company, sized by health score, and joined by links whose length reflects relationship strength. We designed the whole UI around a "command center" feel: a persistent voice bar at the top, a live transcript panel that shows tool-call badges in real time, and a graph that responds instantly. Searched nodes highlight amber, new nodes pulse into existence, and cyan particle bursts flash when connections form.
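
Each of the three visual encodings can be a small pure function. This sketch uses made-up constants and rests on these assumptions:

- Strength is normalized to [0, 1] and health to [0, 100].
- Link length shrinks linearly as strength grows.
- Node radius grows with the square root of health, so that node area tracks the score.
- Company colors come from a stable hash, so a company keeps its hue across reloads.

In the real frontend these would be JavaScript callbacks handed to the graph library; Python is used here for consistency.

```python
import colorsys
import hashlib
import math

MIN_DIST, MAX_DIST = 20.0, 200.0  # hypothetical layout units


def link_distance(strength: float) -> float:
    """Stronger relationships get shorter links, so close coworkers cluster."""
    s = min(max(strength, 0.0), 1.0)
    return MAX_DIST - (MAX_DIST - MIN_DIST) * s


def node_radius(health: float, base: float = 2.0, scale: float = 6.0) -> float:
    """Radius grows with sqrt(health) so node area is proportional to the score."""
    h = min(max(health, 0.0), 100.0) / 100.0
    return base + scale * math.sqrt(h)


def company_color(company: str) -> str:
    """Stable hex color per company (case-insensitive)."""
    # MD5 is used only as a cheap, stable hash here, not for security.
    digest = hashlib.md5(company.lower().encode("utf-8")).digest()
    hue = digest[0] / 255.0
    r, g, b = colorsys.hls_to_rgb(hue, 0.55, 0.65)
    return "#{:02x}{:02x}{:02x}".format(round(r * 255), round(g * 255), round(b * 255))
```

Python's built-in `hash()` on strings is randomized per process, so a content hash is the safer choice whenever colors need to stay the same across sessions.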

Challenges we ran into

The biggest challenge was making the voice agent and the graph feel connected. Initially they were completely separate: the voice overlay blocked the graph, and tool calls only triggered a delayed refresh. We had to lift the entire voice state into a shared hook so that every tool call could immediately drive graph animations, node highlighting, and camera movement.
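
In CircleBack this is a React hook. The Python sketch below shows the same publish-subscribe idea in framework-neutral form. One shared state object records each tool call and fans it out synchronously to its subscribers, so the graph can react immediately instead of waiting for a refresh. The class names, tool names and effect labels are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

Listener = Callable[[str, dict[str, Any]], None]

# Hypothetical mapping from tool name to the graph animation it should trigger.
EFFECTS = {
    "add_contact": "pulse_new_node",
    "add_relationship": "particle_burst",
    "search_contacts": "highlight_amber",
}


@dataclass
class VoiceState:
    """Single shared source of truth for the transcript and tool calls."""
    transcript: list[str] = field(default_factory=list)
    listeners: list[Listener] = field(default_factory=list)

    def subscribe(self, listener: Listener) -> Callable[[], None]:
        self.listeners.append(listener)

        def unsubscribe() -> None:
            self.listeners.remove(listener)

        return unsubscribe

    def on_tool_call(self, name: str, params: dict[str, Any]) -> None:
        self.transcript.append(f"[tool] {name}")  # badge in the transcript panel
        for listener in list(self.listeners):  # copy so listeners may unsubscribe
            listener(name, params)


class GraphEffects:
    """Subscriber that queues animations for the renderer's next frame."""

    def __init__(self) -> None:
        self.queue: list[tuple[str, dict[str, Any]]] = []

    def __call__(self, name: str, params: dict[str, Any]) -> None:
        effect = EFFECTS.get(name)
        if effect is not None:
            self.queue.append((effect, params))
```

The transcript panel and the graph both read from the same object. Because of that, a tool call can never appear in one without reaching the other.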

Getting the agent to sound human was the other major hurdle. Early versions felt like a chatbot reading out database results. It took more iteration than any of us expected to tune the ElevenLabs voice, the tone of the system prompt, and the structure of responses until CircleBack felt like talking to a friend with perfect memory rather than querying a search engine.

Accomplishments that we're proud of

The real-time feedback loop between the voice agent and the 3D graph is what we're most proud of. When you mention someone's name and a node instantly appears, pulses, and forms connections right in front of you, it stops feeling like a demo and starts feeling like a real product. We're also proud of how natural the voice interaction turned out. The agent doesn't just log data: it has a genuinely conversational tone, proactively saves detailed notes behind the scenes, and knows when to ask a follow-up question and when to simply confirm.

The graph visualization was its own breakthrough. Because link distance scales with relationship strength, coworkers cluster tightly while loose acquaintances span the space on elegant long-distance connections. There was a moment when it went from "cool tech demo" to something that actually communicates the shape of your network at a glance.

Beyond the product, four people who'd never built together before shipped a working, polished application in 24 hours. We stayed up all night coding, debugging integration issues at 3 AM, and bonded over the chaos of it all. We came in as classmates (and strangers) and left as a team that knows how to build together under pressure.

What we learned

We learned how to build under real pressure. We had twenty-four hours to go from idea to working product, with four people who had never shipped together before. That meant scoping ruthlessly, cutting features that didn't serve the demo, and communicating across layers when things broke at 3 AM.

We learned how agents actually work from the inside: not just calling an API, but wiring up tool definitions, handling messy voice input, managing state between the voice layer and the frontend, and making it all feel seamless. Building with Claude Code changed how fast each of us could move individually. And working with ElevenLabs taught us that making an AI sound human is a design problem, not just a technical one: the voice, the pacing, and the tone of responses matter more than the model behind them.

What's next for CircleBack

CircleBack currently relies on the user to verbally share everything about a contact. The next evolution is making the graph self-enriching. Gemini Vision would let users scan business cards or LinkedIn screenshots to capture contacts they didn't have time to mention. Web search enrichment with Tavily would automatically pull in a contact's latest role, recent projects, and company news the moment they're added, so the graph knows more than the user remembered to say.

A LangGraph briefing agent would chain together profile lookup, mutual connections, interaction history, and live company research into a spoken pre-meeting briefing, turning the graph from a passive record into an active coach. Health score decay would trigger proactive nudges when important connections go cold. And a mobile companion would bring voice capture to live events hands-free. The goal is to take CircleBack from a networking memory to a full networking intelligence platform that captures, enriches, connects, and advises.
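
Health score decay is future work, and the writeup doesn't specify a model. One simple option is exponential decay with a half-life: score(t) = score₀ · 2^(−t / T). Here t is the number of days since the last interaction and T is the half-life. A nudge fires once the decayed score falls below a threshold. The 30-day half-life and the threshold of 40 below are placeholder values, and the function names are hypothetical.

```python
from datetime import datetime

HALF_LIFE_DAYS = 30.0   # placeholder: score halves after 30 quiet days
NUDGE_THRESHOLD = 40.0  # placeholder: nudge once the score drops below this


def decayed_health(score: float, last_contact: datetime, now: datetime,
                   half_life_days: float = HALF_LIFE_DAYS) -> float:
    """Exponential decay: score * 2^(-elapsed_days / half_life_days)."""
    # Clamp at zero so a last_contact in the future never inflates the score.
    elapsed_days = max((now - last_contact).total_seconds() / 86_400, 0.0)
    return score * 0.5 ** (elapsed_days / half_life_days)


def needs_nudge(score: float, last_contact: datetime, now: datetime,
                threshold: float = NUDGE_THRESHOLD) -> bool:
    """True once a relationship has gone cold enough to deserve a reminder."""
    return decayed_health(score, last_contact, now) < threshold
```

A half-life is easy to explain to users ("untouched relationships lose half their strength every month"). Each relationship could also carry its own T, so close mentors fade more slowly than one-off acquaintances.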