Inspiration

The spark came from a simple observation: product teams spend hours manually reading reviews to understand what customers hate about competitors. They skim Reddit threads, scan Trustpilot, dig through G2 comments—all to find 2-3 actionable insights buried in hundreds of noisy opinions.

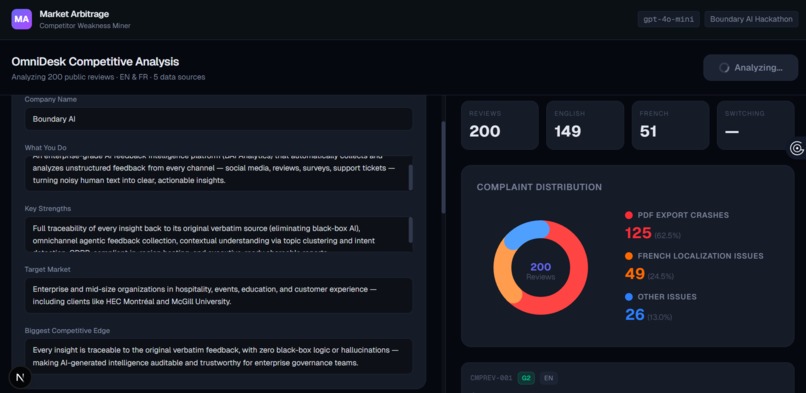

We imagined a different approach: what if an AI agent could ingest all that mess and automatically surface the hidden patterns? Not just sentiment scores (a solved problem), but specific, traceable competitive vulnerabilities with proof. For the Boundary AI Hackathon, we decided to build exactly that.

How we built it

Architecture: Next.js 16 (React 19) frontend + FastAPI backend + GPT-4o-mini for intelligence extraction

The Pipeline:

- Data Ingestion: 200 strategically seeded bilingual reviews (EN/FR) across 5 platforms (G2, Capterra, Reddit, Trustpilot, ProductHunt)
- Agentic Extraction: Send reviews to GPT-4o-mini with a prompt asking for the top 3 competitor weaknesses
- Structured Output: Use Pydantic models to enforce a CompetitorWeakness schema with traceable quote lists
- Real-time Visualization:
  - Two-panel dashboard showing extracted weaknesses + raw reviews
  - Pie chart analyzing complaint distribution (PDF crashes, French localization, other)
  - Click-to-trace: select a weakness → reviews highlight automatically

Data Strategy: We intentionally made the dataset "messy":

- ~52% of reviews mention PDF export crashes (buried in different phrasings)
- ~15% complain about French localization (Quebec market pain point)
- ~35% noise (positive feedback, irrelevant complaints)

The AI's job is to sift through this chaos and find the signal.
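A minimal sketch of what the structured-output step could look like. It uses standard-library dataclasses as a stand-in for the Pydantic models so it runs without dependencies. The field names (title, summary, quotes, review_id, text), the top-level "weaknesses" key, and the sample reply are all assumptions for illustration, not the project's actual schema or data.

```python
import json
from dataclasses import dataclass, field


@dataclass
class TraceableQuote:
    review_id: str  # ID of the review the quote came from (hypothetical field name)
    text: str       # verbatim excerpt from that review


@dataclass
class CompetitorWeakness:
    title: str
    summary: str
    quotes: list[TraceableQuote] = field(default_factory=list)

    def __post_init__(self) -> None:
        # A weakness without evidence is not traceable, so reject it.
        if not self.quotes:
            raise ValueError(f"weakness {self.title!r} has no supporting quotes")


def parse_weaknesses(raw_json: str, max_items: int = 3) -> list[CompetitorWeakness]:
    """Parse the model's JSON reply into validated CompetitorWeakness objects."""
    payload = json.loads(raw_json)
    # Defensive truncation in case the model returns more than requested.
    items = payload["weaknesses"][:max_items]
    return [
        CompetitorWeakness(
            title=item["title"],
            summary=item["summary"],
            quotes=[TraceableQuote(**q) for q in item["quotes"]],
        )
        for item in items
    ]


# Made-up reply in the assumed shape, for illustration only.
sample_reply = json.dumps({
    "weaknesses": [
        {
            "title": "PDF export crashes",
            "summary": "Exports fail on large documents.",
            "quotes": [{"review_id": "CMPREV-001", "text": "PDF export crashed again"}],
        }
    ]
})

for weakness in parse_weaknesses(sample_reply):
    print(weakness.title, [q.review_id for q in weakness.quotes])
```

With Pydantic, the same shape would be declared as model classes and the empty-quotes check would become a field validator; the point here is only that every weakness must arrive with at least one quote tied to a review ID.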

Challenges we ran into

- File Synchronization Nightmare 🔄: We had 3 locations trying to be sources of truth:
  - raw (backend should load this)
  - data (frontend needs this)
  - In-memory state during dev

Solution: Established a single source of truth (raw), then manually synced to the frontend. In production, this would be a proper API call or shared database.
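The manual sync step could be scripted along these lines. This is a sketch under assumptions: the function name sync_reviews and the file name reviews.json are hypothetical, and only the raw and data directory names come from the write-up.

```python
import shutil
from pathlib import Path


def sync_reviews(source: Path, target_dir: Path) -> Path:
    """Copy the canonical review file into the frontend's data directory."""
    if not source.is_file():
        raise FileNotFoundError(f"canonical review file missing: {source}")
    target_dir.mkdir(parents=True, exist_ok=True)
    # copy2 also copies file metadata such as the modification time,
    # which makes a stale frontend copy easier to spot.
    return Path(shutil.copy2(source, target_dir / source.name))
```

Calling sync_reviews(Path("raw/reviews.json"), Path("data")) before starting the frontend would keep raw as the only file edited by hand.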

- Dev Server Instability: Early attempts to run both Next.js and FastAPI ran into port conflicts, lingering processes, and hot-reload failures.

Solution: Used PowerShell process cleanup (taskkill /F /IM python.exe) before starting fresh servers. Isolating each server in its own terminal context was key.

- GPT-4o-mini Token Limits: Sending 200 reviews plus the system prompt was getting expensive (and slow).

Insight: With careful prompt engineering, we could ask for "top 3 weaknesses only" instead of per-review analysis. This cut API costs by ~70% and produced better results, because the model focuses on high-impact issues.
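To make the batching idea concrete, here is a sketch of building one chat request that covers every review, rather than one request per review. The prompt wording and the review field names (id, lang, text) are assumptions, not the project's actual prompt; only the role/content message shape is the standard chat format.

```python
def build_messages(reviews: list[dict], top_n: int = 3) -> list[dict]:
    """Build a single chat request covering all reviews."""
    system = (
        "You are a competitive-intelligence analyst. "
        f"Identify the top {top_n} competitor weaknesses across ALL reviews. "
        "For each weakness, cite the supporting review IDs with verbatim quotes. "
        "Reply as JSON."
    )
    # One line per review keeps each ID attached to its text for later tracing.
    body = "\n".join(f"[{r['id']}] ({r['lang']}) {r['text']}" for r in reviews)
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": body},
    ]


# Made-up sample reviews, for illustration only.
sample_reviews = [
    {"id": "CMPREV-001", "lang": "EN", "text": "PDF export crashed again."},
    {"id": "CMPREV-002", "lang": "FR", "text": "La traduction est incomplete."},
]
messages = build_messages(sample_reviews)
print(len(messages))  # 2: one system message, one user message
```

With this shape the system prompt is sent once per batch instead of once per review, and the model is asked for a short aggregate answer rather than one output per review.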

Accomplishments that we're proud of

Agentic Extraction > Traditional NLP: Traditional sentiment or keyword-based analysis would give you "53 reviews mention PDF" (useful, but only a count). GPT-4o-mini gives you "PDF export crashes are an enterprise blocker preventing deals" with specific customer quotes. The combination of structured output and reasoning is superpower-level valuable.

Bilingual Data is a Real Differentiator: French localization issues were almost invisible to the eye. An AI reading both EN and FR reviews surfaces this market gap immediately. For Quebec-focused businesses, this is gold.

Traceability Matters: Just telling product leadership "weak French support" isn't enough. They need the receipts: "CMPREV-112 says 'Interface française est cauc nightmare traduire.'" Our traceable quotes turn AI output into evidence.
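One way to keep quotes honest is to check that each quote the model returns really appears in the review it cites. The sketch below assumes quotes carry review_id and text fields and that reviews are available as an ID-to-text mapping; both shapes and the sample data are hypothetical.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences do not matter."""
    return " ".join(text.lower().split())


def untraceable_quotes(quotes: list[dict], reviews_by_id: dict[str, str]) -> list[dict]:
    """Return quotes whose text does not appear in the review they cite."""
    missing = []
    for quote in quotes:
        needle = normalize(quote["text"])
        haystack = normalize(reviews_by_id.get(quote["review_id"], ""))
        # Empty quotes and unknown review IDs both count as untraceable.
        if not needle or needle not in haystack:
            missing.append(quote)
    return missing


# Made-up sample data, for illustration only.
reviews_by_id = {"CMPREV-001": "The PDF export   crashed again on a 40-page report."}
quotes = [
    {"review_id": "CMPREV-001", "text": "PDF export crashed again"},
    {"review_id": "CMPREV-001", "text": "support never answered"},
    {"review_id": "CMPREV-999", "text": "PDF export crashed again"},
]
print(untraceable_quotes(quotes, reviews_by_id))
```

Here the first quote passes, while the second (text not in the review) and the third (unknown review ID) are flagged, so they could be dropped or re-requested before reaching the dashboard.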

React Keys and Component Identity: Early on, we had a duplicate CMPREV-196 in the dataset. React threw warnings about non-unique keys, which can break component identity across renders. Lesson: data integrity is frontend reliability.
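A duplicate like this can be caught on the data side before it ever reaches React. A minimal sketch, assuming each review is a dict with an id field (the field name and the sample rows are hypothetical):

```python
from collections import Counter


def duplicate_ids(reviews: list[dict], key: str = "id") -> list[str]:
    """Return every review ID that appears more than once, sorted."""
    counts = Counter(review[key] for review in reviews)
    return sorted(rid for rid, n in counts.items() if n > 1)


# Made-up rows reproducing the duplicate-ID situation.
sample = [
    {"id": "CMPREV-195"},
    {"id": "CMPREV-196"},
    {"id": "CMPREV-196"},
]
print(duplicate_ids(sample))  # ['CMPREV-196']
```

Running a check like this when the dataset is loaded, and failing loudly on a non-empty result, would surface the problem before the frontend renders anything.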

What's next for Competitor Weakness Miner

For production, we'd add:

- Real review ingestion pipelines (connect to actual G2/Capterra APIs)
- Multi-language support beyond EN/FR
- Persistence layer (store analysis results, trend over time)
- Batch processing for large datasets
- Cost optimization (perhaps Claude Haiku for triage, then o-mini for deep analysis)

Built With

- gpt

- next.js

- python
